I used to think player behavior in games was unpredictable, until I started paying attention to it inside Pixels.

At first, everything looks familiar. Same loops, same rewards, same early progression. But after a while, players who start in similar ways begin to move in completely different directions. Some stay consistent, some disengage quickly, some suddenly convert and then disappear. It feels messy until you realize it isn’t.

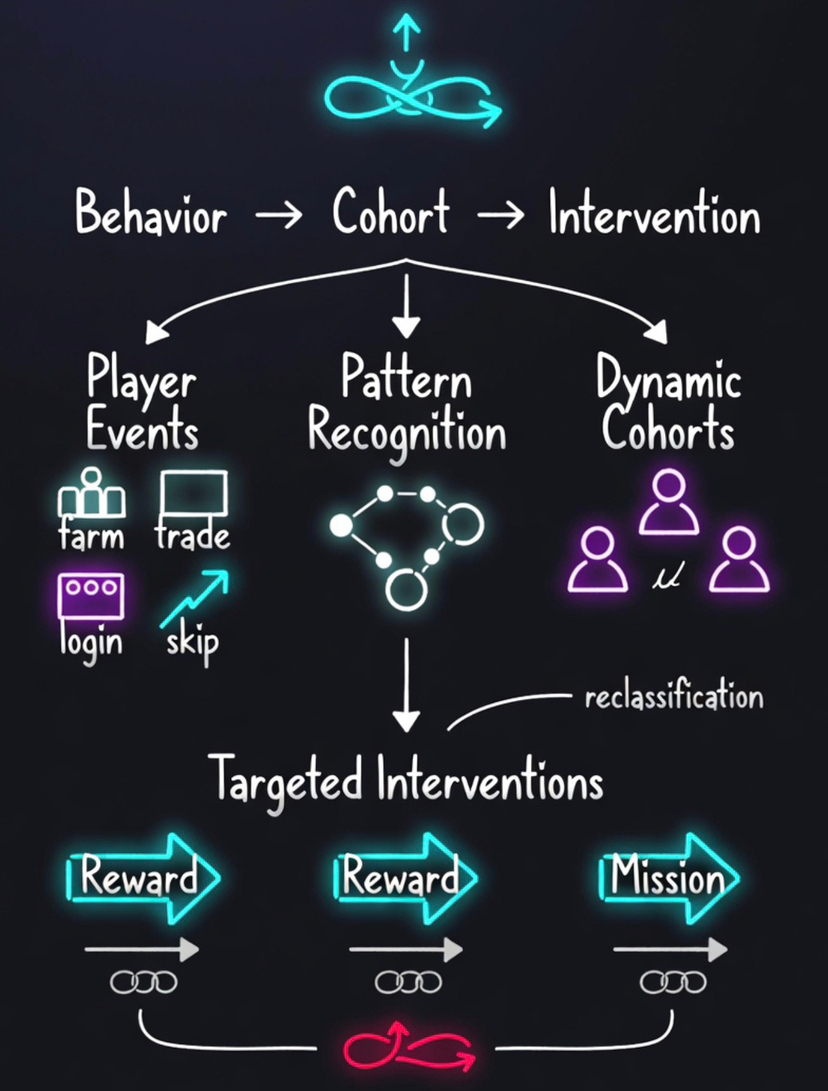

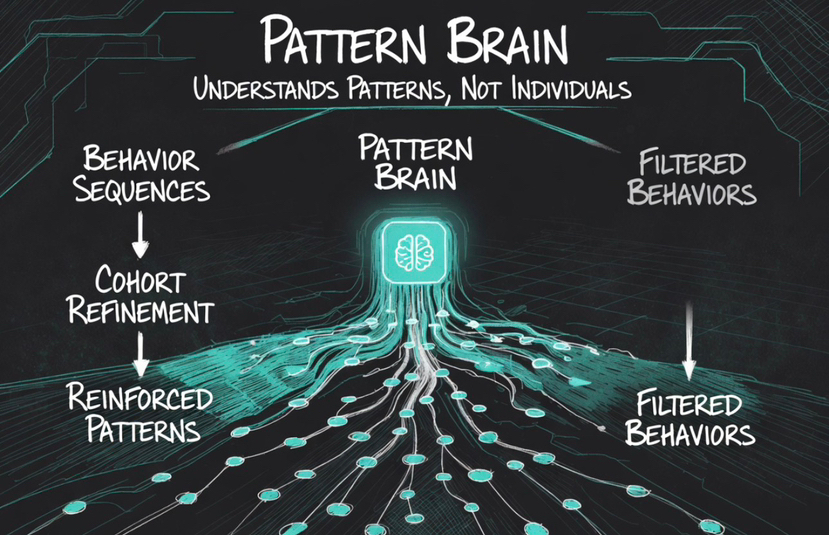

What Pixels is actually doing through Stacked is not reacting to individual players. It is identifying patterns that repeat across players and grouping players by those patterns before deciding how to respond.

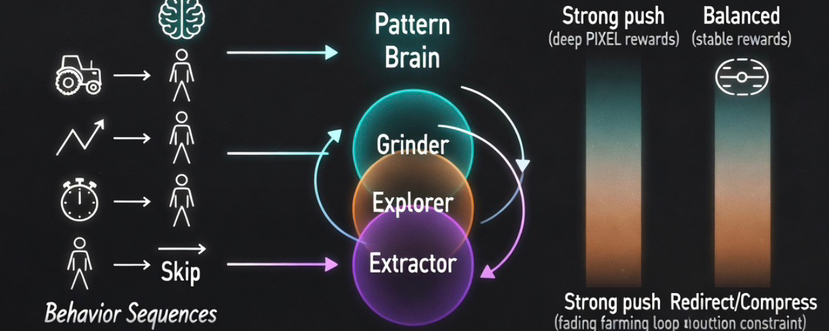

Inside Pixels, actions don’t stay isolated. A player farming, skipping time, trading, or logging in at irregular intervals is not evaluated as a single event. Those actions are combined and interpreted as part of a behavior pattern.

event → behavior pattern → cohort → intervention → outcome
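Stacked’s internals are not public, so the following is only a minimal sketch of how that pipeline could fit together, under stated assumptions. Raw events are collapsed into an action-frequency vector (the "behavior pattern"). That pattern is matched to the nearest previously seen cohort, and the cohort then selects an intervention. The action names, cohort centroids, and intervention labels are all hypothetical. Nearest-centroid matching is a stand-in technique, not a confirmed detail of Pixels.

```python
from collections import Counter
from math import dist

# Hypothetical action vocabulary; the real event schema used by Stacked is not public.
ACTIONS = ("farm", "skip_time", "trade", "login")

# Hypothetical cohort "centroids": behavior patterns the system has already seen.
COHORTS = {
    "steady_farmer": (0.7, 0.0, 0.1, 0.2),
    "trader": (0.2, 0.0, 0.6, 0.2),
    "speed_runner": (0.4, 0.4, 0.0, 0.2),
}

# Hypothetical intervention attached to each cohort.
INTERVENTIONS = {
    "steady_farmer": "deepen: offer longer mission chains",
    "trader": "redirect: surface marketplace quests",
    "speed_runner": "add friction: lower reward intensity",
}


def behavior_pattern(events: list[str]) -> tuple[float, ...]:
    """Collapse raw events into a normalized action-frequency vector."""
    counts = Counter(e for e in events if e in ACTIONS)  # unknown events are ignored
    total = sum(counts.values()) or 1  # avoid dividing by zero for an empty history
    return tuple(counts[a] / total for a in ACTIONS)


def assign_cohort(pattern: tuple[float, ...]) -> str:
    """Match a pattern to the closest known cohort (nearest centroid, Euclidean)."""
    return min(COHORTS, key=lambda name: dist(pattern, COHORTS[name]))


def respond(events: list[str]) -> tuple[str, str]:
    """event -> behavior pattern -> cohort -> intervention."""
    cohort = assign_cohort(behavior_pattern(events))
    return cohort, INTERVENTIONS[cohort]
```

With these made-up centroids, `respond(["farm"] * 7 + ["trade"] + ["login"] * 2)` lands exactly on the `steady_farmer` pattern and returns its "deepen" intervention. The individual events never drive the decision directly. Only the aggregated pattern does.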

The shift happens at the cohort level. Instead of asking what a single player did, Stacked inside Pixels tries to match that behavior to a pattern it has seen before.

That is where decisions begin to diverge.

I didn’t catch this at first, but it becomes obvious once you track progression over time. Two players can follow the same path early on, but after a few cycles, they start seeing different outcomes. One is pushed deeper into the system, while the other starts receiving lighter engagement or fewer incentives.

That difference is not random. It comes from how Stacked inside Pixels has already classified their behavior into different cohorts.

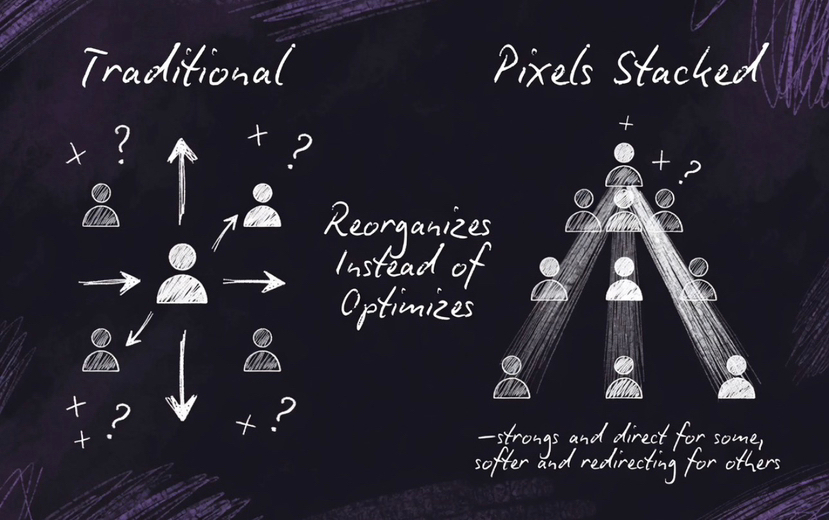

Most systems try to optimize players individually.

Pixels doesn’t optimize players. It reorganizes them.

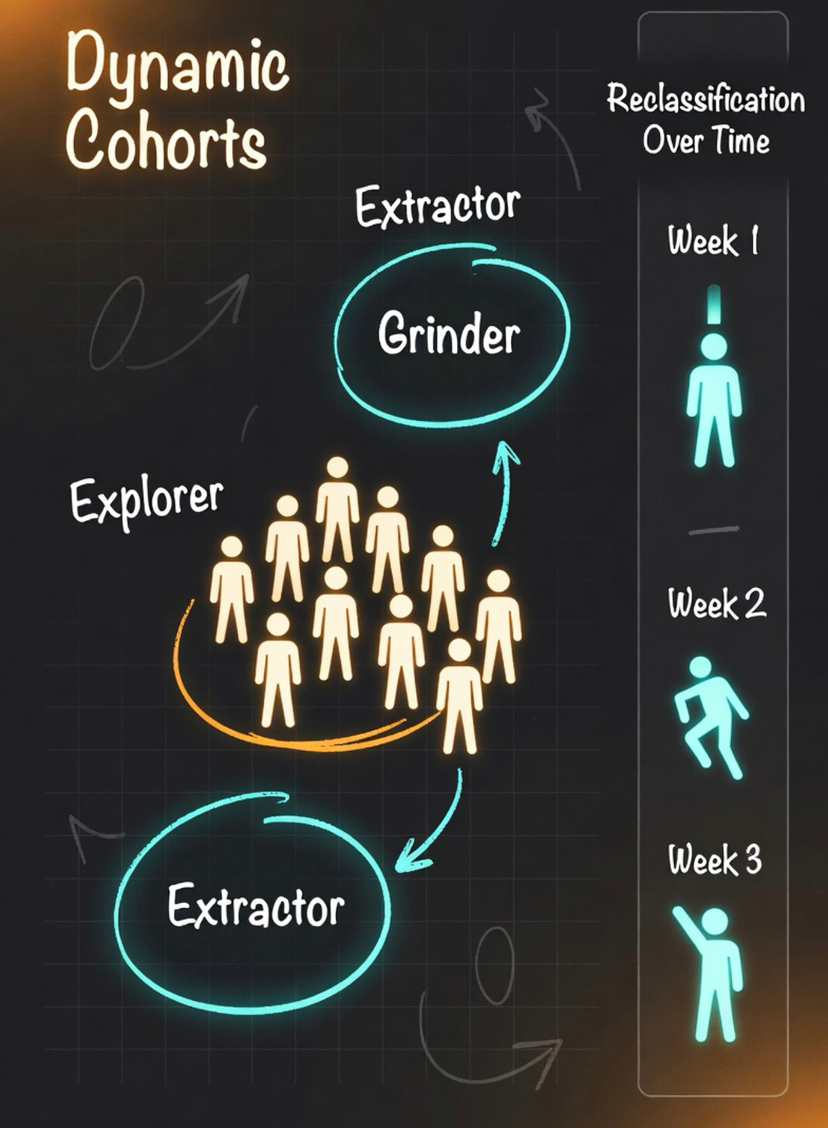

These cohorts are not fixed labels like “new user” or “whale.” They are dynamic and change based on behavior. A player who starts by grinding without spending may later shift into a different cohort if their behavior changes. The system tracks that transition and adjusts its response.
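Here is one hypothetical sketch of what "dynamic" could mean in practice. A player’s cohort is recomputed from a sliding window of recent events after every action, and each change is logged as a transition. The window size, event names, and the three rule-based labels are invented for the example. Pixels has not published how Stacked actually defines or re-evaluates cohorts.

```python
from collections import deque

WINDOW = 20  # hypothetical: only the most recent 20 events count


def classify(window) -> str:
    """Hypothetical rule-based cohort label computed from recent events only."""
    if any(e == "spend" for e in window):
        return "spender"
    if window and sum(e == "farm" for e in window) / len(window) >= 0.5:
        return "grinder"
    return "casual"


class PlayerTracker:
    """Re-evaluates a player's cohort on every event and logs each transition."""

    def __init__(self):
        self.recent = deque(maxlen=WINDOW)  # older events fall out automatically
        self.cohort = None
        self.transitions = []  # (event_number, old_cohort, new_cohort)
        self.seen = 0

    def observe(self, event: str) -> str:
        self.recent.append(event)
        self.seen += 1
        new = classify(self.recent)
        if new != self.cohort:
            self.transitions.append((self.seen, self.cohort, new))
            self.cohort = new
        return self.cohort
```

A player who farms ten times is labeled `grinder`. A single `spend` moves them to `spender`, and once that purchase ages out of the 20-event window they drift back. Because only recent behavior counts, an old label cannot stick.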

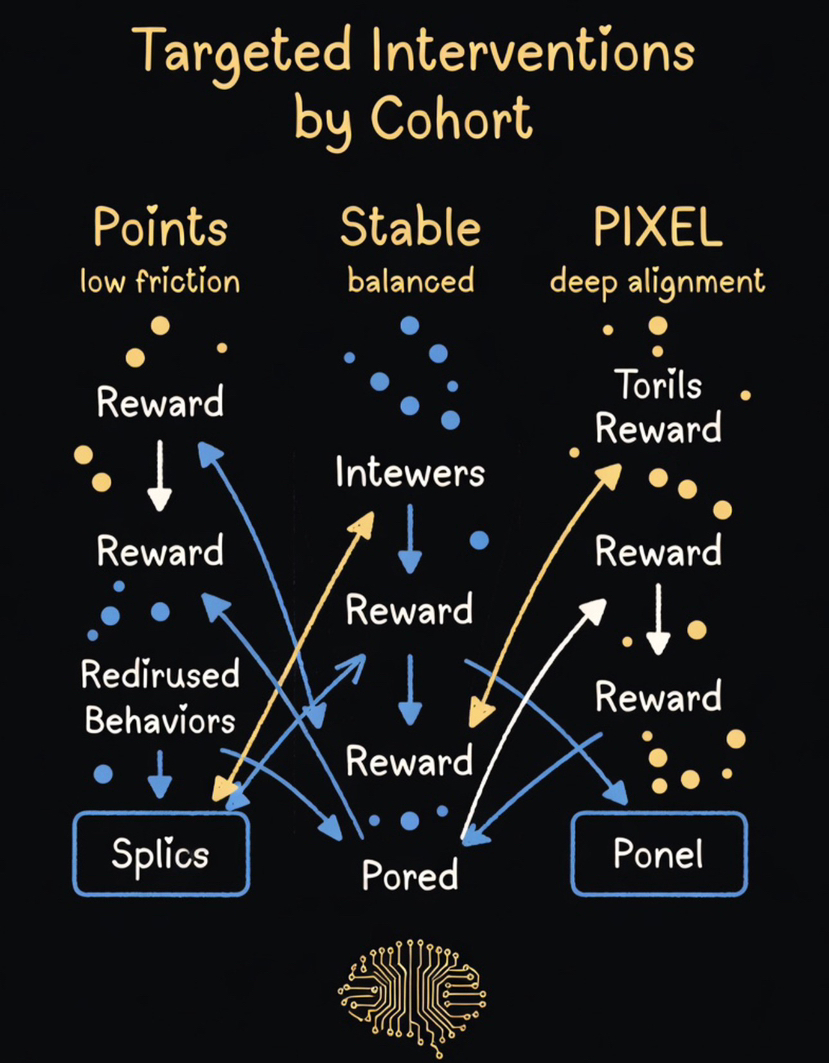

Once a player is mapped into a cohort, rewards stop being generic incentives. They become targeted interventions.

For some cohorts, the system increases visibility and pushes missions that deepen engagement. For others, it reduces reward intensity or introduces friction to prevent over-optimization.

A simple example inside Pixels makes this clear. A player who repeatedly farms the same loop too efficiently will start seeing that loop lose priority. Missions shift elsewhere, and rewards tied to that behavior appear less often. Nothing breaks, but the system quietly redirects attention.
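That redirection can be approximated with a priority that decays once a player repeats the same loop past some allowance. In this sketch, "too efficiently" is reduced to a simple repeat count. `FREE_REPEATS`, `DECAY`, and the loop names are hypothetical values chosen for illustration, not Pixels’ actual tuning.

```python
from dataclasses import dataclass


@dataclass
class Loop:
    name: str
    base_priority: float
    completions: int = 0  # how often this player has run the loop recently


# Hypothetical tuning knobs, not values used by Pixels.
FREE_REPEATS = 5  # repeats allowed before the loop starts losing priority
DECAY = 0.8       # multiplier applied per repeat beyond FREE_REPEATS


def effective_priority(loop: Loop) -> float:
    """Base priority, shrunk geometrically for every repeat past the allowance."""
    excess = max(0, loop.completions - FREE_REPEATS)
    return loop.base_priority * DECAY ** excess


def next_mission(loops: list[Loop]) -> str:
    """Surface the loop with the highest effective priority; nothing is blocked."""
    return max(loops, key=effective_priority).name
```

With `berry_farm` at base priority 1.0 and `mining` at 0.7, the farm loop stays on top through six completions (0.8 after one excess repeat) but drops below mining at seven (0.8² ≈ 0.64). The loop is never disabled. It simply stops being the one that gets surfaced.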

The AI layer inside Stacked is not doing anything flashy. It is not predicting outcomes in a way that is visible to players. What it does is continuously refine how cohorts are defined and how players move between them.

That movement matters more than the initial classification.

A player can start in one cohort, then shift as their behavior changes. The system does not treat them as fixed. It re-evaluates them and adjusts incentives accordingly.

This is also why progression inside Pixels can feel uneven. From the outside, it might look inconsistent, but it is actually a result of continuous reclassification.

Different cohorts receive different signals at different times. That is why similar effort does not always lead to similar outcomes.

The architecture behind this is what allows it to work.

Every event feeds into a model that tracks sequence, repetition, and outcome. It measures not just what happens but how actions connect over time and what those sequences tend to produce.

From there, Stacked inside Pixels updates its cohort definitions and adjusts how each group is treated.
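One simple way to capture "sequence, repetition, and outcome" is to count ordered pairs of consecutive actions (bigrams) across sessions. The sketch then records how often sessions containing each pair ended well. This technique is swapped in for illustration only. The session format and the outcome label (a hypothetical "retained afterwards" flag) are assumptions, and nothing here reflects Stacked’s actual model.

```python
from collections import defaultdict


def bigrams(actions: list[str]) -> list[tuple[str, str]]:
    """Consecutive action pairs: captures order, not just frequency."""
    return list(zip(actions, actions[1:]))


def sequence_outcome_rates(sessions):
    """
    sessions: list of (actions, outcome), where outcome is True if the player was
    retained afterwards (hypothetical label). Returns, for each action pair,
    (number of sessions containing it, share of those sessions that ended well).
    """
    seen = defaultdict(int)
    good = defaultdict(int)
    for actions, outcome in sessions:
        for pair in set(bigrams(actions)):  # count each pair once per session
            seen[pair] += 1
            good[pair] += int(outcome)
    return {pair: (seen[pair], good[pair] / seen[pair]) for pair in seen}
```

Because pairs are ordered, `farm → trade` and `trade → farm` are tracked separately. The same actions in a different order can therefore produce a different signal. Sample counts matter too: a pair seen in one session says far less than one seen across thousands.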

The intervention layer is where this becomes visible.

Different cohorts are exposed to different types of rewards and different levels of friction. Some are pushed toward deeper loops, some are redirected, and some are simply not encouraged further.

That selectivity is what keeps the system stable.

If every behavior were rewarded equally, the system would be easy to exploit. Pixels avoids that by reinforcing only the patterns that continue to perform across cohorts.
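Here is a minimal sketch of that filter, assuming per-cohort results are already available for each behavior pattern. A pattern is reinforced only if it clears a performance bar in every cohort with enough data, and only if at least two cohorts qualify. The metric (retention rate), the thresholds, and the pattern names are hypothetical.

```python
# Hypothetical per-cohort results for each behavior pattern:
# pattern -> {cohort: (sample_size, retention_rate)}
MIN_SAMPLES = 50     # ignore cohorts with too little data (hypothetical)
MIN_RETENTION = 0.3  # performance bar a pattern must clear (hypothetical)


def patterns_to_reinforce(results: dict) -> list[str]:
    keep = []
    for pattern, by_cohort in results.items():
        rates = [rate for n, rate in by_cohort.values() if n >= MIN_SAMPLES]
        # Require evidence from at least two cohorts and a pass in every one of them.
        if len(rates) >= 2 and all(rate >= MIN_RETENTION for rate in rates):
            keep.append(pattern)
    return sorted(keep)


results = {
    "daily_quests": {"a": (200, 0.45), "b": (120, 0.38)},
    "bot_loop": {"a": (300, 0.60), "b": (80, 0.05)},
    "new_event": {"a": (500, 0.50), "b": (10, 0.0)},
}
```

With this made-up data, `bot_loop` performs well in one cohort but collapses in another. `new_event` has not yet been observed widely enough, so `patterns_to_reinforce(results)` keeps only `daily_quests`.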

The multi-reward structure supports this.

Different cohorts respond to different signals, so the system uses different reward types accordingly. Points allow low-risk experimentation, stable rewards provide clearer value signals, and $PIXEL connects behavior back to the broader ecosystem.

This is not just variety. It is alignment between cohort behavior and incentive design.
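As a hypothetical sketch of that alignment, each cohort below gets its own split of a reward budget across points, stable rewards, and $PIXEL. The cohort names and percentages are invented for illustration and say nothing about real Pixels payouts. Integer rounding leftovers go to points, so the total always matches the budget.

```python
# Hypothetical reward mix per cohort: shares of a reward budget, summing to 1.
REWARD_MIX = {
    "experimenting": {"points": 0.8, "stable": 0.2, "PIXEL": 0.0},
    "committed": {"points": 0.3, "stable": 0.4, "PIXEL": 0.3},
    "extractive": {"points": 1.0, "stable": 0.0, "PIXEL": 0.0},
}


def allocate(cohort: str, budget: int) -> dict[str, int]:
    """Split an integer budget by the cohort's mix; rounding leftovers go to points."""
    mix = REWARD_MIX[cohort]
    alloc = {kind: int(budget * share) for kind, share in mix.items()}
    alloc["points"] += budget - sum(alloc.values())
    return alloc
```

Tilting an experimenting cohort toward points keeps early trial cheap, while a committed cohort receives more of the rewards that carry clearer value.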

Over time, this creates a system that learns from behavior instead of reacting blindly.

It identifies which patterns sustain engagement, which ones collapse under scale, and which ones lead to short-term extraction. Those patterns are then used to refine future decisions.

Most GameFi systems fail because they scale whatever behavior appears first. Pixels does not do that. It filters behavior through cohorts and only reinforces what continues to work across different conditions.

That is why the system remains stable even as it changes.

So when progression feels inconsistent inside Pixels, it is not because the system is unclear.

It is because Stacked inside Pixels is continuously reorganizing players based on how they behave.

That is the real mechanism.

Pixels is not trying to understand players one by one. It is trying to understand patterns of behavior, group them into cohorts, and shape outcomes based on those groups.

And once you see that, rewards stop looking universal.

They start looking like signals targeted at specific behavioral patterns inside Pixels.