There was a moment when I was using a crypto app during what looked like normal network activity, but something felt slightly off in the timing. A simple action went through, yet the confirmation didn’t settle the way I expected. It wasn’t a failure, just a delay that made me pause longer than usual. I remember thinking that the system wasn’t broken; it was just busy in a way I couldn’t see.

After seeing this happen a few times, what I noticed is that most crypto environments don’t really struggle at the surface. The interface still works, actions still go through, but the timing starts to stretch when demand increases. Some updates feel instant, others feel delayed, and that uneven rhythm often reflects what’s happening underneath more than what’s visible on top.

From a system perspective, this usually comes down to how behavior is coordinated under pressure. A user action is not just a standalone event; it becomes part of a shared pipeline that has to be validated, ordered, and synchronized. When activity is low, everything feels seamless. When activity rises, the system starts making subtle decisions about what moves first and what waits.

I often think about it like a marketplace that also clears payments in real time. When only a few people are trading, everything feels immediate and effortless. But when the crowd grows, the system doesn’t stop; it starts organizing itself. Some transactions are prioritized, some are queued, and timing becomes a reflection of internal structure rather than user intent.

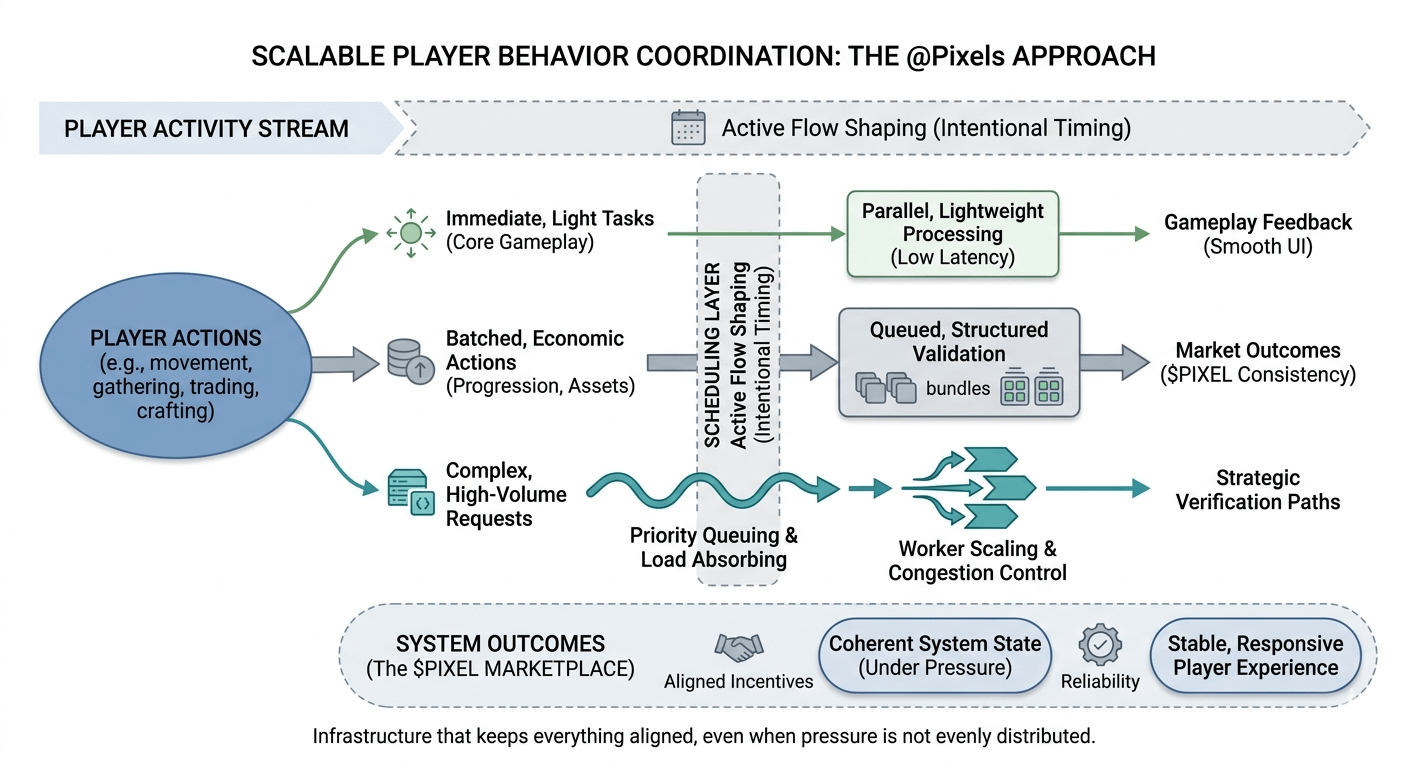

When I look at how @Pixels approaches this, what caught my attention is that it doesn’t seem to treat player activity as a single uniform stream. Instead, it feels like different types of actions are handled differently depending on their role in the system.

What interests me more is how that separation quietly supports scalability.

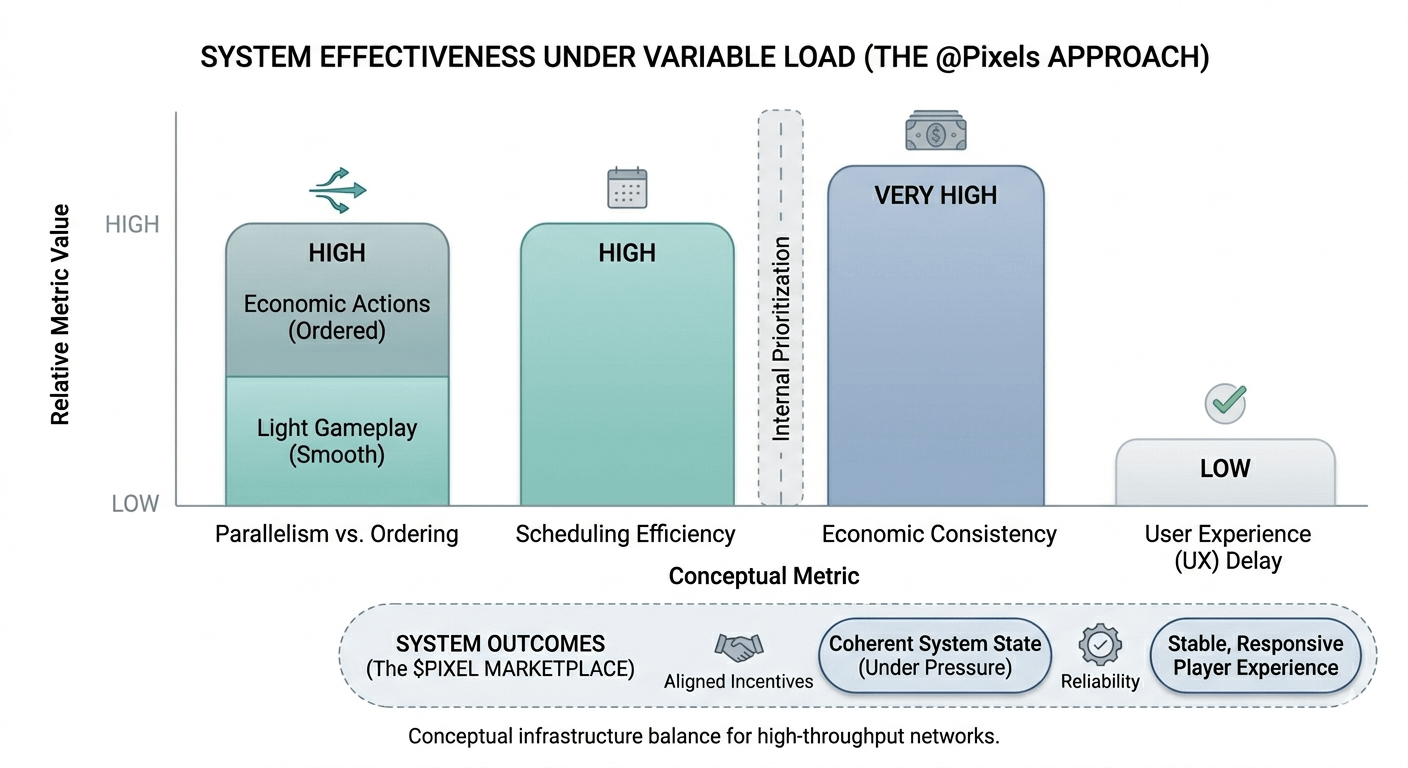

Scheduling feels intentional rather than reactive. Not every action moves with the same urgency, and that difference doesn’t feel accidental. Some interactions resolve instantly, while others are slightly paced. From a system perspective, that usually means timing is being used as a tool to prevent overload rather than just a byproduct of execution.
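
To make the idea of timing as a tool concrete, here is a minimal sketch of priority scheduling. It is not how Pixels is implemented; the two priority classes (`GAMEPLAY`, `ECONOMY`), the `Scheduler` class, and the action names are all hypothetical, chosen only to show lightweight actions being served ahead of heavier ones while each class keeps its own arrival order.

```python
import heapq
from itertools import count

# Hypothetical priority classes: a lower number is served first.
GAMEPLAY, ECONOMY = 0, 1

class Scheduler:
    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker: preserves arrival order within a class

    def submit(self, priority, action):
        heapq.heappush(self._heap, (priority, next(self._seq), action))

    def drain(self):
        """Pop every queued action in scheduling order."""
        order = []
        while self._heap:
            _, _, action = heapq.heappop(self._heap)
            order.append(action)
        return order

scheduler = Scheduler()
scheduler.submit(ECONOMY, "settle_trade")
scheduler.submit(GAMEPLAY, "move_player")
scheduler.submit(ECONOMY, "mint_reward")
scheduler.submit(GAMEPLAY, "water_crop")

processed = scheduler.drain()
print(processed)
# ['move_player', 'water_crop', 'settle_trade', 'mint_reward']
```

The economic actions arrived first here but resolve last, which is the kind of uneven pacing a user would notice as "some things feel instant, others wait."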

Task separation is another layer that stands out. The core gameplay loop remains light and responsive even when activity increases, while economic or progression-related processes seem to operate in another layer entirely. That separation reduces friction and keeps the visible experience stable even when the system is under pressure.

Verification flow also plays a role. In my experience watching networks, systems that scale well rarely process everything the same way. Some actions are lightweight and immediate, while others go through deeper validation paths. That layered approach helps prevent the system from slowing down uniformly.

Then there is congestion control. What matters in practice is not avoiding load, but absorbing it without breaking the rhythm. Backpressure is part of that: slowing specific parts of the system just enough so everything else can keep moving without collapse.
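
One common way to express backpressure is a bounded queue that refuses new work when it is full, so the caller has to back off instead of piling more load onto a slow stage. The sketch below assumes a hypothetical `economy_queue` with room for three items and a hypothetical `try_submit` helper; it illustrates the general technique, not any specific system.

```python
import queue

# Hypothetical bounded buffer in front of a slow processing stage.
economy_queue = queue.Queue(maxsize=3)

def try_submit(action):
    """Return True if accepted, False if the caller should back off and retry."""
    try:
        economy_queue.put_nowait(action)
        return True
    except queue.Full:
        return False

# Five actions arrive in a burst; only three fit.
results = [try_submit(f"trade_{i}") for i in range(5)]
print(results)   # [True, True, True, False, False]

# Once a worker finishes one item, there is room again.
economy_queue.get_nowait()
retry_ok = try_submit("trade_3")
print(retry_ok)  # True
```

The rejected submissions are the "slowing down" part: that one path is paced, while anything that does not go through this queue is unaffected.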

Worker scaling and workload distribution only really work when tasks are actually spread across different paths. If everything funnels into one bottleneck, scaling just adds capacity without solving the imbalance. The real improvement comes from how evenly the system distributes pressure.
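
A small sketch shows why adding workers does nothing if routing still funnels everything to one place. The worker count, the integer player ids, and both routing functions are assumptions made up for illustration.

```python
from collections import Counter

WORKERS = 4
player_ids = range(1000)  # hypothetical integer player ids

def route_funnel(player_id):
    # Every action lands on worker 0, no matter how many workers exist.
    return 0

def route_spread(player_id):
    # Spread actions across workers by player id.
    return player_id % WORKERS

funnel_load = Counter(route_funnel(p) for p in player_ids)
spread_load = Counter(route_spread(p) for p in player_ids)

print(dict(funnel_load))  # {0: 1000}
print(dict(spread_load))  # {0: 250, 1: 250, 2: 250, 3: 250}
```

In the first case three of the four workers sit idle, so the extra capacity never reaches the bottleneck.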

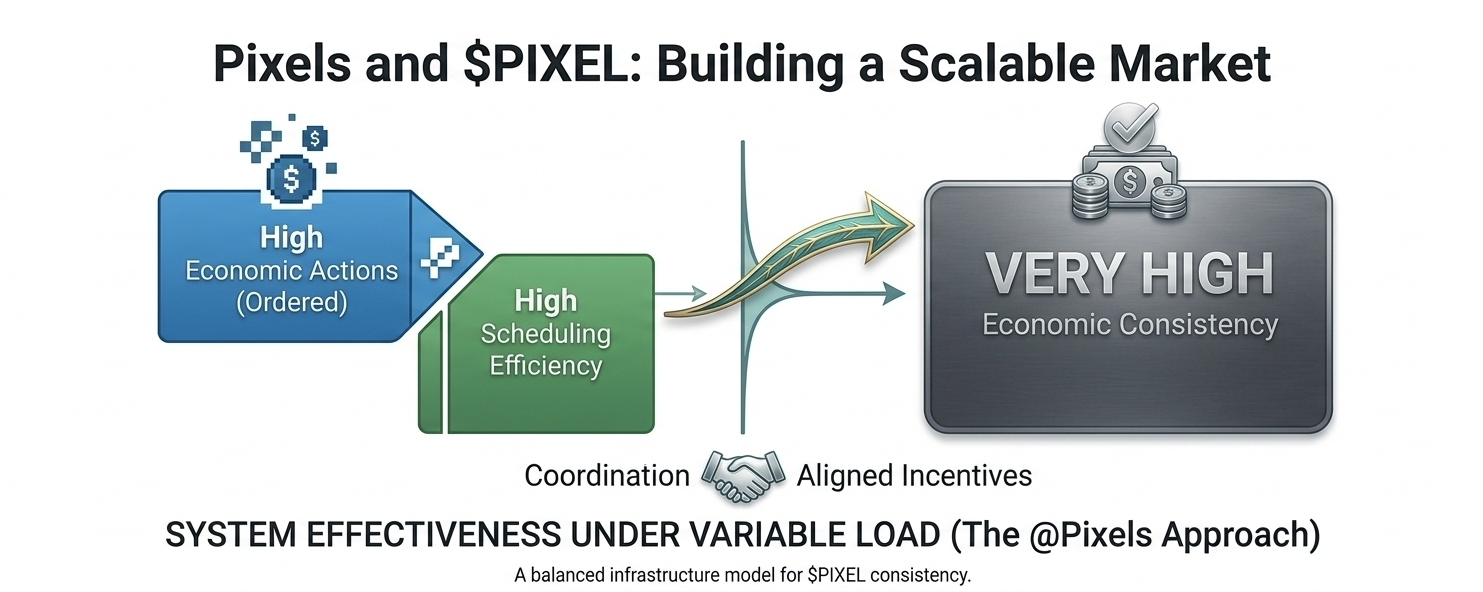

And then there’s the balance between ordering and parallelism. Parallel execution keeps gameplay feeling responsive, but ordered processing is often necessary to keep economic outcomes consistent. Managing both without letting them interfere with each other is where system design becomes visible.
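
One way to get both properties is to keep strict order within each account while letting different accounts run in parallel. The sketch below assumes a hypothetical list of `(account, delta)` balance events and a hypothetical `apply_lane` function; it is an illustration of per-key ordering, not a description of how Pixels handles its economy.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor

# Hypothetical balance changes, in arrival order.
events = [("alice", +50), ("bob", +20), ("alice", -30), ("bob", -5), ("alice", +10)]

# Group events into one ordered lane per account.
lanes = defaultdict(list)
for account, delta in events:
    lanes[account].append(delta)

def apply_lane(account, deltas):
    """Apply one account's changes strictly in order; return running balances."""
    balance, history = 0, []
    for delta in deltas:
        balance += delta
        history.append(balance)
    return account, history

# Lanes run in parallel; order only matters inside each lane.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = dict(pool.map(lambda item: apply_lane(*item), lanes.items()))

print(results)
# {'alice': [50, 20, 30], 'bob': [20, 15]}
```

Each account sees a consistent history regardless of which lane finishes first, so parallelism never reorders the steps that affect a single balance.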

What stands out to me is that Pixels doesn’t feel like it is just reacting to player activity. It feels like it is shaping how that activity flows through different layers of the system. And in that sense, PIXEL is not just part of the interaction; it feels tied to how that flow is organized over time.

From a broader perspective, this is where systems like this become interesting. Not in how they behave when things are quiet, but in how they adapt when participation increases.

A reliable system is not the one that feels perfectly smooth at all times, but the one that stays coherent when conditions change. Good infrastructure doesn’t draw attention to its mechanisms. It simply keeps everything aligned, even when the pressure isn’t evenly distributed.