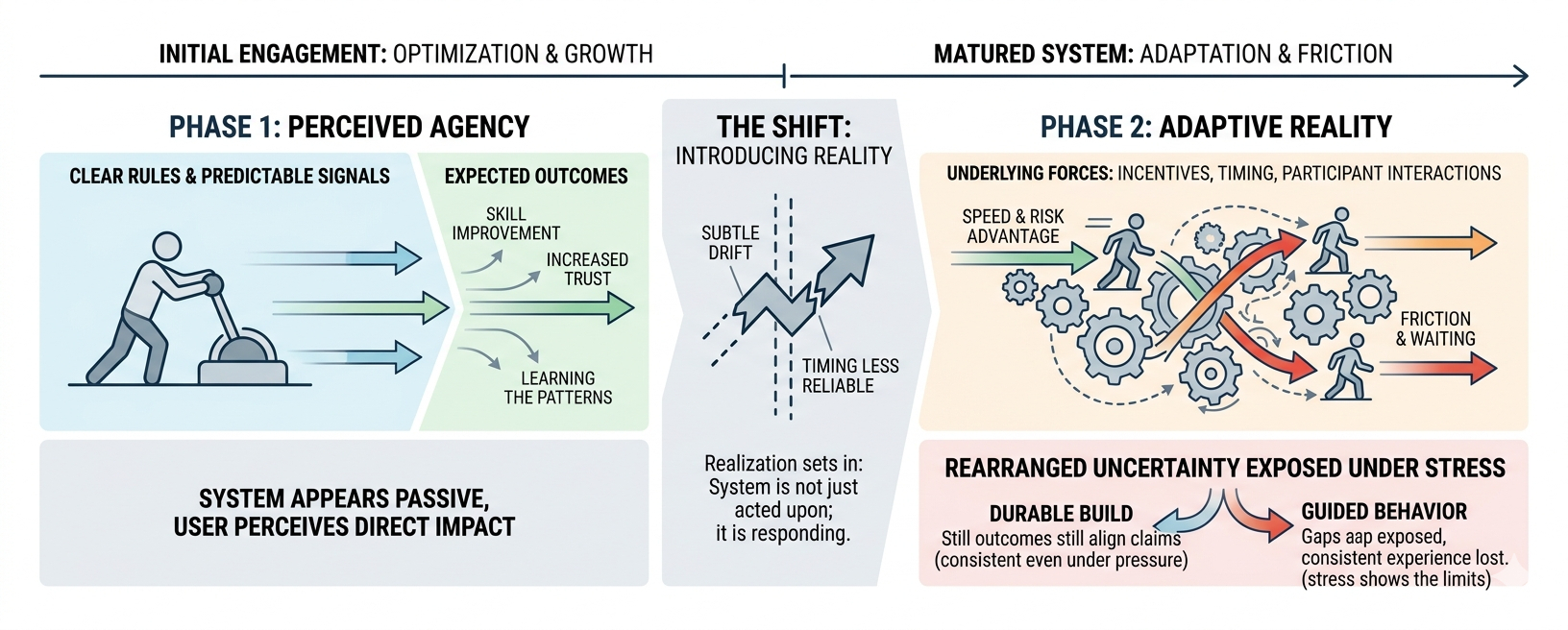

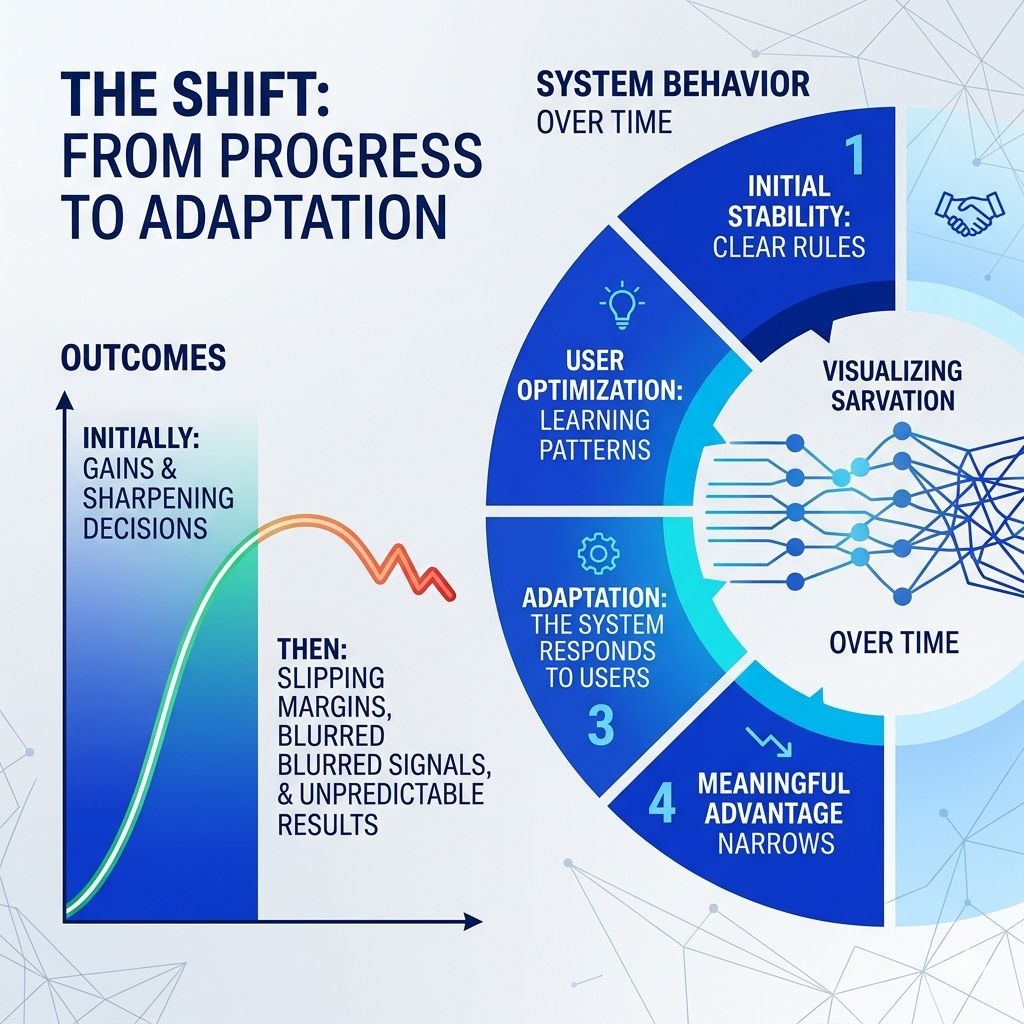

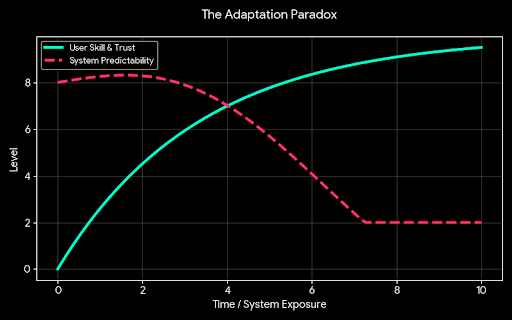

It didn’t feel dramatic when it changed. There was no moment where the rules were rewritten or some clear signal that the environment had shifted. It was more subtle than that. At first, it felt like progress: you learn the system, you get sharper, your decisions improve, and outcomes begin to reflect that. You start to trust your read on it. That trust is what pulls you deeper in.

Then something small starts to slip. The same decisions don’t produce the same results. Timing feels less reliable. What used to look like clear signals begin to blur. It’s not that the system stopped working; it’s that it stopped behaving in a way that could be anticipated from the outside. And that’s when the realization sets in: it’s no longer just you acting on the system. The system is adjusting to you, and to everyone else who has learned the same patterns.

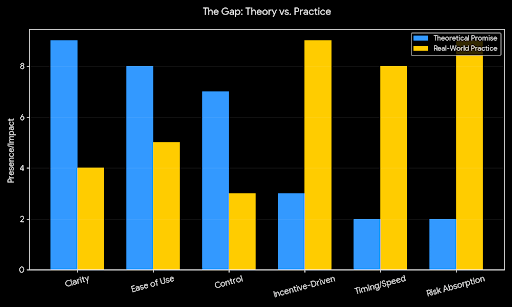

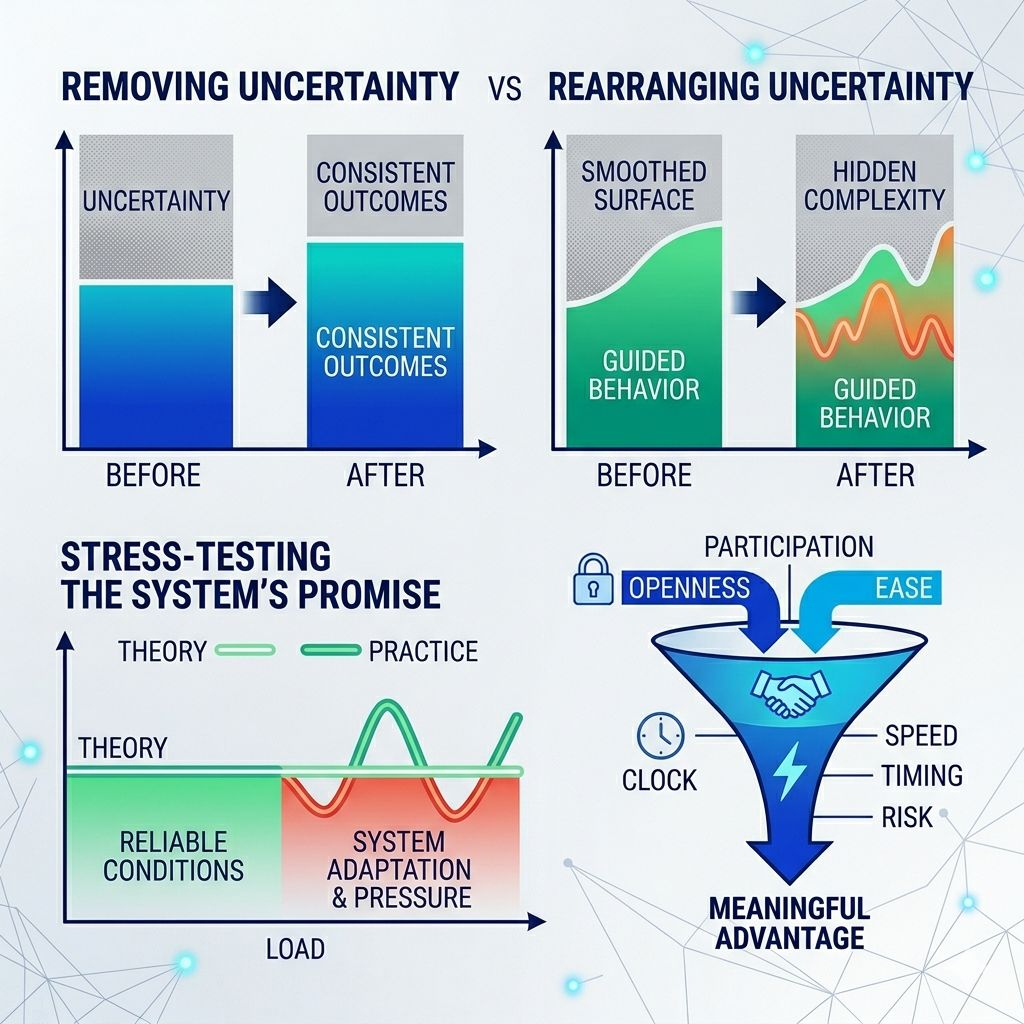

This is where the project’s promise starts to feel different in practice than it does in theory. On paper, it might be about making things simpler, more accessible, more efficient. And in a narrow sense, it often succeeds. It lowers the barrier to entry. It organizes interaction into something cleaner. But those improvements sit on top of a deeper layer that doesn’t disappear just because the interface looks better.

That deeper layer is where the real behavior lives. It’s shaped by incentives, by timing, by how different kinds of participants interact when they’re all trying to get something slightly different out of the same structure. No matter how clean the design is, those forces don’t go away. They just become less visible.

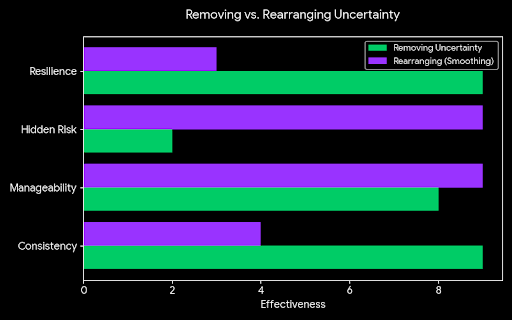

There’s a difference between removing uncertainty and rearranging it so it feels manageable. Removing it would mean that outcomes become more consistent, that the system holds its shape even when people push against it. Rearranging it means the uncertainty is still there, but it’s been smoothed into something that looks controlled. From the outside, both can feel similar. From the inside, they behave very differently over time.

You start to notice it in the margins. Not in the obvious paths, but in the moments where things don’t go exactly as expected. A delay here, a missed opportunity there, a shift in how quickly something resolves. Individually, these things are easy to ignore. Together, they start to form a pattern. The system isn’t breaking; it’s adapting. And that adaptation isn’t neutral. It tends to favor those who can move faster, see earlier, or absorb more risk.

That’s not necessarily a flaw. It’s what most systems do once they become active enough. But it does change the nature of what’s being offered. The language might still emphasize openness and ease, but the lived experience becomes more conditional. Participation remains broad, but meaningful advantage narrows.

What’s interesting is that none of this requires the system to explicitly exclude anyone. It doesn’t need to. It only needs to respond efficiently to pressure. As more participants optimize their behavior, the system begins to reflect that optimization. It becomes less about what’s allowed and more about what’s actually possible under real conditions.

You feel this most clearly when things speed up or tighten. Under normal conditions, everything seems to flow. Actions resolve, expectations hold, and the system feels stable enough to rely on. But when activity increases or conditions shift, the structure starts to show. Some parts move faster than others. Some participants seem to have more flexibility, while others are left waiting. The differences that were subtle before become harder to ignore.

That’s when the original idea gets tested. Not when everything is working smoothly, but when the system has to handle more than it was ideally designed for. If it really reduces uncertainty, it should become more dependable in those moments. The experience should stay consistent, even as the environment becomes more complex. But if it has only organized uncertainty into something that looks stable, then stress will expose the gaps.

The shift from feeling in control to feeling guided by the system is part of that test. It suggests that the structure has reached a point where individual actions are no longer isolated. They feed into a larger pattern that shapes what happens next. You’re still making decisions, but those decisions are being absorbed into something that is constantly adjusting around you.

That doesn’t make the system weak. In some ways, it might be the opposite. It might be what allows it to scale, to keep functioning as more people engage with it. But it does mean that the original promise (of clarity, of control, of reduced friction) needs to be understood differently. Those qualities may exist, but they exist within a system that is actively responding, not passively waiting to be used.

So the real question isn’t whether the idea makes sense or whether the experience feels smooth at first. It’s whether the structure holds when people stop interacting with it casually and start interacting with it seriously. When they learn it, test it, and push against it, does it continue to behave in a way that aligns with what it claims to offer?

If it does, then the shift you feel, from playing the system to being part of its response, might be a sign that something durable has been built. If it doesn’t, then that same shift might just mean the system has become better at guiding behavior without actually reducing the uncertainty it was meant to solve. And that distinction only becomes clear over time, when the pressure is real and the outcomes can’t be smoothed over by design.