I often catch myself thinking that whenever a working model emerges in GameFi, the market almost always mimics the outer shell: quests, tokens, 'smart' rewards. But copying doesn't work if the internal framework isn't replicated. In the case of @Pixels, that framework is the connection between data, analytics, and $PIXEL distribution under real load. And that's where scalability hits a wall.

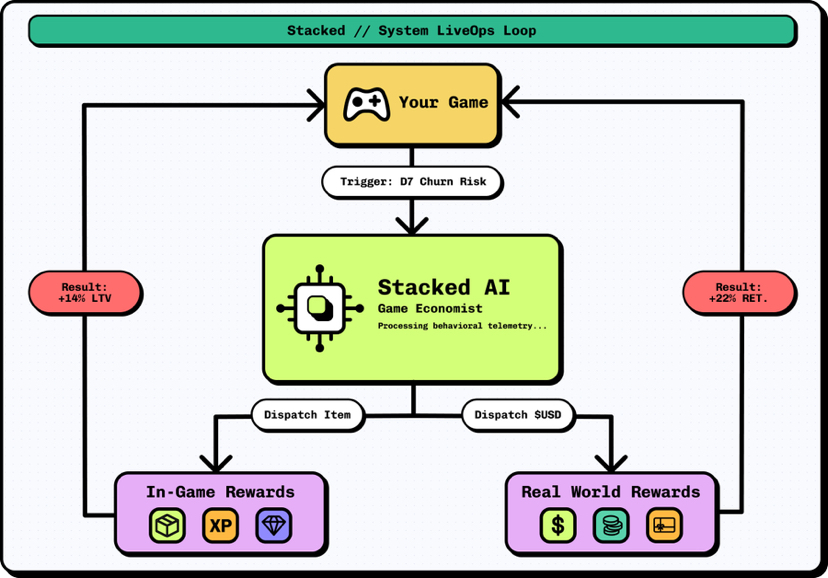

Stacked wasn’t 'designed on paper' as a perfect system. It grew out of pressure: bots, farming, retention failures, ineffective payouts. 200M+ rewards isn’t a showcase; it’s a stress test. At this volume, any mistake scales up: excess emission weighs on $PIXEL, incorrect incentives amplify the wrong behavior, and poor filtering brings back farming. If the system survives this, it means it has gone through a series of iterations that can’t be sped up by copying.

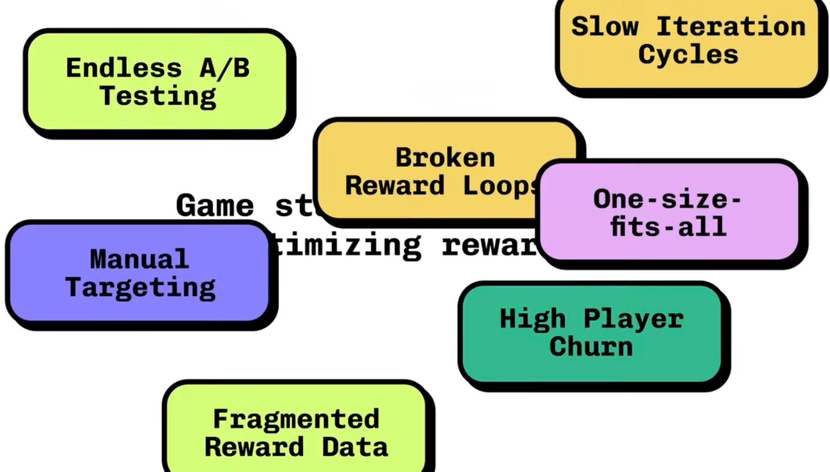

The key barrier is data. Behavioral analytics require dense signals: who leaves on D1–D7, where the cycle breaks, which actions correlate with players returning. A new project lacks this and operates on hypotheses. In @Pixels this layer has already been accumulated, so an AI economist isn’t just decoration, but a tool that shortens the cycle of 'hypothesis → $PIXEL payout → measurement → adjustment.' Without data, this cycle is either slow or inaccurate.
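To make 'who leaves on D1–D7' concrete, here is a minimal sketch of a retention curve computed from an activity log. The log format, the player ids, and the `retention_curve` helper are my own assumptions for illustration, not anything Pixels or Stacked has published.

```python
from datetime import date

# Hypothetical activity log: player id -> set of dates the player was active.
activity = {
    "p1": {date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 8)},
    "p2": {date(2024, 1, 1)},
    "p3": {date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 4)},
    "p4": {date(2024, 1, 1), date(2024, 1, 8)},
}


def retention_curve(activity, max_day=7):
    """Return {n: share of players active exactly n days after their first day}."""
    total = len(activity)
    curve = {}
    for n in range(1, max_day + 1):
        returned = sum(
            1
            for days in activity.values()
            if any((d - min(days)).days == n for d in days)
        )
        curve[n] = returned / total
    return curve


curve = retention_curve(activity)
for day, share in curve.items():
    print(f"D{day}: {share:.0%}")
```

With only four players the curve is noise, which is the point of the paragraph above: the measurement only becomes a usable signal when the log is dense.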

The second barrier is integration. Many projects have the separate pieces: anti-bot filters, quests, analytics. But they aren’t closed into one loop. In Stacked, the chain is tight: data → segmentation → decision → $PIXEL payout → effect measurement. The token here isn’t a universal reward, but a selective incentive directed where it yields measurable results. This reduces the share of 'empty' emissions and makes the pressure on $PIXEL manageable, not chaotic.
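The chain above can be sketched as a single pass in code. This is a toy illustration under my own assumptions: the segments, the `bot_score` field, the thresholds, and the payout amounts in `POLICY` are all hypothetical and do not describe how Stacked actually works.

```python
from dataclasses import dataclass


@dataclass
class Player:
    player_id: str
    sessions_last_7d: int
    bot_score: float  # hypothetical: 0.0 = clearly human, 1.0 = clearly a bot


def segment(p):
    """Segmentation step: assign each player to a hypothetical segment."""
    if p.bot_score >= 0.8:
        return "suspected_bot"
    if p.sessions_last_7d <= 2:
        return "at_risk"
    return "engaged"


# Decision step: hypothetical $PIXEL payout per segment.
POLICY = {"suspected_bot": 0.0, "at_risk": 5.0, "engaged": 1.0}


def run_cycle(players, returned_ids):
    """One pass of segment -> decide -> pay -> measure, reported per segment."""
    report = {}
    for p in players:
        seg = segment(p)
        stats = report.setdefault(seg, {"paid": 0.0, "players": 0, "returned": 0})
        stats["paid"] += POLICY[seg]
        stats["players"] += 1
        stats["returned"] += int(p.player_id in returned_ids)
    # Measurement step: tokens spent per player who actually came back.
    for stats in report.values():
        stats["cost_per_return"] = (
            stats["paid"] / stats["returned"] if stats["returned"] else None
        )
    return report


players = [
    Player("a", 1, 0.1),
    Player("b", 1, 0.2),
    Player("c", 10, 0.1),
    Player("d", 20, 0.95),
]
report = run_cycle(players, returned_ids={"a", "c"})
```

The measurement at the end is what closes the loop: `cost_per_return` per segment is the number the next round of `POLICY` adjustments would be based on.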

The third factor is economics versus farming. If payouts are predictable, they get optimized. When $PIXEL is tied to effects and timing, the stable ROI on farming drops. This doesn’t eliminate bots, but it shifts the equation: it’s more profitable to be 'useful' to the system than just active. To reproduce such logic, copying the rules isn’t enough; you need infrastructure and a history of calibrations.

Now about the market itself. The 'play-to-earn' model relies on inflow. It quickly ramps up activity but struggles to retain players. When payouts drop, players leave, and emission starts to weigh on the token. The shift I see in Pixels is towards 'play-and-stay': $PIXEL is used for retention and deepening engagement, rather than maximizing giveaways. This is costlier to set up but cheaper in the long run, as it reduces retention costs and increases LTV.

I don’t think this is the final form of GameFi, and the risks haven’t gone away. Players adapt to metrics, the model requires constant calibration, and scale can expose new weak spots. A mistake in segmentation, and $PIXEL goes to the wrong players; too soft a filter, and farming returns; too hard, and motivation drops. But having a closed loop allows for faster corrections than in static systems.

Honestly, in my opinion, most projects won’t replicate @Pixels not because the idea is complex, but because they lack the data scale, the system connectivity, and the discipline in managing $PIXEL emission. This isn’t a template that can be deployed; it’s a process that has to be lived through.