I didn’t catch the moment things changed. There wasn’t a clear break. Everything looked the same on the surface—running the usual loops, repeating familiar actions, moving through the routine without much thought.

Plant. Collect. Upgrade. Repeat.

At some point, even checking the $PIXEL chart became part of that rhythm. Not intentional—just something that slipped in naturally.

But somewhere along the way, the experience shifted.

It didn’t feel like I was playing anymore. Not in the usual sense. I was adjusting—subtly, almost automatically. Changing timing, skipping certain actions, favoring others. Not because I consciously decided to optimize, but because some choices simply started to feel more “correct” than others.

It wasn’t obvious. It felt like background conditioning.

I’ve seen enough Web3 loops to think I understood the pattern. Enter, learn the mechanics, maximize output, and eventually exit when the system either saturates or loses its appeal. That cycle has repeated enough times to feel predictable.

But this didn’t follow that script so cleanly.

The drop-off wasn’t as sharp. The loop didn’t immediately collapse into pure extraction. And the relationship between effort and outcome didn’t behave as linearly as expected.

That’s what stood out.

You could invest similar time across different activities and still end up with very different results. At first, it’s easy to label that as balance adjustments, just normal tuning. But over time, it feels less like simple tweaking and more like something layered beneath that tuning.

Not random. Not entirely fixed either.

More like the system is reacting—not just to how much is being done, but to how it’s being done.

That’s where the perspective changes.

It’s no longer just about action—it’s about translation.

How effectively does what you do convert into something the system recognizes as meaningful? Not all effort carries the same weight, even if it looks identical on the surface.

You don’t see that directly. You feel it gradually.

Certain patterns seem to “work” more often. Others lose relevance, even if they require the same input. Without realizing it, you stop experimenting freely and start leaning into what produces consistent outcomes.

And that subtly reshapes how you interact.

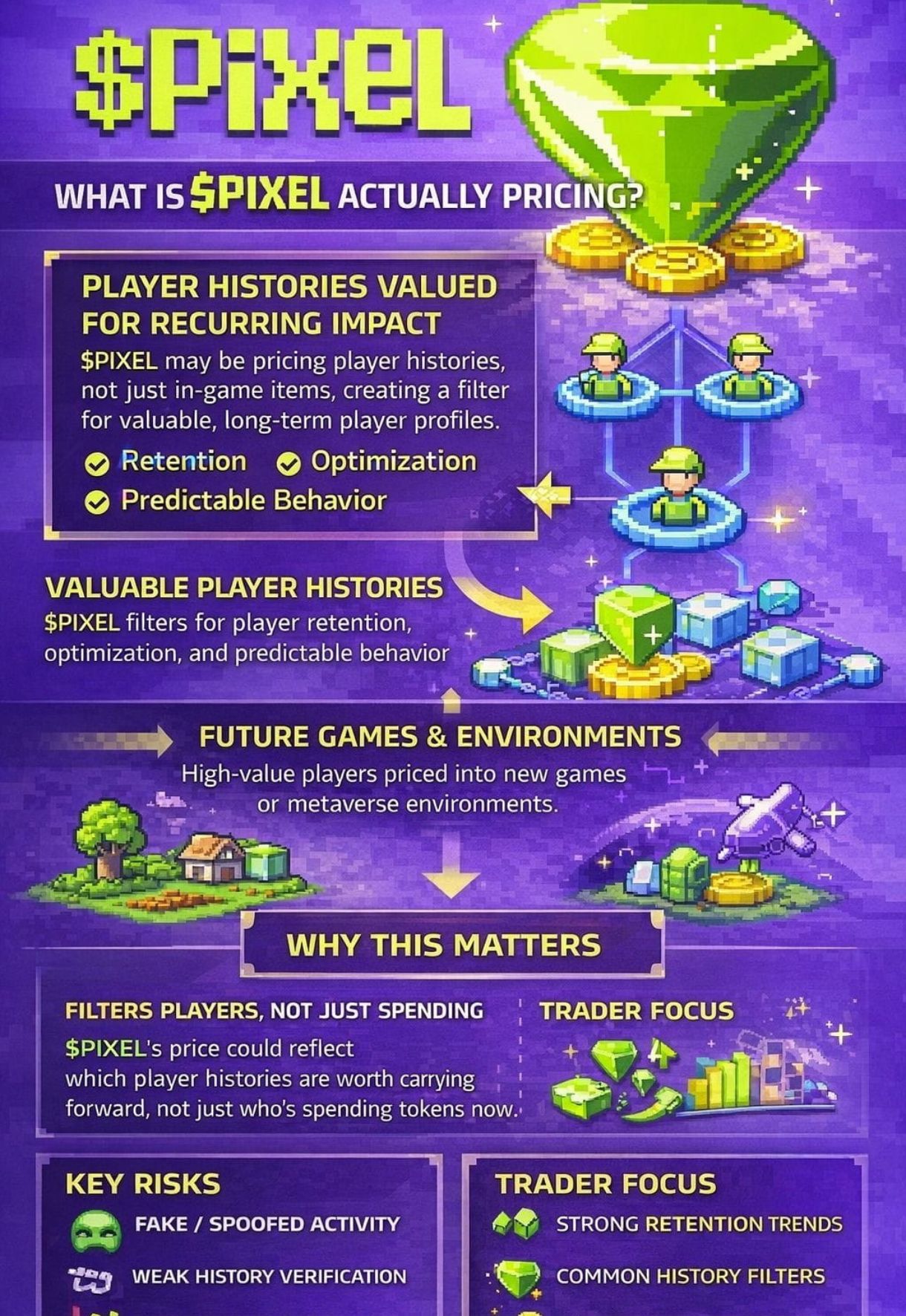

Most GameFi systems lean heavily on volume—more activity, more rewards. Simple cause and effect. But here, activity alone doesn’t seem sufficient. There’s another layer—alignment.

Alignment with what, exactly, isn’t clearly defined, and that might be intentional. The system seems to filter behavior, prioritizing some forms of participation over others without explicitly stating why.

Even the sinks start to look different through that lens.

They’re not just mechanisms to slow things down. They redirect flow. They influence where value accumulates and where it doesn’t. Fees, upgrades, progression steps—they don’t just restrict movement, they shape it.

At that point, it stops feeling like a straightforward game economy.

It starts to resemble a controlled environment—one that’s testing how behavior and incentives interact under constraint. Almost like different pieces—rewards, friction, pacing—are being tuned together.

There’s a sense that it’s more of a framework than a finished product.

Something that could extend beyond a single game if the structure holds.

But above all of that sits a completely different layer—the market.

And that layer plays by its own rules.

Price doesn’t wait for systems to mature. It reacts to attention, liquidity, momentum. So even if the internal design is carefully shaping behavior, the token itself moves according to external pressure.

That creates a disconnect.

One layer tries to refine participation and improve how value flows internally. The other reacts instantly to broader market dynamics, often ignoring that structure entirely.

And those two don’t always align.

You can have a system that’s logically sound—balanced, efficient, controlled—and still have a token that doesn’t reflect any of it in the short term.

That tension is hard to ignore.

There were moments where I caught myself questioning the experience:

Am I actually playing…

or just adapting to a system that already defined what matters?

That’s where it gets uncomfortable.

The more precisely a system defines “valuable behavior,” the more it narrows what players naturally do. You gain efficiency—but you lose some unpredictability.

And unpredictability is part of what makes games feel alive.

Players don’t just respond to rewards—they respond to how those rewards feel over time. If everything becomes too structured, exploration fades. You stop experimenting and start complying, often without noticing.

Still, one thing keeps standing out.

People come back.

And that matters more than any optimization layer or incentive model.

Because no system—no matter how refined—means anything if players don’t choose to reenter it.

Retention becomes the real signal.

So I’ve started to see $PIXEL less as a typical game token and more as part of a broader experiment—one that’s trying to understand how value moves when behavior, not just activity, becomes the input.

It doesn’t feel finished. Maybe it isn’t supposed to be.

A system can be technically well-designed and still miss what makes participation enjoyable in the first place.

But this doesn’t feel like pure extraction either.

It feels like it’s testing a boundary:

How far can incentive design go before it starts reshaping how people naturally behave?

And maybe that’s the real question here.

Not whether it works.

But whether something this structured still feels like a game… once you’re inside it.