Automation has always promised leverage. Do more with less. Scale effort beyond human limits. For years, this promise focused on scripts, bots, and rule-based workflows. They worked, but only within narrow boundaries. The moment conditions changed, systems broke or required human intervention.

AI was supposed to fix this. Models added flexibility, language understanding, and decision-making. Yet even with powerful models, something remained missing. Most AI systems still behave like advanced calculators. They respond, but they do not grow. They act, but they do not accumulate experience.

This is where @Vanar's philosophy becomes distinct.

Instead of treating intelligence as an endpoint, VANAR treats it as a process that unfolds over time. For any system to truly operate autonomously, it must preserve continuity. It must know what it has done, why it did it, and how that history should shape future actions. Without this, autonomy is an illusion.

Stateless systems struggle to scale as autonomous agents because they have no past. Each interaction exists in isolation. Even when outputs are correct, effort is wasted repeating reasoning that should already exist. This is why large systems often feel inefficient despite massive compute. They are intelligent, but amnesiac.
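To make the amnesia point concrete, here is a minimal Python sketch. It is an illustration only and not VANAR's API: `expensive_reasoning`, `StatelessAgent`, and `MemoryAgent` are hypothetical names, and the in-process dictionary stands in for whatever persistent memory layer a real system would use.

```python
from dataclasses import dataclass, field
from typing import Dict


def expensive_reasoning(task: str) -> str:
    """Hypothetical stand-in for a costly model call."""
    return f"plan for {task}"


@dataclass
class StatelessAgent:
    """Recomputes its reasoning on every request."""
    calls: int = 0  # how many times the costly step ran

    def handle(self, task: str) -> str:
        self.calls += 1
        return expensive_reasoning(task)


@dataclass
class MemoryAgent:
    """Keeps earlier conclusions and reuses them (assumed in-memory store)."""
    memory: Dict[str, str] = field(default_factory=dict)
    calls: int = 0

    def handle(self, task: str) -> str:
        if task not in self.memory:
            # Only reason from scratch when there is no history for this task.
            self.calls += 1
            self.memory[task] = expensive_reasoning(task)
        return self.memory[task]


stateless, remembering = StatelessAgent(), MemoryAgent()
for task in ["rebalance", "rebalance", "rebalance"]:
    stateless.handle(task)
    remembering.handle(task)

print(stateless.calls, remembering.calls)  # prints: 3 1
```

Both agents return the same answers, but the stateless one pays for the reasoning three times while the one with memory pays once. A production system would also need persistence across restarts and a policy for when stored conclusions go stale, which this sketch leaves out.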

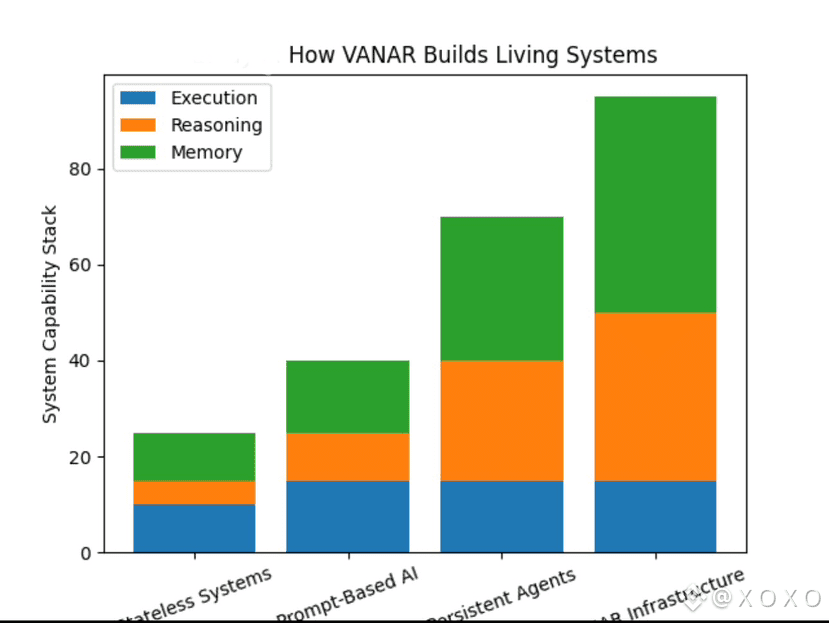

VANAR directly targets this problem by building infrastructure where memory and reasoning are not optional layers, but foundational ones.

In practice, this changes how intelligent systems behave. Agents can operate across tools without losing identity. Decisions made in one context inform actions in another. Over time, systems develop internal consistency rather than relying on constant external correction.

For builders, this represents a major shift in design mindset.

Instead of thinking in terms of prompts and responses, builders can think in terms of evolving systems. Workflows become adaptive rather than brittle. Agents become reliable rather than unpredictable. The system itself carries the burden of coherence, freeing developers to focus on higher-level logic.

This is especially important as AI moves closer to real economic activity.

Managing funds, coordinating tasks, handling sensitive data, or interacting with users over long periods all require trust. Trust does not emerge from intelligence alone. It emerges from consistency. A system that behaves differently every time cannot be trusted, no matter how advanced it appears.

By anchoring memory at the infrastructure level, VANAR reduces this risk. It allows intelligence to accumulate rather than fragment. It also creates a natural feedback loop where usage improves performance instead of degrading it.

The implications extend beyond individual applications.

Networks built around persistent intelligence develop stronger ecosystems. Developers build on shared memory primitives. Agents interoperate instead of existing in silos. Value accrues not just from activity, but from accumulated understanding across the network.

This is why VANAR is not competing with execution layers or model providers. It is orthogonal to them. It accepts that execution is abundant and that models will continue to improve. Its focus is on what those models cannot solve alone.

Memory. Context. Reasoning over time.

My take is that the next phase of AI will be defined less by breakthroughs in models and more by breakthroughs in infrastructure. The systems that win will be the ones that allow intelligence to persist, learn, and compound. VANAR is building for that future deliberately, quietly, and structurally.