Three in the morning. I am staring blankly at the dense code and white papers on my screen, and the coffee in my hand has long gone cold.

I am once again pondering that old cliché: who will own the next decade of blockchain? A few years ago, everyone was frantically competing on TPS (transactions per second), as if speed alone could solve every problem. But recently, while studying @Vanar's technical architecture, I keep having the same moment of recognition: this isn't a race about speed; it's an evolution toward 'intelligence.' The longer I look at the architecture, the clearer that evolution becomes.

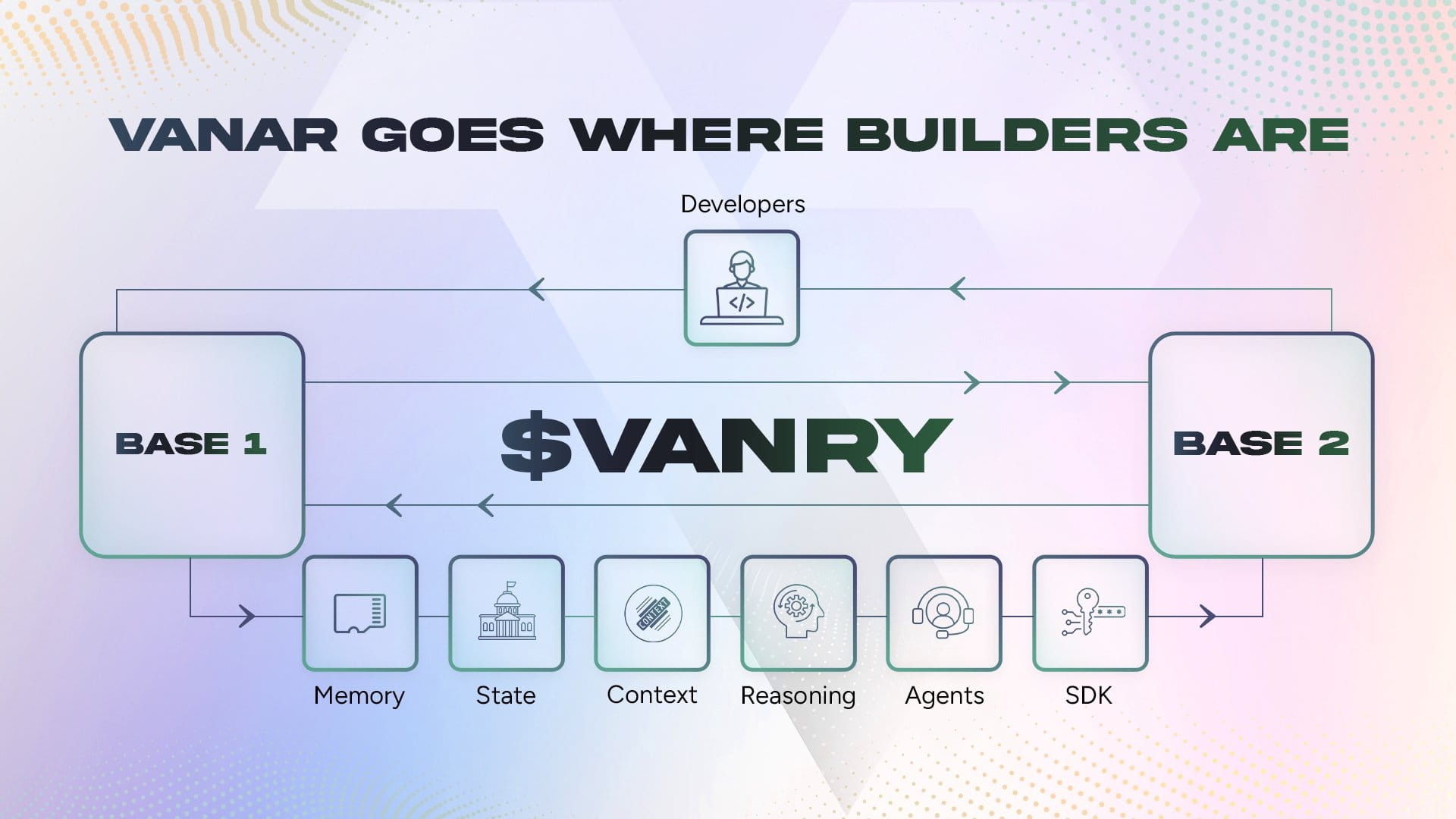

Looking at the architectural diagram of Vanar Chain on the screen, I can't help wanting to trace this line of reasoning. To be honest, the current public chain market resembles a group of people competing over who can run fastest while no one cares where they are running to. Everyone is shouting 'AI + blockchain,' but in my view, most so-called 'AI public chains' are just forcibly 'attaching' AI models to an old chain. It feels like putting a GPS on a horse-drawn carriage; it looks advanced, but the core power is still the horse.

But @vanar gives me a different feeling. It is native.

I have been pondering the weight of the word 'native'. When I read through their technical documents on the Neutron layer, this sense of difference became tangible. Usually, when we store data on-chain, we store only a hash value to save money and space, while the real data, such as NFT images or PDF documents, is actually kept on IPFS or on Amazon's cloud servers. This leads to a problem that has always made me anxious: so-called 'on-chain ownership' is partly an illusion. If the server hosting the file behind the NFT you bought goes down, the hash value in your hand becomes a piece of waste paper, because a hash can verify a file but cannot recreate it.
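To make the hash-only problem concrete, here is a minimal Python sketch. The dictionary standing in for an off-chain server, and the names `off_chain_store` and `on_chain_record`, are illustrative assumptions of mine, not any real chain's API.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest that a chain would typically record."""
    return hashlib.sha256(data).hexdigest()

# Hypothetical stand-ins: a dict plays the off-chain server, a string plays the chain.
artwork = b"pretend these bytes are an NFT image"
off_chain_store = {"nft-1": artwork}
on_chain_record = fingerprint(artwork)

def verify(token_id: str) -> bool:
    """True only if the off-chain file still exists and matches the on-chain hash."""
    data = off_chain_store.get(token_id)
    return data is not None and fingerprint(data) == on_chain_record

print(verify("nft-1"))        # the file is reachable, so the check passes
del off_chain_store["nft-1"]  # simulate the server going down
print(verify("nft-1"))        # the hash survives, but the image cannot be rebuilt from it
```

The on-chain record never changes in this sketch; what disappears is the only copy of the thing it points to.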

And Vanar's Neutron layer seems to tackle this issue head-on. I have been staring at the concept of 'semantic storage' for a long time. They are not storing hashes but using AI algorithms to perform semantic compression of the data itself. The stated 500:1 compression ratio surprised me a bit. What does this mean? It means that a complex contract or technical document that is originally 25 MB can be compressed into a 'Seed' of a few dozen KB (about 50 KB at 500:1) and then stored directly in the block.
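The arithmetic behind that figure is easy to check. This small Python sketch takes the quoted 500:1 ratio at face value and assumes decimal units (1 MB = 1000 KB); the function name is mine.

```python
def compressed_size_kb(original_mb: float, ratio: float) -> float:
    """Size in KB after compressing `original_mb` megabytes at `ratio`:1 (1 MB = 1000 KB)."""
    return original_mb * 1000 / ratio

print(compressed_size_kb(25, 500))  # 50.0
```

With binary units (1 MB = 1024 KB) the result would be 51.2 KB, so 'dozens of KB' holds either way.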

I simulated this process in my mind: when an AI Agent needs to read this contract, it does not need to search the world for the original file off-chain; it can decompress, read, and understand it directly on-chain. This sense of 'presence' of data is something that the vast majority of public chains cannot achieve. This is not just a change in storage methods; it is equipping AI with a 'native brain'. An AI without memory cannot handle complex tasks, while Neutron is like equipping the blockchain with a hippocampus, turning on-chain data into 'knowledge' that can be understood by AI, rather than cold binary code.

My thoughts drifted a bit, and I pulled my focus back to their five-layer architecture. Vanar Chain sits at the bottom, Neutron handles memory, and above it is Kayon, the reasoning layer.

When I saw Kayon, what flashed through my mind was the rigidity of today's smart contracts. To put it bluntly, they are simply 'if A happens, then execute B': completely linear, rigid logic that cannot understand human language or complex context. Kayon's ambition seems to lie in giving on-chain logic 'reasoning capabilities'.

I imagine a scene like this: in the future, a DeFi protocol does not liquidate merely because a price threshold was hit. Instead, an AI Agent analyzes market sentiment, macro news, and even on-chain capital flows through the Kayon layer, and then decides 'whether to liquidate' within that complex context. This is no longer simple 'automation'; this is 'intelligence'. What Vanar is doing seems to be providing the infrastructure for this future. If code can think like humans, or at least process fuzzy logic like an extremely intelligent assistant, then the application layer of Web3 can truly explode.
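The contrast I have in mind can be sketched in a few lines of Python. This is a toy under loud assumptions: the `sentiment` and `net_inflow` signals, the thresholds, and the equal weighting are all invented for illustration, and none of it reflects how Kayon actually works. A real reasoning layer would be far more than a weighted sum.

```python
from dataclasses import dataclass

@dataclass
class MarketContext:
    collateral_ratio: float  # collateral value divided by debt value
    sentiment: float         # hypothetical signal: -1.0 (panic) to 1.0 (euphoria)
    net_inflow: float        # hypothetical signal: -1.0 (capital fleeing) to 1.0 (arriving)

def rigid_rule(ctx: MarketContext, threshold: float = 1.5) -> bool:
    """Classic 'if A then B': liquidate as soon as the ratio dips below the threshold."""
    return ctx.collateral_ratio < threshold

def contextual_rule(ctx: MarketContext, threshold: float = 1.5, hard_floor: float = 1.2) -> bool:
    """Toy context-aware rule: always liquidate below the hard floor; between the
    floor and the threshold, liquidate only if the combined outlook is negative."""
    if ctx.collateral_ratio >= threshold:
        return False
    if ctx.collateral_ratio < hard_floor:
        return True
    outlook = 0.5 * ctx.sentiment + 0.5 * ctx.net_inflow
    return outlook < 0.0

# A shallow dip while sentiment and inflows are still positive.
dip = MarketContext(collateral_ratio=1.4, sentiment=0.6, net_inflow=0.2)
print(rigid_rule(dip), contextual_rule(dip))
```

For the shallow dip above, the rigid rule liquidates while the contextual rule holds; both agree once the ratio falls under the hard floor.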

Many projects on the market now rush to connect to the ChatGPT API to ride the hype and then claim to be AI projects. Every time I see this, I roll my eyes internally. Relying on an external API not only adds latency; once OpenAI's interface goes down, the entire on-chain application is paralyzed. Not to mention the privacy issue: would you dare to hand over your core financial logic completely to a centralized black-box model?

Vanar's idea is obviously trying to avoid this pitfall. They place reasoning and validation on-chain, or at least solve it at the protocol layer, rather than outsourcing it to the giants of Web2. This is what Web3 should look like: decentralized intelligence.

I couldn't help but think of the news I recently saw about their collaboration with Google Cloud and NVIDIA. Normally, I don't pay much attention to this 'logo wall' style of collaboration, but combined with their tech stack, this one is quite interesting. NVIDIA provides computing power tools, and Google Cloud offers green node support, which looks more like paving the way for high-load AI computing than simple brand endorsement. After all, running even a simplified reasoning model on-chain places very high demands on infrastructure.

At this point, I thought of those L2s that are still obsessing over gas fees and TPS. It is not that these things are unimportant, but they belong to a different dimension. If the future internet consists of billions of AI Agents interacting, trading, and signing contracts on-chain, then what they need most may not be a hundred thousand transactions per second, but answers to questions like 'Can I understand this contract?', 'Is this data real?', and 'Can I establish long-term memory on this chain?'.

In this dimension, Vanar seems to be ahead. It treats 'intelligence' as a first principle rather than an option.

Just now, I casually checked the recent discussions in the #Vanar community and found that people are beginning to notice the shift from 'application chains' to 'intelligent chains'. In the past, when we said 'everything on-chain', it was actually quite cumbersome. Uploading a pile of junk data is not only expensive but also useless. Now, with Neutron's semantic compression and Kayon's reasoning, we may really achieve 'everything has spirit': every asset on-chain, whether a financial instrument or a game item, could carry logic that AI can read and operate on.

This depth of technology excites me, but it also leads me into deeper contemplation. Building such a system is a monumental task. From optimizing semantic compression algorithms to controlling the cost of on-chain reasoning, each link is a hard nut to crack. I look at the technical details about 'Seeds' on the screen and can't help but sigh; these people really want to make fundamental changes at the core logic level.

For developers, writing code in this ecosystem may no longer be as simple as writing Solidity; instead, it requires designing the thinking path of Agents. I may need to define how my AI retrieves memories in Neutron, how it calls Kayon for judgment, and ultimately executes automated operations through the Axon layer. This completely changes the development paradigm of DApps. Future developers may resemble 'trainers' or 'logic architects' of AI.
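As a thought experiment, that 'thinking path' might look like the Python sketch below. Everything here is hypothetical: `recall`, `judge`, and `act` are placeholders I made up for the memory, reasoning, and execution steps, and they are not real Neutron, Kayon, or Axon APIs.

```python
from typing import Callable

# Hypothetical stand-ins for the three layers; none of these are real Vanar APIs.
MemoryLookup = Callable[[str], list[str]]   # memory step (Neutron-like)
Reasoner = Callable[[str, list[str]], str]  # reasoning step (Kayon-like)
Executor = Callable[[str], str]             # execution step (Axon-like)

def run_agent(task: str, recall: MemoryLookup, judge: Reasoner, act: Executor) -> str:
    """Retrieve context, decide on an action, then execute it."""
    memories = recall(task)
    decision = judge(task, memories)
    return act(decision)

# Toy implementations so the sketch runs end to end.
seeds = {"loan": ["borrower repaid 3 previous loans on time"]}

def recall(task: str) -> list[str]:
    """Return every stored fact whose key appears in the task description."""
    return [fact for key, facts in seeds.items() if key in task for fact in facts]

def judge(task: str, memories: list[str]) -> str:
    """Approve only when some remembered fact shows on-time repayment."""
    return "approve" if any("on time" in m for m in memories) else "reject"

def act(decision: str) -> str:
    """Pretend to execute the decision and report what was done."""
    return f"executed:{decision}"

print(run_agent("review loan request", recall, judge, act))
```

The point of the shape is that the developer's work moves from writing one contract function to wiring together what the agent remembers, how it judges, and what it is allowed to do.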

I thought of that classic 'impossible triangle'. Perhaps AI blockchains have a new triangle of their own: reasoning depth, response speed, and degree of decentralization. Vanar's current architecture seems to be attempting to balance these three through layering. The bottom layer is responsible for consensus and settlement, while the middle layers are responsible for memory and reasoning. This modular design philosophy strikes me as the most sensible approach at present.

The night is getting deeper, and the city outside has completely quieted down, but the neurons in my brain are still jumping wildly.

I wonder what the future scenarios will look like if this architecture really works. Perhaps on Vanar, I will have a 'second brain' that truly belongs to me. It is not just storing my files; it understands my transaction habits, remembers every white paper I have read (in the form of compressed Seed), and it can even help me analyze whether the new DeFi protocols fit my risk preferences while I sleep. Moreover, all this data and logic are verifiable on-chain and do not belong to any tech giant.

This is the future that Web3 promises us, right?

The current market is always too restless, and the red and green price bars easily obscure the essence of the technology. But I prefer to focus on these dry architectural diagrams, because I know that when the tide goes out, what remains to define the next era will be the infrastructure that solves core pain points.

And in the AI x Crypto track, the pain point is this: AI needs decentralized computing power, storage, and verification. Most current chains cannot provide these, and Vanar is trying to.

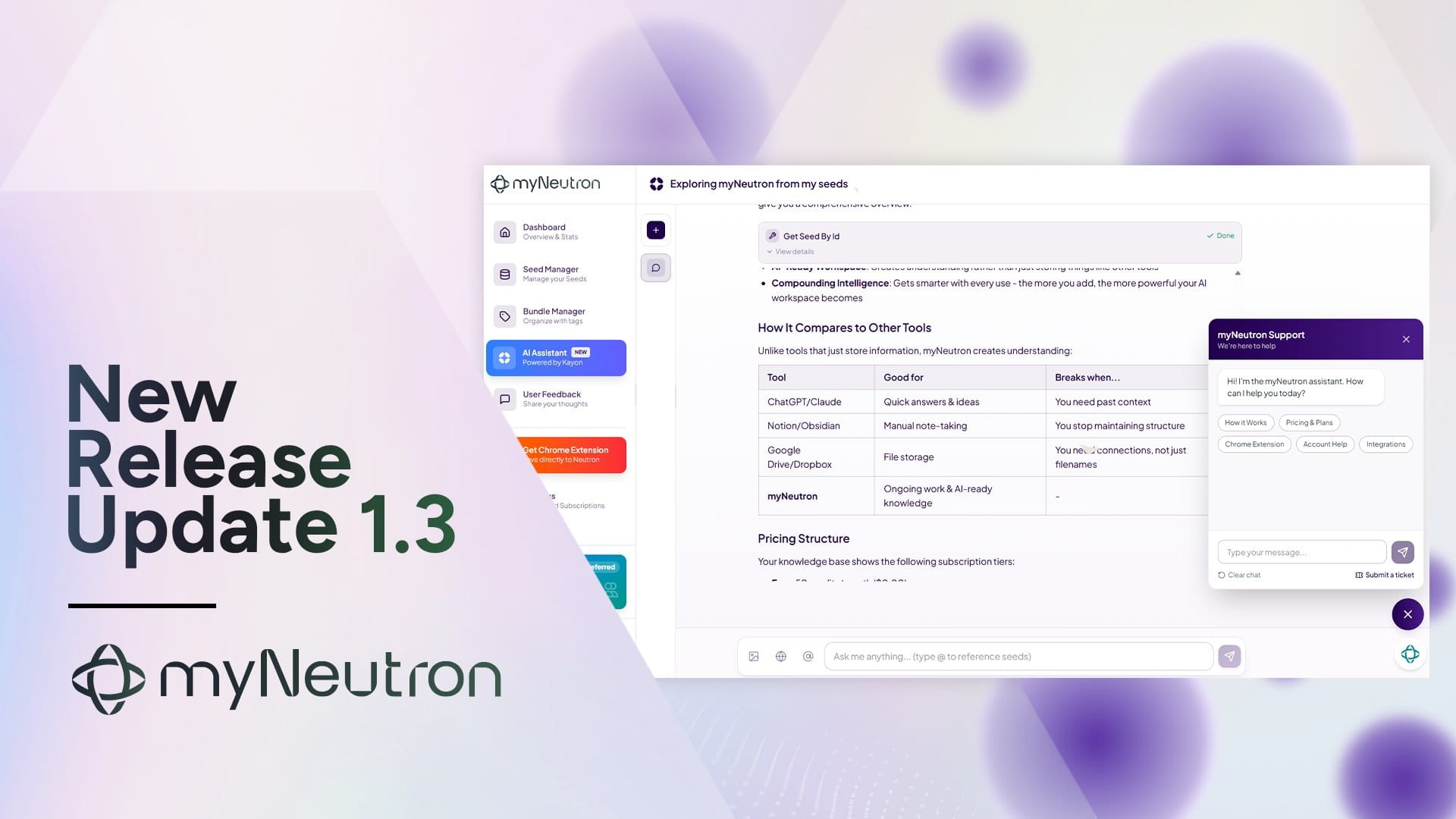

I looked back at their recent roadmap. From the launch of myNeutron to the progress of the Vanguard testnet, every step is focused on 'making blockchain smarter'. This kind of execution is actually quite rare in the crypto circle. Many projects just make promises; few can turn something as sci-fi-sounding as 'semantic storage' into reality.

Writing to this point, I suddenly felt that perhaps we should no longer define @vanar with the term 'public chain'. It is more like a decentralized intelligent cloud. In this cloud, computing power, data, and algorithms are no longer monopolized resources but public infrastructure that can circulate through tokens.

I imagine a day in the future when thousands of AI Agents shuttle through the #Vanar network. They exchange high-density information compressed by Neutron, reach consensus through Kayon, and automatically execute complex business logic. Humans might only need to play the roles of supervisors and rule-makers. How far are we from that world?

I glance at the time in the bottom right corner of the screen: it is almost four in the morning. But I am not sleepy at all. The thrill of technological change works much better than caffeine.

For those of us who have been crawling through this industry, what we fear most is not losing money but missing the moment of paradigm shift. When Ethereum introduced smart contracts, turning blockchain from a 'ledger' into a 'computer', it was a leap. Now, Vanar is trying to transform blockchain from a 'computer' into a 'brain'; could this be the next leap?

I can't guarantee it, but looking at this tight five-layer tech stack, my intuition tells me: the direction is right.

In this noisy market, projects that can calmly focus on fundamental architectural innovation deserve another look. I closed my notebook, and the phrases 'semantic seeds' and 'on-chain reasoning' were still echoing in my mind.

The sky outside is getting bright. The AI era of blockchain may also be at the moment before dawn. We are standing at a huge watershed: on one side is the old mechanical ledger, and on the other is the new intelligent network. I feel very fortunate to be focusing on the latter now.

These are all my thoughts tonight, or rather this morning: about technology, about the future, and about a more intelligent, decentralized world that may be built from code and algorithms. #vanar $VANRY