I have spent far too many nights scrolling through endless crypto narratives that all seem to recycle the same set of buzzwords without ever landing on anything that feels truly grounded. Decentralized this, tokenized that, trustless everywhere: it’s hard not to feel a bit numb when another whitepaper drops claiming to “fix” some deep structural problem the broader market has wrestled with for years. I came to Mira Network with that weary skepticism, not because I doubt innovation is possible, but because I’ve learned that the difference between real solutions and catchy slogans usually reveals itself only when you slow down and watch how a project positions itself against real-world friction rather than abstract ideals.

At first glance, Mira can easily be filed alongside many ambitious narratives: it’s a blockchain-powered protocol, it has a token, there are partnerships and ecosystem metrics. But when you begin to disentangle what the project actually tries to do, something more specific starts to emerge — a response not to nebulous crypto fantasies, but to a very tangible problem in the broader world of artificial intelligence. In essence, Mira does not purport to make AI smarter or more capable. Instead, it tries to make AI more reliable. And that distinction matters more than you might realize until you watch how AI systems influence real markets and decisions today.

Unblock Media

If you’ve spent any time using large language models or generative systems in contexts that matter, such as research feeds, tools embedded in trading environments, automated reporting channels, or customer-facing assistants, you quickly grasp that impressive outputs are not necessarily trustworthy outputs. Models “hallucinate” facts, invent dates, misattribute quotes, and state incorrect assertions in the same confident tone as well-supported answers. In markets where information latency, accuracy, and execution precision are everything, these errors are not minor inconveniences; they are structural risks. A flawed data summary can lead to erroneous trading decisions; a misleading explanation of events can exacerbate volatility around an asset; an incorrect regulatory interpretation can skew compliance strategy. And the deeper these systems are embedded, the more each error costs. Mira’s thesis sits directly on that fault line: what if the industry could build a trust layer that makes AI outputs auditable and verifiable before they propagate into decision systems?

Messari

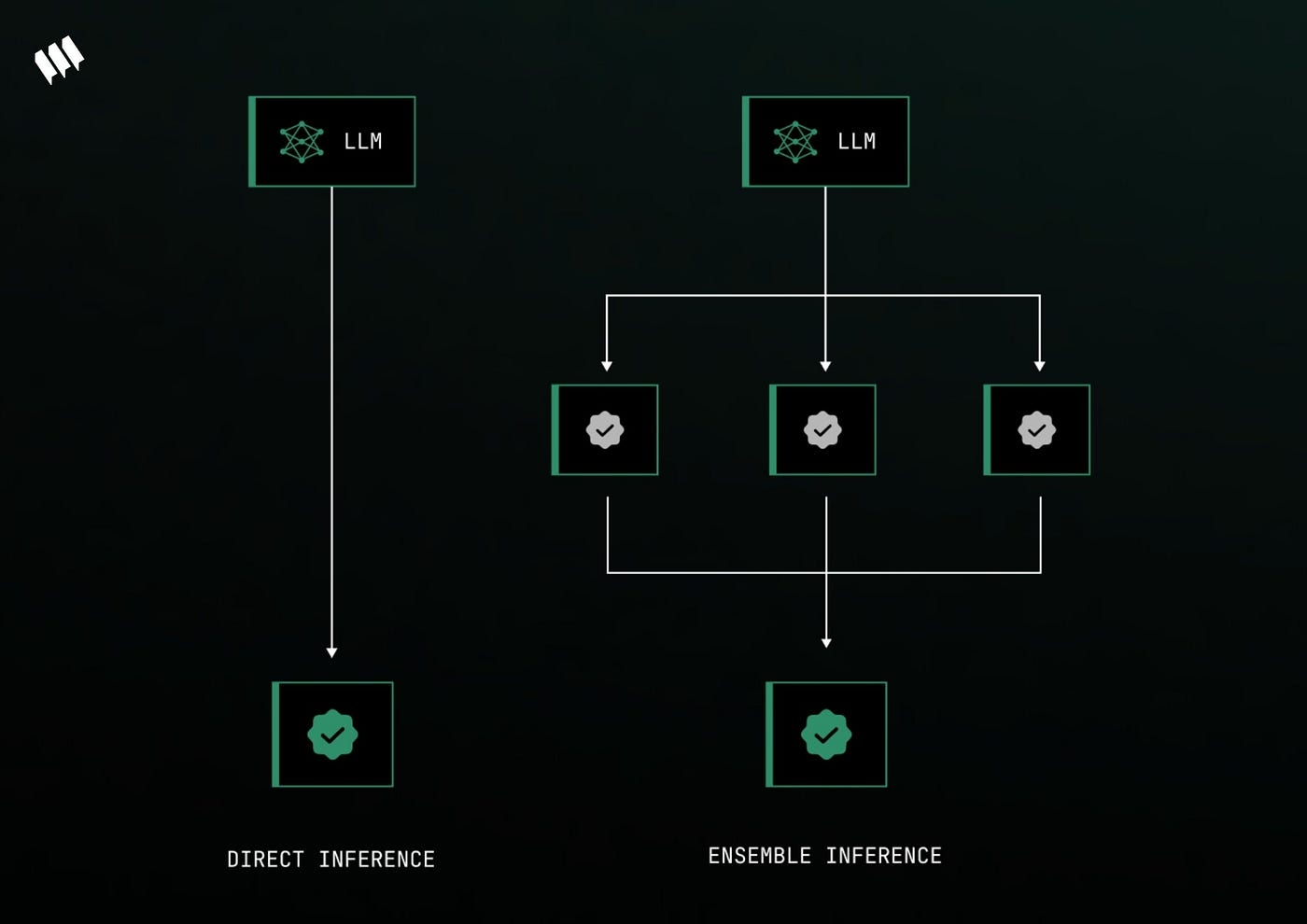

Rather than building another language model or generative engine, Mira rethinks the pipeline around verification. When an AI produces an output, such as a summary, an analysis, or a response, that output is broken down into individual factual claims. Instead of assuming the model’s internal confidence predicts truth, the set of claims is broadcast to a network of independent verifier nodes, where each claim is checked by multiple distinct AI models. Think of it less as an editing pass and more as distributed peer review: multiple views are combined, and a claim counts as verified only when a supermajority consensus is reached. Verified claims are cryptographically certified with transparent records showing which models participated and how they voted. This certificate can then accompany the output wherever it travels, so platforms, end users, and auditors can see that what they are reading has passed through a trust circuit rather than stopping at a single black-box model.

Blockmedia
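To make that flow concrete, here is a minimal Python sketch of claim-level supermajority voting followed by a tamper-evident record. It assumes the output has already been split into claims, treats each verifier model as a plain function returning True or False, and uses a two-thirds threshold; `verify_claims`, `make_certificate`, and the threshold are hypothetical names and values, not Mira’s actual API or parameters. The SHA-256 digest only stands in for the protocol’s cryptographic certificate; a production system would at least sign the record.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical: a verifier is any callable that returns True if it judges a claim correct.
Verifier = Callable[[str], bool]

# Assumed two-thirds supermajority, kept as integers to avoid float rounding.
THRESHOLD_NUM, THRESHOLD_DEN = 2, 3


@dataclass
class ClaimResult:
    claim: str
    votes: Dict[str, bool]  # verifier name -> approve (True) / reject (False)
    verified: bool


def verify_claims(claims: List[str], verifiers: Dict[str, Verifier]) -> List[ClaimResult]:
    """Poll every verifier on every claim; accept a claim only on a supermajority of approvals."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in claims:
        votes = {name: bool(check(claim)) for name, check in verifiers.items()}
        approvals = sum(votes.values())
        verified = approvals * THRESHOLD_DEN >= THRESHOLD_NUM * len(votes)
        results.append(ClaimResult(claim, votes, verified))
    return results


def make_certificate(results: List[ClaimResult]) -> dict:
    """Record which verifiers voted and how, plus a SHA-256 digest of that record.

    The digest makes later edits to the record detectable; it is not a signature.
    """
    record = [{"claim": r.claim, "votes": r.votes, "verified": r.verified} for r in results]
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return {"record": record, "sha256": hashlib.sha256(payload).hexdigest()}


if __name__ == "__main__":
    # Toy stand-ins for independent AI models.
    toy_verifiers = {
        "model_a": lambda c: "1969" in c,
        "model_b": lambda c: "1969" in c,
        "model_c": lambda c: True,  # an overly agreeable model
    }
    outcome = verify_claims(
        ["Apollo 11 landed on the Moon in 1969.", "Apollo 11 landed on the Moon in 1972."],
        toy_verifiers,
    )
    for r in outcome:
        print(r.claim, "->", "verified" if r.verified else "rejected")
    print(make_certificate(outcome)["sha256"])
```

The second claim is rejected because only the overly agreeable `model_c` approves it: one approval out of three falls short of two-thirds, which is exactly the case where a single confident model would otherwise have passed the error through.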

There is beauty in that simplicity. Conventional verification approaches today (manual reviews, rule-based filters, or internal confidence scores) suffer from latency, bias, and limited scalability. In a way, Mira acknowledges that no single model, no matter how large, can reliably police its own outputs. Instead of hoping that retraining, bigger datasets, or more compute will miraculously resolve hallucination, Mira treats agreement across diverse models as the mechanism for trust. This shift from centralized self-assessment to distributed consensus may sound abstract, but if you think about fault tolerance in markets, it resonates. Price discovery, for example, is not derived from a single bid or ask but from the aggregation of diverse participants. Similarly, validation that emerges from multiple independent evaluators carries a robustness that self-certification cannot.

Messari
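The price-discovery analogy can be put into numbers under one strong assumption: that each verifier errs independently with the same probability p. The sketch below computes the chance that a false claim still collects a two-thirds supermajority of wrong approvals. The 10% error rate is purely illustrative, and real models trained on overlapping data make correlated mistakes, so these figures are an optimistic bound on what diversity buys rather than a property of Mira’s network.

```python
from math import comb


def supermajority_quorum(n: int) -> int:
    """Smallest number of votes that is at least two-thirds of n (ceiling of 2n/3)."""
    return -(-2 * n // 3)


def false_accept_probability(n: int, k: int, p: float) -> float:
    """Probability that at least k of n independent verifiers, each wrong with
    probability p, approve a false claim: sum over i >= k of C(n, i) p^i (1 - p)^(n - i)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


if __name__ == "__main__":
    p = 0.10  # illustrative per-verifier error rate, not a measured figure
    for n in (1, 3, 5, 7):
        k = supermajority_quorum(n)
        print(f"{n} verifier(s), quorum {k}: {false_accept_probability(n, k, p):.5f}")
```

With p = 0.1, the false-acceptance rate falls from 0.1 for a single self-checking model to about 0.028 with three verifiers and about 0.00046 with five. The steep drop depends entirely on independence, which is why diversity of models, not just their number, is the point.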

That said, the trade-offs in this design are palpable. Any time you decentralize, you invite complexity and potential friction. Verification on a distributed network means additional computational overhead, coordination latency, and dependence on network participants’ honesty and performance. Mira tries to manage this with a hybrid consensus mechanism that blends delegated proof-of-stake dynamics with incentives and penalties tied to the accuracy of verification work, and by letting GPU resource providers (called node delegators) support the verification process without running nodes themselves. Economic incentives encourage honest participation, and slashing penalties hit nodes that consistently provide low-quality votes, which in theory aligns interests toward accuracy. In practice, though, you have to watch how these incentive systems withstand adversarial behavior, collusion attempts, and the inevitable cycles of participation and exit that decentralized networks face.

Mira
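The incentive logic can be illustrated with a deliberately simplified settlement loop. This sketch assumes that “low-quality votes” are measured as disagreement with the round’s consensus, that agreeing votes earn a reward proportional to stake, and that a node whose disagreement rate stays above a tolerance over a minimum number of rounds loses a fixed share of its stake. Every constant and name here (`settle_round`, `REWARD_RATE`, `SLASH_FRACTION`, and so on) is hypothetical; Mira’s actual hybrid consensus, reward formula, and delegator mechanics are not modeled.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical parameters, chosen only for illustration.
REWARD_RATE = 0.01     # reward per agreeing vote, as a fraction of current stake
SLASH_FRACTION = 0.10  # share of stake removed when a node is slashed
MAX_DEVIATION = 0.5    # tolerated share of votes that disagree with consensus
MIN_ROUNDS = 10        # rounds observed before a node can be slashed


@dataclass
class Node:
    stake: float
    rounds: int = 0      # rounds in the current observation window
    deviations: int = 0  # votes in the window that disagreed with consensus


def settle_round(nodes: Dict[str, Node], votes: Dict[str, bool], consensus: bool) -> None:
    """Reward votes that match consensus and slash nodes that deviate too often."""
    for name, vote in votes.items():
        node = nodes[name]
        node.rounds += 1
        if vote == consensus:
            node.stake += node.stake * REWARD_RATE
        else:
            node.deviations += 1
        if node.rounds >= MIN_ROUNDS and node.deviations / node.rounds > MAX_DEVIATION:
            node.stake -= node.stake * SLASH_FRACTION
            node.rounds = node.deviations = 0  # start a fresh observation window


if __name__ == "__main__":
    nodes = {"honest": Node(stake=100.0), "contrarian": Node(stake=100.0)}
    for _ in range(10):
        settle_round(nodes, {"honest": True, "contrarian": False}, consensus=True)
    for name, node in nodes.items():
        print(f"{name}: stake {node.stake:.2f}")
```

After ten rounds the honest node has compounded to roughly 110.46 while the contrarian has been slashed to 90. The sketch also exposes the weakness raised above: a node that is right while the majority is wrong is penalized just like a careless one, and a colluding majority effectively defines the consensus it is paid to match.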

Another tension lies in scalability. Real-world AI systems churn through countless tokens every minute. Mira’s own reported metrics indicate billions of tokens processed daily across integrated applications and millions of daily verification requests, which suggests a meaningful level of throughput. But verification is inherently more work than generation alone: you are not just producing an output, you are checking each piece of it against a distributed verdict. So questions about latency, peak-load behavior, and cost efficiency (especially when this infrastructure is used in time-sensitive environments like financial decision engines) are real. It’s the same kind of concern traders have when evaluating order execution systems: a verification layer does not help if it introduces intolerable delays or unpredictable bottlenecks.

Crypto Briefing
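A rough way to reason about those latency and cost questions is sketched below. It assumes the verifiers for a claim run in parallel and a round can close as soon as a quorum has answered in agreement, so the best case is the quorum-th fastest response rather than the slowest one; it also counts one model call per claim-verifier pair. The function names and sample latencies are hypothetical, not Mira measurements.

```python
from typing import List


def quorum_latency_ms(latencies_ms: List[float], quorum: int) -> float:
    """Best-case time for one claim: the moment the quorum-th fastest verifier responds.

    If those first responses disagree, the round must wait for more, so this is a lower bound.
    """
    if not 1 <= quorum <= len(latencies_ms):
        raise ValueError("quorum must be between 1 and the number of verifiers")
    return sorted(latencies_ms)[quorum - 1]


def output_latency_ms(per_claim_latencies_ms: List[List[float]], quorum: int) -> float:
    """With all claims verified in parallel, the output waits for its slowest claim."""
    return max(quorum_latency_ms(lat, quorum) for lat in per_claim_latencies_ms)


def verification_calls(num_claims: int, verifiers_per_claim: int) -> int:
    """Model invocations needed to verify one output: one per (claim, verifier) pair."""
    return num_claims * verifiers_per_claim


if __name__ == "__main__":
    # Hypothetical response times (ms) from five verifiers for two claims.
    claims = [[120, 340, 95, 800, 410], [200, 150, 2500, 180, 260]]
    print("output latency:", output_latency_ms(claims, quorum=4), "ms")
    print("model calls for a 12-claim answer with 5 verifiers:", verification_calls(12, 5))
```

Even with a 2,500 ms straggler, the output in this toy case waits about 410 ms because the quorum never needs the slowest verifier. The cost side is less forgiving: a 12-claim answer checked by five verifiers means 60 model calls on top of the single generation pass, which is the cost-efficiency concern in concrete terms.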

What also sets Mira apart from many crypto narratives is that its design choices are shaped by problems that exist outside crypto and its volatility. Its applications extend to education, fintech, chat interfaces, and other platforms that cannot tolerate high error rates. The goal isn’t to supplant AI models but to sit alongside them as a trust scaffold. In that sense, it acknowledges both its necessity and its limitations. It does not claim to end AI errors, only to reduce their frequency and make them transparent. That humility is refreshing, because many projects lean heavily on promises of perfection or inevitability.

Blockmedia

Yet, as with any infrastructure layer, the question of adoption looms large. A protocol like this only becomes meaningful if it is widely integrated and relied upon by significant ecosystems. Adoption hinges on developers embedding it into their stacks, on enterprises trusting this verification layer enough to build mission-critical applications upon it, and on users valuing the added layer of transparency enough to insist on its use. And here, the network effect matters more than technical elegance. You can build a verification system that, in theory, strengthens reliability, but without real usage and integration it remains an architectural curiosity rather than a structural solution.

CoinLaunch

Looking at how Mira presents itself today, I don’t see the typical breathless claims. I see an attempt to grapple with a real structural issue in the AI ecosystem — the gap between impressive output and trustworthy output. The design embraces decentralization not for its own sake, but as a means to distribute verification risk and reduce reliance on a single point of failure. It applies economic incentives to encourage honest contribution and uses cryptographic certificates to enhance auditability. These are not cosmetic decisions; they are attempts to address specific problems with real consequences in automated reasoning systems.

Messari

That said, I remain cautiously curious rather than convinced. A layer like this must prove itself over time in varied conditions — under load, across domains with different error sensitivities, and in the face of deliberate manipulation attempts. Decentralized infrastructures are notoriously uneven in their early phases, with participation concentrated among a few and with shifting security assumptions. But the problem space Mira targets — making AI outputs verifiable without central authorities — is undeniably structural. It resonates with the market’s broader struggle to balance automation with accountability, speed with correctness, and decentralization with coherent governance.

Blockmedia

In the end, understanding a project like Mira is less about assessing its token mechanics or its hype cycle and more about observing whether it earns trust by providing meaningful, observable mitigation of real-world risks. That is the benchmark that matters to someone who has seen narrative after narrative fade: does the solution address friction points that people and systems cannot easily work around? Mira’s focus on reliability, consensus, and transparency touches on those points. Whether it ultimately becomes a widely accepted layer in AI ecosystems or remains an interesting experiment will depend on execution over time, integration across diverse platforms, and network effects that extend beyond crypto enclaves into the broader digital economy. For now, I find myself watching with genuine interest, not because I expect miracles, but because the problem it tackles is one we cannot afford to ignore.

@Mira - Trust Layer of AI #Mira