There’s a subtle moment that few traders admit to. It isn’t tied to a massive loss or an obvious error. Instead, it’s the quiet recognition that a choice was influenced by information that appeared reliable but wasn’t entirely accurate. In fast-moving markets, significant damage often doesn’t come from blatant misinformation. It stems from minor inaccuracies delivered with unwavering certainty.

That realization has reshaped how I think about Mira Network.

The broader conversation around AI is relentless—more intelligent systems, faster responses, improved reasoning. Yet beneath that momentum lies an unresolved structural issue: AI models frequently produce answers that sound authoritative even when certain elements are incorrect. Not dramatically incorrect—just slightly off. And in financial markets, “slightly off” can be extremely costly.

A misquoted statistic can alter valuation assumptions. A weak causal link can shift positioning. An outdated data point can distort risk calculations. AI-generated responses often bundle multiple claims—figures, timelines, definitions, correlations—into a single fluid explanation. When even one piece is flawed, the entire argument can subtly lean in the wrong direction.

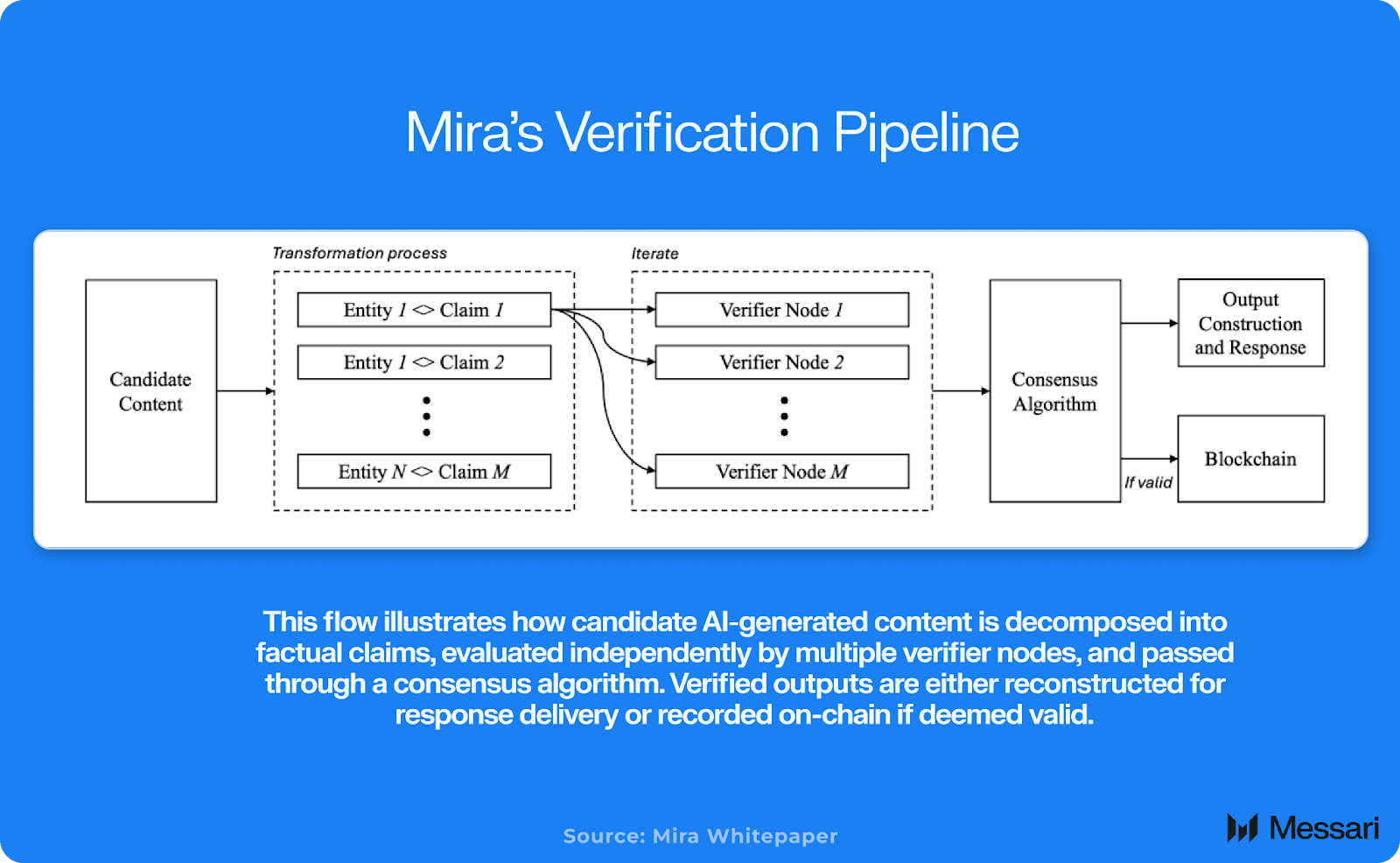

Mira’s approach doesn’t revolve around building a more advanced standalone model. Instead, it focuses on transforming how AI outputs are validated.

Rather than treating a single model’s response as definitive, the system reportedly separates outputs into distinct claims and distributes them to independent verifiers. These validators assess specific components, and the final response is constructed from aggregated consensus. Conceptually, this reframes AI from a “confident narrator” into a system designed for verification.
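To make the idea concrete, here is a minimal sketch of claim-level verification with a consensus threshold. It is not Mira's actual protocol: the sentence-based claim splitter, the toy verifier functions, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier type: takes a claim, returns True if it looks supported.
Verifier = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive splitter (assumption): treat each sentence as one claim."""
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_answer(
    answer: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> Dict[str, bool]:
    """Accept a claim when the share of approving verifiers meets the threshold."""
    results = {}
    for claim in split_into_claims(answer):
        votes = Counter(v(claim) for v in verifiers)
        results[claim] = votes[True] / len(verifiers) >= threshold
    return results


# Toy verifiers (hypothetical): two reject a specific figure, one accepts everything.
def figure_checker_a(claim: str) -> bool:
    return "12%" not in claim


def figure_checker_b(claim: str) -> bool:
    return "12%" not in claim


def lenient(claim: str) -> bool:
    return True


answer = "Revenue grew 12% last year. The company was founded in 2010."
results = verify_answer(answer, [figure_checker_a, figure_checker_b, lenient])
for claim, accepted in results.items():
    print(f"{'ACCEPT' if accepted else 'REJECT'}: {claim}")
```

The point of the sketch is that a single doubtful figure can be flagged without discarding the rest of the answer, which is what separates claim-level checking from accepting or rejecting a response wholesale.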

On paper, that shift appears nuanced. In application, it could be meaningful.

Financial markets didn’t evolve simply because participants formed stronger opinions. They matured because infrastructure—clearing, auditing, reconciliation—became dependable. Verification mechanisms turned promises into enforceable actions. A similar dynamic may unfold in AI. If generative systems become embedded within trading operations, compliance reviews, reporting systems, and research pipelines, some form of accountable validation becomes increasingly necessary.

The compelling dimension is how this plays out economically and within token markets.

When a project positions itself as infrastructure rather than a consumer-facing application, the value thesis changes. Success becomes less dependent on retail enthusiasm and more reliant on recurring functional demand. If verification integrates into workflows where mistakes are financially expensive, usage could become structural rather than cyclical.

However, meaningful challenges remain.

Agreement among validators does not automatically guarantee correctness. If multiple verifiers share similar data sources or systemic blind spots, they can collectively reinforce flawed conclusions. Decentralization reduces reliance on a single source but does not eliminate shared bias.
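A rough calculation shows why shared blind spots matter. The sketch below compares how often a majority vote is wrong when verifiers err independently versus when they sometimes all lean on the same flawed source. The mixture model and the 10% error rate are illustrative assumptions, not measurements from any real network.

```python
from math import comb


def majority_error_independent(n: int, p: float) -> float:
    """Probability that a strict majority of n independent verifiers is wrong,
    when each verifier is wrong with probability p."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def majority_error_shared(n: int, p: float, rho: float) -> float:
    """Toy mixture model (assumption): with probability rho all verifiers rely on
    one shared source and err together with probability p; otherwise they err
    independently."""
    return rho * p + (1 - rho) * majority_error_independent(n, p)


independent = majority_error_independent(5, 0.1)
half_shared = majority_error_shared(5, 0.1, 0.5)
fully_shared = majority_error_shared(5, 0.1, 1.0)
print(f"independent: {independent:.5f}")
print(f"half shared: {half_shared:.5f}")
print(f"fully shared: {fully_shared:.5f}")
```

Under these assumptions, five independent verifiers push the majority error rate well below 1%, while fully shared sources leave it at the single-verifier rate of 10%. Adding validators helps only to the extent that their errors are uncorrelated.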

Operational efficiency presents another concern. Verification layers introduce additional computation, cost, and latency. In environments where speed is paramount, participants may prioritize rapid execution over precision. If verification slows processes or raises costs significantly, adoption may lag until a costly mistake forces reconsideration. Ultimately, integration will hinge not on philosophical appeal but on seamless workflow compatibility.
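The trade-off can be framed as a simple break-even check: verification is worth its overhead when the expected loss it avoids exceeds what each check costs, including the cost of waiting. The function and every number below are hypothetical, meant only to show the shape of the decision.

```python
def verification_pays_off(
    error_rate: float,
    error_rate_verified: float,
    loss_per_error: float,
    cost_per_check: float,
    latency_cost: float = 0.0,
) -> bool:
    """Return True when the expected loss avoided per decision exceeds the
    per-decision cost of verification (fee plus an assumed cost of delay)."""
    expected_saving = (error_rate - error_rate_verified) * loss_per_error
    return expected_saving > cost_per_check + latency_cost


# Illustrative numbers only: a research workflow where errors are expensive...
research_desk = verification_pays_off(0.02, 0.005, 1000.0, 5.0)
# ...versus a latency-sensitive flow where errors are cheap and delay is costly.
fast_execution = verification_pays_off(0.02, 0.005, 100.0, 5.0, latency_cost=2.0)
print(research_desk, fast_execution)
```

With these inputs the check passes for the first workflow and fails for the second, which matches the intuition that adoption is likely to start where mistakes are costly and speed is less critical.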

From a market standpoint, the real question becomes measurable traction.

Infrastructure-focused tokens typically reprice when usage metrics become visible and consistent. Indicators such as verification request volume, fee generation, staking engagement, and sustained network activity matter more than narratives. Attention may initiate momentum, but lasting valuation expansion generally requires demonstrable demand.

Supply structure also deserves scrutiny, particularly for early-stage tokens. If substantial portions of supply remain locked, scheduled unlocks can influence price behavior. Even strong rallies can encounter resistance if markets anticipate additional issuance. Long-term appreciation requires organic demand robust enough to absorb structural supply increases.
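The dilution effect is simple arithmetic. The sketch below computes how much each scheduled unlock adds to circulating supply in percentage terms. The supply figures are made up for illustration and do not describe any real token's schedule.

```python
from typing import List


def dilution_schedule(circulating: float, unlocks: List[float]) -> List[float]:
    """Return the percentage increase in circulating supply caused by each
    unlock, applied in order."""
    increases = []
    for amount in unlocks:
        increases.append(100 * amount / circulating)
        circulating += amount  # the unlocked tokens join circulating supply
    return increases


# Hypothetical example: 200M tokens circulating, then unlocks of 20M and 22M.
increases = dilution_schedule(200.0, [20.0, 22.0])
print(increases)  # [10.0, 10.0]
```

In this example each unlock expands supply by 10%, so demand would need to grow by roughly the same proportion over the same period just to keep the price flat, all else being equal.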

This leaves the outlook open rather than settled, with two broad scenarios worth considering.

In the constructive scenario, verification demand expands steadily, integrations multiply, staking participation deepens, and the network begins to resemble a quality-control layer for AI systems rather than a speculative concept. Under favorable market conditions, valuation frameworks could gradually shift from narrative-based to usage-based, potentially supporting higher valuation multiples.

The less favorable scenario is familiar in emerging sectors. Verification remains an appealing idea but struggles to secure widespread adoption. Costs remain high, latency noticeable, and competing—perhaps more centralized—solutions gain traction because implementation is simpler. In that case, the token’s price action may be driven more by attention cycles than underlying fundamentals.

The divergence between these outcomes won’t be determined by marketing materials or social discussions. It will appear in usage data and price structure over time.

What makes this sector compelling isn’t hype—it’s structural necessity. As AI systems integrate deeper into financial, operational, and governance frameworks, the consequences of unverified outputs intensify. The more authority AI assumes, the more expensive its small mistakes become.

And small mistakes are often the most dangerous.

An AI confidently presenting an incorrect figure rarely causes immediate drama. It doesn’t generate headlines. But multiplied across thousands of decisions—across desks, research teams, and automated processes—the cumulative cost grows quietly.

If networks like Mira succeed, it won’t be due to futuristic branding. It will be because they address a recurring and practical issue at scale: ensuring that confident outputs are genuinely accurate.

Markets tend to reward systems that reduce unseen friction. They also penalize stories that fail to translate into measurable usage. Observing which direction this category ultimately takes will matter far more than debating whether AI verification is theoretically important.

Conceptually, its importance is evident.

In reality, only sustained demand will determine the outcome.
