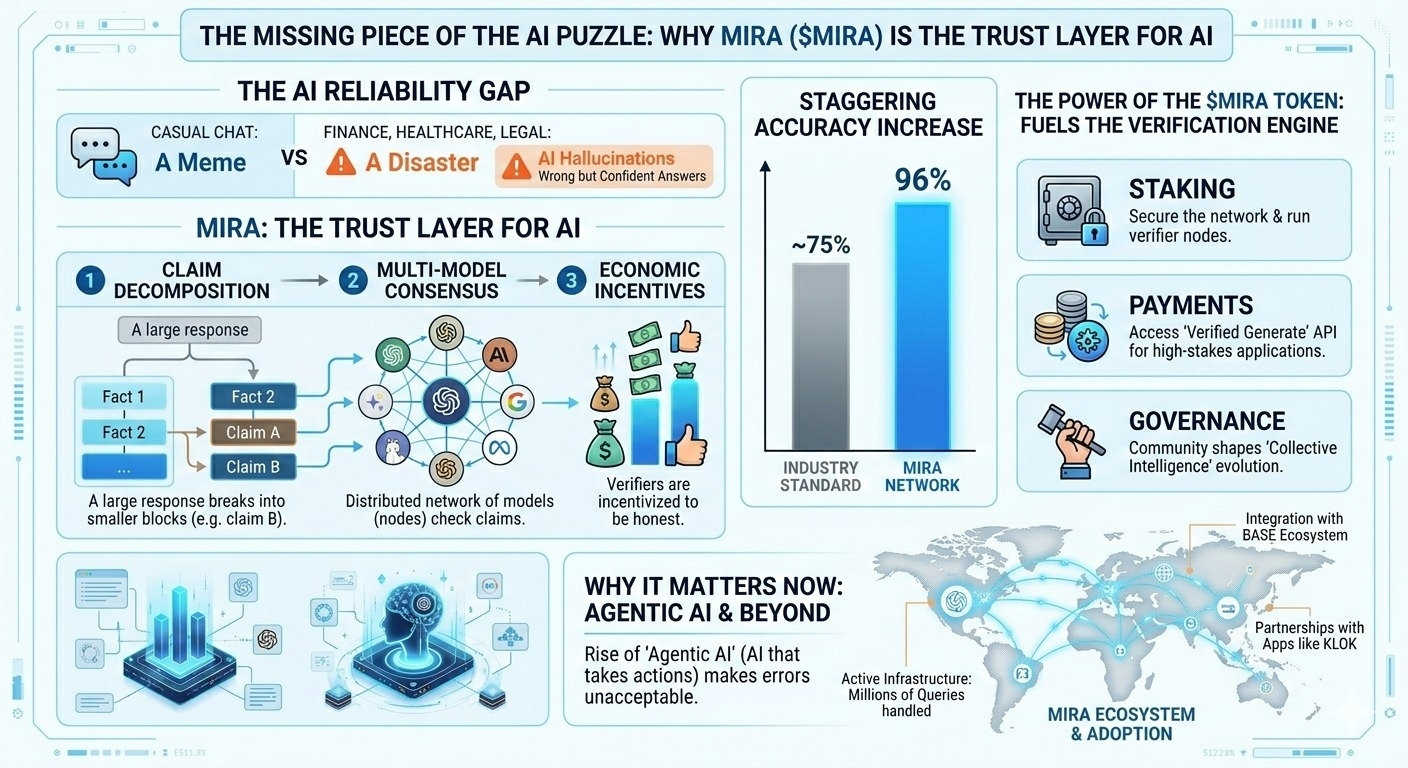

We’ve all been there: you ask an AI for a factual answer, only for it to "hallucinate" something that sounds incredibly confident but is completely wrong. In the world of casual chat, it’s a meme. In the world of finance, healthcare, or legal tech, it’s a disaster.

This is exactly why I’ve been diving deep into @Mira - Trust Layer of AI. While everyone else is busy building the next "wrapper" for existing models, Mira is doing the heavy lifting of building a trust layer for AI.

What makes Mira actually different?

Instead of just hoping an AI model is right, Mira uses a decentralized infrastructure to verify its outputs.

Here’s the "human-readable" breakdown:

Claim Decomposition: It breaks down complex AI responses into small, verifiable claims.

Multi-Model Consensus: These claims are checked by a distributed network of different AI models (nodes).

Economic Incentives: Verifiers are economically incentivized to be honest. The network as a whole reportedly reaches 96% accuracy, compared to the ~75% industry standard.
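To make the first two steps concrete, here is a minimal Python sketch of decomposition followed by a consensus vote. It is an illustration under stated assumptions, not Mira's actual protocol or API: the function names, the sentence-splitting decomposition, the simple-majority rule, and the toy verifiers are all hypothetical. The last helper shows why voting can help at all, assuming each verifier is independently correct with the same probability.

```python
from math import comb
from typing import Callable, Dict, List, Set

# Hypothetical verifier type: takes a claim, returns True if it judges the claim correct.
Verifier = Callable[[str], bool]


def decompose(response: str) -> List[str]:
    """Naive claim decomposition (assumption): treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def consensus(claim: str, verifiers: List[Verifier]) -> bool:
    """Accept a claim only if a strict majority of verifiers approve it."""
    approvals = sum(1 for verify in verifiers if verify(claim))
    return approvals * 2 > len(verifiers)


def verify_response(response: str, verifiers: List[Verifier]) -> Dict[str, bool]:
    """Map every extracted claim to its consensus verdict."""
    return {claim: consensus(claim, verifiers) for claim in decompose(response)}


def majority_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent verifiers,
    each correct with probability p, reaches the right verdict."""
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


def make_verifier(accepted: Set[str]) -> Verifier:
    """Toy stand-in for a model: approves only claims in its own accepted set."""
    return lambda claim: claim in accepted


# Three toy verifiers; the second one wrongly accepts a false claim.
verifiers = [
    make_verifier({"Paris is the capital of France"}),
    make_verifier({"Paris is the capital of France", "The Moon is made of cheese"}),
    make_verifier({"Paris is the capital of France"}),
]

result = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.", verifiers
)

if __name__ == "__main__":
    print(result)
    print(majority_accuracy(0.75, 3))
```

In this toy run the false claim is rejected because only one of three verifiers accepts it. Under the independence assumption, three verifiers that are each right 75% of the time give a majority verdict that is right about 84% of the time. That is only an illustration of the voting effect and says nothing about how Mira's reported figures were measured.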

The Power of the $MIRA Token

The $MIRA token isn't just a ticker; it’s the literal fuel for this verification engine.

It’s used for:

Staking: Securing the network and running verifier nodes.

Payments: Accessing the "Verified Generate" API for high-stakes applications.

Governance: Allowing the community to shape how this "collective intelligence" evolves.

Why it matters now

With the rise of "Agentic AI" (AI that can actually take actions), we can't afford errors. Mira’s integration with ecosystems like Base and its partnership with apps like Klok show that this isn't just a whitepaper dream. It's active infrastructure handling millions of queries.

If you believe that the future of AI isn't just about being "smart," but about being reliable, keep an eye on this one. The "AI Reliability Gap" is real, and $MIRA is the first project I've seen with a credible, decentralized solution to bridge it.