Right now, the artificial intelligence industry has a serious centralization problem. If a healthcare application or a financial trading bot relies entirely on one centralized AI model from a single corporation, it is taking on a huge amount of risk. What happens if that model goes down, hallucinates a bad medical diagnosis, or spits out a biased financial metric? It is a single point of failure that high-stakes industries simply cannot afford.

This is exactly why I am paying so much attention to the enterprise infrastructure side of this project. Developers building high-stakes applications don't want to build their own verification systems from scratch, because it is too expensive and technically complex. Instead, they can plug the official SDK and Enterprise APIs from @Mira - Trust Layer of AI directly into their apps.

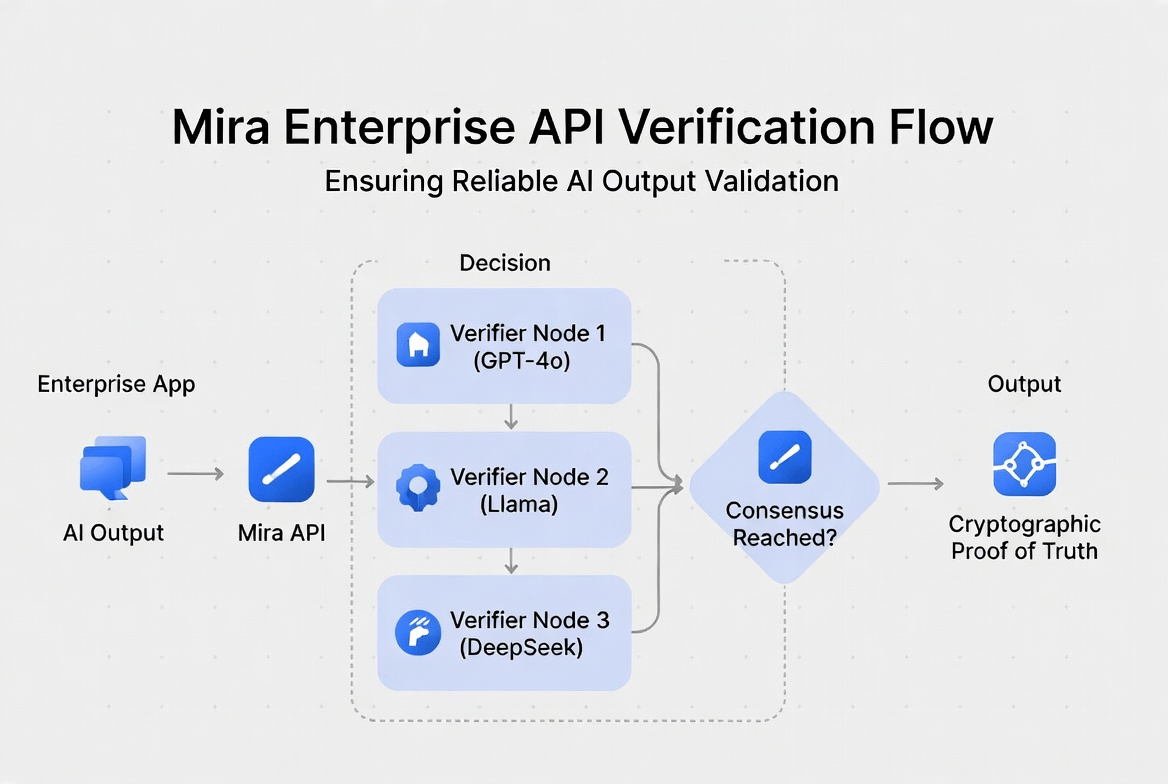

By doing this, an enterprise app instantly gets access to a decentralized "Trust Layer." When the app generates an AI response, it doesn't blindly trust a single source. The API automatically routes the response through a decentralized network of independent verifier nodes running completely different models. They evaluate the claims in parallel, and the system only outputs a cryptographic proof of truth when those independent models actually agree with each other.
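
To make the consensus idea concrete, here is a minimal Python sketch of it. This is not the Mira SDK or API: every name in it (`verify_claim`, `Verdict`, the `threshold` parameter, and the three toy `model_*` verifiers) is made up for illustration, and the SHA-256 digest is only a stand-in for whatever cryptographic proof the real network produces.

```python
import hashlib
import json
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, List, Optional

# A verifier is any callable that takes a claim and returns True/False.
# In a real network these would be independent nodes running different models.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    votes: List[bool]
    verified: bool
    digest: Optional[str]  # stand-in for a proof; None when there is no consensus


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 1.0) -> Verdict:
    """Ask every verifier in parallel and accept the claim only on consensus."""
    if not verifiers:
        raise ValueError("at least one verifier is required")

    # Evaluate the claim on all verifiers concurrently.
    with ThreadPoolExecutor(max_workers=len(verifiers)) as pool:
        votes = list(pool.map(lambda verifier: verifier(claim), verifiers))

    # Fraction of verifiers that accepted the claim.
    agreement = sum(votes) / len(votes)
    verified = agreement >= threshold

    digest = None
    if verified:
        # Hypothetical "proof": a hash over the claim and the votes.
        payload = json.dumps({"claim": claim, "votes": votes}, sort_keys=True)
        digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()

    return Verdict(claim=claim, votes=votes, verified=verified, digest=digest)


# Three toy verifiers that reach their answers in different ways.
def model_a(claim: str) -> bool:
    return claim.lower() in {"paris is the capital of france"}


def model_b(claim: str) -> bool:
    text = claim.lower()
    return "paris" in text and "france" in text


def model_c(claim: str) -> bool:
    return claim.lower().startswith("paris")


if __name__ == "__main__":
    nodes = [model_a, model_b, model_c]
    print(verify_claim("Paris is the capital of France", nodes))
    print(verify_claim("Paris is the largest city in France", nodes))
```

With the default `threshold=1.0`, one dissenting verifier is enough to block the claim, so the second call above comes back unverified and without a digest. Lowering the threshold trades strictness for availability, which is a design choice I am assuming here rather than something the project has documented.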

This removes the need for a human in the fact-checking loop. It allows developers to build autonomous, reliable AI tools for the real world without worrying about corporate monopolies or random AI hallucinations ruining their product. The infrastructure is live, it is processing millions of queries weekly, and it is setting the standard for how AI should be integrated safely. $MIRA #Mira