Mira Network and the Infrastructure for Verifiable AI

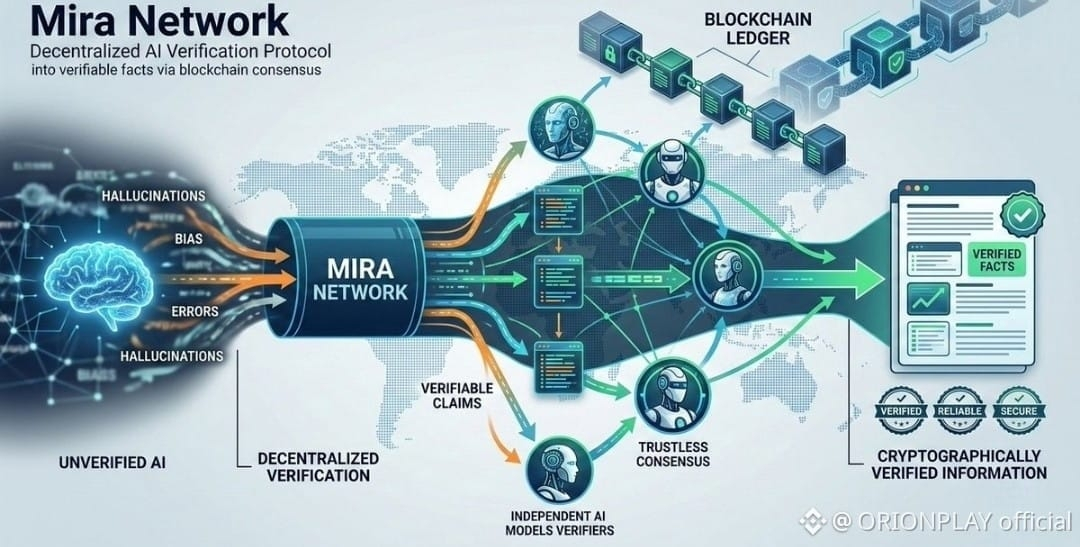

Artificial intelligence is increasingly used to generate answers, analysis, and decisions, yet the systems behind it often operate without a reliable way to prove whether those answers are correct. Confidence is easy for machines to produce; verification is much harder. Mira Network approaches this gap by focusing on the infrastructure of trust around AI outputs rather than simply improving the models themselves.

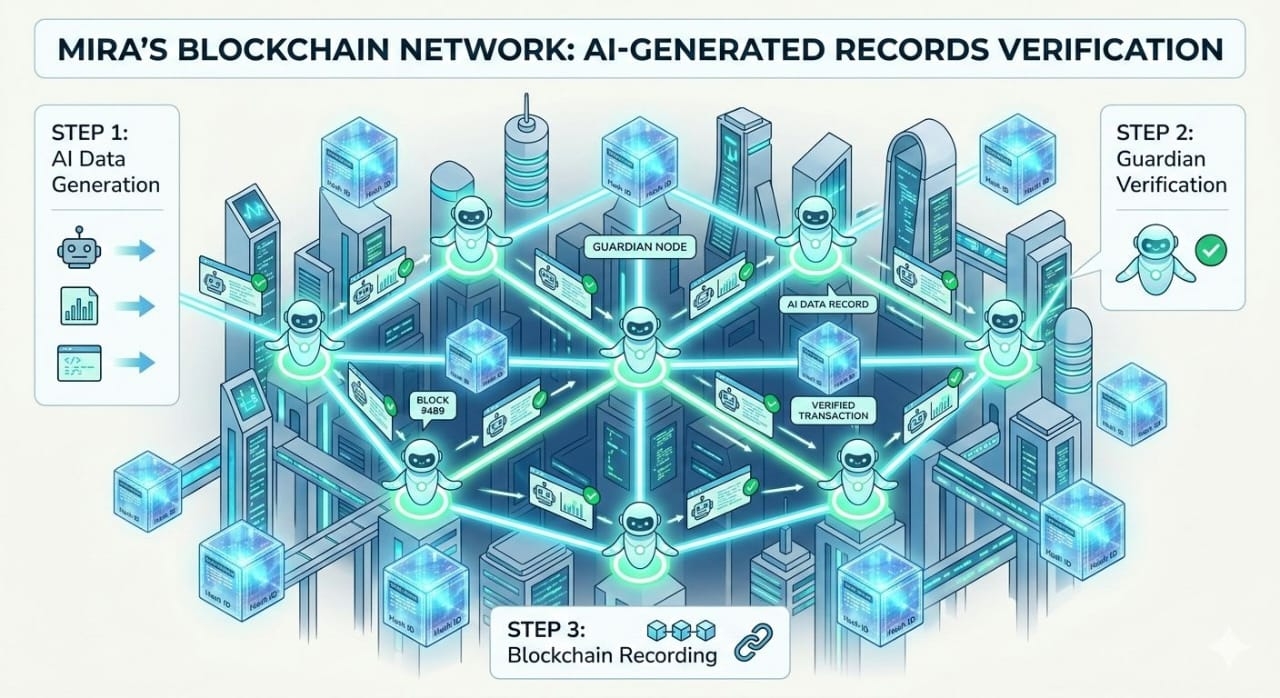

The protocol treats AI responses as collections of individual claims instead of a single final statement. Each claim can be examined, challenged, and validated across a decentralized network of independent AI models and verifiers. By distributing this evaluation process, the system reduces reliance on any single model and replaces blind trust with a process closer to structured consensus.
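To make the claim-by-claim idea concrete, here is a minimal Python sketch of decomposing a response and checking each claim against a panel of verifiers. It is not Mira's implementation. The sentence-level splitting, the two-thirds supermajority threshold, and the verifier functions are all assumptions chosen for illustration, with simple Python functions standing in for independent AI models.

```python
import re
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List

# A verifier judges one claim as true or false. In this sketch these are
# hypothetical stand-ins for independent AI models, not a real Mira API.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    accepted: bool


def split_into_claims(response: str) -> List[str]:
    """Naive decomposition (an assumption): treat each sentence as one claim."""
    # Split only where sentence punctuation is followed by whitespace,
    # so numbers like "3.5" stay intact.
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [s.rstrip(".!?").strip() for s in sentences if s.strip()]


def verify_response(response: str, verifiers: List[Verifier],
                    threshold: Fraction = Fraction(2, 3)) -> List[ClaimResult]:
    """Accept a claim only if at least `threshold` of the verifiers agree."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in split_into_claims(response):
        votes = sum(1 for verify in verifiers if verify(claim))
        # Exact fractions avoid floating-point edge cases at the threshold.
        accepted = Fraction(votes, len(verifiers)) >= threshold
        results.append(ClaimResult(claim, votes, len(verifiers), accepted))
    return results


if __name__ == "__main__":
    known_facts = {"Paris is the capital of France"}

    def careful(claim: str) -> bool:
        return claim in known_facts

    def contrarian(claim: str) -> bool:
        return claim not in known_facts

    # Two well-behaved verifiers and one that always disagrees with them.
    panel = [careful, careful, contrarian]
    response = "Paris is the capital of France. The Moon is made of cheese."
    for r in verify_response(response, panel):
        print(f"{r.claim!r}: {r.votes_for}/{r.votes_total} accepted={r.accepted}")
```

Splitting on sentence boundaries is deliberately crude; a production system would need a model to extract atomic, independently checkable claims. The supermajority rule is one common way to express consensus, and the threshold here is a tunable parameter rather than a documented protocol value.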

Blockchain infrastructure plays a central role in coordinating this verification layer. Every step of the validation process can be recorded on-chain, creating a transparent record of how an answer was tested and confirmed. The network’s token provides the economic mechanism behind this system, rewarding accurate verification while discouraging unreliable contributions. In this way, incentives align with the goal of producing information that can withstand scrutiny.
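The record-keeping and incentive side can be sketched in the same hedged way. The example below uses a hash-chained list as a stand-in for an on-chain log and a simple stake-based settlement rule: verifiers who vote with the consensus earn a fixed reward, and those who vote against it lose a fraction of their stake. The `Ledger` class, the `settle` function, and the reward and slashing parameters are hypothetical and do not describe Mira's actual token economics.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev: str, payload: str) -> str:
    return hashlib.sha256((prev + payload).encode()).hexdigest()


@dataclass
class Ledger:
    """Append-only, hash-chained log: a stand-in for an on-chain record."""
    entries: List[dict] = field(default_factory=list)

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = json.dumps(record, sort_keys=True)
        digest = _digest(prev, payload)
        # Store a copy so later changes to the caller's dict are not silently shared.
        self.entries.append({"record": json.loads(payload), "prev": prev, "hash": digest})
        return digest

    def is_valid(self) -> bool:
        """Recompute every hash; any edited past record breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            if entry["prev"] != prev or entry["hash"] != _digest(prev, payload):
                return False
            prev = entry["hash"]
        return True


def settle(stakes: Dict[str, float], votes: Dict[str, bool], consensus: bool,
           reward: float = 1.0, slash_rate: float = 0.1) -> Dict[str, float]:
    """Hypothetical rule: reward agreement with consensus, slash disagreement."""
    updated = dict(stakes)  # leave the caller's stakes untouched
    for node, vote in votes.items():
        if vote == consensus:
            updated[node] += reward
        else:
            updated[node] -= updated[node] * slash_rate
    return updated


if __name__ == "__main__":
    stakes = {"a": 100.0, "b": 100.0, "c": 100.0}
    votes = {"a": True, "b": True, "c": False}
    # Two-thirds supermajority, computed with integers.
    consensus = sum(votes.values()) * 3 >= 2 * len(votes)
    stakes = settle(stakes, votes, consensus)

    ledger = Ledger()
    ledger.append({"claim": "Paris is the capital of France",
                   "votes": votes, "consensus": consensus})
    print(stakes)
    print(ledger.is_valid())
```

Because each entry's hash covers the previous hash, editing any past record breaks the chain and `is_valid` returns `False`. That tamper evidence is what makes a public record of verification steps auditable, while the settlement rule shows one simple way rewards and penalties can push verifiers toward careful, honest votes.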

What emerges is a framework where AI does not operate as an unquestioned authority but as a participant in a system that demands evidence. Models generate outputs, the network evaluates them, and consensus determines which information earns credibility. This structure is especially important as AI moves into environments where errors carry real financial or operational consequences.

Mira Network is ultimately attempting to turn AI responses from confident guesses into statements that carry verifiable proof of reliability.