Right now, using AI on sensitive data is a privacy nightmare. Every time a hospital uses AI to review a patient's medical history, or a business uses it to read a private legal contract, it takes a massive risk: it hands highly sensitive, private information over to centralized servers it does not control.

This is exactly why high-stakes industries have been so slow to adopt AI. They simply cannot afford the security risk. But @Mira - Trust Layer of AI has built a solution directly into its infrastructure, called a Privacy-Preserving Architecture.

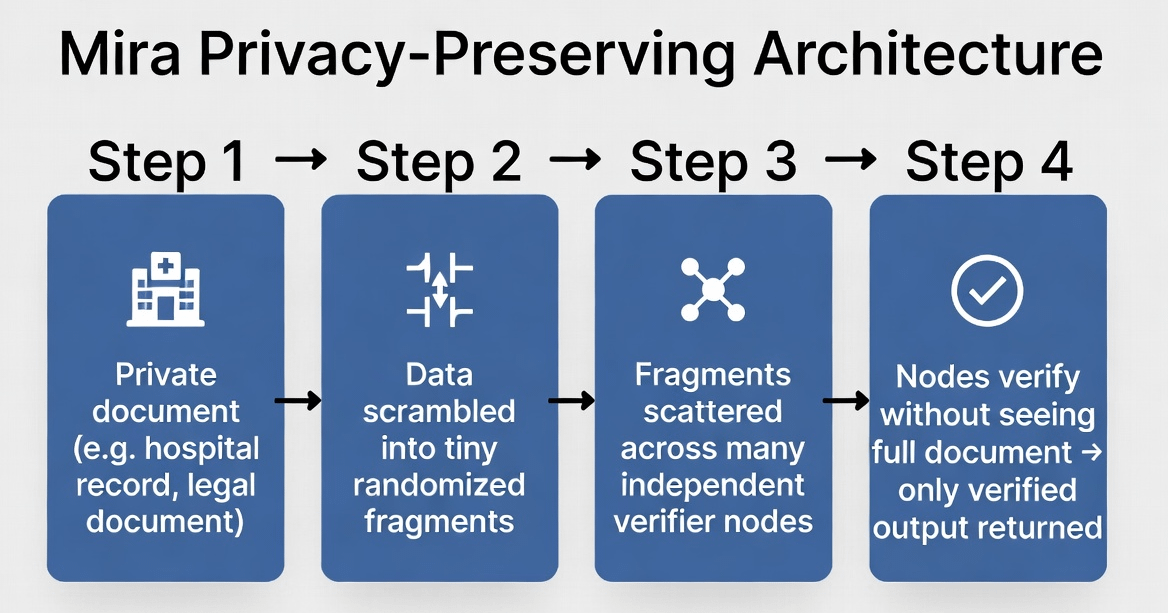

Instead of sending your entire private document to a single server for verification, the network splits it up. It breaks the complex information down into tiny fragments and randomizes them.
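To make the fragmenting step concrete, here is a minimal sketch in Python. It is only an illustration, not Mira's actual implementation: the function name `fragment_document`, the sentence-level split, and the `seed` parameter are all assumptions made for the example.

```python
import random
import re


def fragment_document(text, seed=None):
    """Split text into sentence-level fragments and shuffle their order.

    Hypothetical helper: the real network may fragment very differently.
    Returns a list of (original_index, fragment_text) pairs. The index is
    meant to stay with the document owner so results can be matched back
    to the original later.
    """
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    fragments = list(enumerate(sentences))
    # Shuffle so the order of fragments no longer reveals document structure.
    random.Random(seed).shuffle(fragments)
    return fragments
```

Calling `fragment_document("Patient is 54. Dosage is 10 mg. Follow up in May.")` returns three small pieces in a random order, each of which says very little on its own.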

These fragmented pieces are then scattered across a decentralized network of independent verifier nodes. Because the data is chopped up and randomly distributed, no single node operator can ever reconstruct or read your complete original document. They only see a tiny, context-less fragment that they need to verify.
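The scattering step can be sketched the same way. Again, this is a simplified illustration under stated assumptions, not the network's real protocol: `assign_fragments`, the node names, and the `replicas` parameter (how many nodes check each fragment) are hypothetical.

```python
import random


def assign_fragments(fragments, node_ids, replicas=2, seed=None):
    """Spread fragments across nodes so no node receives all of them.

    Hypothetical sketch. Each fragment goes to `replicas` distinct nodes.
    As long as there are more nodes than replicas and more fragments than
    replicas, every node misses at least one fragment.
    """
    if replicas >= len(node_ids):
        raise ValueError("need more nodes than replicas")
    if len(fragments) <= replicas:
        raise ValueError("need more fragments than replicas")

    rng = random.Random(seed)
    nodes = list(node_ids)
    rng.shuffle(nodes)              # random node order
    order = list(range(len(fragments)))
    rng.shuffle(order)              # random fragment order

    assignments = {node: [] for node in nodes}
    for position, frag_index in enumerate(order):
        # Each fragment goes to a sliding window of `replicas` nodes.
        for k in range(replicas):
            node = nodes[(position + k) % len(nodes)]
            assignments[node].append(fragments[frag_index])
    return assignments
```

With six fragments, five nodes, and `replicas=2`, every fragment is checked by two different nodes, and each node only ever holds a subset of the document.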

This means a business can finally get AI outputs verified by independent nodes without exposing its proprietary data in full or violating customer privacy. Because no single operator holds the complete document, the risk of corporate data mining drops sharply. We are finally getting the infrastructure needed to use AI safely in the real world. $MIRA #Mira