I’ve noticed that the more I rely on AI in routine work, the more I treat its output as a draft rather than a destination. It’s not that the model is always wrong; it’s that I can’t quickly see where it’s weak without doing extra checking. The friction shows up in ordinary places—summaries that drop a condition, confident statements with no traceable basis, or advice that sounds reasonable but doesn’t match the underlying facts.

The core problem is that it’s hard to scale trust when generation and validation live inside the same black box. Models are trained to be coherent and helpful, not to prove each sentence they produce. If you tighten guardrails to cut hallucinations, you often narrow what the model will attempt and can concentrate bias through curation choices. If you widen coverage to reduce that skew, you usually increase variance and create more room for confident guesses. Centralized “verification layers” can help, but they often recreate a single point of authority: someone still decides which sources count, which verifiers are trusted, and how disagreement gets resolved.

It’s like letting one newsroom write the story and also choose which fact-checkers are allowed to review it.

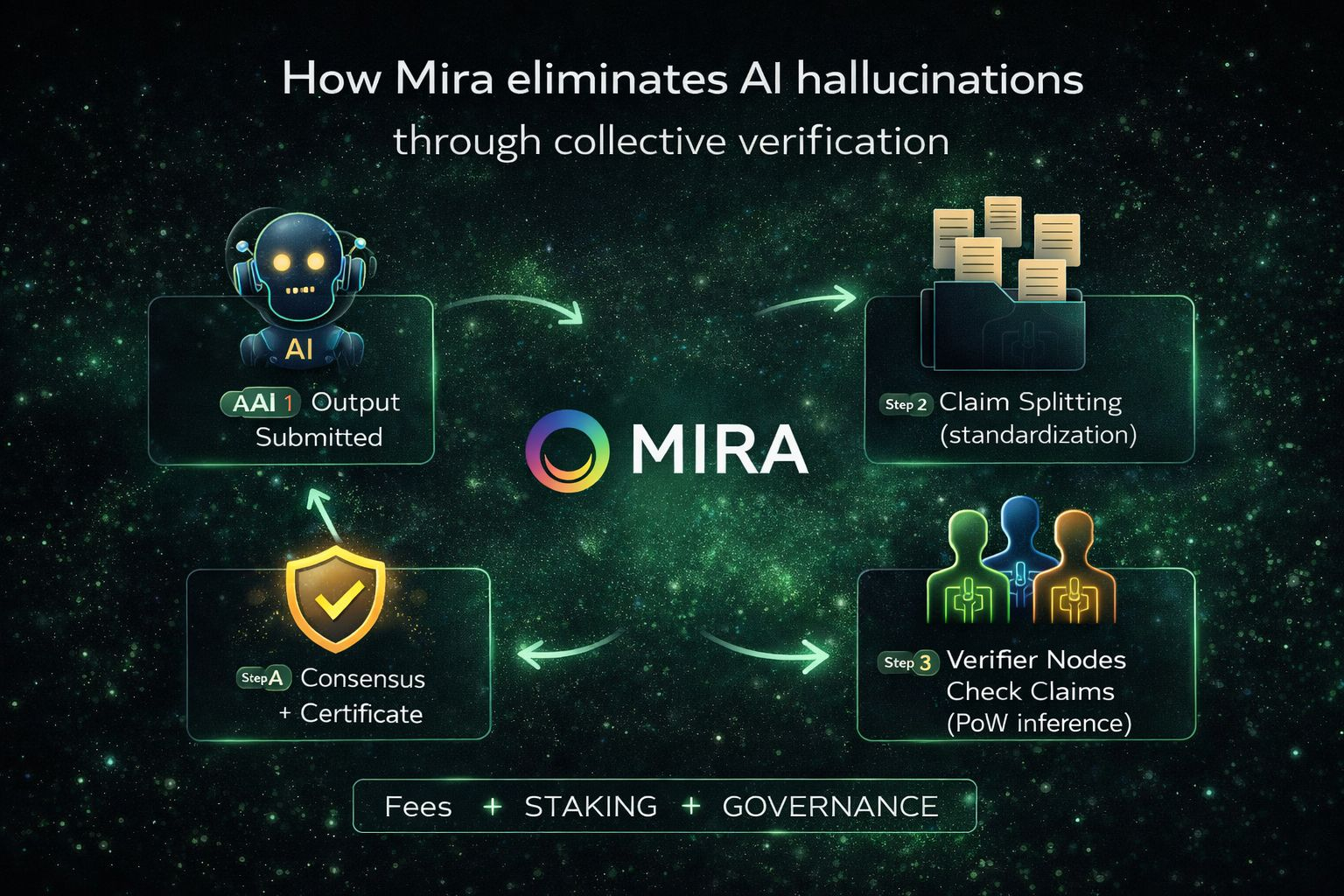

Mira approaches this by treating verification as an independent, decentralized function rather than an internal feature of one model or one curator. The main idea is to turn an output into smaller claims that can be checked consistently, then let multiple independent verifiers evaluate those claims and converge on outcomes through consensus. Instead of trusting a model’s confidence, trust shifts to an auditable process that’s designed to be expensive to manipulate.

Mechanically, the workflow begins with standardization. Free-form paragraphs are messy because two verifiers can read the same text, focus on different sub-claims, and still report “agreement” while talking past each other. The network’s transformation step breaks candidate content into discrete, verifiable claims, aiming to preserve logical relationships while making the unit of verification comparable across operators. Those claims are routed to node operators running verifier models, and each operator returns structured results that can be aggregated.

Consensus matters because the system assumes some operators will be wrong, lazy, or adversarial. A threshold rule—how much agreement is required—creates a concrete decision boundary for each claim. This fits the reliability goal because a single verifier can be fooled by a persuasive hallucination, while independent verifiers often fail differently, reducing correlated error. The design can also watch for suspicious similarity in responses, since collusion tends to look like unnatural agreement rather than random noise.

On the chain side, the excerpt reads as task-oriented infrastructure more than a general-purpose smart contract platform, and it doesn’t clearly specify whether the base state model is account-based or UTXO, or what VM (if any) executes on-chain logic. What is clearer is the lifecycle: users submit verification requests with parameters like domain and consensus threshold; the network orchestrates transformation and routing; nodes submit signed verification results; and the chain orders those submissions into a finalized, auditable record. The certificate that comes out of this flow is essentially a receipt for what was checked and what the aggregated outcome was, with finality depending on the chain’s consensus plus the assumption that honest stake isn’t overwhelmed.

Data availability and privacy sit in the middle of all this. A verification system that leaks inputs won’t get used, and one that hides everything can’t be audited. The design emphasizes sharding claim fragments so no single node can reconstruct the full original content, and minimizing what is revealed in certificates so only necessary details are exposed. The boundary between on-chain storage and off-chain handling isn’t fully described in the excerpt, so I treat the storage model as partially unspecified.

Incentives are where decentralization stops being a philosophy and becomes enforceable. Standardized verification can resemble constrained-choice inference, which makes guessing tempting unless there’s a cost to being wrong. Staking provides that cost: operators lock value to participate and face slashing if their behavior repeatedly deviates from expected honest inference patterns. Users pay fees for verification work, fees fund rewards, and governance adjusts stake requirements, slashing triggers, and consensus depth as conditions change.

Price negotiation shows up here in a neutral way as well: users and operators bargain over scarce verification capacity through fees and reward rates, allocating throughput when demand rises and compressing rewards when it falls. This isn’t about forecasting token performance; it’s about whether the system can sustain a stable cost of honesty that deters manipulation without making verification unusably expensive.

One limitation I can’t resolve from the current material is how well this approach holds up in domains where “truth” is contextual or policy-defined, especially as participation and incentives evolve.

I keep returning to the everyday habit of double-checking AI because it signals that today’s trust is still informal and manual. A decentralized verification network won’t eliminate uncertainty, but it can change what it means to rely on an output by making the path from claim to decision inspectable and economically defended. For me, the practical promise is not perfect answers, but a system where trustworthiness is a property of process, not a matter of who gets to speak the loudest.

@Mira - Trust Layer of AI $MIRA #Mira