Over the past year I’ve noticed something interesting about the intersection of artificial intelligence and crypto. Everyone talks about scaling AI models, training data, or GPU shortages. Almost nobody talks about verification. Yet if you spend enough time actually using AI systems, you start to realize the real problem isn’t intelligence — it’s trust.

AI systems generate answers with confidence even when they are wrong. Hallucinations, subtle inaccuracies, and biased outputs are not rare edge cases. They are structural problems. For casual use this might be tolerable. But the moment AI begins touching finance, research, legal systems, or autonomous infrastructure, unreliable outputs become a serious risk.

This is the context in which Mira, billed as the “Trust Layer of AI,” starts to make sense.

When I first looked into $MIRA, what stood out wasn’t a flashy narrative or a grand promise. It was a quiet observation about the future of AI: if machines are going to make decisions, their outputs must become verifiable.

Instead of treating AI responses as final answers, Mira treats them as *claims that need validation*.

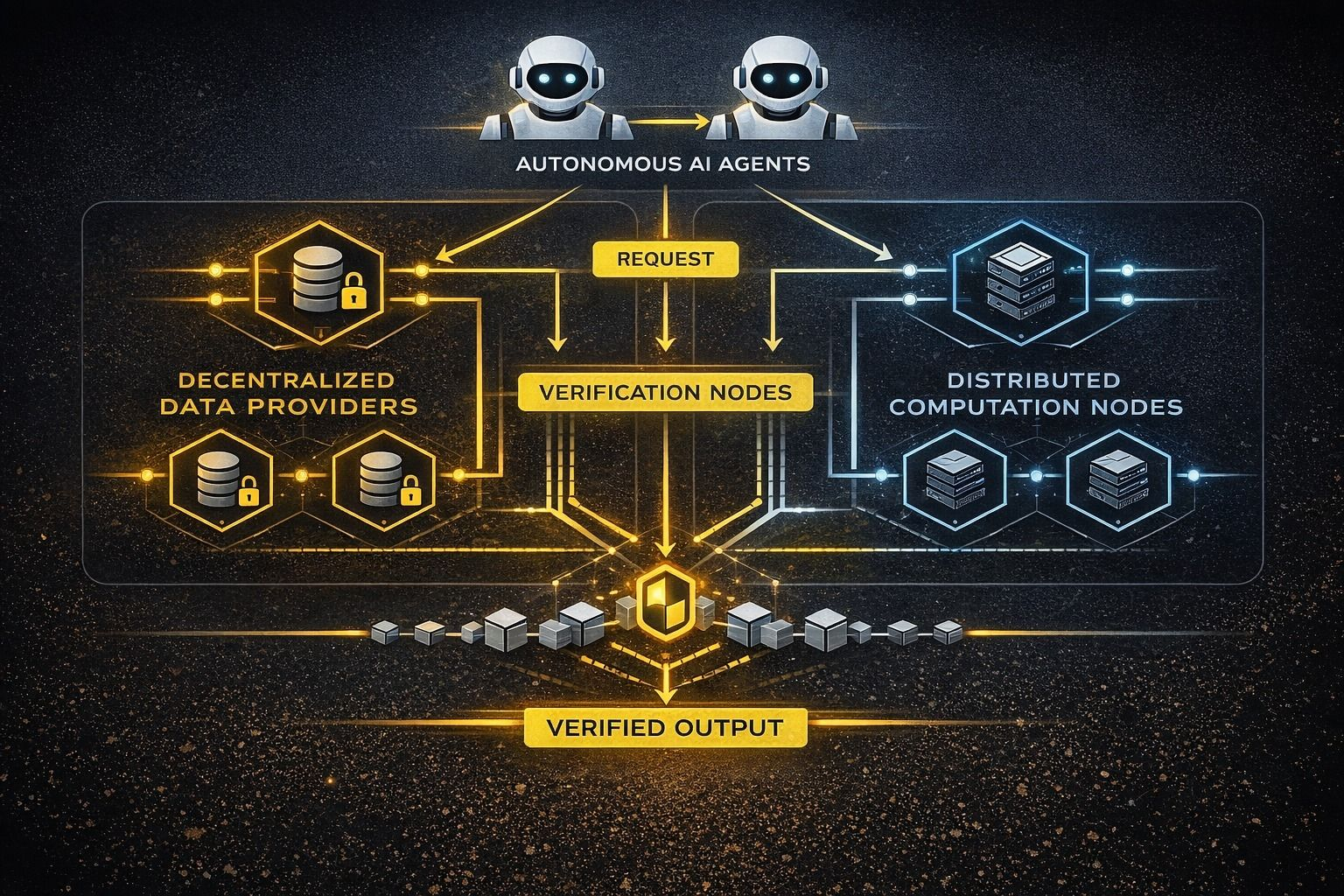

The architecture is surprisingly straightforward when you strip away the technical language. Imagine an AI model produces a complex output — a financial analysis, a research summary, or even a code snippet. Rather than accepting the output directly, Mira breaks it down into smaller statements that can be individually verified.
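To make the decomposition step concrete, here is a minimal Python sketch. It assumes a deliberately naive rule, one sentence equals one claim, purely for illustration; Mira’s actual decomposition method is not described here, and the `Claim` type and `decompose` function are hypothetical names.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement (hypothetical type, not Mira's API)."""
    claim_id: int
    text: str


def decompose(output: str) -> list[Claim]:
    """Split a model output into sentence-level claims.

    Naive heuristic for illustration only: a sentence ends at '.', '!' or '?'
    followed by whitespace.
    """
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    # Drop empty fragments (e.g. when the input string is empty).
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


# A made-up "financial analysis" output, not real data.
analysis = (
    "Revenue grew 12% year over year. "
    "Operating margin fell to 8%. "
    "The company has no outstanding debt."
)
for claim in decompose(analysis):
    print(claim.claim_id, claim.text)
```

A real decomposer would also have to split compound sentences and resolve references such as “the company” so that each claim can be judged on its own.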

Those claims are then distributed across a network of independent verification nodes. Different models or validators evaluate whether each claim is accurate. The results are aggregated through blockchain-based consensus, producing an output that is not just generated by AI, but cryptographically verified by a decentralized system.
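The aggregation step can be sketched as a vote over independent validators. In this minimal Python sketch each validator is simply a function that returns `True` or `False` for a claim, and a claim is settled only when a supermajority agrees. The two-thirds threshold, the `verify_claim` name, and the toy validators are assumptions for illustration, not Mira’s actual consensus rules.

```python
from collections import Counter
from fractions import Fraction
from typing import Callable

# A validator takes a claim and returns its verdict (hypothetical interface).
Validator = Callable[[str], bool]


def verify_claim(claim: str, validators: list[Validator],
                 threshold: Fraction = Fraction(2, 3)) -> str:
    """Poll every validator and apply a supermajority rule.

    Returns "verified", "rejected", or "no consensus". The threshold is an
    assumed parameter; Fraction keeps the comparison exact.
    """
    if not validators:
        raise ValueError("need at least one validator")
    votes = Counter(validate(claim) for validate in validators)
    n = len(validators)
    if Fraction(votes[True], n) >= threshold:
        return "verified"
    if Fraction(votes[False], n) >= threshold:
        return "rejected"
    return "no consensus"


# Toy validators: a reference lookup (used twice below) and a faulty
# validator that approves everything.
REFERENCE = {"Water boils at 100 degrees Celsius at sea level."}


def reference_checker(claim: str) -> bool:
    return claim in REFERENCE


def rubber_stamp(claim: str) -> bool:
    return True


panel = [reference_checker, reference_checker, rubber_stamp]
print(verify_claim("Water boils at 100 degrees Celsius at sea level.", panel))
print(verify_claim("Water boils at 50 degrees Celsius at sea level.", panel))
```

The faulty `rubber_stamp` validator is outvoted on the false claim, which is the point of polling several checkers instead of trusting one; the “no consensus” outcome covers claims the network cannot settle.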

In simple terms, Mira turns AI answers into something closer to *audited information*.
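One way to picture “audited information” is a record that binds the original output to its per-claim verdicts and can be checked later for tampering. The Python sketch below only computes a SHA-256 digest over a canonical JSON encoding; the record layout and function names are hypothetical, and a real network would presumably also rely on validator signatures and on-chain anchoring, which this sketch does not attempt.

```python
import hashlib
import json


def _digest(output: str, verdicts: dict[str, str]) -> str:
    """SHA-256 over a canonical JSON encoding of the output and its verdicts."""
    record = {"output": output, "verdicts": verdicts}
    # sort_keys and compact separators make the encoding deterministic.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_certificate(output: str, verdicts: dict[str, str]) -> dict:
    """Bundle an output, its per-claim verdicts, and a digest of both (hypothetical format)."""
    return {"output": output, "verdicts": verdicts, "digest": _digest(output, verdicts)}


def is_untampered(cert: dict) -> bool:
    """Recompute the digest and compare it with the stored one."""
    return _digest(cert["output"], cert["verdicts"]) == cert["digest"]


cert = make_certificate(
    "Revenue grew 12%. Margin fell to 8%.",
    {"Revenue grew 12%.": "verified", "Margin fell to 8%.": "rejected"},
)
# Flip one verdict without updating the digest.
forged = {**cert, "verdicts": {**cert["verdicts"], "Margin fell to 8%.": "verified"}}
print(is_untampered(cert), is_untampered(forged))
```

Changing any verdict changes the digest, so the forged copy fails the check. A bare hash only shows the record is unchanged; it says nothing about who attested to it.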

What I find interesting about this design is that it mirrors something that already exists in financial markets. Traders rarely trust a single data source. They cross-check signals, compare information across platforms, and look for confirmation before acting. Mira attempts to build that same process directly into AI infrastructure.

Another detail that deserves attention is the incentive layer.

Verification is not free. It requires computation, models, and coordination. Mira introduces economic incentives so that participants in the network are rewarded for performing verification tasks. Instead of relying on a centralized authority to judge correctness, the system uses economic alignment and consensus mechanisms to determine reliability.

This is where the **$MIRA** token begins to play a functional role. In systems like this, tokens are not merely speculative instruments. They help coordinate the network by rewarding validators, securing participation, and aligning incentives between users and verification providers.
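The incentive idea can be illustrated with a toy settlement round. In this minimal Python sketch, validators lock a stake; those whose vote matched consensus split a reward pool in proportion to stake, and those who voted against it lose a fixed percentage. The `settle` function, the integer token units, and the 10% slashing rate are all hypothetical, not $MIRA’s actual tokenomics.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    """A verification node with locked stake and its vote on one claim (hypothetical)."""
    name: str
    stake: int   # tokens locked, in hypothetical integer units
    vote: bool   # this node's verdict on the claim


def settle(validators: list[Validator], consensus: bool,
           reward_pool: int, slash_pct: int = 10) -> dict[str, int]:
    """Return each validator's balance change after one verification round.

    Nodes that matched consensus split reward_pool pro rata by stake; nodes
    that voted against it lose slash_pct percent of their stake. Integer
    division may leave a few reward units undistributed.
    """
    if any(v.stake <= 0 for v in validators):
        raise ValueError("every validator needs a positive stake")
    agreeing_stake = sum(v.stake for v in validators if v.vote == consensus)
    changes: dict[str, int] = {}
    for v in validators:
        if v.vote == consensus:
            changes[v.name] = reward_pool * v.stake // agreeing_stake
        else:
            changes[v.name] = -(v.stake * slash_pct // 100)
    return changes


round_votes = [
    Validator("node-a", stake=600, vote=True),
    Validator("node-b", stake=300, vote=True),
    Validator("node-c", stake=100, vote=False),
]
print(settle(round_votes, consensus=True, reward_pool=90))
# prints {'node-a': 60, 'node-b': 30, 'node-c': -10}
```

Making careless or dishonest verification cost more than honest verification pays is what lets the network operate without a central referee.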

Of course, this approach also introduces trade-offs.

Verification layers add latency. AI systems are valued for speed, but verification requires additional steps. If every output must pass through multiple validators before reaching final consensus, verified answers arrive more slowly than raw model responses.
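A back-of-the-envelope model shows where the extra time comes from. This Python sketch assumes validators are queried in parallel, that a round completes once a quorum of them has replied, and that consensus adds a fixed finality delay; the function name and every number in the example are made up for illustration, not measurements of Mira.

```python
def verified_latency_ms(generation_ms: float, validator_ms: list[float],
                        quorum: int, finality_ms: float) -> float:
    """End-to-end latency of a verified answer under a simple parallel model.

    Assumes all validators are queried at once after generation finishes and
    the round closes as soon as `quorum` of them have replied, followed by a
    fixed consensus/finality delay.
    """
    if not 1 <= quorum <= len(validator_ms):
        raise ValueError("quorum must be between 1 and the number of validators")
    # The quorum-th fastest reply is the moment enough votes exist.
    quorum_ms = sorted(validator_ms)[quorum - 1]
    return generation_ms + quorum_ms + finality_ms


# Made-up figures: 800 ms to generate, five validators, 4-of-5 quorum, 400 ms finality.
direct = 800
verified = verified_latency_ms(direct, [120, 150, 300, 900, 2000], quorum=4, finality_ms=400)
print(direct, verified)  # prints 800 2100
```

Because the round waits only for the quorum-th fastest reply, a single slow validator does not stall everything, but raising the quorum for stronger guarantees pushes latency toward the slowest node.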

There is also the question of cost. Decentralized verification networks require participants to run models, compute results, and stake economic value. The sustainability of these incentives will ultimately depend on real demand for verified AI outputs.
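The sustainability question can be framed as simple arithmetic: verification is worth paying for only when the losses it is expected to prevent exceed what it costs. The Python sketch below assumes every claim is checked by every assigned validator at a flat price per check; the function names, parameters, and figures are all hypothetical.

```python
def verification_cost(num_claims: int, validators_per_claim: int,
                      cost_per_check: float) -> float:
    """Total cost when each claim is checked independently by each assigned validator."""
    return num_claims * validators_per_claim * cost_per_check


def worth_verifying(num_claims: int, validators_per_claim: int, cost_per_check: float,
                    error_rate: float, catch_rate: float, loss_per_error: float) -> bool:
    """True if the expected loss avoided exceeds the verification cost.

    error_rate: fraction of claims that are wrong.
    catch_rate: fraction of wrong claims the network flags.
    loss_per_error: cost of acting on one undetected wrong claim.
    """
    expected_loss_avoided = num_claims * error_rate * catch_rate * loss_per_error
    return expected_loss_avoided > verification_cost(
        num_claims, validators_per_claim, cost_per_check)


# Same output (20 claims, 5 validators each, 0.002 per check) in two contexts.
print(verification_cost(20, 5, 0.002))
print(worth_verifying(20, 5, 0.002, error_rate=0.05, catch_rate=0.8, loss_per_error=10.0))   # trading signal
print(worth_verifying(20, 5, 0.002, error_rate=0.05, catch_rate=0.8, loss_per_error=0.001))  # casual chat
```

The same verification bill is trivial for a trading signal and wasteful for casual chat, which is why demand, not technology alone, decides whether the incentives hold up.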

And that raises a deeper question: will users actually care about verification?

Right now most people interact with AI casually. They accept answers without questioning them. But as AI becomes embedded in automated trading strategies, financial analysis tools, research pipelines, and governance systems, the tolerance for unreliable outputs will shrink dramatically.

Markets eventually punish uncertainty.

If an AI-driven trading system makes decisions based on hallucinated data, the cost is immediate. If an automated research pipeline spreads inaccurate claims, the consequences compound quickly. At scale, reliability becomes infrastructure.

This is the quiet thesis behind Mira.

It is not trying to build another AI model. It is trying to build a *verification layer for AI itself*.

When viewed through that lens, the network begins to resemble something closer to an auditing system for machine intelligence. Instead of asking “what did the AI say?”, the question becomes “can this output be proven reliable?”

From a market perspective, projects like this often sit in an awkward position early on. They are not consumer-facing products, and they do not produce immediate excitement like trading platforms or new Layer 1 chains. Their value only becomes obvious when the systems they secure begin scaling.

Infrastructure tends to look boring until it becomes indispensable.

What I’m watching closely is how this model evolves as AI agents become more autonomous. If machines begin interacting with financial systems, executing transactions, or coordinating with other machines, then verification layers may become a requirement rather than a feature.

And if that happens, networks designed around validating machine-generated information could become foundational pieces of the AI economy.

Right now, Mira is still at an early stage of that narrative. The architecture is ambitious and the problem is real, but adoption will ultimately determine whether verification becomes a niche service or a core layer of AI infrastructure.

Personally, I find the premise difficult to ignore. The industry has spent years trying to make machines smarter. Very few projects are asking how we make them accountable.

If AI is going to reshape digital systems the way many expect, then trust will eventually become the most valuable layer of all.