Mira Network caught my attention for a reason that has nothing to do with hype.

It wasn’t the usual crypto noise. Not the token talk. Not the loud promises. It was the simple fact that AI has started slipping into everyday decisions way faster than most people seem ready to admit, and too few people are talking about what happens when we stop checking it. That is the part that bothers me. Not the intelligence. Not the speed. The trust.

We are getting used to asking AI things and moving on as if the answer has already been cleared, tested, and confirmed. It gives a clean response, the wording feels sharp, the tone sounds sure of itself, and most people accept it in one pass. That habit is forming quietly. No big announcement. No dramatic moment. Just a slow shift in behavior. And honestly, that shift feels more dangerous than most of the loud arguments around AI.

Because AI does not need to be evil to cause damage. It only needs to be wrong in a way that sounds right.

That is what makes this uncomfortable. When a human is guessing, you can often feel it. There is hesitation. There are gaps. The tone gives something away. With AI, the mistake comes dressed like certainty. It can hand you something completely off, but the structure is neat, the language is polished, and the answer arrives without doubt. That is exactly why people trust it too fast.

And once you see that pattern, it becomes hard to ignore how risky it is becoming.

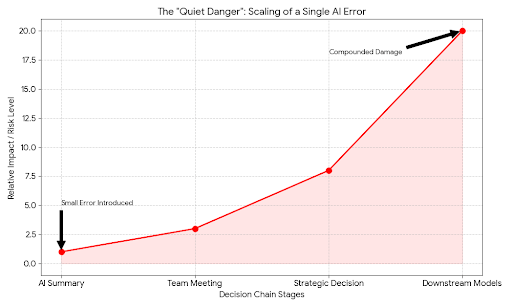

Right now, people use AI to summarize reports, draft legal notes, review contracts, scan research, write code, answer medical questions, interpret market moves, and support customer decisions. In a lot of cases, it is not replacing the final human decision, but it is shaping the direction of that decision. That still matters. A wrong answer at the start can quietly bend everything that comes after it.

Think about a team using AI to prepare a market brief before a trading day. One false figure gets inserted into the summary. Nobody catches it because the report looks clean. That bad number makes its way into a meeting, shapes a decision, feeds another model, and suddenly the original error is no longer sitting alone. It has spread into a chain. That is how these things work now. Mistakes do not stay small when machines help move them faster.

That is why Mira Network feels interesting. Not because it is claiming to build some magical perfect intelligence. Honestly, that pitch would be less interesting. What makes it worth watching is that it starts from a more realistic assumption: AI will get things wrong. Not once in a while. Repeatedly. In different ways. At different scales. So the real job is not pretending those errors will disappear. The real job is building a way to check the output before people act on it.

That framing matters.

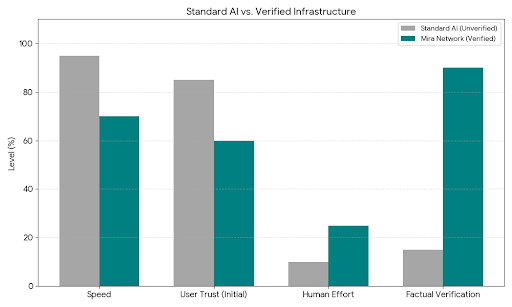

Mira is trying to build a verification layer around AI, not just another system that spits out answers and asks everyone to trust the result. The idea is simple in theory but important in practice. Instead of taking one model’s response at face value, the network breaks the output into separate claims and has those claims checked across independent validators. Different models. Different evaluators. Different points of view inside the system. Then it looks for agreement, conflict, and uncertainty. It turns one answer into something closer to a review process.
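To make the split-and-cross-check idea concrete, here is a minimal Python sketch. It is not Mira’s implementation or API: `split_into_claims`, `verify_claim`, the two-thirds threshold, and the toy validators are all hypothetical stand-ins, and splitting on sentence boundaries is a deliberately naive assumption about how claims get extracted.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical validator interface: given one claim, return True (supports it),
# False (rejects it) or None (abstains). In a real network each validator would
# be an independent model; here they are toy lookups.
Validator = Callable[[str], Optional[bool]]


@dataclass
class ClaimResult:
    claim: str
    status: str  # "verified", "rejected" or "uncertain"
    votes_for: int
    votes_against: int
    abstentions: int


def split_into_claims(answer: str) -> list[str]:
    # Naive decomposition: one claim per sentence. Real claim extraction
    # would need to be far more careful than this.
    return [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]


def verify_claim(claim: str, validators: list[Validator],
                 threshold: float = 2 / 3) -> ClaimResult:
    if not validators:
        raise ValueError("need at least one validator")
    votes = [v(claim) for v in validators]
    yes = sum(1 for v in votes if v is True)
    no = sum(1 for v in votes if v is False)
    abstain = len(votes) - yes - no
    n = len(votes)
    if yes / n >= threshold:
        status = "verified"
    elif no / n >= threshold:
        status = "rejected"
    else:
        # Validators disagree, or too many abstained to reach the threshold.
        status = "uncertain"
    return ClaimResult(claim, status, yes, no, abstain)


def verify_answer(answer: str, validators: list[Validator]) -> list[ClaimResult]:
    # Check every extracted claim independently instead of trusting the whole answer.
    return [verify_claim(c, validators) for c in split_into_claims(answer)]


def lookup_validator(table: dict[str, bool]) -> Validator:
    # Toy validator that only judges claims it has a record for.
    return lambda claim: table.get(claim)


def credulous_validator(claim: str) -> Optional[bool]:
    # Accepts anything: the "sounds right, so it is right" failure mode.
    return True


VALIDATORS: list[Validator] = [
    lookup_validator({"Water boils at 100 C at sea level.": True,
                      "The Moon is made of cheese.": False}),
    lookup_validator({"The Moon is made of cheese.": False}),
    credulous_validator,
]

if __name__ == "__main__":
    answer = ("Water boils at 100 C at sea level. The Moon is made of cheese. "
              "The report's revenue figure is correct.")
    for r in verify_answer(answer, VALIDATORS):
        print(f"{r.status:9} {r.claim}")
```

Run as a script, it marks the boiling-point claim verified, the cheese claim rejected, and the revenue claim uncertain, because the only validator willing to vouch for that last one is the validator that vouches for everything. That is the whole point of the design: one confident yes is not enough when nobody else can confirm it.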

That sounds less glamorous than building a giant model, but it feels more grounded in reality.

Because if AI is going to be used in places where mistakes actually cost something, then the missing layer is not more confidence. It is proof. Or at least a stronger attempt at proof than what most systems offer right now.

The way I see it, Mira is building around a human truth that tech people sometimes ignore: people are terrible at resisting confident delivery. If something sounds organized and immediate, we lean toward belief. We do it with experts. We do it with headlines. We do it with polished apps. AI only intensifies that weakness because it produces certainty on demand. No pause. No self-consciousness. No visible doubt. Just answer after answer after answer.

So a network that slows that process down just enough to verify the claims starts to feel less like an optional add-on and more like basic infrastructure.

That becomes even more important when you think about autonomous systems. Everyone wants to talk about agents now. AI agents handling tasks, making transactions, coordinating workflows, managing operations. Fine. Maybe some of that lands. Maybe some of it is early. But even in the practical versions of that future, one thing stays true: an agent that acts on false information is still dangerous, even if it is efficient. Fast mistakes are still mistakes. Scaled mistakes are worse.

A verification layer changes that conversation. It creates a checkpoint between generation and action. That checkpoint is boring compared to the usual AI marketing language, but boring infrastructure is often the thing that actually matters. Nobody gets excited about guardrails until they realize how expensive it is to live without them.
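The checkpoint idea can be sketched the same way. Again, this is a hypothetical illustration and not Mira’s API: `ProposedAction`, `checkpoint`, and `run_agent_step` are invented names, and the verifier is a stub returning one of three statuses ("verified", "rejected", "uncertain") that an upstream verification step might produce.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical names throughout: nothing here is Mira's actual API.
Verifier = Callable[[str], str]  # returns "verified", "rejected" or "uncertain"


@dataclass
class ProposedAction:
    description: str
    supporting_claims: list[str]


@dataclass
class GateDecision:
    allowed: bool
    unverified: list[str]


def checkpoint(action: ProposedAction, verify: Verifier) -> GateDecision:
    # Fail closed: the action proceeds only if every claim it relies on
    # came back "verified". Anything else is held for human review.
    unverified = [c for c in action.supporting_claims if verify(c) != "verified"]
    return GateDecision(allowed=not unverified, unverified=unverified)


def run_agent_step(action: ProposedAction, verify: Verifier,
                   execute: Callable[[ProposedAction], None],
                   escalate: Callable[[ProposedAction, list[str]], None]) -> bool:
    # The checkpoint sits between generation (the proposed action) and action.
    decision = checkpoint(action, verify)
    if decision.allowed:
        execute(action)
    else:
        escalate(action, decision.unverified)
    return decision.allowed


if __name__ == "__main__":
    # Stub verifier standing in for a verification network (made-up statuses).
    statuses = {"Index closed up 1.2% yesterday": "verified",
                "Company X beat earnings estimates": "uncertain"}
    verify = lambda claim: statuses.get(claim, "uncertain")
    action = ProposedAction("Increase position in Company X",
                            ["Index closed up 1.2% yesterday",
                             "Company X beat earnings estimates"])
    run_agent_step(action, verify,
                   execute=lambda a: print("executing:", a.description),
                   escalate=lambda a, bad: print("held for review:", bad))
```

The design choice worth noticing is that the gate fails closed: "uncertain" blocks the action just as firmly as "rejected" does. One caveat of this sketch is that an action listing no supporting claims passes trivially, so a real system would also have to decide which claims an action actually depends on.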

And this is bigger than tech architecture. There is a social habit underneath all of it. People are losing the reflex to verify. Search engines made information fast. AI is making it feel final. That is a different level of risk. When people stop treating answers as provisional, they become easier to mislead, even when nobody intends to mislead them. Sometimes the damage comes from deception. Sometimes it just comes from polished nonsense.

That is why this subject hits differently.

The danger is quiet.

It does not arrive like some dramatic machine uprising. It arrives through convenience. Through habit. Through repetition. Through the subtle feeling that checking is unnecessary because the answer looked complete enough. That is how trust gets outsourced. Not in one reckless moment, but in a thousand small ones.

And that is where Mira Network starts to matter. It is trying to rebuild the friction people are losing. Not by telling users to be more careful every single time, because most people will not do that consistently. It tries to place verification inside the system itself. Into the rails. Into the process. Into the part users do not have to think about every time.

That is a smarter place to build.

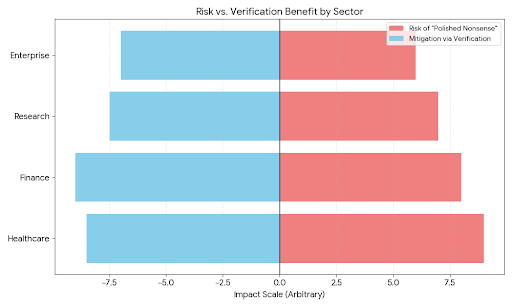

In healthcare, that kind of verification could matter because summaries and recommendations should not slide through just because they sound professional. In finance, it matters because one flawed interpretation can ripple outward very quickly. In research, it matters because fabricated citations and cleanly worded falsehoods can pass too easily when nobody checks the original material. In enterprise tools, it matters because teams increasingly trust outputs they barely inspect if the workflow feels smooth enough.

The more AI becomes part of real operations, the less acceptable casual trust becomes.

And to me, that is the real story here. Not whether Mira is trendy. Not whether AI verification becomes a flashy category. Not whether everyone suddenly starts talking about trust layers like they discovered something new. The real story is that someone is at least building around the actual weakness in the room.

People believe AI too fast.

That is the quiet danger.

Not because people are stupid. Not because the technology is useless. But because confidence travels well, and verification does not. Confidence is instant. Verification takes effort. Confidence feels modern. Verification feels slow. Confidence wins attention. Verification rarely does.

Still, the systems that last are usually the ones that respect reality instead of trying to market around it. And reality says AI will keep making mistakes. Better models may reduce some of them, sure. But as long as these systems generate language in ways that can blur confidence and correctness, the problem stays alive.

So the question is not whether AI will become more capable. It will.

The harder question is whether the systems around it will become trustworthy enough to deserve the role people are already giving them.

That is why Mira Network stands out. It is not trying to romanticize machine intelligence. It is trying to make it legible before people hand over too much trust. That is a more serious job. Less flashy. More necessary.

And honestly, that may end up being the difference between AI becoming useful at scale and AI becoming another layer of polished confusion that people only question after the damage is already done.