From the outside, everything often looks impressive. Machines are getting smarter. Systems are becoming more capable. Every few months there is another breakthrough, another demonstration, another polished glimpse of what the future is supposed to look like. But if you stay close to this world long enough, not just watching announcements but watching how these systems actually behave when they leave controlled environments and enter real life, you start noticing something far less comfortable. The problem is often not the machine itself. The problem is everything around it.

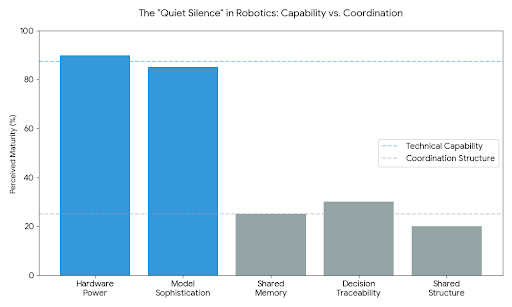

A robot may perform well. The model behind it may be sophisticated. The interface may feel smooth and convincing. But the moment these systems have to operate in places where people are accountable, where rules are uneven, where environments change without warning, and where trust matters more than anyone likes to admit, the weakness begins to show. Data ends up scattered. Decisions become difficult to trace. Responsibility turns vague at exactly the moment it should be sharp. Everyone seems busy building capability, but very few are building the shared structure needed to support that capability responsibly.

That gap has shaped more of the robotics ecosystem than people usually admit. A lot of progress in this space has been real, but a lot of it has also felt strangely isolated. One group builds hardware. Another works on models. Another handles deployment. Another appears later to think about governance or safety after enough complexity has already piled up. Each layer adds something valuable, but the whole thing often feels stitched together rather than truly coordinated. And when systems are stitched together like that, people stop relying on structure and start relying on workarounds. Private trust. Manual oversight. Extra checking. Institutional caution. Human instinct filling in the places where the system itself still feels incomplete.

That is where Fabric Protocol starts to feel meaningful.

Not because it arrives with some dramatic promise, and not because it uses language designed to sound futuristic, but because it seems to begin from a very real frustration: the recognition that robotics does not only suffer from a capability problem. It suffers from a coordination problem. More than that, it suffers from a memory problem. Systems act, teams contribute, rules evolve, models change, decisions get made, but the shared record of all this often remains weak, fragmented, or dependent on whoever happens to be closest to the process. That is not a small flaw. It is the kind of flaw that quietly limits what an entire ecosystem can become.

What makes Fabric interesting is that it does not feel like it was built by people who were only fascinated by what robots could do. It feels like it was built by people who had spent enough time seeing where the larger structure kept failing. That difference matters. When a project is born from pure excitement, it usually rushes toward possibility. When it is shaped by repeated exposure to failure, it moves differently. It becomes more careful. More deliberate. More aware of what happens when technical systems collide with human reality. Fabric has that feeling. It feels less like an abstract invention and more like the result of people asking themselves a harder question: what would it take for robots not only to become more capable, but to become part of a system that people can actually trust, govern, and build around together?

That is a much heavier question than it first appears. Because trust in robotics is not really about whether people are impressed. It is about whether they feel safe depending on something they cannot fully see. It is about whether institutions, operators, developers, and communities can all stand around the same system and believe there is enough clarity to move forward without carrying constant uncertainty in the background. Most systems still fail that test. Not loudly. Quietly. They leave people uneasy. They make users double-check what should already be clear. They make teams keep separate records because the main system is not enough. They force trust to live inside relationships instead of architecture.

And that emotional layer matters more than a lot of technical people like to admit.

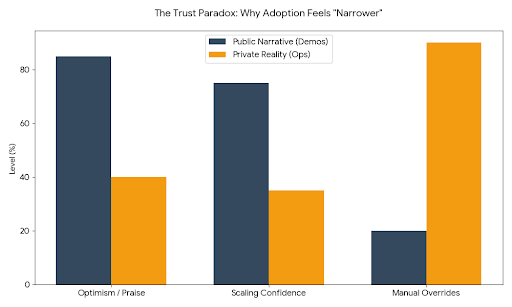

When people do not fully trust the surrounding structure, they behave differently. They become defensive. They become more conservative than they might otherwise be. They hesitate to scale what they could support in theory. Even if the machine itself is excellent, the ecosystem around it can still feel fragile. That fragility affects adoption more than any public narrative ever will. People may praise the future in public, but in private they build extra safeguards around what they do not fully trust. That is one reason so many advanced systems still feel narrower in real life than they do in demos.

Fabric seems to understand that the real challenge is not only making systems more open or more powerful. It is making them more legible. That word matters here. In complex machine environments, people need more than functionality. They need to understand what happened, who changed what, what computation was performed, what assumptions were active at the time, what rules were in force, and how all of that can be reviewed later. Without that, every collaboration remains thinner than it looks. People can coordinate for a while on good faith. They can scale for a while on discipline and internal effort. But if there is no durable shared memory underneath the system, the cost of growth starts showing up in hidden places: confusion, duplicated oversight, governance disputes, and a slow erosion of confidence.
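To make "legible" a little more concrete, here is a minimal sketch of what a single reviewable record might hold. It is an illustration only: the class name `ActionRecord` and every field name are hypothetical, chosen to mirror the questions listed above, and nothing here is taken from Fabric's actual schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Tuple
import json


@dataclass(frozen=True)
class ActionRecord:
    """One reviewable entry. Hypothetical fields, not a Fabric schema."""
    actor: str                      # who changed what
    action: str                     # what happened
    computation: str                # what computation was performed
    assumptions: Tuple[str, ...]    # assumptions active at the time
    rules_in_force: Tuple[str, ...] # rules or policy versions that applied
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys give a stable serialization that is easy to diff later.
        return json.dumps(asdict(self), sort_keys=True)


# Example values are invented for illustration.
record = ActionRecord(
    actor="operator-17",
    action="lowered speed limit for arm-3",
    computation="planner v2.4 replan",
    assumptions=("workspace clear of people",),
    rules_in_force=("site-safety-policy-2024-03",),
    timestamp="2024-01-01T00:00:00+00:00",
)
print(record.to_json())
```

The record is frozen so that it cannot be edited after the fact. A correction would be a new record, which keeps the history reviewable instead of silently rewritten.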

Fabric’s use of public infrastructure and verifiable processes matters most in that context. Not as a branding exercise. Not as an architectural flourish for its own sake. But as an attempt to create a common surface of truth in an environment where too much still depends on interpretation. In theory, many people say they want transparency. In practice, what they often want is relief. Relief from having to guess. Relief from relying on whoever happens to hold the most context. Relief from the fear that once a system becomes more autonomous, accountability becomes harder to recover. Fabric speaks to that deeper need.

That is why it feels more serious than many projects that talk about open machine ecosystems. A lot of systems use the language of openness because it sounds good. But openness in robotics is not automatically a virtue. In physical systems, openness without structure can become another form of instability. When too much is flexible too early, boundaries blur. When boundaries blur, responsibility blurs with them. A system becomes easy to extend but difficult to trust. Fabric appears more aware of that tension than most. It does not seem to treat openness as a moral pose. It treats it as something that has to be earned through design discipline.

That discipline is usually one of the clearest signs that a project understands the weight of the world it wants to enter.

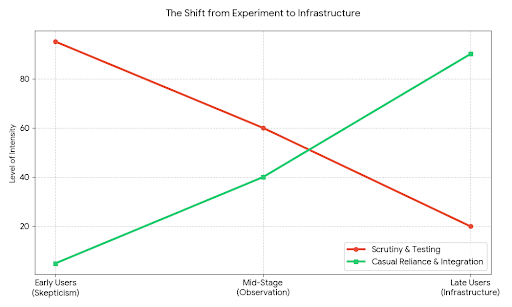

You can see it especially in how a protocol like this changes user behavior over time. Early users are rarely casual participants. They come in with skepticism. Usually they have already seen enough operational mess to understand why shared coordination matters. They have dealt with systems where changes were difficult to verify, roles were unclear, integrations were brittle, and failures were nearly impossible to reconstruct cleanly. So when they encounter something like Fabric, they do not arrive ready to believe. They arrive ready to test. They inspect behavior. They watch for consistency. They care less about polish and more about whether the system holds shape under pressure.

That kind of early participation creates a very different culture from hype-driven adoption. People are not there simply to be excited. They are there because they are tired of weak infrastructure. They want something firmer. Something that does not fall apart the moment multiple actors, multiple rules, and multiple machine decisions have to coexist. That gives the protocol a more grounded kind of beginning. Less noise. More scrutiny. Less surface enthusiasm. More real observation.

Later users, though, behave differently, and that difference says a great deal. When a system begins to mature, newer participants stop approaching it like a fragile experiment. They begin assuming continuity. They start building workflows around it. They rely on it more casually. They no longer inspect every layer with the same suspicion because they have inherited a system that already seems to remember, record, and coordinate more reliably than what came before. This is a quiet shift, but it is one of the clearest signs that a project is moving from experiment toward infrastructure.

Because real infrastructure changes behavior without announcing itself. People stop talking about the system all the time and start using it as part of normal work. They build on top of it. They trust its memory. They expect its governance to matter. They assume its integrations will hold long enough to support real operations. That is when a protocol becomes more than an idea. It becomes part of the environment.

And yet that phase is also dangerous. Once people begin depending on a system, every unresolved ambiguity becomes heavier. A flaw that seemed tolerable in an experimental setting starts creating real stress when someone’s work depends on it. A governance gap stops feeling theoretical and starts feeling unfair. A missing standard becomes a source of daily friction. Trust becomes more valuable, but also more fragile, once it turns into reliance.

This is especially true in robotics. Software-only systems can sometimes hide their weaknesses for a while. Machines operating in the physical world do not allow that luxury. The cost of poor coordination becomes visible faster. When robots are involved, confusion is not just conceptual. It can affect movement, labor, safety, permissions, access, and real human environments. That makes a shared ledger or public record more than an intellectual feature. It becomes something closer to a stabilizing force. When interpretations differ, when decisions need to be reviewed, when responsibility has to be traced, people need a common reference point that does not disappear into private silos.
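One common way to build a reference point that is hard to quietly rewrite is a hash-chained, append-only log, where each entry commits to the one before it. The sketch below assumes a single process and an in-memory list. `AppendOnlyLog` and its methods are hypothetical names, and this is not a description of how Fabric's ledger works. A real shared ledger would also need replication, signatures, and agreement between parties.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AppendOnlyLog:
    """Toy tamper-evident log: each entry's hash covers the previous hash."""

    def __init__(self):
        self.entries = []

    def append(self, payload: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = json.dumps(payload, sort_keys=True)
        digest = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
        self.entries.append({"prev": prev_hash, "body": body, "hash": digest})
        return digest

    def verify(self) -> bool:
        # Recompute the chain from the start; any edited entry breaks it.
        prev_hash = GENESIS
        for entry in self.entries:
            expected = hashlib.sha256(
                (prev_hash + entry["body"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = AppendOnlyLog()
log.append({"robot": "arm-3", "event": "entered zone B"})
log.append({"robot": "arm-3", "event": "permission granted by operator-17"})
print(log.verify())
```

If anyone later alters an earlier entry, `verify()` returns `False`, so a disagreement about what happened becomes a question that can be checked, not just argued.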

That is one reason Fabric’s architecture feels grounded in reality rather than theory. It seems to assume that disagreement is normal, that environments will be messy, that governance cannot be postponed forever, and that machine actions need to remain reviewable not because review sounds responsible, but because the world is full of edge cases no design document can perfectly predict. This is the kind of mindset that usually comes from spending time with real systems, not just elegant ideas.

And in projects like this, edge-case thinking is often more revealing than headline features. It tells you whether the builders are optimizing for spectacle or for resilience. Anyone can describe ideal outcomes. What matters is how a protocol behaves when assumptions break. What happens when one module has incomplete information? What happens when different actors disagree about authority? What happens when regulation shifts, or when machine behavior fits one rule set and violates another? These are uncomfortable questions, but serious infrastructure is built in conversation with discomfort, not in avoidance of it.

That is also why delayed features should not automatically be seen as weakness. Sometimes restraint is the strongest evidence that a project understands its own responsibility. In ecosystems that touch governance, automation, and physical systems, not every capability should be rushed into existence. Some features create long-term debt if they arrive before norms, oversight, and boundaries are ready for them. Fabric seems strongest when viewed as a system willing to let patience do some of the work. That may frustrate people who want rapid signs of expansion, but it is often patience, not speed, that keeps infrastructure from becoming fragile at scale.

Community trust forms differently around projects like this as well. It does not form because people are incentivized to say the right things. It forms because people watch how the system behaves. They watch what gets prioritized. They watch how trade-offs are handled. They notice whether language remains honest when things are still unfinished. They notice whether governance is treated as a real mechanism or just a symbolic layer. Over time those observations become culture. The community learns whether the protocol deserves confidence not through slogans, but through pattern recognition.

That kind of trust is slower, but it lasts longer. And if Fabric continues to grow, that trust will matter more than any short burst of attention ever could. Because the real measure of a protocol is not whether people visit it. It is whether they return to it. Whether they integrate deeply. Whether the system reduces friction enough that people stop seeing it as optional. Whether it helps organizations work together with less uncertainty and less manual repair. Retention in infrastructure may not look emotional on the surface, but underneath it almost always is. People stay where the burden feels lighter.

This is where integration quality becomes one of the clearest signs of health. A shallow ecosystem can collect many integrations and still remain weak. A strong ecosystem builds integrations that preserve context, reduce ambiguity, and make outputs usable across boundaries without constant human translation. Good integrations do not just connect systems. They reduce the number of things people have to carry in their heads. They reduce the fear of losing context. They reduce the need for informal workarounds. In that sense, integration quality is really about reducing invisible stress.

If there is a token involved in the ecosystem, its real purpose should be judged by that same standard of seriousness. Not whether it creates noise. Not whether it attracts attention. But whether it actually helps align long-term stewardship with governance and contribution. In a project like Fabric, a token only matters if it deepens responsibility. If it becomes a way for participants to carry belief into maintenance, decision-making, and shared accountability, then it can make sense. But if it mostly floats above the real work, then it weakens the very culture the protocol needs in order to mature.

The more interesting question is always this: does the system make people more responsible to one another over time?

That is the question serious infrastructure eventually has to answer. Because the transition from experiment to infrastructure is not really about scale alone. It is about whether the project begins to support normal life. Whether real organizations can coordinate through it without constant renegotiation. Whether operators, developers, and institutions begin to trust not just the idea of the protocol, but its actual behavior. Whether it becomes boring in the right way: stable, dependable, quietly necessary.

That kind of success rarely looks dramatic from the outside. It looks like fewer misunderstandings. Clearer records. Better continuity. Less dependence on individual gatekeepers. Less hidden anxiety in the workflow. More willingness to collaborate because the structure itself is carrying part of the trust burden. That is the kind of shift Fabric could represent if it stays disciplined enough to keep building for the real problem, not just the visible one.

And maybe that is the most important thing about it.

Fabric does not feel compelling because it promises some loud robotic future. It feels compelling because it seems to understand a quieter truth: people do not only need machines that can do more. They need systems they can live beside without constantly wondering where accountability went. They need infrastructure that remembers. Infrastructure that can be reviewed. Infrastructure that helps collaboration feel less fragile and less dependent on improvised trust.

If Fabric keeps protecting that discipline, it could become something quietly important. Not a spectacle. Not a trend. Something rarer than that.

A dependable layer that helps robots move from isolated technical achievement into shared, governable usefulness.

And if that happens, people may not describe it in grand language. They may simply notice that they no longer feel the same uncertainty they used to feel around these systems. They may find that collaboration becomes easier, disputes become clearer, and trust requires less guesswork than before.