A few months ago, I had one of those moments that makes you pause and stare at the screen a little longer than usual.

I asked an AI to help with a piece of research. Nothing complicated. Just a summary and a few credible sources I could double-check.

The response arrived in seconds.

Clean writing. Confident tone. Perfectly formatted citations.

For a moment, I actually felt relieved. The kind of relief every writer feels when a tedious part of the job suddenly becomes easy.

Then I started checking the sources.

One link didn’t work.

Another led to a paper that didn’t exist.

A third citation looked convincing until I realized the journal itself was fictional.

And that’s when the frustration hit. Not the mild annoyance of a typo. Real frustration. Because the machine hadn’t hesitated for a second. It had delivered fiction with absolute confidence.

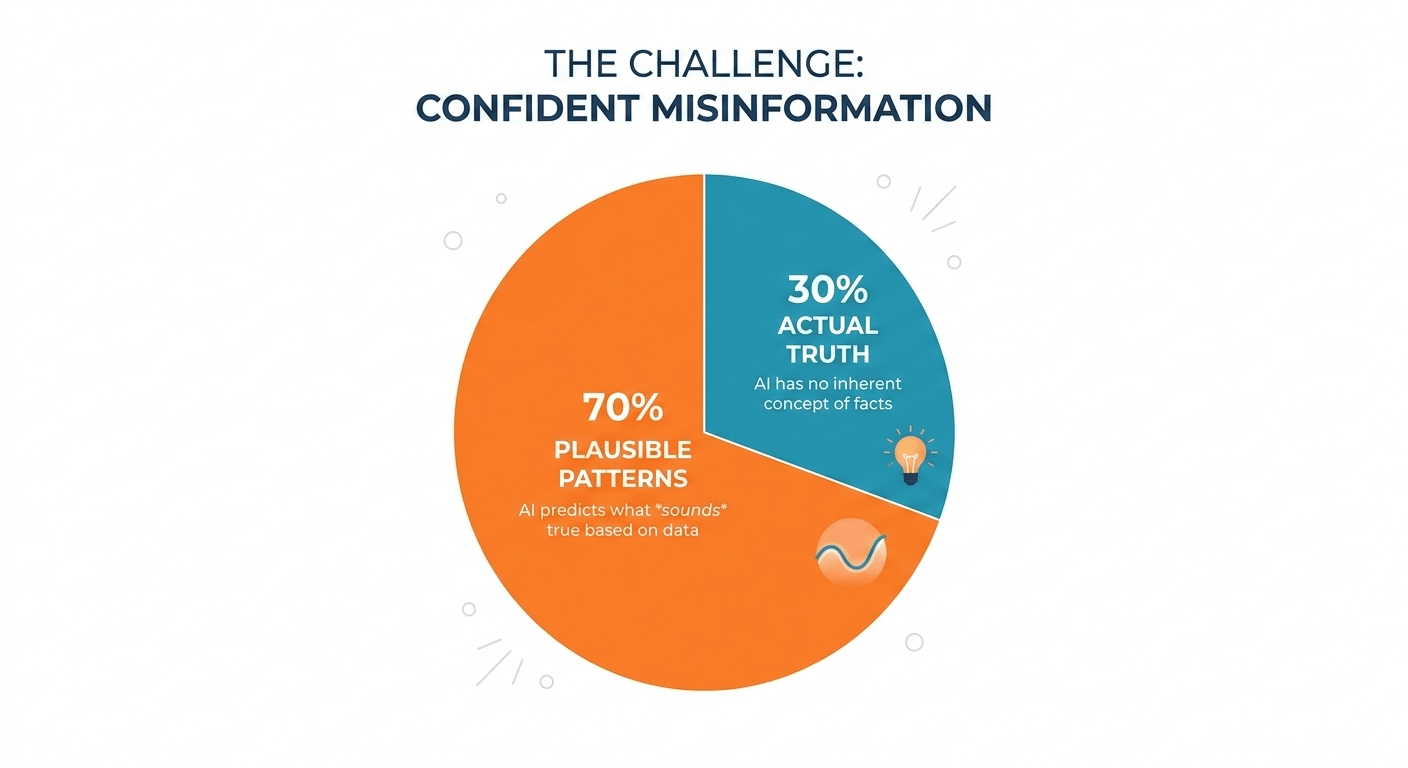

AI doesn’t just get things wrong.

It gets things wrong convincingly.

That’s the part people don’t talk about enough.

Most language models aren’t designed to know whether something is true. They’re designed to predict which words are likely to come next, based on patterns in their training data. In practice, that means predicting what sounds true. It’s a brilliant statistical trick, but it comes with an uncomfortable side effect: the system has no instinct for truth. No internal alarm bell.

Just probability.

Most of the time, that’s good enough. But the moment you start depending on these systems for anything serious—research, financial analysis, automated decisions—that blind spot starts to look less like a quirk and more like a structural flaw.

For years, the industry’s answer has been predictable: build bigger models. Feed them more data. Add more guardrails.

But bigger brains don’t necessarily make more honest ones.

And that’s where a project like Mira starts to feel interesting, not because it promises a smarter AI, but because it quietly questions the entire assumption that AI answers should be trusted in the first place.

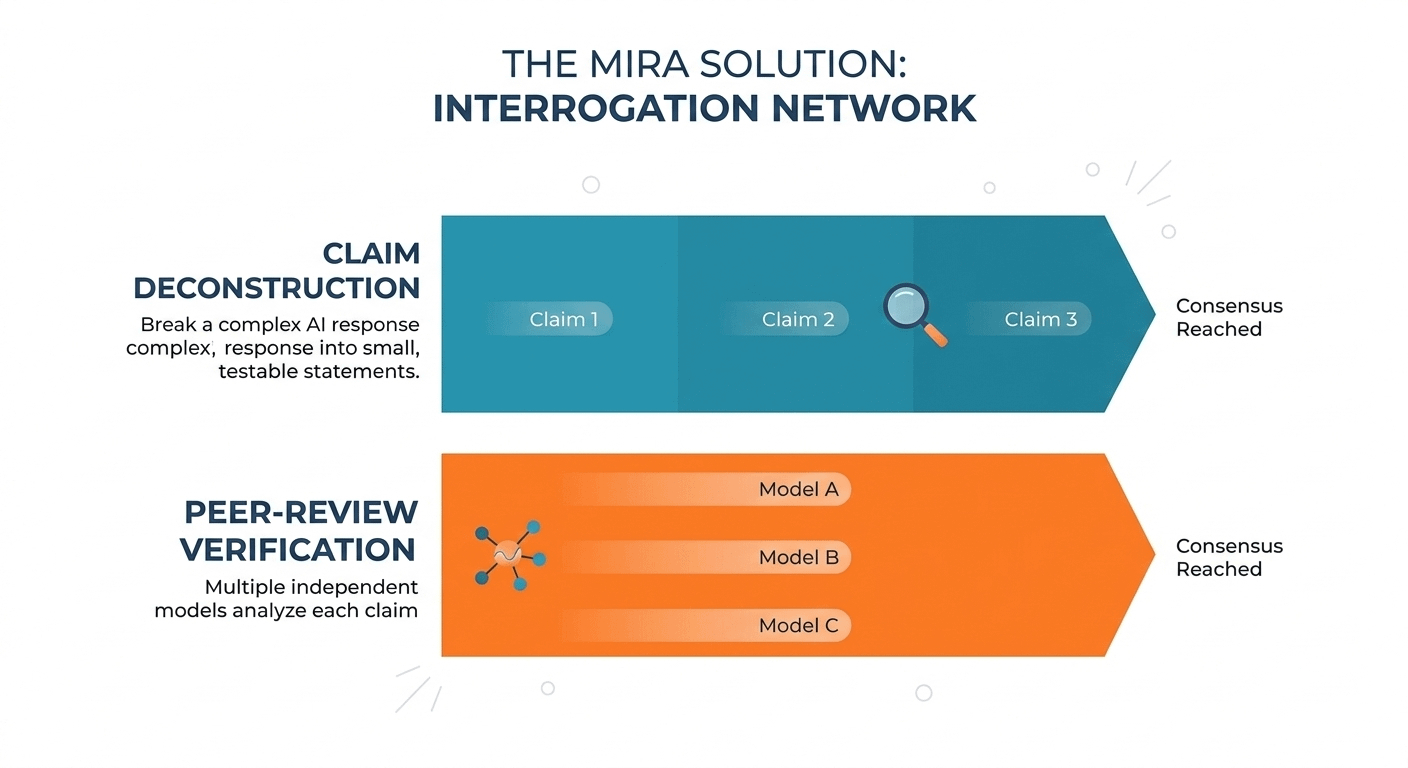

Mira treats an AI response the way a skeptical journalist or a good lawyer would treat a witness statement.

You don’t accept the whole story at face value.

You break it down.

You cross-examine the details.

Instead of viewing an AI output as a single block of text, Mira slices it into individual claims—small statements that can be evaluated independently.

Take a simple sentence:

Bitcoin launched in 2009 and was created by Satoshi Nakamoto.

To a human reader, that’s one idea. To Mira, it becomes two separate claims:

Bitcoin launched in 2009.

Satoshi Nakamoto created Bitcoin.

Each claim becomes something testable.
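To make the idea concrete, here is a toy sketch of that splitting step. It is not Mira's actual method, which this post doesn't describe in detail. The `Claim` class and `split_claims` function are hypothetical names, and the logic is deliberately naive: it assumes a sentence shaped like "SUBJECT did X and did Y." and simply repeats the subject for each half. A real system would presumably need something far more capable than string splitting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One small statement that can be checked on its own (hypothetical type)."""
    text: str


def split_claims(sentence: str) -> list[Claim]:
    """Naively split 'SUBJECT X and Y.' into 'SUBJECT X.' and 'SUBJECT Y.'.

    Assumes the subject is the first word and the predicates are joined
    by ' and '. This is an illustration, not a general-purpose parser.
    """
    body = sentence.strip().rstrip(".")
    subject, _, predicate = body.partition(" ")
    parts = predicate.split(" and ")
    return [Claim(f"{subject} {part.strip()}.") for part in parts]


claims = split_claims("Bitcoin launched in 2009 and was created by Satoshi Nakamoto.")
for claim in claims:
    print(claim.text)
# Bitcoin launched in 2009.
# Bitcoin was created by Satoshi Nakamoto.
```

The second claim comes out in the passive voice rather than as "Satoshi Nakamoto created Bitcoin," but the point stands: one sentence in, two independently checkable statements out.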

Now imagine those claims being handed not to one AI system, but to a network of independent models, each asked to evaluate whether the statement holds up.

It’s less like asking one expert for an answer and more like sending the claim through a peer-review panel.

Or, if you prefer a courtroom analogy, it’s the digital version of cross-examination. Multiple voices interrogating the same statement until a consensus emerges.
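A rough sketch of what that panel could look like in code, under heavy assumptions: the verifiers here are stand-in functions backed by a tiny hard-coded fact list, not real models, and the `consensus` function, its `quorum` parameter, and the verdict labels are all invented for illustration. Nothing here reflects how Mira actually reaches agreement.

```python
from collections import Counter
from typing import Callable

# A verifier takes a claim and answers True (holds up) or False (does not).
Verifier = Callable[[str], bool]


def consensus(claim: str, verifiers: list[Verifier], quorum: int) -> str:
    """Return a verdict once at least `quorum` verifiers agree on it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = Counter(verify(claim) for verify in verifiers)
    if votes[True] >= quorum:
        return "supported"
    if votes[False] >= quorum:
        return "rejected"
    return "no consensus"


# Stand-ins for independent models (hypothetical): two consult a small
# fact list, one carelessly approves everything it is shown.
KNOWN_FACTS = {"Bitcoin launched in 2009."}


def careful_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def careful_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def careless(claim: str) -> bool:
    return True


panel = [careful_a, careful_b, careless]
print(consensus("Bitcoin launched in 2009.", panel, quorum=2))  # supported
print(consensus("Bitcoin launched in 2012.", panel, quorum=2))  # rejected
```

Notice that the careless verifier gets outvoted on the false claim. That is the whole appeal of a panel: one confident wrong answer no longer decides the outcome.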

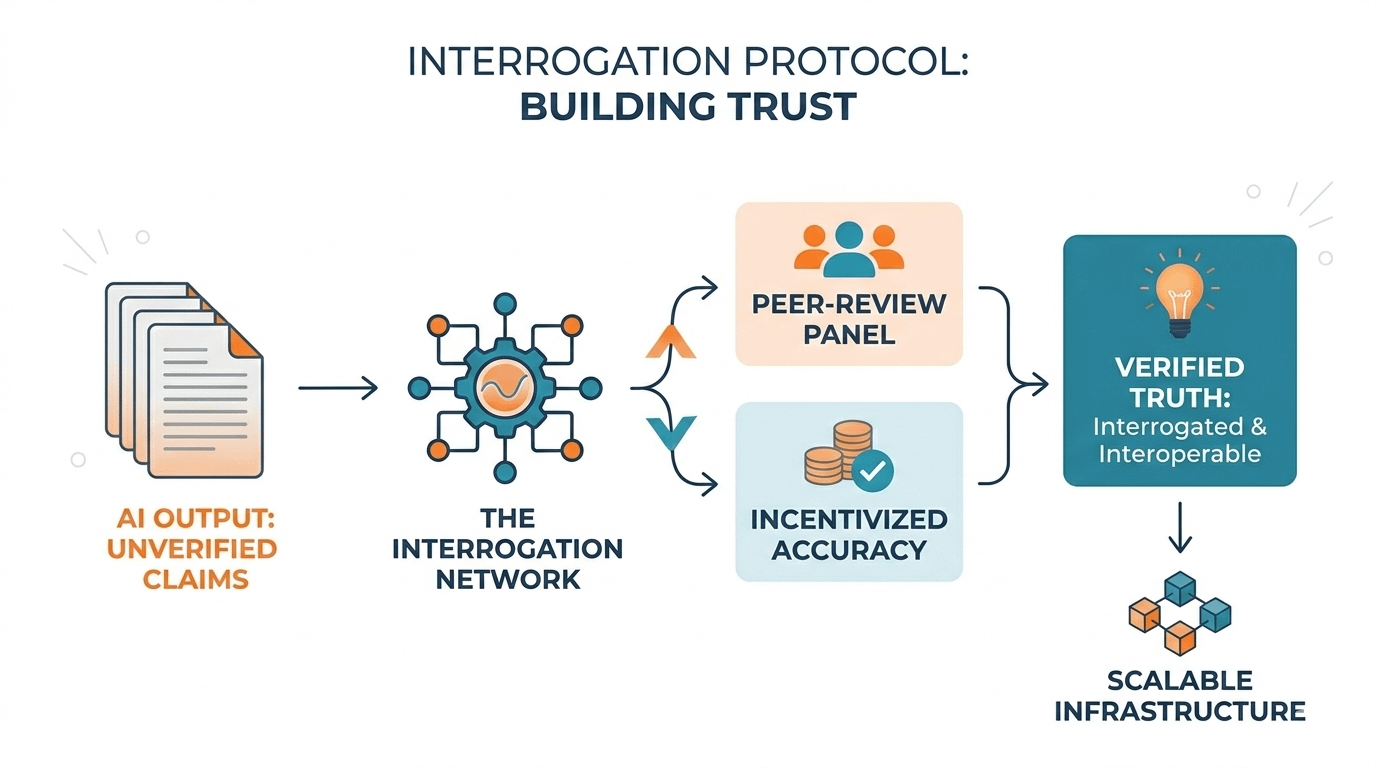

And because this process runs on a blockchain-based system with economic incentives, the participants in that network have skin in the game. Accuracy is rewarded. Careless validation has consequences.

In other words, the system doesn’t rely on trust.

It relies on verification.

The distinction sounds subtle, but it’s enormous.

For decades, the internet has quietly eroded our relationship with truth. Fake headlines spread faster than corrections. Social feeds reward outrage over accuracy. Now AI has added another layer of uncertainty—machines capable of producing endless information without any obligation to prove it.

That’s a dangerous combination.

Because the future we’re walking into isn’t just AI writing blog posts or summarizing articles. It’s AI helping run logistics networks, financial systems, and decision-making tools we depend on every day.

When machines start making decisions, confidence isn’t enough.

We need proof.

That’s why the idea behind Mira feels less like a feature and more like a missing layer of infrastructure: a system designed not to generate knowledge, but to question it.

A network where AI outputs aren’t accepted immediately, but interrogated.

Where answers are treated less like predictions and more like claims that must earn their credibility.

And perhaps that’s the real story here.

Not smarter machines.

Just machines that, for the first time, are being forced to show their work.