When I first realized what the Mira network was truly built on, what surprised me most wasn't the technology itself, but the Mira Foundation.

In the cryptocurrency space, foundations typically emerge only after a project has grown significantly, but Mira took this step early. In August 2025, the team established the Mira Foundation and invested $10 million in it. What impressed me was what the decision signals: the developers appear to be intentionally building a structure that could eventually operate independently of them.

I've seen similar initiatives in other important protocols. The Ethereum Foundation and the Uniswap Foundation share the same goal: to protect the long-term direction of the network from the short-term decisions of the initial team. Mira's early move suggests to me that its plan reaches well beyond the typical project lifecycle.

Mira also established a fund to support developers and researchers involved in the protocol's development. These initiatives make Mira seem less like a temporary product and more like an infrastructure designed to operate sustainably for many years.

As I dug deeper into the technical side, the reasons became clearer. Today's artificial intelligence (AI) is immensely capable, and that capability carries risk. Models can generate complex answers, code, or strategies in seconds, yet many of us run into the same problem: those answers can be confidently stated and still completely wrong.

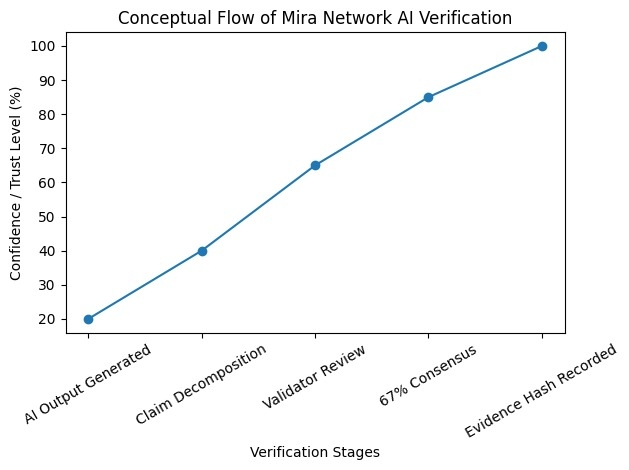

Mira takes a different approach. Instead of treating the AI's response as the final answer, its trust layer breaks each output down into smaller, verifiable claims. Each claim is then checked for accuracy by a decentralized network of validators.
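To make the idea concrete for myself, I sketched it in a few lines of Python. This is only an illustration, not Mira's actual code: the `Claim` class, `split_into_claims`, `collect_votes`, and the toy validators are all names I made up, and splitting on sentence boundaries is a crude stand-in for whatever claim extraction the real network performs.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Claim:
    """One small statement extracted from an AI output (hypothetical structure)."""
    text: str
    votes: dict = field(default_factory=dict)  # validator name -> True/False


def split_into_claims(output: str) -> list:
    """Naive assumption: treat every sentence as one verifiable claim."""
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    return [Claim(s) for s in sentences if s]


def collect_votes(claim: Claim, validators: dict) -> Claim:
    """Ask each independent validator for a verdict on the claim."""
    for name, check in validators.items():
        claim.votes[name] = check(claim.text)
    return claim


# Toy validators standing in for independent nodes (purely illustrative).
validators = {
    "node_a": lambda text: "cheese" not in text,
    "node_b": lambda text: "cheese" not in text,
    "node_c": lambda text: True,  # a careless validator that approves everything
}

answer = "Paris is the capital of France. The Moon is made of cheese."
claims = [collect_votes(c, validators) for c in split_into_claims(answer)]
for c in claims:
    print(c.text, c.votes)
```

The point of the sketch is the shape of the data: one answer becomes several claims, and each claim carries its own set of independent verdicts rather than a single pass/fail for the whole response.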

The system requires 67% consensus among validators to accept a claim. If validators disagree or find contradictions, the claim stays unaccepted until verification is complete. The final result is recorded as a hash value, which leaves a checkable record of the verification.
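Here is a minimal sketch of how a threshold check and a hashed record could fit together. The 67% figure comes from the description above; everything else is my assumption, including the function names, the record layout, and the choice of SHA-256 over canonical JSON. Mira's actual record format and hashing scheme may look quite different.

```python
import hashlib
import json
from fractions import Fraction

# 67% threshold as described in the post; Fraction avoids float rounding issues.
THRESHOLD = Fraction(67, 100)


def is_accepted(votes: dict) -> bool:
    """Accept a claim only if at least 67% of validators approved it."""
    if not votes:
        return False
    approvals = sum(1 for approved in votes.values() if approved)
    return Fraction(approvals, len(votes)) >= THRESHOLD


def record_hash(claim: str, votes: dict) -> str:
    """Hash a hypothetical verification record (assumed layout, SHA-256)."""
    record = {"claim": claim, "votes": votes, "accepted": is_accepted(votes)}
    # sort_keys gives a canonical encoding, so the same record always hashes the same.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


votes = {"node_a": True, "node_b": True, "node_c": True, "node_d": False}
print(is_accepted(votes))  # 3 of 4 approvals is 75%, above the threshold
print(record_hash("Paris is the capital of France.", votes))
```

One detail I noticed while writing this: read literally, 2 approvals out of 3 is about 66.7%, which falls just short of 67%. Whether the real network rounds or uses an exact two-thirds rule is something I could not confirm, so treat the strict comparison here as my reading only.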

When I first understood this structure, my perspective on AI systems changed. Most platforms concentrate on making AI faster or smarter. Mira's focus is different: making AI outputs verifiable.

This verification layer may become crucial as AI systems begin to interact with financial systems, search tools, and automated infrastructure. Generating information is only half the battle; proving its reliability is equally important.

In my view, Mira is less of another AI project and more of an attempt to build a trustworthy decision-making and settlement layer for AI. If the ecosystem continues to develop, this trust layer could become one of the most important components of AI infrastructure.

@Mira - Trust Layer of AI $MIRA