I noticed something interesting while using AI tools. The answers were fast, the explanations sounded confident, but sometimes, when I double-checked the information, the details didn’t fully match reality.

That experience made me realize something important: AI can generate answers quickly, but verifying those answers is a completely different challenge.

The more I explored this problem, the more I started paying attention to projects trying to solve it. One project that stood out to me was @Mira - Trust Layer of AI.

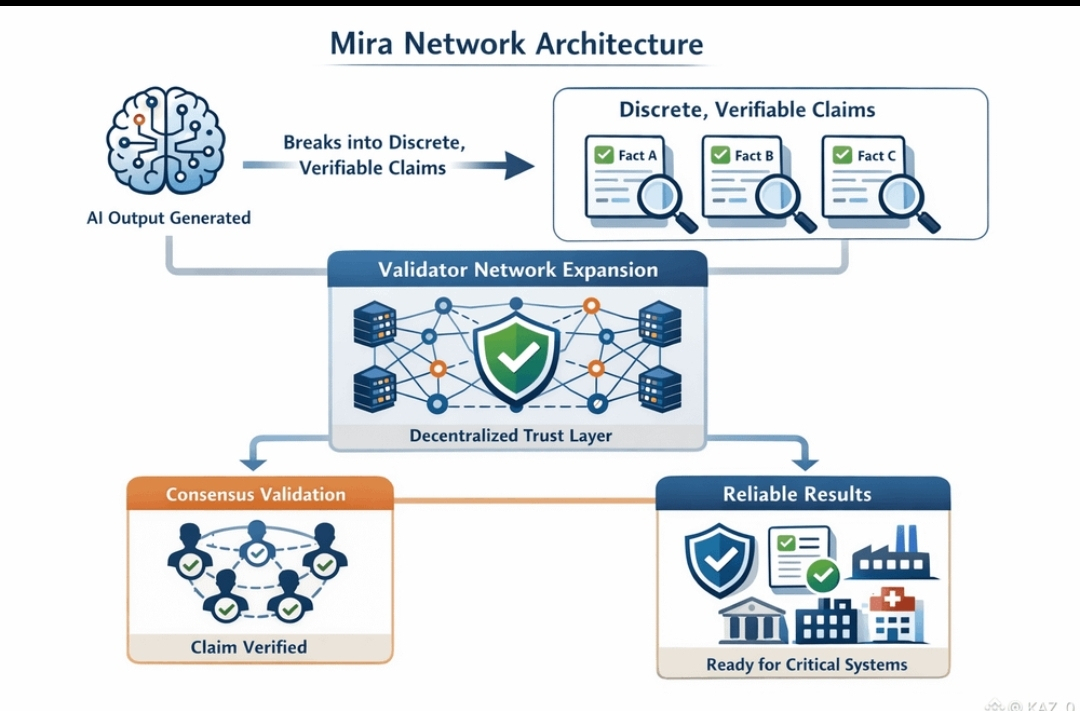

Instead of focusing only on creating smarter AI models, Mira is building a verification layer for artificial intelligence. In simple terms, the network focuses on checking whether AI outputs are actually correct.

The process is surprisingly elegant.

When an AI produces an answer, Mira doesn’t immediately treat that answer as truth. Instead, the response is broken down into smaller claims. Those claims are then evaluated by multiple independent validators across the network.

Each validator analyzes the claims with its own model and reasoning process. Once the network reaches agreement, the verification result is recorded on-chain. This creates something most AI systems lack today: a transparent record showing how a conclusion was validated.
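To make that flow concrete, here is a minimal Python sketch of the idea as I understand it. It is not Mira's actual implementation: the sentence-based claim splitting, the `Validator` type, the `verify_answer` function, and the two-thirds agreement threshold are all my own assumptions for illustration, and the on-chain recording step is left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical validator: any function that judges a single claim as True/False.
# In a real network each one would wrap a different model.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    verified: bool


def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_answer(
    answer: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # assumed agreement threshold, not from the source
) -> List[ClaimResult]:
    """Ask every validator about every claim and apply the agreement threshold."""
    results = []
    for claim in split_into_claims(answer):
        votes_for = sum(1 for validate in validators if validate(claim))
        verified = votes_for / len(validators) >= threshold
        results.append(ClaimResult(claim, votes_for, len(validators), verified))
    return results


# Toy validators: two reject anything mentioning "cheese", one accepts everything.
toy_validators = [
    lambda claim: "cheese" not in claim,
    lambda claim: "cheese" not in claim,
    lambda claim: True,
]

for result in verify_answer(
    "Paris is in France. The moon is made of cheese.", toy_validators
):
    print(result.claim, "->", "verified" if result.verified else "rejected")
```

The point of the sketch is that the answer is never judged as a whole. Each claim gets its own vote, so one bad claim can be rejected without discarding the rest.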

This is where the token $Mira becomes important.

Validators stake $Mira to participate in Mira's Dynamic Validator Network. Their stake acts as both an incentive and a form of accountability. If validators perform their role accurately, they earn rewards. If they behave dishonestly or validate incorrectly, they risk losing part of their stake.
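The incentive logic can be sketched in a few lines of Python. Again, this is only an illustration under my own assumptions: the `ValidatorAccount` type, the `settle_round` function, the flat reward, and the 10% slash fraction are hypothetical and do not reflect Mira's real parameters.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ValidatorAccount:
    name: str
    stake: float  # amount of tokens currently staked


def settle_round(
    accounts: List[ValidatorAccount],
    votes: Dict[str, bool],
    consensus: bool,
    reward: float = 1.0,          # assumed flat reward per correct vote
    slash_fraction: float = 0.1,  # assumed share of stake lost per wrong vote
) -> List[ValidatorAccount]:
    """Reward validators who matched consensus, slash those who did not."""
    for account in accounts:
        if votes[account.name] == consensus:
            account.stake += reward
        else:
            account.stake -= account.stake * slash_fraction
    return accounts


accounts = [ValidatorAccount("alice", 100.0), ValidatorAccount("bob", 100.0)]
settle_round(accounts, votes={"alice": True, "bob": False}, consensus=True)
for account in accounts:
    print(account.name, account.stake)
```

Because the penalty scales with the stake while the reward is flat, a validator with more at risk has more to lose from careless or dishonest votes. That is the accountability effect described above.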

At the same time, developers and applications use $Mira to pay for verification services on the network.

What I find interesting about this model is that it connects AI reliability directly with economic incentives. The more systems rely on verified AI outputs, the more paid verification activity flows through the network.

And that means greater utility for $Mira.

As artificial intelligence continues expanding into research, finance, automation, and decision-making systems, verification may become just as important as the AI models themselves.

Because in the long run, the most valuable AI systems might not just be the ones that generate answers.

They may be the ones that can prove those answers are true.

That’s the problem @Mira is trying to solve, and it’s a problem the AI industry will eventually have to face.

What do you think?

Will verification layers become a core part of future AI infrastructure?

#Mira $MIRA