There was a moment not long ago when I relied on a system that seemed perfectly capable. It gave a clear result, presented neatly, with the kind of quiet confidence that makes questioning it feel unnecessary. I passed the information along without much hesitation. Later, when I realized a small detail was wrong, the feeling wasn’t dramatic frustration. It was a softer kind of embarrassment — the recognition that I had trusted the system’s certainty more than I had examined the outcome.

Moments like that are becoming more common as machines become more capable. The deeper issue rarely comes from total failure. Instead, it appears when systems operate smoothly while hiding small uncertainties. The real tension sits between confidence and reliability. Machines can appear certain long before they are actually accountable for what they do.

That tension becomes even more noticeable when intelligent systems leave the purely digital world and begin acting in the physical one. Robots now sort packages, inspect equipment, assist with logistics, and move through environments shared with people. As these systems become more autonomous, the challenge shifts. The question is no longer simply whether machines can perform tasks. It becomes whether their actions can be understood, traced, and governed responsibly.

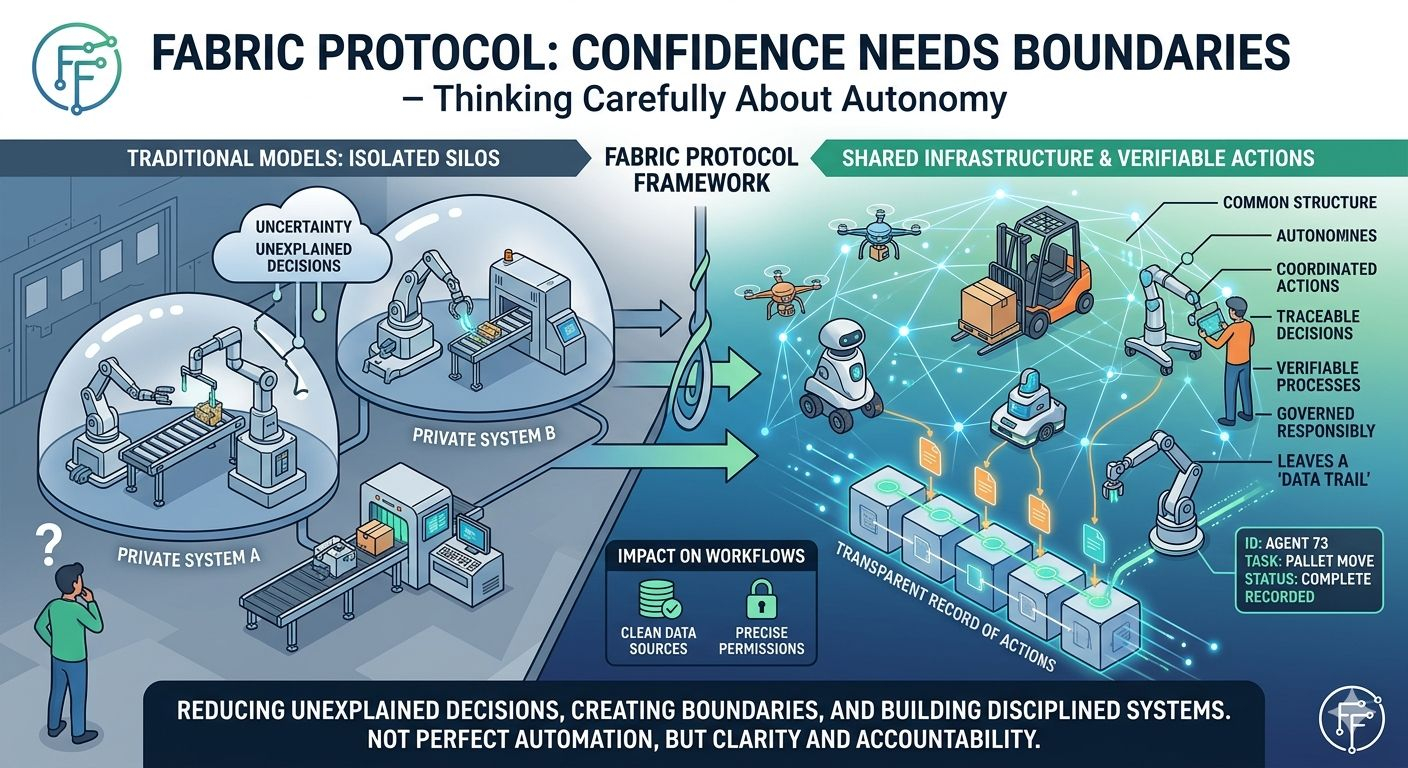

Historically, robotics systems have often been closed environments. A company builds the machines, controls the software, and manages the rules internally. If something goes wrong, the explanation usually remains inside that organization. For small systems this may be manageable. But as robots become more distributed and collaborative, the limits of that model begin to show.

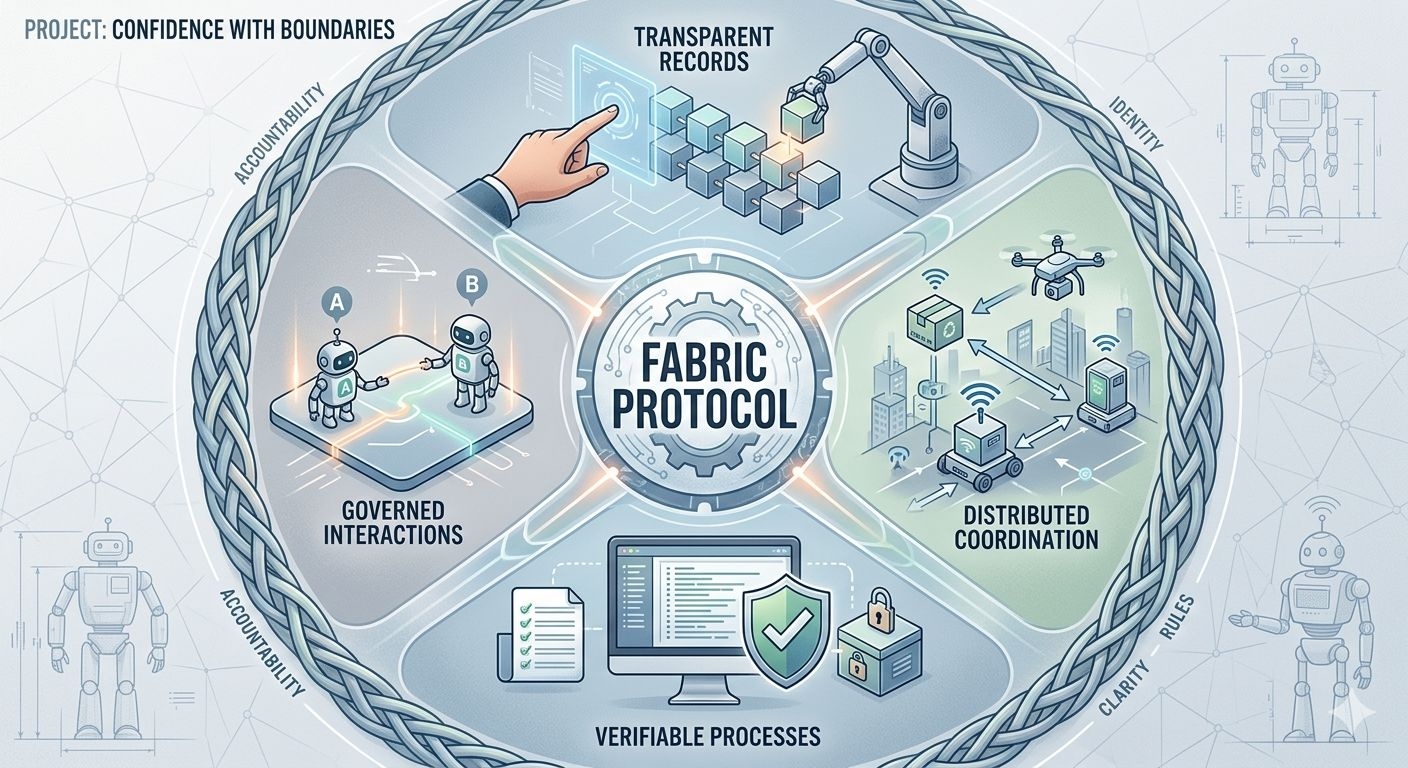

Fabric Protocol appears to have emerged from that kind of friction. It is supported by the Fabric Foundation, a non-profit initiative exploring how robots and autonomous agents might operate within shared infrastructure rather than isolated silos. The idea is not primarily about building smarter machines. Instead, it focuses on creating a common structure where robots, data, and decisions can be coordinated through transparent records and verifiable processes.

Conceptually, the shift is simple. Rather than allowing machines to operate entirely within private systems, Fabric introduces a framework where actions can leave a trace — a shared record of what was done, by which system, and under what conditions. The goal is not surveillance or control for its own sake, but clarity. If machines increasingly act on behalf of people, those actions should be explainable beyond the boundaries of a single organization.
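
To make the idea of a shared trace a little more concrete, here is a minimal sketch of an append-only, hash-chained action log. It is not Fabric's actual record format or API, which this piece does not describe; the names `ActionRecord`, `ActionLog`, `actor_id`, and `conditions` are hypothetical and chosen only to mirror "what was done, by which system, and under what conditions."

```python
import hashlib
import json
from dataclasses import asdict, dataclass

# Placeholder "previous hash" for the first record (an assumption of this sketch).
GENESIS = "0" * 64


@dataclass
class ActionRecord:
    """Hypothetical record: who acted, what they did, and under what conditions."""
    actor_id: str
    action: str
    conditions: dict  # must be JSON-serializable in this sketch
    prev_hash: str    # digest of the record that came before this one

    def digest(self) -> str:
        # Serialize with sorted keys so the same content always hashes the same way.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ActionLog:
    """Append-only list of records, each linked to the previous one by its hash."""

    def __init__(self) -> None:
        self._records: list[ActionRecord] = []

    def append(self, actor_id: str, action: str, conditions: dict) -> ActionRecord:
        record = ActionRecord(actor_id, action, dict(conditions), self.head())
        self._records.append(record)
        return record

    def head(self) -> str:
        # Digest of the latest record; this is the value a party would share or publish.
        return self._records[-1].digest() if self._records else GENESIS

    def verify(self, expected_head: str) -> bool:
        # Walk the chain: every record must point at the digest of its predecessor,
        # and the final digest must match the head that was shared earlier.
        expected = GENESIS
        for record in self._records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return expected == expected_head
```

In this sketch, anyone who kept an earlier copy of `head()` can later call `verify` with it. Changing any recorded action afterwards alters a digest somewhere in the chain, so the check fails. That is the sense in which a trace can be inspected beyond a single organization, though a real deployment would also need identity, signatures, and agreement on who holds the log.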

Interestingly, systems like this tend to reshape human behavior as much as machine behavior. When developers know that actions may be visible and verifiable, they tend to structure workflows more carefully. Permissions are defined more precisely. Data sources become cleaner because messy inputs are harder to justify when everything is traceable.

The result is not perfection. It is discipline.

Fabric also reflects a broader shift in how autonomous systems are imagined. Instead of treating robots as individual tools controlled by isolated software stacks, the protocol frames them as participants in a shared network. In such an environment, coordination matters as much as capability. Identity, rules, and accountability begin to look like infrastructure rather than afterthoughts.

Of course, none of this guarantees safety or correctness. A transparent system can still make mistakes. Governance structures can become complicated. Open coordination introduces new questions about responsibility and oversight. Infrastructure may reduce confusion, but it cannot eliminate uncertainty.

In fact, acknowledging uncertainty is part of the value. Systems that admit the possibility of error tend to build mechanisms for checking and correction. They slow down just enough to allow understanding before action spreads too far. In environments where robots interact with people and physical spaces, that hesitation is not weakness. It is a form of protection.

For that reason, the real significance of Fabric may lie less in demonstrations and more in long-term deployment. Real infrastructure is rarely exciting. It is quiet, procedural, and occasionally inconvenient. But it reduces the number of moments where no one can explain what happened.

If networks like Fabric mature over time, their success may be measured in subtle ways: fewer unexplained decisions, clearer boundaries around what machines are allowed to do, and better records when something unexpected occurs.

The future suggested here is not one of perfect automation. Machines will still make mistakes, and humans will still misjudge systems that appear confident. But with stronger structures around them, those mistakes may become easier to trace, easier to correct, and less likely to repeat.

And perhaps the quiet embarrassment of trusting a system too quickly will happen a little less often.