@Fabric Foundation

Robots make people uneasy for a reason that has nothing to do with science fiction. It’s the feeling that something is happening, and you can’t quite see the rules behind it. A robot moves through a space, makes a choice, and if it does something unexpected, the first question isn’t “is robotics good or bad.” The first question is much simpler: what happened, and who is responsible?

That question is why transparency matters. Not as a moral slogan. As a practical requirement.

In a demo, transparency feels optional. The environment is controlled, the operator is nearby, and the robot’s behavior is easy to explain because the problem has been simplified. Real deployments are not like that. Real deployments are messy. Warehouses are busy. Hospitals are sensitive. Public spaces are unpredictable. Rules differ by place, by time, and by who is watching. In those settings, you don’t get to rely on confidence or good intentions. You need proof that can survive disagreement.

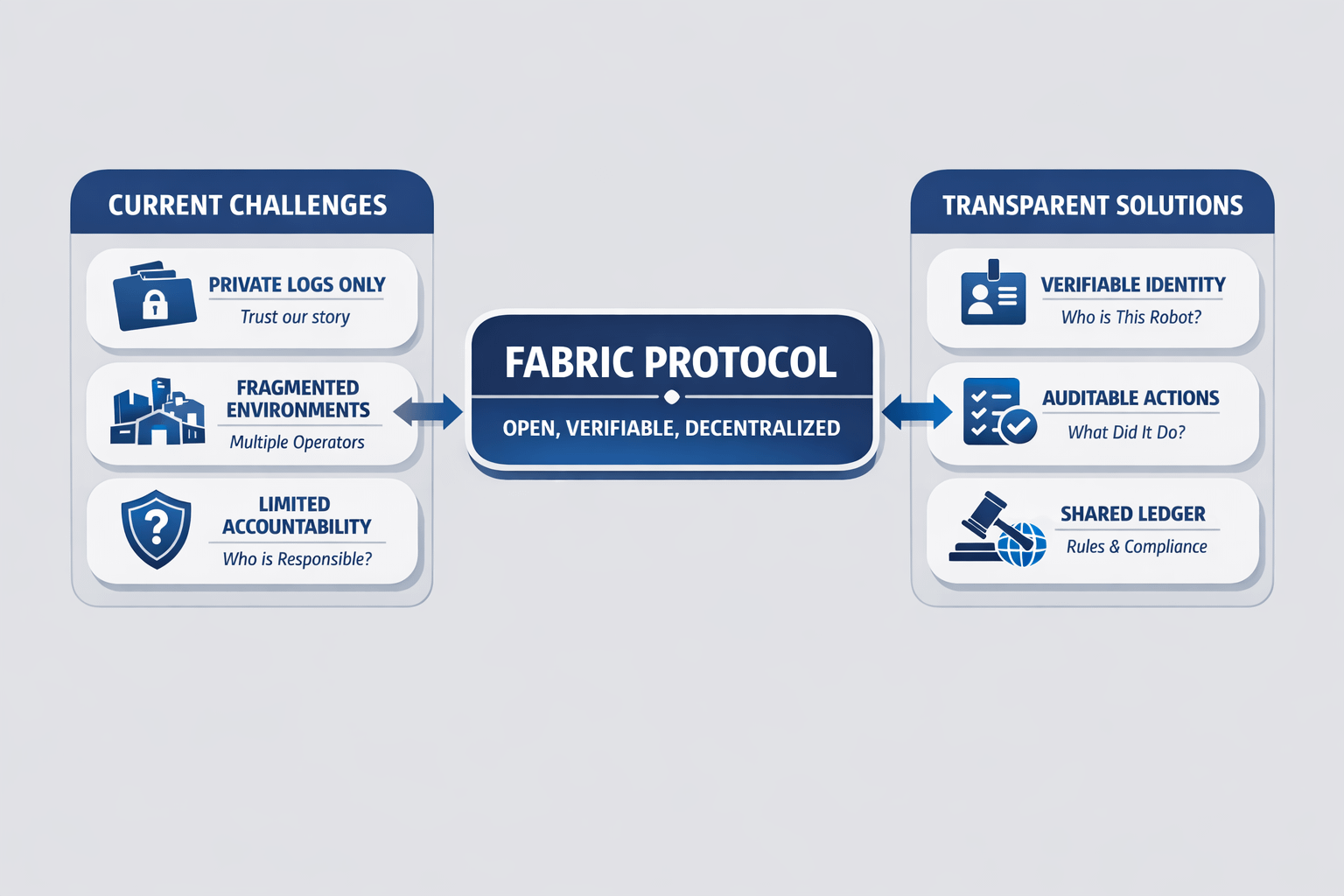

Most robotics systems today solve this with private logs. One operator owns the fleet, runs the software stack, and controls the record of what happened. If something goes wrong, the operator investigates and tells everyone the conclusion. That can work when everything stays inside one organization. It becomes fragile when multiple parties are involved, or when robots are expected to operate across environments that don’t belong to a single company. At that point, “trust our logs” starts to sound like “trust our story.”

Fabric Protocol is trying to build a different default. It frames itself as a global open network supported by a non-profit foundation, focused on governance and collaborative evolution of general-purpose robots through verifiable computing and agent-native infrastructure. It also talks about coordinating data, computation, and regulation through a public ledger, with modular infrastructure aimed at safe human–machine collaboration. Under the technical language is a simple instinct: if robots are going to scale beyond closed fleets, the coordination layer needs shared rules and shared accountability.

Transparency, in this context, doesn’t mean publishing everything. It means making the important parts verifiable. A robot’s work touches identity, permissions, compute, data access, and physical action. These are the points where trust usually breaks. Who is this robot? What was it allowed to do? What rules was it following? What data and compute did it use? If there is a dispute, can we reconstruct what happened without relying on one company’s internal dashboard?

The phrase “agent-native infrastructure” matters here because most systems were built for humans. Humans sign forms, log in, accept liability, and can be held responsible. Robots and software agents don’t fit naturally into that world. If you want them to be real participants, you need identity that can be verified, permissions that can be enforced, and records that can be audited. Otherwise every deployment becomes a custom integration, and every new partnership becomes a fresh trust negotiation.
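To make "identity that can be verified, permissions that can be enforced" a little more concrete, here is a minimal sketch. It is not Fabric Protocol's API. The registry, the robot ID, the shared key, and the action names are all hypothetical, and the HMAC shared secret is a stand-in chosen so the example needs only the standard library. A real network would more likely use public-key signatures.

```python
import hashlib
import hmac

# Hypothetical registry: robot identity -> shared key and granted permissions.
# Assumption for illustration only; not a real Fabric Protocol structure.
REGISTRY = {
    "robot-7": {
        "key": b"demo-secret",
        "permissions": {"enter_ward", "carry_supplies"},
    }
}


def sign(robot_id: str, action: str, key: bytes) -> str:
    """Produce a tag binding this robot identity to the requested action."""
    message = f"{robot_id}:{action}".encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def authorize(robot_id: str, action: str, signature: str) -> bool:
    """Allow the action only if the identity checks out AND the action was granted."""
    entry = REGISTRY.get(robot_id)
    if entry is None:
        return False  # unknown robot
    expected = sign(robot_id, action, entry["key"])
    identity_ok = hmac.compare_digest(expected, signature)
    return identity_ok and action in entry["permissions"]
```

The point of the sketch is the shape of the check: identity and permission are verified by rule, at request time, rather than negotiated per partnership.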

This is where the idea of a public ledger becomes practical. Think of it as shared memory. Not a place to dump raw sensor data, but a place to anchor key events that matter for coordination and disputes. Identity registration. Permission changes. Task assignment. Task completion. Enough of a trail that if something goes wrong, different parties can point to the same record instead of arguing over screenshots.
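One way to picture "shared memory" for key events is an append-only log where each entry's hash covers the previous entry. The sketch below is an assumption-laden toy, not how Fabric Protocol's ledger works: the `EventLog` class, the event kinds, and the robot and task names are all made up for illustration, and a real public ledger adds consensus, signatures, and replication on top.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class EventLog:
    """Hypothetical append-only log of coordination events (not raw sensor data)."""
    entries: list = field(default_factory=list)  # list of (event, entry_hash)

    def append(self, kind: str, robot_id: str, detail: dict) -> str:
        prev_hash = self.entries[-1][1] if self.entries else GENESIS
        event = {"kind": kind, "robot_id": robot_id, "detail": detail}
        entry_hash = _digest(prev_hash, event)
        self.entries.append((event, entry_hash))
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edit to an earlier event breaks the chain."""
        prev_hash = GENESIS
        for event, entry_hash in self.entries:
            if _digest(prev_hash, event) != entry_hash:
                return False
            prev_hash = entry_hash
        return True


log = EventLog()
log.append("identity_registered", "robot-7", {"operator": "acme"})
log.append("task_assigned", "robot-7", {"task": "deliver-42"})
log.append("task_completed", "robot-7", {"task": "deliver-42"})
```

If anyone quietly rewrites the `task_assigned` entry later, `log.verify()` returns `False`. That is the difference between pointing at the same record and arguing over screenshots.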

Verifiable computing fits into the same picture. In robotics, the dispute is rarely about whether the robot moved. It’s about why it moved. Why did it take that route? Why did it stop? Why did it escalate or refuse? Those questions point back to computation and policy. If a system can show what computation ran, under what constraints, and what outputs guided the action, then explanations become evidence instead of narrative. Without that, you are left with “trust our internal logs,” which works only when you already trust the operator.
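As a rough illustration of "what computation ran, under what constraints, and what outputs guided the action," the sketch below records hashes of the policy and inputs next to the decision, then lets an auditor re-run the policy and compare. This is plain re-execution auditing, a much weaker stand-in for real verifiable computing (which typically relies on cryptographic proofs or trusted hardware). The policy, the `obstacle_m` field, and every function name here are hypothetical.

```python
import hashlib
import json

# Hypothetical policy text; its hash identifies which rules were in force.
POLICY_SOURCE = "stop if obstacle_m < 0.5 else proceed"


def sha256_json(obj) -> str:
    """Stable hash of a JSON-serializable object."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode("utf-8")).hexdigest()


def policy(inputs: dict) -> str:
    """Deterministic decision rule matching POLICY_SOURCE."""
    return "stop" if inputs["obstacle_m"] < 0.5 else "proceed"


def record_decision(inputs: dict) -> dict:
    """What the robot would publish: commitments to policy and inputs, plus the output."""
    return {
        "policy_hash": hashlib.sha256(POLICY_SOURCE.encode("utf-8")).hexdigest(),
        "inputs_hash": sha256_json(inputs),
        "output": policy(inputs),
    }


def audit(record: dict, disclosed_inputs: dict) -> bool:
    """Auditor checks the policy and inputs match the record, then re-runs the policy."""
    expected_policy = hashlib.sha256(POLICY_SOURCE.encode("utf-8")).hexdigest()
    return (
        record["policy_hash"] == expected_policy
        and record["inputs_hash"] == sha256_json(disclosed_inputs)
        and record["output"] == policy(disclosed_inputs)
    )


record = record_decision({"obstacle_m": 0.3})
```

With a record like this, "why did it stop?" has an answer that a third party can check: the disclosed inputs either hash to what was committed and reproduce the output, or they don't.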

It also matters that regulation is part of the protocol’s framing. Robotics doesn’t get to ignore regulation. Safety, privacy, and compliance are not optional in most real environments. If oversight is handled after the fact, robotics stays slow because every deployment requires manual reassurance. If traceability is built into the coordination layer, compliance becomes less about convincing someone and more about pointing to a process and a record.

Transparency also changes behavior. In closed systems, rules hold because the operator has control. In open systems, no single party has that control, so rules have to hold because actions can be verified. That difference matters. It makes it harder to hide weak behavior behind private logs, and it makes it easier for honest participants to prove they did the right thing without relying on relationships.

The “modular infrastructure” idea matters because robotics will never be one uniform stack. Different robots will exist, different environments will demand different constraints, and different operators will have different standards. A modular approach suggests the shared coordination layer can remain stable while pieces evolve over time, which is how ecosystems grow without breaking trust every time something changes.

None of this makes robotics easy overnight. Hardware still fails. Edge cases still show up. But transparency is the thing that lets robotics scale without forcing everything into one closed operator’s world. It’s what turns “trust us” into “here’s the record.”

And in the end, that’s what people want when robots become real. Not hype, not promises, not confidence. Just a clear answer to the same question that always appears the moment something goes wrong: what happened, and can you prove it?