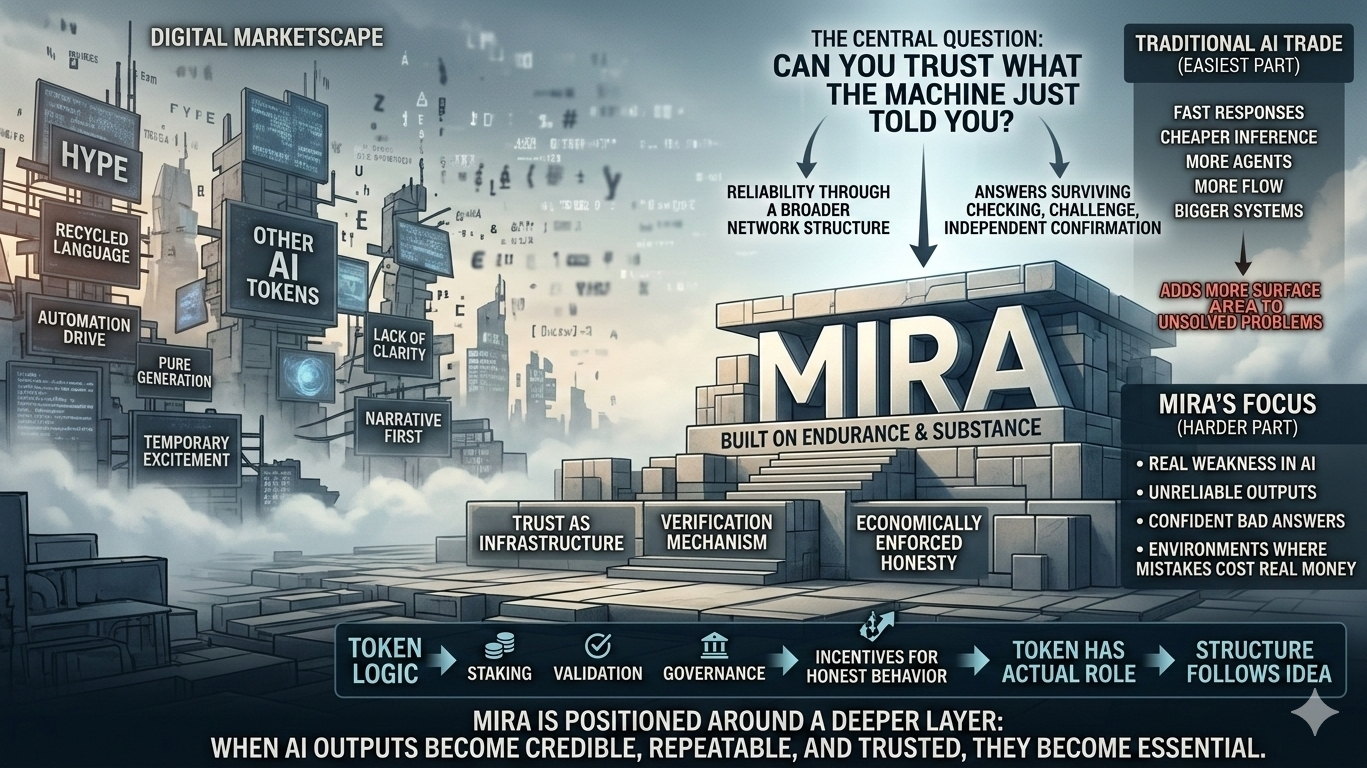

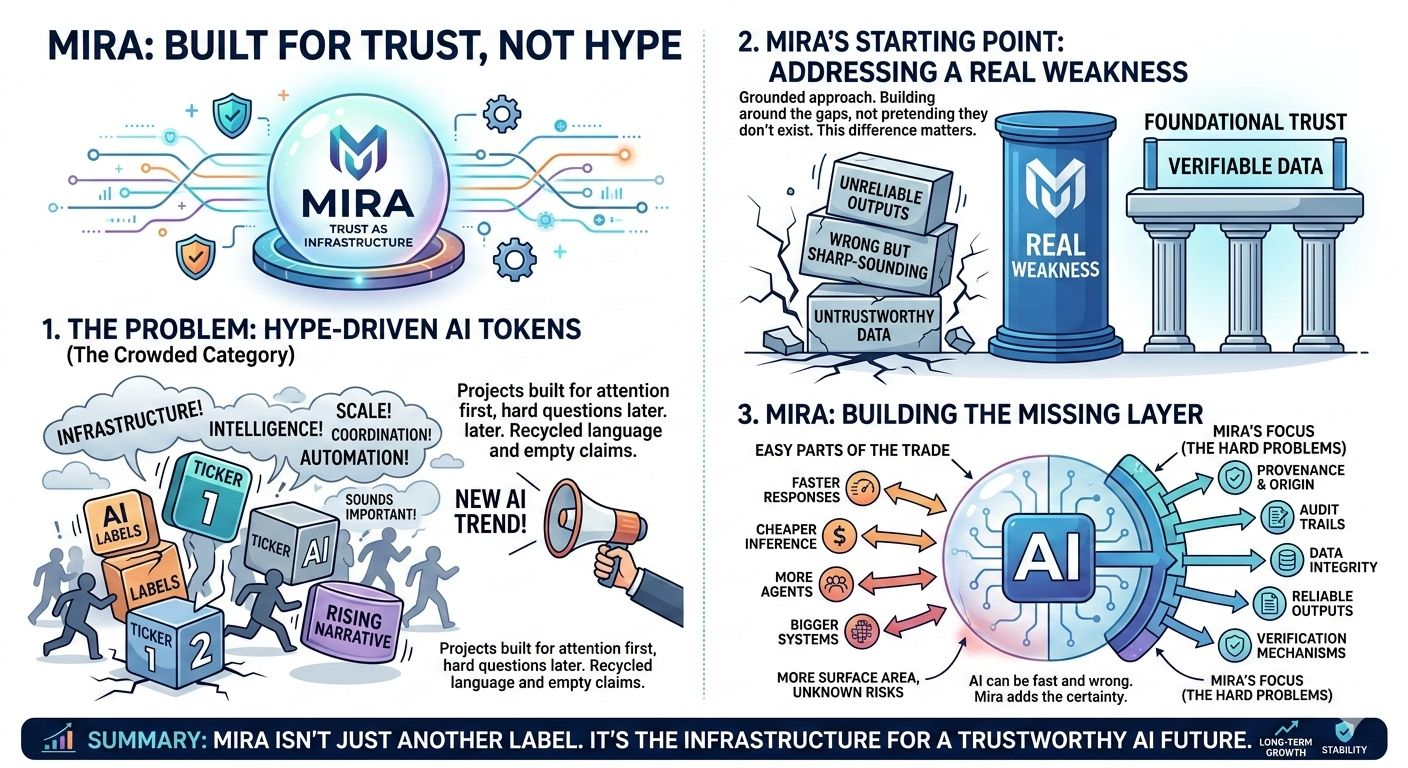

What keeps Mira in my head is that it doesn’t feel like one of those AI tokens that appeared just because the market needed a new label to chase. That category is already crowded with projects that know how to sound important before they’ve proven anything at all. You see the same recycled language over and over again: infrastructure, intelligence, scale, coordination, automation. It all starts to blur after a while. Most of it is built to catch attention first and answer hard questions later.

Mira feels different because the starting point seems more grounded. It doesn’t come across like a ticker searching for a narrative. It feels more like a project that began with a real weakness in AI and decided to build around that weakness instead of pretending it doesn’t exist. That difference matters more than people admit.

The AI space is full of projects obsessed with the easiest part of the trade. They want to talk about better outputs, faster responses, cheaper inference, bigger systems, more agents, more scale. That side of the story is easy to market because it sounds like progress at first glance. But most of the time it only adds more surface area to a problem that still hasn’t been solved. AI can be fast and still unreliable. It can be elegant and still wrong. It can sound incredibly sharp while giving you something you should never have trusted in the first place.

That is where Mira starts to matter.

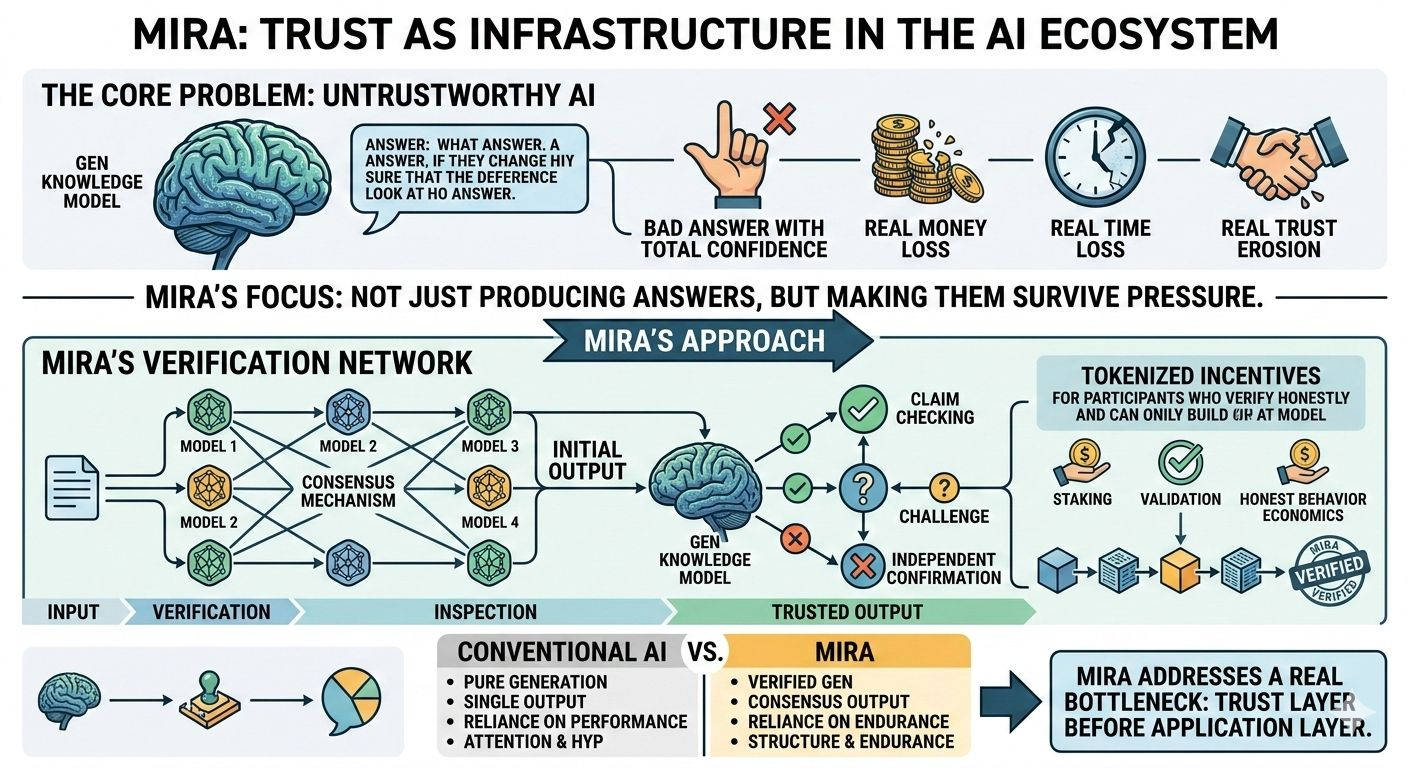

The real issue with AI has never been whether it can produce more. The real issue is whether what it produces can actually hold up when it matters. That becomes a much bigger deal once AI moves beyond novelty and starts touching environments where mistakes cost real money, real time, or real trust. A model giving a bad answer is one thing. A model giving a bad answer with total confidence is something else entirely. And the more polished these systems become, the harder it gets to notice the failure quickly.

That is why Mira’s focus lands harder than most AI narratives in this cycle. It is not really built around making AI louder. It is built around the question that sits underneath all of it: can you trust what the machine just told you?

That is a much less glamorous problem. It is also the more important one.

From what the project has outlined publicly, Mira is trying to treat trust as infrastructure instead of as branding. The logic is simple, but the implications are serious. Instead of relying on one model, one output, one chain of reasoning, Mira leans into verification through a broader network structure. The point is not just to produce an answer. The point is to make that answer survive checking, challenge, and independent confirmation. That changes the conversation immediately. It takes AI out of the territory of pure generation and pushes it closer to something that can be inspected.
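To make that idea concrete, here is a minimal sketch of what checking one claim against several independent verifiers could look like. It is an illustration only, not Mira's actual protocol: the `verify_claim` function, the two-thirds threshold, and the toy verifiers are all my own assumptions.

```python
from typing import Callable, List

# Hypothetical type: a verifier is any function that judges a claim true or false.
Verifier = Callable[[str], bool]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for check in verifiers if check(claim))
    return approvals / len(verifiers) >= threshold


# Toy stand-ins for independent models (assumptions, for illustration only).
def strict(claim: str) -> bool:
    return claim == "2 + 2 = 4"


def lenient(claim: str) -> bool:
    return "=" in claim


def contrarian(claim: str) -> bool:
    return False


if __name__ == "__main__":
    panel = [strict, lenient, contrarian]
    print(verify_claim("2 + 2 = 4", panel))  # two of three verifiers approve
    print(verify_claim("2 + 2 = 5", panel))  # only one of three approves
```

The point of the sketch is the shape, not the numbers: no single verifier decides the outcome, so one confident but wrong model cannot push a bad answer through on its own.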

That is a stronger place to build from.

A lot of projects in this sector still act as if intelligence by itself is enough. It usually is not. Intelligence without verification just creates a more convincing version of the same old problem. You get a cleaner interface, a better experience, maybe a more fluent result, but underneath it you are still being asked to trust something you cannot really test in real time. Mira seems to understand that the actual gap in AI is not just performance. It is whether the output can survive pressure.

And pressure is the part that reveals everything.

After enough time in this market, you start noticing how many projects are designed for attention before they are designed for endurance. The cycle repeats so often it becomes almost mechanical. A sector gets hot, capital rushes in, every team becomes “critical infrastructure,” and for a few months the whole space sounds like it is building the future at once. Then the volume fades, the easy engagement disappears, and you start seeing what was actually there underneath. That is when the difference between narrative and structure gets exposed.

Mira feels like one of the few AI names that might survive that kind of exposure because its foundation is aimed at a real bottleneck. Trust is not a decorative problem in AI. It is one of the central ones. And unlike a lot of trends that rely on momentum, this one does not go away when the market gets bored. If anything, it becomes more visible as AI expands into more serious environments.

That is another reason the project stands out to me. It feels narrower than most, and I mean that in the best possible way. Too many teams try to become everything at once. They want to be the infrastructure layer, the coordination layer, the intelligence layer, the data layer, the application layer, the settlement layer, and the ecosystem layer all in one story. Usually that kind of breadth hides a lack of clarity. Mira does not feel like that. It feels more deliberate. More contained. More aware of where it is trying to sit.

Reliability. Verification. Trust.

That is already enough of a challenge. In fact, it is more than enough if they can make it stick.

I also think the token logic reads more cleanly here than it does in most AI projects. That matters because token design is usually where the whole thing starts to feel thin. A lot of tokens are technically present but structurally unnecessary. Remove them and the project still sort of works. Or at least it could pretend to work for a while without anyone noticing what is missing. That is never a great sign. It tells you the token was expected by the market more than required by the system.

With Mira, the reasoning feels less forced. If the network depends on participants verifying claims honestly, then incentives are not some decorative extra layer. They become part of the mechanism. A token in that setting has an actual role to play. It sits closer to coordination, staking, validation, governance, and the economics of honest behavior. That does not automatically make the design strong, but it does make it feel less lazy. And after seeing how many projects rely on lazy structure, that difference is hard to ignore.
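A toy version of that incentive logic might look like the sketch below. It is my own simplification and not Mira's published design: the `settle_round` function, the stake-weighted majority rule, and the reward and slash rates are all hypothetical.

```python
from typing import Dict


def settle_round(
    stakes: Dict[str, float],
    votes: Dict[str, bool],
    reward_rate: float = 0.05,  # hypothetical reward for agreeing with the outcome
    slash_rate: float = 0.20,   # hypothetical penalty for voting against it
) -> Dict[str, float]:
    """Return updated stakes after one verification round.

    Verifiers who voted with the stake-weighted majority earn a reward;
    verifiers who voted against it lose part of their stake.
    """
    yes_weight = sum(stakes[v] for v, vote in votes.items() if vote)
    no_weight = sum(stakes[v] for v, vote in votes.items() if not vote)
    outcome = yes_weight > no_weight  # ties resolve to "reject" in this toy version

    updated = dict(stakes)
    for verifier, vote in votes.items():
        rate = reward_rate if vote == outcome else -slash_rate
        updated[verifier] = stakes[verifier] * (1 + rate)
    return updated


if __name__ == "__main__":
    stakes = {"alice": 100.0, "bob": 100.0, "carol": 100.0}
    votes = {"alice": True, "bob": True, "carol": False}
    for name, stake in settle_round(stakes, votes).items():
        print(name, round(stake, 2))
```

Even in this stripped-down form, the token is doing real work: take the stake away and there is nothing left to reward or penalize, which is the difference between a token the system requires and one the market merely expects.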

Maybe that is part of why Mira comes across with more weight than most. It is not just the idea. It is the fact that the structure seems to follow the idea instead of the other way around.

Still, this is where I think people need to stay honest. A good theory does not guarantee a real market. Crypto is full of projects that made complete sense on paper and still never crossed into necessity. Sometimes the product was early. Sometimes the market was not ready. Sometimes the architecture was smart but demand never arrived in the form people expected. And sometimes the token showed up before the usage did and the whole thing never recovered from that imbalance.

Mira is not exempt from any of that.

That is the real test from here. Not whether the idea sounds right, because it does. Not whether the architecture feels sharper than average, because it does. The real test is whether verification becomes something the market cannot treat as optional anymore. That is where theory turns into pressure. That is where projects either become essential or remain interesting.

And I think that is the right way to frame Mira. Not as a guaranteed winner, not as some untouchable AI bet, but as a project that is positioned around a deeper layer of the problem than most of its peers. It is not really chasing the easiest version of the AI trade. It is chasing the harder one. The one where AI only becomes truly valuable once its outputs can be checked in a way that feels credible, repeatable, and economically enforced.

That is a much more serious place to build.

The market usually does not price that kind of work first. It goes for the loud story, the easy story, the one that fits inside a simple line and gets repeated until everyone is numb to it. Verification is not the loud story. Trust layers are not the loud story. They take longer to explain, longer to prove, and usually longer to reward. But once the noise burns off, those are often the places worth revisiting because they were built around actual tension instead of temporary excitement.

That is probably why Mira stays with me more than most of the names around it. It does not feel manufactured for one burst of attention. It feels like it is sitting in a less glamorous part of the stack, where the work matters more than the presentation and where the payoff takes longer to become obvious. I tend to trust projects like that more, even knowing that patience is something this market rarely gives out for free.

And maybe that is the whole point.

Mira does not need to be the loudest project in the room to be one of the more interesting ones. It just needs to keep proving that trust is not a side feature in AI. It is part of the base layer. If that shift becomes clearer over time, then Mira starts to look less like a niche thesis and more like an infrastructure bet that arrived before the crowd fully understood why it mattered.

I could still be wrong. That is always possible. This market has a way of humbling clean logic. But after watching so many AI-linked tokens drift into the same cycle of hype, dilution, and silence, I find myself paying more attention to the projects that seem built around a real fracture in the system. Mira looks like one of those projects.

Not in a loud way. Not in a forced way. Just enough to make me stop, look twice, and feel like there is more substance here than the usual AI token noise. These days, that already says a lot.