I was thinking about Mira today in a very practical way, not as a concept, but as a response to a problem people are already living with. AI is getting integrated into real workflows faster than most teams can adapt their habits. First it writes drafts. Then it summarizes. Then it starts making recommendations. And sooner or later, someone connects it to an action, because that’s where the time savings feel real.

That’s the moment the reliability problem changes shape.

In chat, a mistake is usually just a wrong line. You can correct it, ask again, and move on. In automation, the same wrong line can become a step. A ticket gets closed for the wrong reason. A request gets approved on a shaky assumption. A policy summary includes one incorrect rule and that rule gets repeated until it becomes “how we do things.” The risk is not that AI can be wrong. The risk is that it can be wrong in a way that still sounds finished.

And that is exactly what hallucinations are good at. They don’t always show up as nonsense. Often they show up as a believable detail that fits neatly into a confident paragraph. Humans tend to trust fluent writing. We’re trained to treat clean structure as competence. That habit works most of the time with human writing, because humans usually hesitate when they don’t know. Models often don’t. They keep the same tone whether they’re recalling, reasoning, or guessing.

This is why “just make the model smarter” doesn’t fully solve it. Better models reduce error rates, but they don’t eliminate the basic failure mode: the system can still fill gaps instead of admitting uncertainty. And in critical workflows, “usually correct” is not a comfort. It’s a warning, because autonomy means the system will run many times without anyone watching, and the one time it guesses is the one time it can do damage.

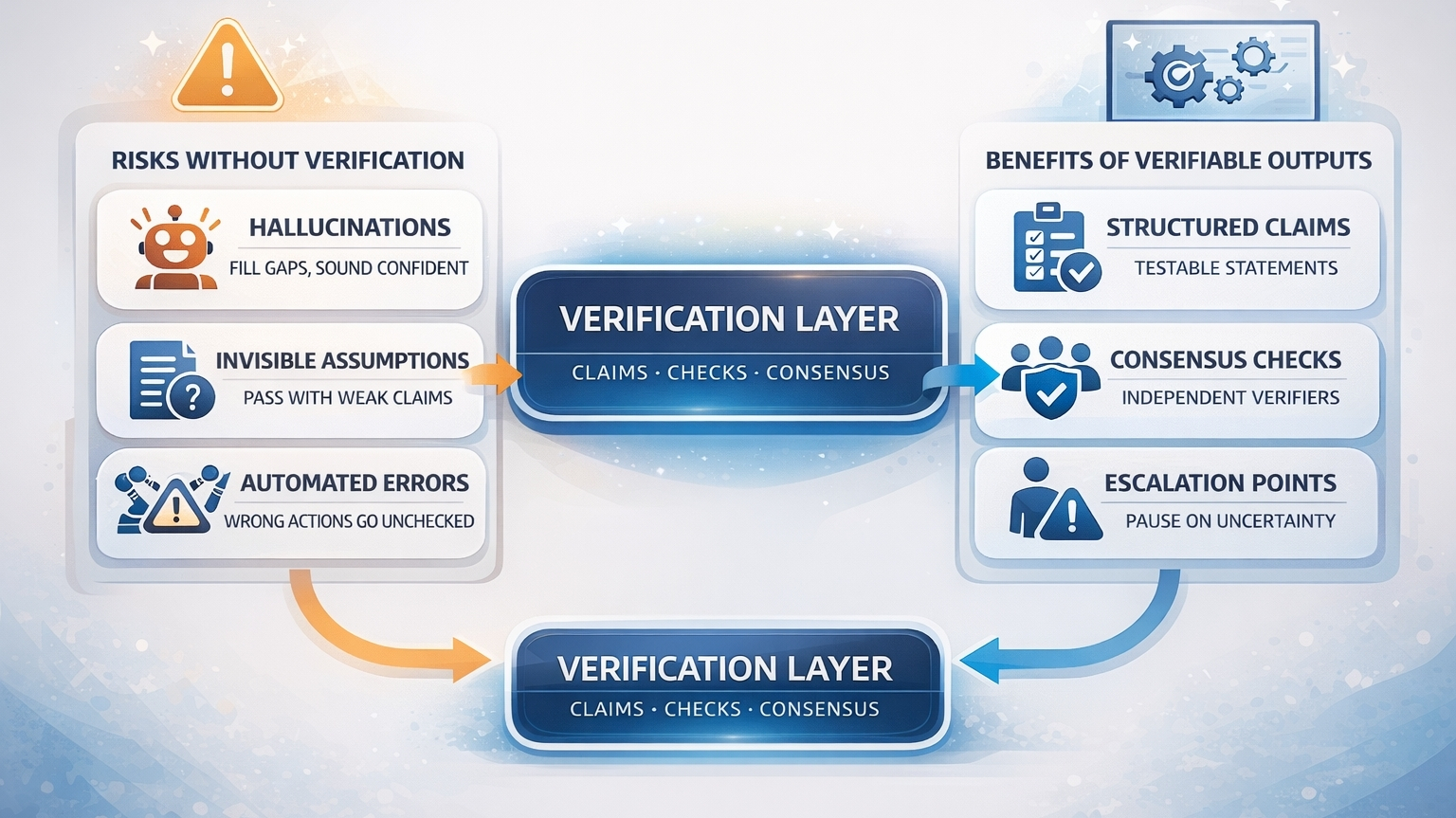

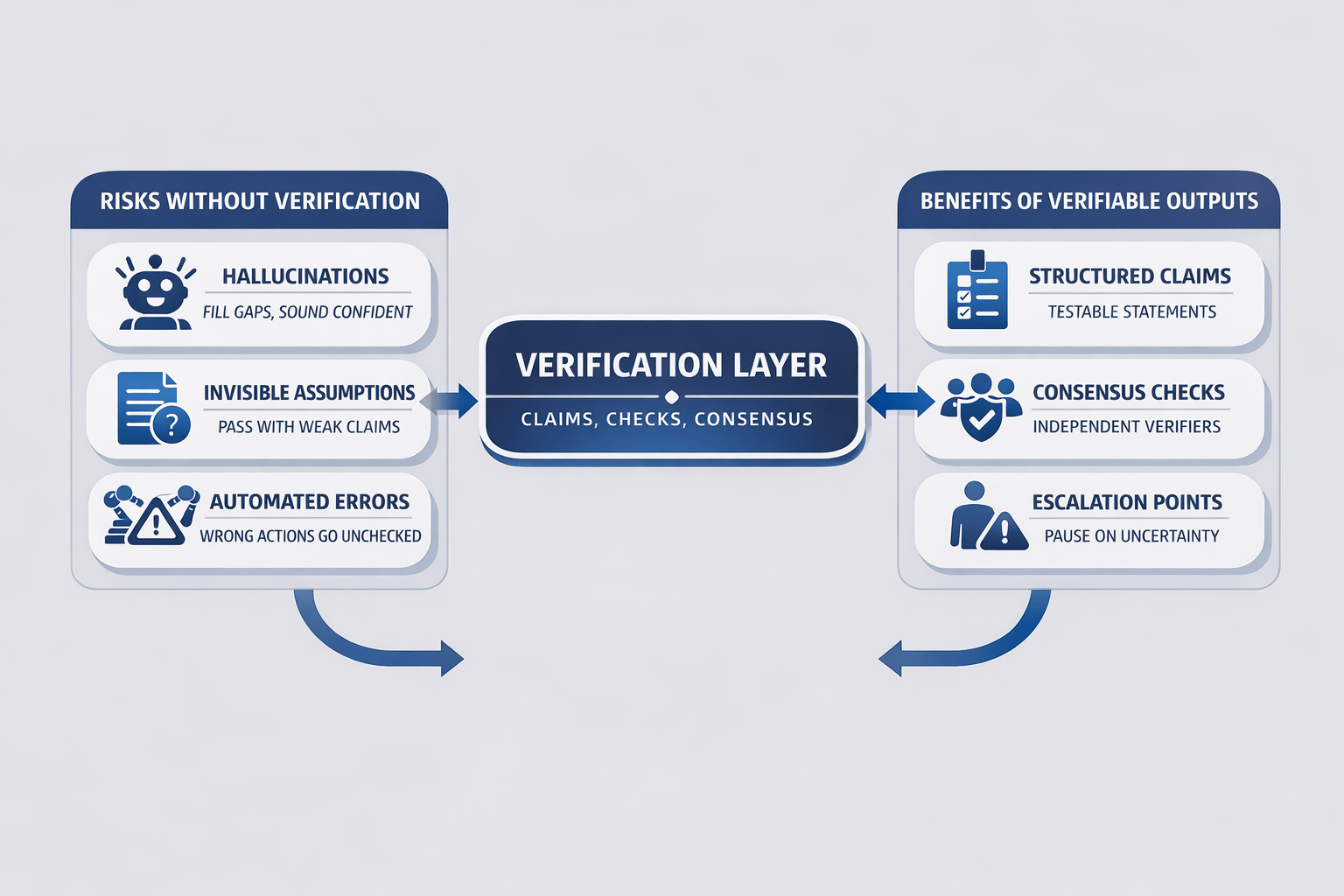

What I like about Mira’s framing is that it starts from something simple: you can’t verify a fog. If you treat an AI output as one smooth block, verification becomes vague. People do a vibe check. They skim the parts they care about. They miss the hidden assumption because it’s not clearly separated. That’s how weak claims travel.

Mira’s answer is to force structure. Break the output into claims. Not a paragraph, but a set of small statements you can point to. This date. This number. This rule. This conclusion. Once the output is claim-shaped, verification becomes practical. Different verifiers can check the same claim without debating what they’re checking. You’re no longer trusting the writing. You’re testing specific statements.
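To make “claim-shaped” concrete, here is a minimal sketch of how I picture it. This is not Mira’s actual data model: the `Claim` type, its fields, and the example statements are all hypothetical, and the decomposition is written by hand rather than extracted automatically.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One small, checkable statement pulled out of a longer output (hypothetical type)."""
    claim_id: str
    kind: str  # e.g. "date", "number", "rule", "conclusion"
    text: str


# A hand-written decomposition of one made-up policy summary.
claims = [
    Claim("c1", "date", "The refund window closes on 2024-03-31."),
    Claim("c2", "number", "Refunds are capped at 500 USD per order."),
    Claim("c3", "rule", "Orders marked 'final sale' are not refundable."),
    Claim("c4", "conclusion", "This ticket can be closed as not refundable."),
]


def claims_of_kind(items: list, kind: str) -> list:
    """Select the claims of one kind, so each verifier gets an unambiguous target."""
    return [c for c in items if c.kind == kind]


for claim in claims:
    print(f"[{claim.claim_id}] ({claim.kind}) {claim.text}")
```

The point of the shape is that every verifier receives the same `claim_id` and the same sentence, so there is nothing to debate about what is being checked.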

The next part matters even more: what happens when a claim is uncertain. In a healthy system, uncertainty should slow things down. If a key claim can’t be supported, the system should pause, ask for more context, or escalate to a human. It should not continue just because the paragraph reads well. That is the difference between AI that is useful for drafting and AI that is safe for automation.
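That rule of “uncertainty slows things down” can be sketched as a small gate in front of the action. Everything here is an assumption for illustration: the `Status` and `Action` names, the idea of marking some claims as key, and the specific mapping (an unsupported key claim escalates, an uncertain one asks for context) are my choices, not something Mira specifies.

```python
from enum import Enum


class Status(Enum):
    SUPPORTED = "supported"
    UNSUPPORTED = "unsupported"
    UNCERTAIN = "uncertain"


class Action(Enum):
    PROCEED = "proceed"
    ASK_FOR_CONTEXT = "ask_for_context"
    ESCALATE = "escalate_to_human"


def decide(statuses: dict, key_claims: set) -> Action:
    """Decide whether an automated step may run, based only on its key claims.

    A key claim with no recorded status is treated as UNCERTAIN, so a missing
    check can never be mistaken for a passed one.
    """
    key = [statuses.get(cid, Status.UNCERTAIN) for cid in key_claims]
    if any(s is Status.UNSUPPORTED for s in key):
        return Action.ESCALATE
    if any(s is Status.UNCERTAIN for s in key):
        return Action.ASK_FOR_CONTEXT
    return Action.PROCEED


statuses = {"c1": Status.SUPPORTED, "c3": Status.UNCERTAIN}
print(decide(statuses, key_claims={"c1", "c3"}).value)
```

Notice that how well the paragraph reads never enters the decision. Only the status of the claims the action depends on does.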

Independent verification and consensus are not about pretending the result becomes perfectly true. They are about making weak points harder to hide. When multiple checks look at the same claim, disagreement becomes a signal. It tells you exactly where the risk is. That is valuable because most failures happen when risk is invisible, not when risk is obvious.
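Here is a rough sketch of disagreement as a signal, assuming each verifier returns a simple true or false vote on one claim. The `consensus` function, the two-thirds threshold, and the verdict labels are hypothetical. I am not describing Mira’s real consensus rule, only the general shape of the idea.

```python
from collections import Counter


def consensus(votes: list, threshold: float = 2 / 3) -> tuple:
    """Combine independent boolean votes on a single claim.

    Returns (verdict, agreement), where agreement is the share of verifiers
    that sided with the most common vote. Below the threshold the claim is
    reported as "disputed" instead of being forced into a yes or no.
    """
    if not votes:
        return ("disputed", 0.0)  # nobody checked it, so nothing is settled
    top_vote, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    if agreement >= threshold:
        return ("supported" if top_vote else "rejected", agreement)
    return ("disputed", agreement)


print(consensus([True, True, True]))
print(consensus([True, False, True, False]))
```

A “disputed” verdict is the useful output here. It points at one specific claim and says the checks do not agree on it.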

The deeper point is that reliability is not only an accuracy problem. It’s a workflow problem. Even a strong model can be used unsafely if the environment around it rewards speed over checking. A verification layer changes the environment. It creates friction in the right place. It asks the system to show what it relies on before it acts.

I don’t think the future is a world where AI never makes mistakes. I think the future is a world where important AI outputs come with receipts. Not a marketing badge. A real trail that shows what was checked and what still isn’t solid. That’s the kind of design that can make autonomy feel less like a gamble and more like a controlled process.
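A receipt in that sense could be as plain as a small record attached to the output. This is only a sketch under my own assumptions: the `CheckRecord` fields, the verifier names, and the JSON layout are made up for illustration and do not reflect any real Mira format.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class CheckRecord:
    """What happened to one claim during verification (hypothetical fields)."""
    claim_id: str
    claim_text: str
    verdict: str  # "supported", "rejected", or "disputed"
    checked_by: list


def build_receipt(records: list) -> dict:
    """Split the trail into what was confirmed and what still isn't solid."""
    return {
        "checked": [asdict(r) for r in records if r.verdict == "supported"],
        "not_solid": [asdict(r) for r in records if r.verdict != "supported"],
    }


records = [
    CheckRecord("c1", "The refund window closes on 2024-03-31.", "supported",
                ["verifier_a", "verifier_b"]),
    CheckRecord("c2", "Refunds are capped at 500 USD per order.", "disputed",
                ["verifier_a", "verifier_b"]),
]
receipt = build_receipt(records)
print(json.dumps(receipt, indent=2))
```

The `not_solid` list matters as much as the `checked` one, because a receipt that only lists successes is just another badge.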

Do you think AI agents will become trusted only after verification layers become normal?