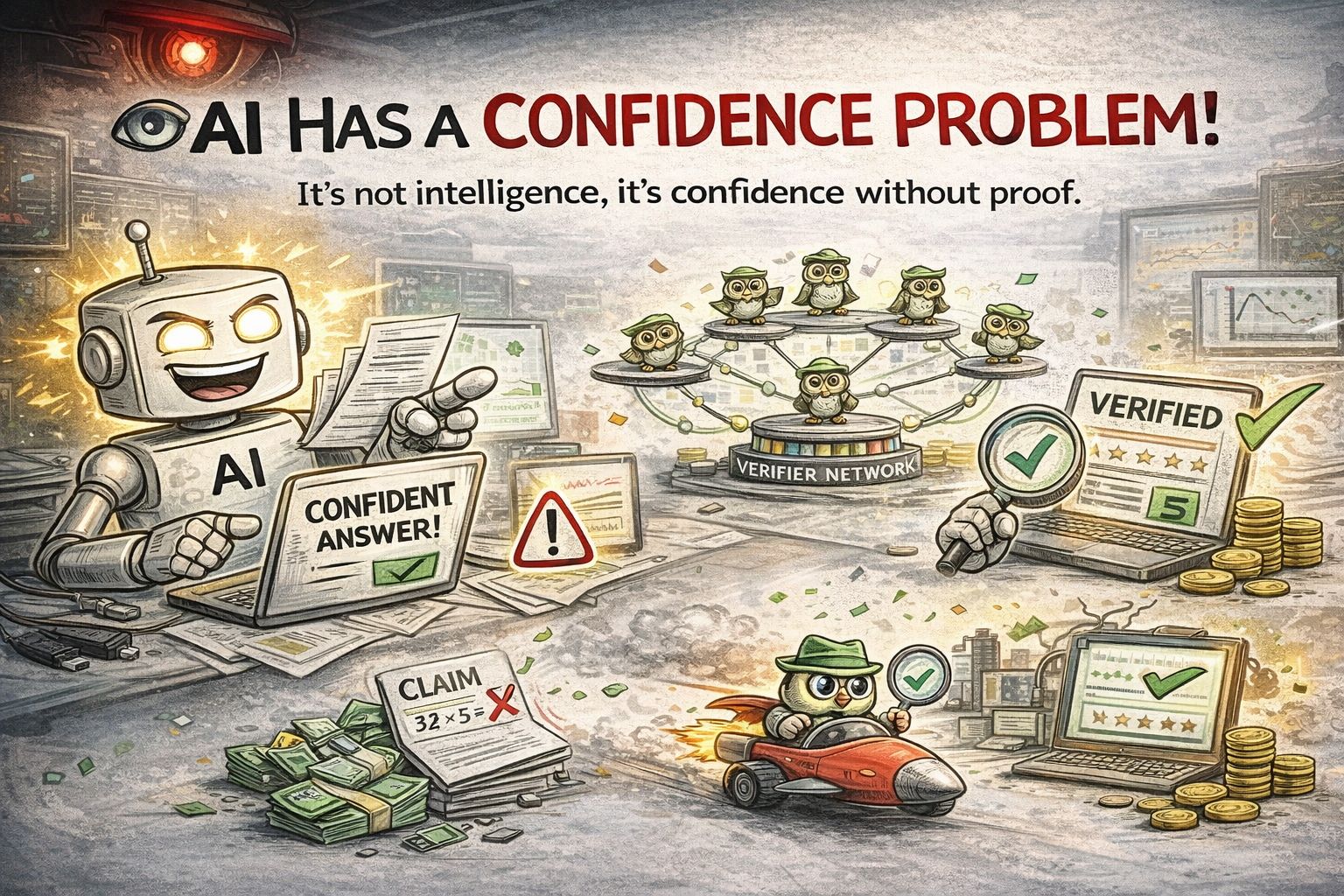

The biggest weakness of AI is not intelligence.

It’s confidence without proof.

Ask an AI a question and it will answer instantly.

Clean sentences. Strong tone. Zero hesitation.

But here’s the uncomfortable part:

AI often sounds right even when it’s wrong.

And most systems have no mechanism to check whether the answer is actually correct.

They just generate.

Confidence replaces verification.

That’s the gap Mira is trying to address.

Instead of treating AI outputs as final answers, Mira treats them as claims that need validation.

The network distributes these claims to independent verifiers who check logic, facts, and consistency.

Only after verification does the result gain trust.
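
To make the idea concrete, here is a minimal sketch of treating an output as a claim and trusting it only after independent checks agree. This is not Mira's actual protocol or API. The names `Verifier`, `Verdict`, and `verify_claim`, the two-thirds threshold, and the toy verifiers are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical: a verifier is any independent check that accepts or rejects a claim.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    approvals: int
    total: int
    trusted: bool


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Verdict:
    """Trust a claim only if at least `threshold` of the verifiers approve it.

    The 2/3 threshold is an assumed value, not something taken from Mira.
    """
    if not verifiers:
        # With nobody to check the claim, it stays untrusted.
        return Verdict(claim, 0, 0, False)
    approvals = sum(1 for check in verifiers if check(claim))
    trusted = approvals / len(verifiers) >= threshold
    return Verdict(claim, approvals, len(verifiers), trusted)


# Toy verifiers standing in for independent checks of facts, logic, and consistency.
toy_verifiers: List[Verifier] = [
    lambda c: "2 + 2 = 4" in c,     # "fact" check
    lambda c: not c.endswith("5"),  # "logic" check
    lambda c: len(c) > 0,           # "consistency" check
]

good = verify_claim("2 + 2 = 4", toy_verifiers)
bad = verify_claim("2 + 2 = 5", toy_verifiers)
print(good.trusted, bad.trusted)
```

The point of the sketch is the ordering: the answer is generated first, but it only counts as trusted once enough independent checks have signed off.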

It’s a subtle shift, but an important one.

Right now the AI race is about who can build the most capable model.

Mira is asking a different question:

Who verifies it?

Because in a world where AI writes code, analyzes markets, and influences decisions, raw intelligence might not be the hardest problem.

Trust might be.