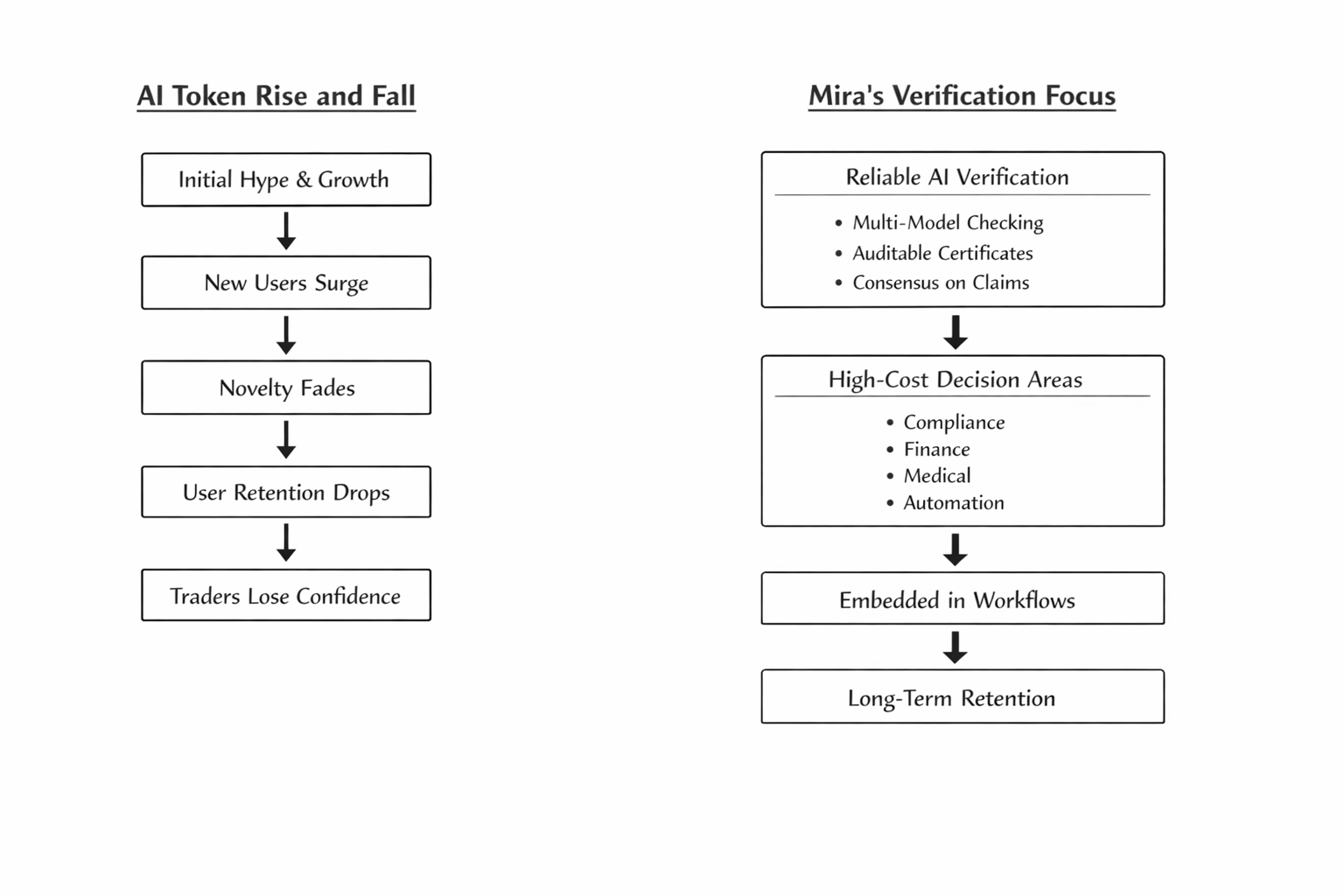

I got more careful with AI-linked tokens the first time I watched a clean product demo turn into a messy retention chart. The top line looked great for a week. New users came in, screenshots spread everywhere, and traders treated usage like it was already durable. Then people stopped coming back. That part matters more than most timelines want to admit.

So when I started looking at Mira, I was not asking whether the idea sounded smart. I was asking a harder question: does verified AI create enough day-two value that users and developers stick around when the novelty fades? That is the trade for me. Not the launch. Not the listing. Retention.

And the risk is obvious up front. MIRA is still a volatile token with a relatively small market cap, live price around eight cents, a circulating supply in the low hundreds of millions, and a max supply of 1 billion. Even basic supply figures vary across major data sites, which is exactly the kind of inconsistency that should make traders slow down before building conviction.
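To see why inconsistent supply figures matter, here is a rough sketch of the arithmetic. The price of about $0.08 and the 1 billion max supply follow the approximate figures above. The two circulating-supply values are hypothetical placeholders standing in for whatever two data sites might report, not actual quotes.

```python
# Rough arithmetic only. PRICE_USD and MAX_SUPPLY follow the approximate
# figures mentioned in the text; the circulating-supply values below are
# hypothetical placeholders, not numbers taken from any real data site.
PRICE_USD = 0.08
MAX_SUPPLY = 1_000_000_000


def market_cap(price: float, circulating_supply: float) -> float:
    """Market cap = price times the tokens currently circulating."""
    return price * circulating_supply


def fully_diluted_value(price: float, max_supply: float) -> float:
    """Fully diluted value = price times the maximum possible supply."""
    return price * max_supply


def float_ratio(circulating_supply: float, max_supply: float) -> float:
    """Share of the max supply that is already circulating."""
    return circulating_supply / max_supply


# Hypothetical: two sources disagreeing on circulating supply.
reported_supply = {"site_a": 200_000_000, "site_b": 250_000_000}

for site, supply in reported_supply.items():
    cap = market_cap(PRICE_USD, supply)
    ratio = float_ratio(supply, MAX_SUPPLY)
    print(f"{site}: market cap ${cap:,.0f}, float {ratio:.0%}")

print(f"FDV: ${fully_diluted_value(PRICE_USD, MAX_SUPPLY):,.0f}")
```

At eight cents and a 1 billion max supply, the fully diluted value is roughly $80 million whichever source you use. The market cap and the share of supply still to be unlocked are what move when the circulating figure moves, which is why the inconsistency is worth resolving before sizing a position.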

What kept me interested anyway was that Mira is not really selling “better AI” in the usual sense. It is selling verification. The whitepaper’s core idea is to take AI output, break it into independently checkable claims, and have diverse verifier models reach consensus on whether those claims hold up. The protocol frames that as a way to reduce hallucinations and bias rather than pretending errors disappear. That matters because it shifts the value proposition from raw intelligence to reliability. In markets, that is a very different thing. Raw intelligence gets attention fast. Reliability earns repeat usage, but only if users actually feel the pain of bad output often enough to pay for the fix.

That is where the retention problem becomes the whole story. If you are building a chatbot for casual entertainment, slower verified output may feel like friction. People say they want trustworthy AI, but in low-stakes settings they often choose speed, novelty, and free access instead. Mira’s own product positioning leans into the opposite side of that trade. Its Verify API promises multi-model checking, auditable certificates, and less manual review for teams trying to run autonomous systems without babysitting every answer. That sounds useful. But useful is not the same as sticky. Sticky comes when verification is not a nice extra, but a workflow requirement. Think compliance-heavy research, financial agents, medical triage support, enterprise automation, anything where one bad output is expensive enough to change behavior. That is the wedge I am watching.

And to Mira’s credit, there are early signs that it is at least operating beyond a lab demo. The project says its ecosystem serves over 4.5 million users and processes billions of tokens daily, and multiple market and news sources tied the September 2025 mainnet launch to that usage footprint. I do not treat that as proof of durable monetization.
I treat it as proof that the network has reached the stage where retention can actually be measured. That is better than most AI narratives in crypto, which never get far enough to expose whether users return after the first curiosity spike. Still, scale headlines can hide weak engagement quality. I want to know how much of that activity depends on one or two flagship apps, how often developers re-query the system after initial integration, and whether verified outputs are becoming embedded in recurring workflows rather than occasional checks.

The frustrating part is that trust as a product feature is harder to price than speed. Traders can see usage spikes. They can see volume. They can react to listings. Retention around reliability plays out slower and with less drama. Sometimes the market gets bored before the thesis has time to prove itself. That is a real tradeoff here. If Mira is right, it may be building in the correct direction while still underperforming the attention economy for stretches, because “fewer bad outputs over time” does not create the same immediate excitement as a flashy consumer AI launch. I actually think that tension is honest. But it also means the token can stay messy while the product story matures.

So what would change my mind? If verified AI keeps being marketed mostly as a broad principle instead of showing up as recurring infrastructure spend, I get less interested. If the usage data stays high but the developer side remains gated, beta-heavy, or vague, I get cautious. If supply transparency keeps looking inconsistent across the market, I discount the equity-style story people try to project onto the token. But if Mira starts showing that verification is becoming default behavior in high-cost decision loops, not optional insurance, then retention looks a lot stronger and the investment case gets harder to ignore. That is the lens I would use if you are eyeing this now: do not chase the trust narrative by itself.
Track whether trust is turning into habit. Watch whether users come back when verification adds cost, latency, or extra steps. That is where the real signal will show up. Dig there before the crowd does, because in this market the projects worth keeping are usually the ones still standing after the first wave of excitement goes quiet.
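One way to make "do users come back" concrete is a day-N retention calculation. The sketch below is a minimal illustration, assuming you have some log of (user, date) activity events. The event data and the function name are made up for the example and do not reflect Mira's actual data or APIs.

```python
from datetime import date, timedelta


def day_n_retention(events, n):
    """Share of users who are active again exactly n days after their first
    active day. `events` is an iterable of (user_id, date) pairs.

    Note: users whose first day is too recent to have reached day n are
    counted as not retained, so filter the cohort first if that matters.
    """
    first_seen = {}
    active_days = {}
    for user, day in events:
        active_days.setdefault(user, set()).add(day)
        if user not in first_seen or day < first_seen[user]:
            first_seen[user] = day

    if not first_seen:
        return 0.0

    returned = sum(
        1
        for user, start in first_seen.items()
        if start + timedelta(days=n) in active_days[user]
    )
    return returned / len(first_seen)


# Hypothetical activity log: four users, only some of whom come back.
d0 = date(2025, 1, 1)
events = [
    ("alice", d0),
    ("alice", d0 + timedelta(days=1)),
    ("alice", d0 + timedelta(days=7)),
    ("bob", d0),
    ("carol", d0),
    ("carol", d0 + timedelta(days=1)),
    ("dave", d0 + timedelta(days=1)),
]

print("day-1 retention:", day_n_retention(events, 1))
print("day-7 retention:", day_n_retention(events, 7))
```

The same calculation works for developers instead of end users: swap user IDs for API keys or integrations and check whether verified queries keep arriving weeks after the first call.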