Every technology cycle develops its own kind of visibility. Some parts of the system sit directly in front of us. We interact with them every day, so naturally they become the center of the conversation. Other pieces stay further back. They do not appear on screens or marketing pages, yet they quietly determine whether the whole structure actually works.

AI seems to be moving through that same pattern.

Most attention still circles around applications. New assistants appear. Image generators improve. Tools for writing, coding, searching, and designing keep arriving. All of them sit at the surface where people can see immediate results. It is easy to assume that whoever builds the most popular interface will define the next phase of the industry.

That assumption feels familiar. It also feels a little incomplete.

Spend enough time observing how these systems operate behind the scenes and a different question starts appearing. Not about what AI produces, but about how anyone decides whether the output should be trusted.

That question does not always show up in headlines. It tends to surface later, usually when someone tries to use AI inside environments where mistakes carry consequences.

I remember watching a demonstration of an AI system summarizing financial data. The model sounded confident. The explanation was clear. But a small number was wrong. Just slightly. Enough that a human reviewer caught it before the report was published.

The interesting part was not the mistake itself. AI errors are not unusual. What stood out was how the entire workflow slowed down because someone needed to double-check everything manually.

That moment reveals something subtle about the current AI landscape.

Generation is fast. Verification is still human.

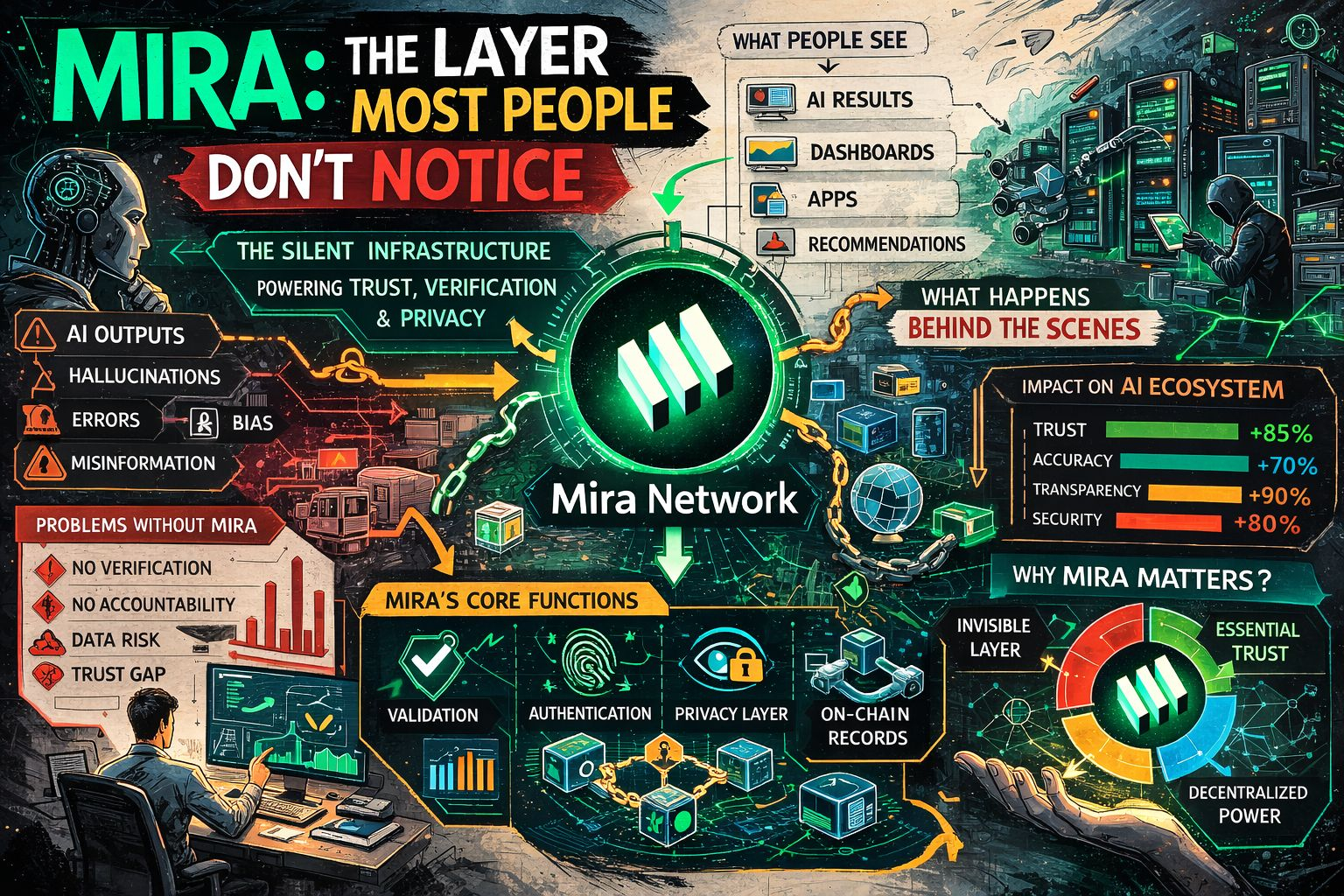

Projects like Mira begin from that tension. Not from the idea of building another AI model that answers questions more cleverly, but from the quieter observation that verification may become one of the most important pieces of the entire ecosystem.

At first glance Mira can be difficult to categorize. Some people describe it as an AI infrastructure network. Others call it middleware. Both labels capture part of the picture, although neither quite explains why the system exists in the first place.

The distinction becomes clearer when looking at how AI systems actually behave.

A large language model generates responses by predicting likely sequences of words. It does not confirm facts the way a human reviewer would, by checking them against a source. The model estimates probabilities based on patterns in training data. Most of the time the result looks accurate. Sometimes it drifts.

And when it drifts, the confidence remains.

That characteristic has created what researchers often call the hallucination problem. The model produces statements that sound correct even when they are partially wrong. Early signs suggest this issue will not disappear entirely, even as models improve.

Which leaves developers with a practical decision. Either accept the risk or create additional layers that examine outputs before they are used.

Mira takes the second route.

Instead of trusting a single model, the network distributes verification across multiple independent AI systems. A claim generated by one model can enter the network and be evaluated by others. Each participant examines pieces of the claim, looking for inconsistencies or unsupported reasoning. When several models converge on the same conclusion, the output begins to look more reliable.

The process is less dramatic than it sounds.

Imagine a quiet panel discussion happening behind the scenes. Several AI systems looking at the same information, each approaching it from slightly different training data or reasoning paths. Agreement becomes a signal. Disagreement becomes a warning.
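
To make the panel idea concrete, here is a minimal sketch of how agreement could be turned into a signal. It is not Mira's actual protocol. The `aggregate` function, the `Verdict` type, the `"supported"` / `"unsupported"` labels, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Verdict:
    label: str        # most common vote among the verifiers
    agreement: float  # share of verifiers that cast that vote
    trusted: bool     # True only if the claim is supported by enough verifiers


def aggregate(votes: Sequence[str], threshold: float = 2 / 3) -> Verdict:
    """Combine independent verifier votes on one claim (hypothetical scheme)."""
    if not votes:
        raise ValueError("at least one verifier vote is required")
    # Find the most common vote and how many verifiers cast it.
    label, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    # Agreement is a signal only when the panel agrees the claim is supported.
    trusted = label == "supported" and agreement >= threshold
    return Verdict(label, agreement, trusted)


strong = aggregate(["supported", "supported", "supported", "unsupported"])
split = aggregate(["supported", "unsupported"])
print(strong.trusted, split.trusted)
```

In this sketch a three-to-one panel clears the threshold, while an even split does not and would be flagged for review. A real network would also need to decide how claims are split into pieces and how verifiers are selected, which this example leaves out.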

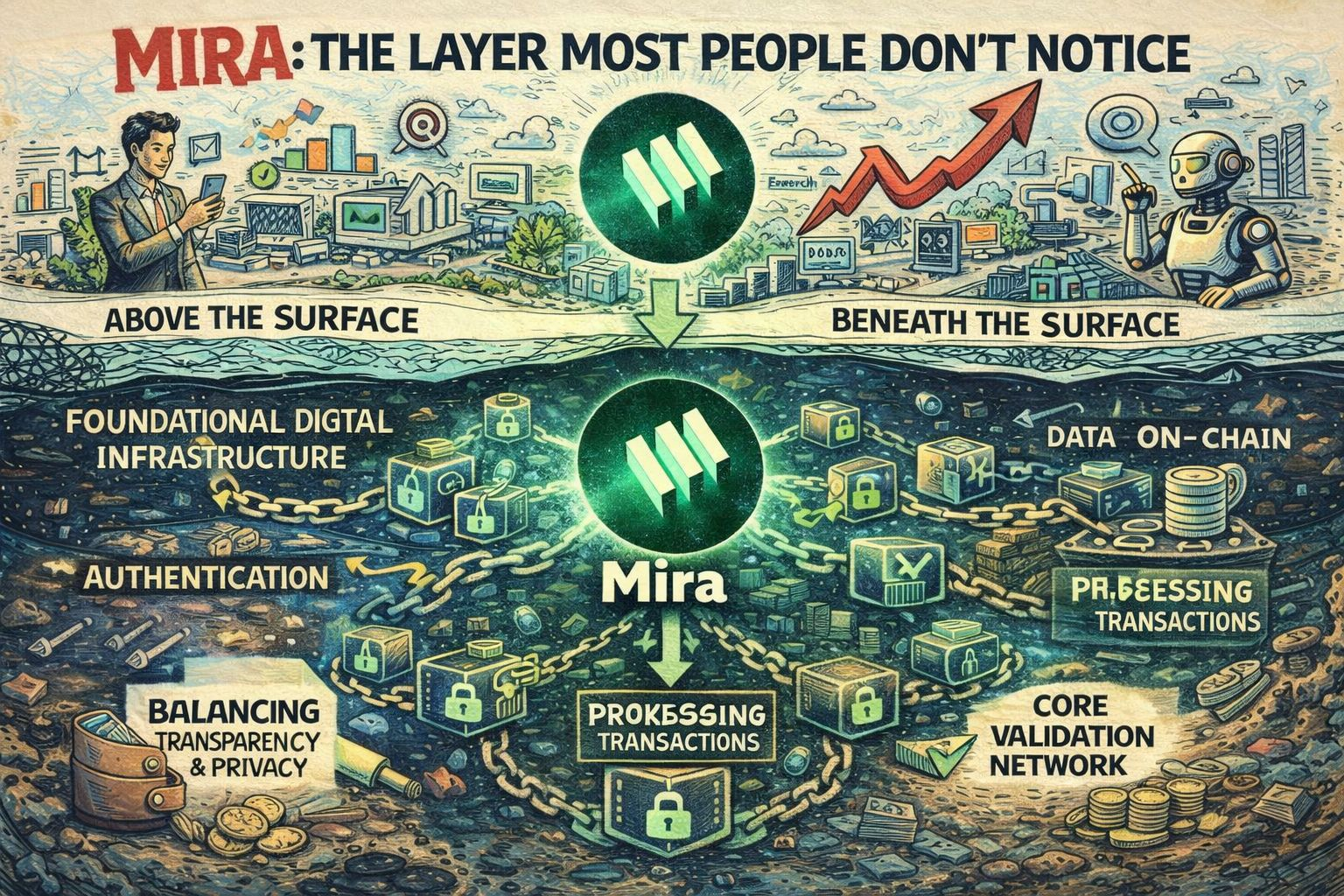

This design places Mira in an unusual architectural role.

It does not compete with front-end AI tools that people interact with directly. Those tools continue to evolve on their own. And Mira does not attempt to replace large language models either. The network sits somewhere in between, examining the results produced by those models.

That position is why the middleware description appears frequently.

Yet the term middleware sometimes understates the ambition of verification layers. Traditional middleware mainly connects systems together. Databases talk to applications. APIs move data between services. The goal is coordination.

Verification adds another dimension. It evaluates the information itself.

In that sense Mira begins to resemble infrastructure. Infrastructure tends to operate quietly. Few people think about it until it fails. When electricity flows reliably through a city, nobody praises the wiring. When it stops, suddenly the system becomes visible.

Trust layers inside AI could follow a similar pattern.

If applications start depending on independent verification before displaying results, networks that coordinate those checks may gradually become part of the foundation. Not flashy. Not particularly visible. Just steady.

Still, markets rarely reward quiet layers immediately.

Human psychology tends to favor visible progress. Investors often look for products that demonstrate growth through users, downloads, or interface improvements. Infrastructure evolves differently. It grows through integration and dependency rather than attention.

Which creates an interesting tension around projects like Mira.

On one side the technical logic feels straightforward. Multiple AI models checking each other could reduce errors and improve reliability. On the other side adoption depends on whether developers actually choose to route their systems through a shared verification network instead of building internal solutions.

If developers do route their systems through a shared network, the number of participating models will matter. Current figures suggest that more than one hundred AI models have already been integrated into Mira’s evaluation framework. The count matters because diversity of models increases the likelihood that errors are detected, since each model interprets information slightly differently.
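
A rough way to see why the count matters is a back-of-the-envelope calculation. The sketch below assumes each model catches a given error independently with the same probability, which is an idealization. Models trained on overlapping data tend to share blind spots, so real gains are likely smaller. The function name and the 30 percent figure are illustrative, not measurements from Mira.

```python
def detection_probability(p_single: float, n_models: int) -> float:
    """Chance that at least one of n independent models flags an error.

    Assumes every model catches the error with the same probability
    p_single and that the models fail independently (an idealization).
    """
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if n_models < 0:
        raise ValueError("n_models must be non-negative")
    # Probability that all n models miss the error, subtracted from 1.
    return 1.0 - (1.0 - p_single) ** n_models


for n in (1, 3, 10):
    print(n, round(detection_probability(0.3, n), 3))
```

Under these assumptions the curve rises quickly and then flattens, which hints that adding different kinds of models may matter more than adding many similar ones.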

But numbers alone do not guarantee lasting influence.

There is always the possibility that verification becomes a standard feature built directly into major AI platforms. Large technology companies have the resources to create internal consensus mechanisms. If those systems remain closed ecosystems, external verification networks could struggle to attract activity.

Latency introduces another uncertainty.

Verification takes time. Even if the process becomes efficient, evaluating a claim across multiple models inevitably introduces a small delay. In research or analytical environments that delay may be acceptable. In real-time systems it is likely to be more noticeable.
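
How large that delay is depends on whether the checks run one after another or side by side. The sketch below is a simplified model, not a description of how Mira schedules work. The function name, the `overhead_ms` parameter, and the sample latencies are assumptions for illustration.

```python
from typing import Sequence


def added_latency_ms(
    verifier_latencies_ms: Sequence[float],
    parallel: bool = True,
    overhead_ms: float = 0.0,
) -> float:
    """Estimate the delay a verification round adds to one response.

    parallel=True  -> verifiers run concurrently, so the slowest one dominates.
    parallel=False -> verifiers run one after another, so delays add up.
    overhead_ms is a hypothetical fixed cost for routing and aggregation.
    """
    if not verifier_latencies_ms:
        return overhead_ms
    if parallel:
        core = max(verifier_latencies_ms)
    else:
        core = sum(verifier_latencies_ms)
    return core + overhead_ms


sample = [120.0, 300.0, 180.0]  # made-up per-verifier latencies in milliseconds
print(added_latency_ms(sample, parallel=True))
print(added_latency_ms(sample, parallel=False))
```

In the parallel case the cost of adding verifiers is bounded by the slowest participant, which is why the delay can stay small even as the panel grows.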

And of course the regulatory environment continues to shift.

Governments are beginning to examine how AI-generated information spreads and how it should be audited. If regulations require traceable verification steps for certain applications, networks built around consensus evaluation could become useful infrastructure almost by accident.

That scenario remains speculative for now.

So the original question still lingers in the background. Is Mira simply middleware connecting AI models together, or is it the early shape of a broader AI infrastructure layer?

The answer might depend less on the technology itself and more on how the ecosystem evolves around it.

Because in complex systems, value often accumulates quietly. Not where people first look. But somewhere underneath, where trust is slowly earned and reinforced over time.
