In conversations about large AI models lately, one theme keeps coming up: reliability. People notice that even the most advanced systems sometimes produce answers that feel confident but are wrong, inconsistent, or influenced by unseen biases. These “hallucinations” and shaky outputs are not just quirks. They reflect deeper challenges with how AI is trained and evaluated.

Most AI services today validate quality behind the scenes using internal benchmarks or expert feedback loops controlled by a single organization. That kind of central control can help improve models, but it doesn’t offer transparent proof that every response is trustworthy. Users are left to decide what to believe based on reputation or brand strength. That’s where projects like Mira Network start to feel interesting.

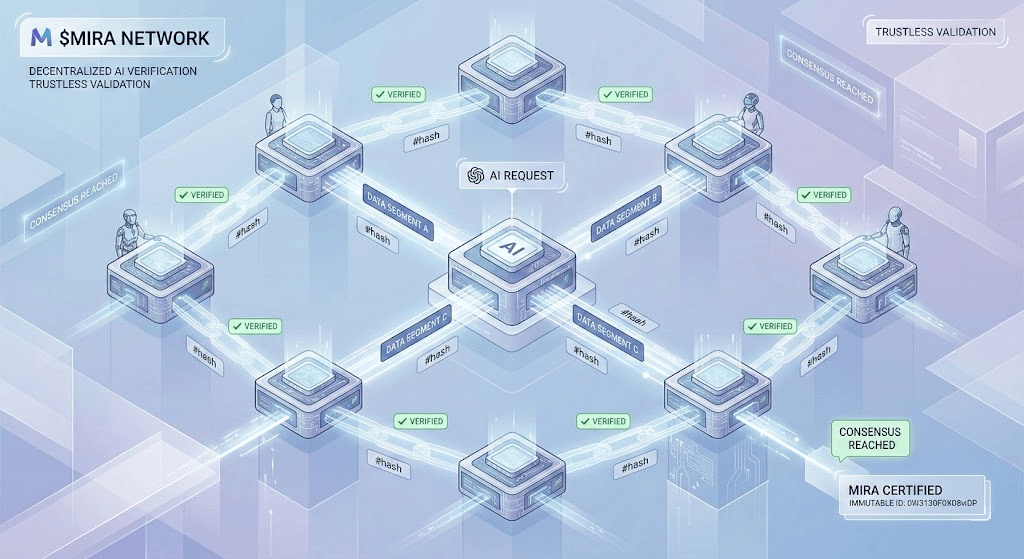

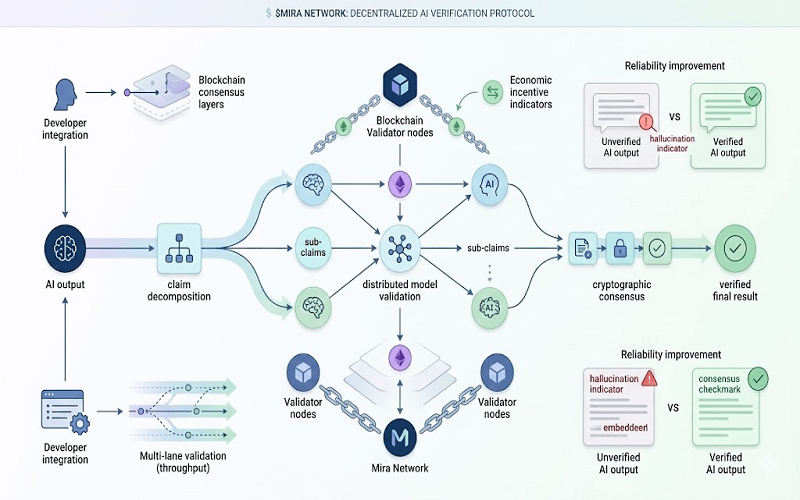

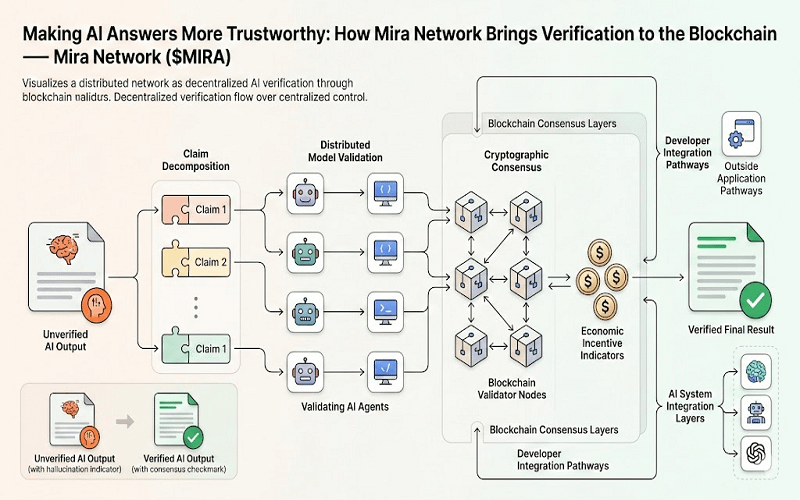

Mira Network is a decentralized protocol built with the idea that AI outputs should be verifiable by multiple independent agents rather than assumed correct because they come from a well‑known system. The core thought is simple: break complex AI answers into smaller claims, check each claim separately, and use a transparent consensus to determine if the claims hold up.

Instead of relying solely on one system’s internal metrics, the Mira approach assembles a network of validators. Each validator evaluates parts of an AI response independently. The protocol then uses blockchain consensus to agree on which claims are supported by evidence and which are questionable. In essence, this creates an open trust layer that sits alongside existing AI models.
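To make the idea concrete, here is a minimal sketch of claim-level voting. It is not Mira's actual consensus mechanism: the function name `claim_verdict`, the two-thirds threshold, and the three verdict labels are all assumptions chosen for illustration.

```python
from collections import Counter

def claim_verdict(votes, threshold=2 / 3):
    """Return 'supported', 'rejected', or 'disputed' for one claim.

    votes: list of booleans, one per validator (True = the claim holds up).
    threshold: assumed fraction of validators needed to settle a claim.
    """
    if not votes:
        return "disputed"  # nobody voted, so nothing can be settled
    counts = Counter(votes)
    if counts[True] / len(votes) >= threshold:
        return "supported"
    if counts[False] / len(votes) >= threshold:
        return "rejected"
    return "disputed"  # neither side reached the threshold

# Hypothetical votes from independent validators on three claims.
answer_votes = {
    "Water boils at 100 C at sea level.": [True, True, True],
    "The Moon is larger than Earth.": [False, False, False],
    "Coffee was first brewed in 1400.": [True, False, True, False],
}
verdicts = {claim: claim_verdict(v) for claim, v in answer_votes.items()}
```

The third claim splits the validators evenly, so it lands in the "disputed" bucket instead of being forced into a yes or no.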

This idea matters because centralized verification systems have limitations. When one authority checks content, users have to trust that authority’s methods and incentives. A decentralized system, at least in theory, spreads that trust across many participants. Decisions are not the result of a black box inside a single company. They come from a collective agreement among diverse validators.

What distinguishes the Mira architecture is how it slices and checks content. Large answers are decomposed into individual assertions. Each assertion becomes a small unit that independent agents can evaluate faster and more consistently than they could evaluate the full answer. Think of it like breaking a long research paper into bite‑sized claims and asking a panel of experts to give a yes or no on each one.
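As a rough illustration of the decomposition step, the sketch below splits an answer into sentence-level claims. This is a deliberately naive stand-in: the helper `split_into_claims` is hypothetical, and a real system would presumably need something smarter than punctuation-based splitting to isolate individual assertions.

```python
import re

def split_into_claims(answer: str) -> list[str]:
    """Naively treat each sentence as one checkable claim."""
    # Split wherever sentence-ending punctuation is followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [p for p in parts if p]

claims = split_into_claims(
    "Paris is the capital of France. It has about two million residents. "
    "The Seine runs through it."
)
for number, claim in enumerate(claims, start=1):
    print(number, claim)
```

Each resulting string can then be handed to validators on its own, which is the point of the "bite‑sized claims" analogy.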

The results of this distributed verification are recorded on a blockchain. Cryptographic proofs of each validation step are stored in a way that anyone can audit later. That means you don’t just get a final verdict. You get a ledger of who contributed, how they assessed each claim, and what evidence supported their conclusion. In a world where trust is often assumed rather than shown, this record can be meaningful.

Technically, this is not trivial. Coordinating many validators and achieving consensus on a decentralized network requires careful design. That’s where the blockchain aspect plays a role. It provides an immutable record and a decentralized mechanism for aggregating votes and assessments. The cryptographic layer means that any attempt to alter the validation history leaves a mismatch that other participants can detect.
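The tamper-evidence property can be shown with a simple hash chain, which is the basic building block behind blockchain records. This is only a sketch under stated assumptions: the record fields (`claim`, `validator`, `vote`) and the helper names are made up for the example, and a real network adds signatures and distributed consensus on top.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(prev_hash, record):
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append(log, record):
    """Add a record, linking it to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": entry_hash(prev_hash, record)})

def verify(log):
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True

log = []
append(log, {"claim": "c1", "validator": "v1", "vote": True})
append(log, {"claim": "c1", "validator": "v2", "vote": False})
ok_before = verify(log)

log[0]["record"]["vote"] = False  # someone quietly rewrites history
ok_after = verify(log)
```

Before the edit the log verifies; after it, the stored hash no longer matches the record, so anyone re-running `verify` sees the inconsistency.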

One of the motivations behind this design is to reduce reliance on centralized AI quality controls. Big AI providers can and do create internal evaluation systems, but those systems are often opaque. You don’t see how an answer was checked or who approved it. They may use human raters, automated benchmarks, or a mix. But the process is controlled internally. Mira’s model tries to shift that dynamic by opening up verification to many actors and making their work visible.

Inevitably, people want to know how this kind of decentralized checking helps in practice. There are several potential benefits that feel grounded rather than hype‑driven.

First, distributed verification can improve trust. If multiple independent validators reach the same conclusion about a claim, a user can feel more confident that it’s not a fluke or the result of a single perspective. This doesn’t guarantee perfection, but it does spread responsibility for accuracy.
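A bit of arithmetic shows why agreement among several validators can be more convincing than a single check. The sketch below assumes each validator is wrong with the same probability and that their errors are fully independent, which is an idealization; validators that share training data or biases would not satisfy it. The function name `majority_error` is hypothetical.

```python
from math import comb

def majority_error(n, p):
    """Probability that more than half of n validators are wrong.

    Assumes each validator errs independently with probability p.
    """
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )

single = majority_error(1, 0.2)
panel_of_three = majority_error(3, 0.2)
panel_of_five = majority_error(5, 0.2)
```

With a 20% individual error rate, a wrong majority happens about 10.4% of the time with three validators and about 5.8% with five. The gain shrinks quickly if validator errors are correlated, which is why independence among validators matters.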

Second, a transparent ledger of validations means researchers and developers can study how often claims hold up or fail. Over time, that data could help identify systematic issues in certain types of AI outputs, whether they relate to specific topics, phrasing patterns, or model behaviors.

Third, there’s an incentive layer. Participants who contribute valuable validation work might be rewarded in tokens like $MIRA. That provides a simple economic reason for people to engage and share their assessments. Incentives don’t solve all problems, but they do help align effort with outcomes in decentralized systems.
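One simple way such an incentive could work is to split a reward pool among validators whose vote matched the final verdict. The sketch below is purely illustrative and is not Mira's actual reward scheme: the function `split_reward`, the equal-share rule, and the numbers are all assumptions.

```python
def split_reward(votes, verdict, pool):
    """Share a reward pool equally among validators who matched the verdict.

    votes: dict mapping validator id -> bool vote.
    verdict: the bool outcome the network settled on.
    pool: total reward units available for this claim.
    """
    winners = [v for v, vote in votes.items() if vote == verdict]
    if not winners:
        return {}  # nobody matched, so nothing is paid out
    share = pool / len(winners)
    return {v: share for v in winners}

payouts = split_reward({"v1": True, "v2": True, "v3": False}, True, 90.0)
```

Here `v1` and `v2` each receive 45.0 units and `v3` receives nothing. A rule this simple also rewards validators who merely guess what the majority will say, which is one reason incentive design is harder than it looks.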

In contrast to centralized evaluation services, a decentralized layer doesn’t require users to trust a single company. Instead, users rely on transparent processes and community participation. That aligns more with the ethos of open systems and gives people more room to question or verify results independently.

It helps to put all this in context. Decentralized verification is not a magic solution that eliminates every reliability issue. It adds another layer of scrutiny, yes, but it doesn’t replace good model design or quality training data. It complements those things. If a model generates flawed outputs, decentralized checks can highlight and quantify that flaw. But they can’t make the original model inherently better on their own.

There are realistic limitations to this approach. For one, breaking down and validating every AI claim at scale consumes computational resources. Distributed verification means many agents need to run checks on parts of an answer. That can take time and energy, especially as responses grow longer or more complex.

Coordination is another challenge. Getting multiple independent validators to assess the same claim without conflict and then reaching consensus is not simple. The protocol needs to manage disputes, conflicting signals, and validators who might act incorrectly, whether by mistake or deliberately. Designing robust mechanisms to handle those situations takes thoughtful engineering and community governance.

Competition among decentralized AI infrastructure projects also complicates the picture. Mira Network is not alone in exploring how to add transparency and accountability to AI outputs. A crowded ecosystem means ideas evolve rapidly, which is good, but it also means no single approach is guaranteed to become dominant. Users and developers will likely experiment with different verification layers, consensus methods, and incentive structures.

It’s also worth noting that this ecosystem is still early. You won’t yet see a world where every AI answer you get is pre‑verified by a decentralized network before it reaches you. Adoption takes time, and integration with mainstream AI providers is not automatic. Developers have to build bridges between large language models and verification layers like those proposed by #MiraNetwork. That requires both technical work and community buy‑in.

With that in mind, it helps to think of decentralized verification not as a replacement for current quality control but as an additional tool. It’s a way of giving users more visibility into why an answer is considered reliable or not. It’s a way of spreading trust across many contributors instead of concentrating it in one place.

Another practical benefit lies in research and transparency. Because verification steps are logged and auditable, people can study patterns over time. They can ask questions like: which claims tend to be flagged most often? Do certain topics lead to more disagreement among validators? Patterns like these could inform future improvements in both AI generation and evaluation.
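The kind of analysis described here is easy to picture once the validation records are available. The sketch below computes, per topic, the fraction of claims on which validators disagreed. The record layout of `(topic, votes)` pairs and the sample data are hypothetical; a real ledger would have its own schema.

```python
from collections import defaultdict

def disagreement_by_topic(records):
    """Fraction of claims per topic where validators did not all agree.

    records: iterable of (topic, votes) pairs, votes being a list of bools.
    """
    totals = defaultdict(lambda: [0, 0])  # topic -> [split claims, all claims]
    for topic, votes in records:
        totals[topic][1] += 1
        if len(set(votes)) > 1:  # both True and False appear
            totals[topic][0] += 1
    return {topic: split / n for topic, (split, n) in totals.items()}

records = [
    ("medicine", [True, False, True]),
    ("medicine", [True, True, True]),
    ("history", [True, True, True]),
]
rates = disagreement_by_topic(records)
```

In this toy data, half of the medicine claims drew a split vote while the history claim drew none, the sort of signal that could point researchers toward topics where outputs deserve closer review.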

Consider too that a transparent verification ledger could help in educational settings or professional environments where evidence matters. If you’re making decisions based on AI output in a research paper or business analysis, having an auditable validation trail could be reassuring.

At the same time, it’s fair to remain grounded about expectations. Decentralized validation won’t be perfect. It won’t eliminate every error or bias. It won’t replace thoughtful human judgment. But it can create a more open environment where accountability for correctness is less of a private process and more of a public one.

Projects like @Mira - Trust Layer of AI raise questions that matter for the future of AI and blockchain together. They remind us that reliability is not just a technical challenge but a trust challenge.

When we think about how people will interact with increasingly powerful AI systems, adding layers that make outputs more verifiable feels like a thoughtful step rather than an extravagant claim.

That kind of careful thinking might be exactly what the space needs as it matures. #mira