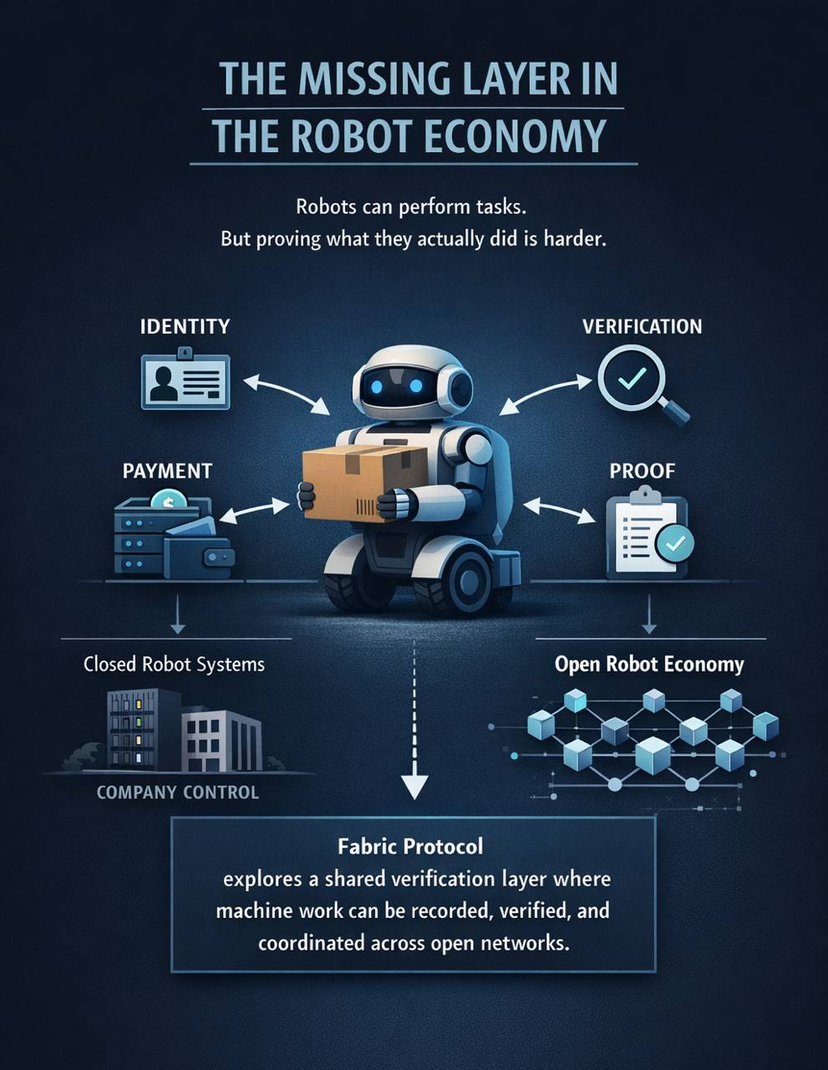

Imagine waking up in a neighborhood where machines aren’t mysterious black boxes owned by distant companies but are neighbors you can name, pay, and hold accountable. That’s the practical, slightly audacious picture behind Fabric Protocol: a set of rules and plumbing that gives physical robots on-the-ground identities, a way to prove what they did, and simple markets where work gets bought and sold. The idea is less about reinventing motors or sensors and more about building the social and economic scaffolding so lots of different robots, teams, and service buyers can work together without needing a single company to babysit everything. The project is stewarded by Fabric Foundation, which tries to be the civic glue that keeps the system honest and useful.

At the heart of the design is a small but powerful shift: attach verifiable evidence to robotic actions. If a delivery bot says it dropped a package at your door, that claim comes with something you can check — a signed trace, an attested sensor snapshot, or a compact cryptographic proof — so payment, reputation updates, or human review can happen automatically and fairly. That makes it possible to automate lots of microtransactions without trusting a single operator to tell the truth. It doesn’t solve every problem — these proofs show what happened, not why it happened or what the robot intended — but they change the default from “trust me” to “show me the receipt,” which matters a lot when services scale.
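To make the "show me the receipt" idea concrete, here is a minimal sketch of a signed delivery receipt that gates a payout. Everything named in it is an assumption for illustration: the receipt fields, the `settle` function, and the demo key are hypothetical, not part of any published Fabric Protocol interface. It also uses an HMAC with a shared key as a stand-in for the signature, because that runs on the Python standard library alone; a real deployment would more plausibly use an asymmetric signature so verifiers never hold the robot's secret.

```python
import hashlib
import hmac
import json


def canonical_bytes(receipt: dict) -> bytes:
    # Serialize deterministically so signer and verifier hash the same bytes.
    return json.dumps(receipt, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_receipt(receipt: dict, key: bytes) -> str:
    # HMAC-SHA256 tag over the receipt (stand-in for a real digital signature).
    return hmac.new(key, canonical_bytes(receipt), hashlib.sha256).hexdigest()


def verify_receipt(receipt: dict, tag: str, key: bytes) -> bool:
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(sign_receipt(receipt, key), tag)


def settle(receipt: dict, tag: str, key: bytes) -> str:
    # Hypothetical settlement rule: pay on a valid receipt, otherwise ask a human.
    if verify_receipt(receipt, tag, key):
        return "release_payment"
    return "escalate_to_human_review"


# Illustrative values only.
robot_key = b"demo-key-not-for-production"
receipt = {
    "robot_id": "bot-17",
    "task": "deliver-package",
    "location": "door-4B",
    "timestamp": 1700000000,
}
tag = sign_receipt(receipt, robot_key)

# The same tag no longer matches once any field is altered.
tampered = dict(receipt, location="door-9Z")

print(settle(receipt, tag, robot_key))   # release_payment
print(settle(tampered, tag, robot_key))  # escalate_to_human_review
```

Note what the check does and does not establish: it shows the receipt was produced by the key holder and not altered afterward. It says nothing about whether the sensor reading behind it was honest, which is where attested hardware or human review would still come in.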

Money matters in this setup not as speculation theater but as coordination glue. A native token (commonly discussed as ROBO) becomes the common unit for staking, bonding, paying for tasks, and voting on protocol upgrades. Communities can pool tokens to buy and govern a fleet that serves a neighborhood; factories can stake tokens to guarantee quality for inspection microcontracts; a portion of service revenue can be used to buy tokens back, creating an economic loop between real work and on-chain value. How those tokens are distributed and governed will ultimately decide whether this model widens access to robotics or simply hands control to the first deep-pocketed players who show up.

Think of some everyday, low-glamour use cases to make this concrete. A co-op of small businesses could fund and manage sidewalk delivery robots: residents stake to prioritize deliveries, robots pay into a communal repair fund when they are used, and verified delivery proofs trigger payments automatically. A warehouse could run an on-chain auction for inspection tasks: robots bid, submit verified inspection traces when they finish, and get paid instantly, and their reputations update in a way future buyers can rely on. A municipal regulator might require environmental sensors to provide attested calibration proofs, making compliance audits cheaper while keeping control over which raw data is exposed.
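The warehouse example can be sketched as a small reverse auction. This is a toy model under stated assumptions, not the protocol's actual market design: the `Bid` type, the integer reputation scale of 0 to 100, the lowest-price-wins rule, and the fixed reputation step are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Bid:
    robot_id: str
    price: int       # asking price in token units (hypothetical)
    reputation: int  # 0 to 100, higher is better (hypothetical scale)


def pick_winner(bids: list[Bid], min_reputation: int) -> Optional[Bid]:
    # Filter out robots below the buyer's reputation floor, then take the
    # cheapest bid; ties on price go to the higher reputation.
    eligible = [b for b in bids if b.reputation >= min_reputation]
    if not eligible:
        return None
    return min(eligible, key=lambda b: (b.price, -b.reputation))


def settle_task(winner: Bid, proof_ok: bool, balances: dict, reputations: dict, step: int = 5) -> None:
    # Pay and raise reputation only when the inspection proof verifies;
    # otherwise withhold payment and lower reputation.
    current = reputations.get(winner.robot_id, winner.reputation)
    if proof_ok:
        balances[winner.robot_id] = balances.get(winner.robot_id, 0) + winner.price
        reputations[winner.robot_id] = min(100, current + step)
    else:
        reputations[winner.robot_id] = max(0, current - step)


# Illustrative bids only.
bids = [
    Bid("bot-a", price=30, reputation=50),  # cheapest, but below the floor
    Bid("bot-b", price=40, reputation=80),
    Bid("bot-c", price=55, reputation=95),
]
balances: dict = {}
reputations: dict = {}

winner = pick_winner(bids, min_reputation=70)
settle_task(winner, proof_ok=True, balances=balances, reputations=reputations)

print(winner.robot_id, balances, reputations)
```

The reputation floor is the design choice worth noticing: without it, the cheapest bidder always wins and the market rewards exactly the corner-cutting the later paragraphs warn about.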

But the promise comes with honest frictions. Protocols tend to reward what they can measure; if the system prizes speed and completed tasks, participants will optimize for those metrics, sometimes at the expense of softer but important qualities like empathy, privacy, or long-term care. Liability is messy: when a robot with a wallet causes harm, who ultimately compensates the injured party — the device owner, the operator, the token stakers, or the task poster? Legal frameworks haven’t caught up, and the temptation to treat on-chain identity as a way to offload responsibility is real. Auditability is a double-edged sword: the same logs that validate a payout could become a tool for pervasive surveillance unless selective-disclosure primitives and privacy rules are baked in from the start.

There are also political risks. Token and validator economics can concentrate power if early insiders capture governance levers or the supply of high-quality hardware. Conversely, if allocation and onboarding are designed to be inclusive, the model could democratize access to automation — turning robots into civic infrastructure rather than private monopolies. That divergence isn’t technical so much as institutional: the cryptography and markets enable options, but social choices steer which option becomes reality.

A few practical moves would make the system more humane. Prefer proofs that support selective disclosure so you can verify compliance without handing over raw sensor logs. Build standardized escrow and insurance primitives so settlements are automatic and predictable when validated incidents occur. Package governance templates for neighborhood co-ops and enterprise fleets so communities don’t need to reinvent legal engineering. And include oracle mechanisms that price local externalities (noise, congestion, privacy costs) so markets internalize social impacts instead of ignoring them.
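One simple way to get selective disclosure is to commit to each field of a record with its own salted hash, publish only the commitments, and later reveal a single field along with its salt. The sketch below illustrates that general technique; it is not a description of how Fabric Protocol implements it, and the record fields and function names are hypothetical. Stronger schemes, such as zero-knowledge proofs, can go further and prove a property of a value without revealing the value itself.

```python
import hashlib
import secrets


def commit_field(name: str, value: str, salt: bytes) -> str:
    # The random salt keeps low-entropy values (like a decibel reading)
    # from being guessed by brute force against the public commitment.
    return hashlib.sha256(salt + name.encode("utf-8") + b"=" + value.encode("utf-8")).hexdigest()


def commit_record(record: dict) -> tuple[dict, dict]:
    # Returns (public commitments, private salts), one of each per field.
    salts = {name: secrets.token_bytes(16) for name in record}
    commitments = {name: commit_field(name, str(value), salts[name]) for name, value in record.items()}
    return commitments, salts


def verify_disclosure(commitments: dict, name: str, value: str, salt: bytes) -> bool:
    # The verifier recomputes the hash for the one revealed field and
    # compares it with the commitment published earlier.
    return name in commitments and commitments[name] == commit_field(name, str(value), salt)


# Illustrative sensor record; the robot keeps the record and salts private.
record = {
    "calibration_date": "2024-05-01",
    "noise_db": "52",
    "camera_frame_hash": "abc123",
}
commitments, salts = commit_record(record)

# An auditor asks only about noise. The robot reveals that field and its salt;
# the other fields stay hidden behind their commitments.
print(verify_disclosure(commitments, "noise_db", "52", salts["noise_db"]))  # True
print(verify_disclosure(commitments, "noise_db", "40", salts["noise_db"]))  # False
```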

If you step back, the most interesting thing here is the shift in perspective: robots stop being only tools and start behaving like actors in an economy, with names, reputations, and the ability to exchange value. That opens paths for new business models, community services, and regulatory approaches, but it also forces us to reckon with questions about fairness, surveillance, and who gets to shape the rules. The outcome will hinge less on clever cryptography and more on whether the institutions around the protocol (the foundations, communities, standards, and regulators) steer it toward public benefit or toward rent extraction.

@Fabric Foundation #ROBO $ROBO