I tend to approach new infrastructure claims cautiously, especially when they intersect with robotics, distributed systems, and public ledgers. Over the past few years, I have seen many systems promise coordination, safety, and transparency, but relatively few address the practical constraints that emerge once real operators, regulators, and engineers begin interacting with them. What interests me about Fabric Protocol is not the promise of robots themselves, but the way the system frames the infrastructure around them.

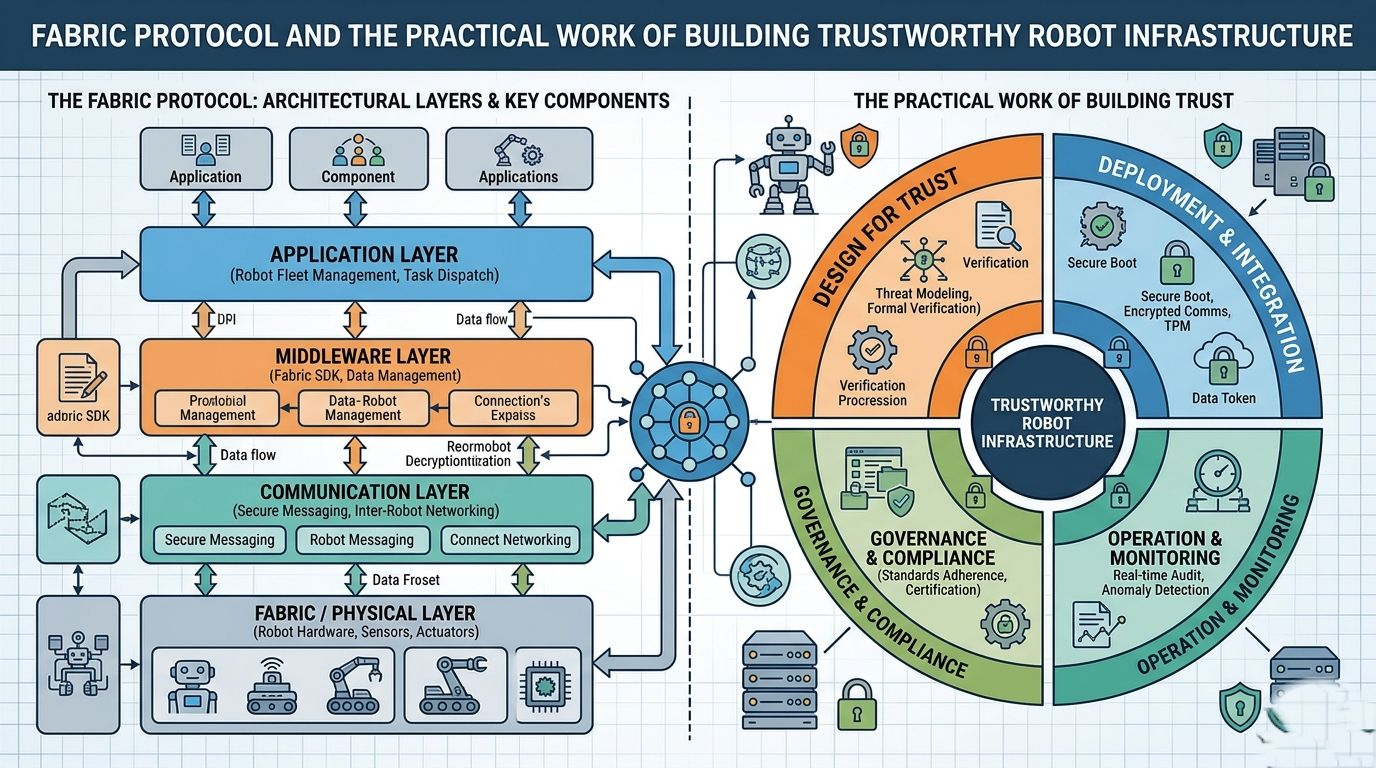

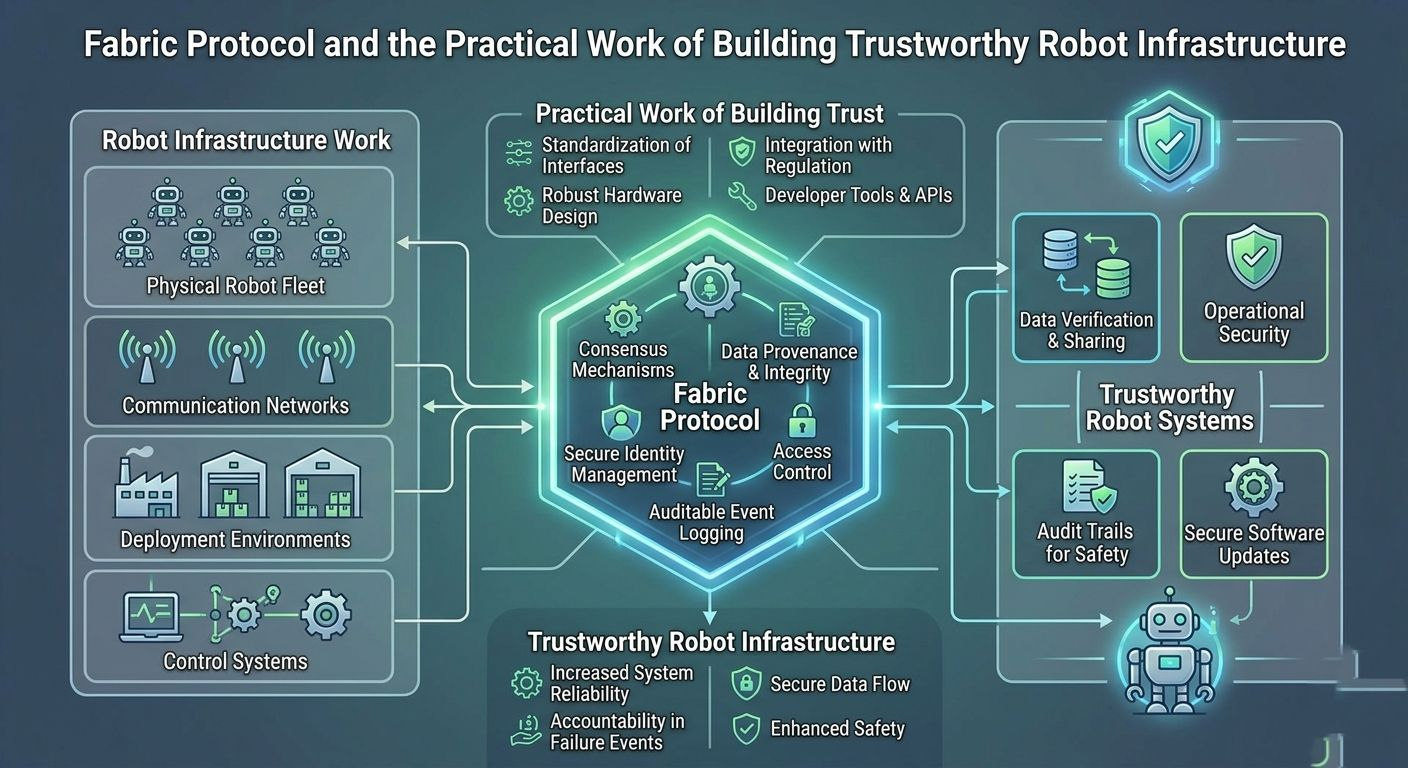

Fabric Protocol describes itself as a global open network supported by the non-profit Fabric Foundation. Its goal is to enable the construction, governance, and collaborative evolution of general-purpose robots through verifiable computing and what it calls agent-native infrastructure. The system coordinates data, computation, and regulation through a public ledger while combining modular components intended to support safe human-machine collaboration.

Rather than treating this description as evidence of a technological breakthrough, I find it more useful to examine the design philosophy it implies. Systems that aim to coordinate robots in real environments inevitably encounter constraints that are far less glamorous than anything shown in a demonstration. Questions about compliance, auditability, operational stability, and operator trust usually become the defining challenges.

Infrastructure Before Capability

One of the first things that stands out in the description of Fabric Protocol is that it does not frame robotics primarily as a problem of capability. Instead, it frames the problem as one of coordination and infrastructure. Robots already exist. Software agents already exist. What tends to break down is the environment that allows multiple participants—developers, operators, regulators, and users—to interact with those systems in a predictable way.

From that perspective, the emphasis on a public ledger appears less about decentralization as an ideology and more about creating a shared record of activity. When systems operate across organizational boundaries, the difficulty is not simply executing computation but proving that it occurred under the expected conditions. A ledger can serve as a reference point for verification, auditing, and dispute resolution.

In practice, this kind of design tends to matter most in situations where no single party controls the entire system. If robots are being constructed, governed, and evolved collaboratively, participants need a way to observe and verify what has happened without relying exclusively on internal logs or private infrastructure.
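
To make the idea of a shared, checkable record concrete, here is a minimal sketch of a hash-chained log in Python. It is not Fabric Protocol's ledger; the description does not specify one. The `SharedLog` class, the field names, and the sample payloads are all hypothetical. The only point it illustrates is that each entry commits to the one before it, so any participant holding a copy can detect a rewritten history.

```python
import hashlib
import json


def entry_hash(prev_hash, payload):
    """Hash an entry together with the hash of the entry before it."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class SharedLog:
    """Hypothetical append-only record; each entry commits to its predecessor."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []  # list of (payload, digest) pairs

    def append(self, payload):
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        digest = entry_hash(prev, payload)
        self.entries.append((payload, digest))
        return digest

    def verify(self):
        """Recompute every hash; an edit to any stored entry breaks the chain."""
        prev = self.GENESIS
        for payload, digest in self.entries:
            if entry_hash(prev, payload) != digest:
                return False
            prev = digest
        return True


log = SharedLog()
log.append({"actor": "operator-a", "action": "firmware_update", "robot": "r-17"})
log.append({"actor": "auditor-b", "action": "inspection", "robot": "r-17"})
```

Calling `log.verify()` re-derives the chain from the stored payloads, so an auditor does not have to trust the party that wrote the entries, only the hash function. A real public ledger adds consensus and replication on top of this, which the sketch leaves out.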

Verifiable Computing as an Operational Constraint

The concept of verifiable computing also suggests a particular mindset about system design. When computation must be verifiable, engineers are forced to think about determinism, traceability, and reproducibility. These concerns often shape the architecture more than performance benchmarks or demonstration scenarios.

In regulated environments, verification is rarely optional. Auditors and compliance teams tend to ask questions that ordinary software design does not always prioritize:

Can an action be traced back to its origin?

Can a decision process be reconstructed after the fact?

Can multiple parties independently confirm that a system behaved as expected?

A protocol that builds verification into its infrastructure is implicitly acknowledging that robotics will increasingly operate in environments where these questions must be answered consistently.
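
The three questions above can be sketched in a few lines, assuming the simplest possible form of verification: a second party re-runs a deterministic decision and compares digests. Verifiable computing systems typically rely on cryptographic proofs or attested hardware rather than plain re-execution, and the description does not say which approach Fabric Protocol takes, so treat this as an illustration of the mindset only. The `decide` rule, the record fields, and the sensor names are hypothetical.

```python
import hashlib
import json


def digest(value):
    """Stable SHA-256 digest of a JSON-serializable value."""
    encoded = json.dumps(value, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(encoded.encode("utf-8")).hexdigest()


def decide(inputs):
    """Hypothetical deterministic decision: stop if an obstacle is too close."""
    stop = inputs["obstacle_distance_m"] < inputs["min_clearance_m"]
    return {"command": "stop" if stop else "proceed"}


def make_record(origin, inputs):
    """What the acting party publishes: origin, inputs, and an output digest."""
    return {
        "origin": origin,  # traceability: who or what acted
        "inputs": inputs,  # reconstruction: what the decision was based on
        "output_digest": digest(decide(inputs)),
    }


def independently_verify(record):
    """A second party re-runs the same decision and compares digests."""
    return digest(decide(record["inputs"])) == record["output_digest"]


record = make_record("robot-r17", {"obstacle_distance_m": 0.4, "min_clearance_m": 0.5})
```

The sketch only works because `decide` is deterministic and its inputs are recorded in full. That is the architectural pressure described above: any hidden state or nondeterminism in the decision path makes independent confirmation impossible.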

What is notable here is not the presence of verification alone, but the idea that it is part of the core protocol rather than an external reporting tool. Systems that treat verification as a separate layer often struggle as operational complexity grows, because the reporting layer can drift out of step with what the system actually did.

Agent-Native Infrastructure

The phrase “agent-native infrastructure” suggests that the protocol is designed with autonomous or semi-autonomous software actors in mind. Traditional infrastructure often assumes that humans are the primary operators and decision-makers. Agent-oriented systems reverse that assumption.

When agents participate directly in infrastructure, several practical concerns emerge. Systems must provide predictable interfaces, stable APIs, and clear operational boundaries. Automated actors cannot rely on informal interpretation or manual correction in the same way human operators can.

From an engineering standpoint, this places pressure on the underlying tooling. Monitoring systems must surface meaningful signals rather than ambiguous alerts. Default configurations must behave safely without requiring constant adjustment. APIs must be stable enough that automated processes do not fail unpredictably when interfaces change.
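
As one small example of what this pressure looks like in code, the sketch below shows an automated agent checking an interface version before acting, and defaulting to a safe action when the check fails. It assumes semantic versioning, where a change in the major number signals a breaking change. Nothing in the description says Fabric Protocol versions its interfaces this way; the function names and the `halt_and_alert` action are hypothetical.

```python
def parse_version(text):
    """Split a 'major.minor.patch' string into a tuple of integers."""
    major, minor, patch = (int(part) for part in text.split("."))
    return (major, minor, patch)


def is_compatible(required, offered):
    """Same major version, and the offered minor.patch is at least the required one."""
    req = parse_version(required)
    off = parse_version(offered)
    return off[0] == req[0] and off[1:] >= req[1:]


def choose_action(required, offered):
    """Safe default: an agent that cannot confirm compatibility does not act."""
    return "proceed" if is_compatible(required, offered) else "halt_and_alert"
```

A human operator might notice a changed interface and adapt. An agent cannot, so the check and the safe fallback have to be explicit.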

These details rarely attract attention in high-level descriptions, but they tend to determine whether systems remain reliable once deployed.

The Role of Modular Infrastructure

Fabric Protocol describes its infrastructure as modular. Modularity often reflects an attempt to manage complexity rather than to maximize flexibility for its own sake.

In systems that combine robotics, data coordination, and public ledgers, the risk of tightly coupled components grows quickly. A modular design allows individual parts of the system—such as data coordination mechanisms, computational components, or governance structures—to evolve independently.

From an operational perspective, modularity can also improve fault isolation. When one component experiences instability, it becomes easier to identify the source of the issue and limit the impact on other parts of the system.
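
A minimal sketch of fault isolation, assuming each module exposes a single callable step: the runner records a failure against the module that raised it and lets the others continue. The module names are hypothetical and loosely mirror the components mentioned above; they are not taken from any Fabric Protocol specification.

```python
def run_isolated(modules):
    """Run each module's step; record failures instead of letting them propagate."""
    report = {}
    for name, step in modules.items():
        try:
            report[name] = {"status": "ok", "result": step()}
        except Exception as exc:  # contain the fault to this module
            report[name] = {"status": "failed", "error": repr(exc)}
    return report


def failing_ledger_sync():
    """Stand-in for a module that is currently unstable."""
    raise TimeoutError("ledger endpoint unreachable")


report = run_isolated({
    "data_coordination": lambda: "synced 12 records",
    "ledger_sync": failing_ledger_sync,
    "governance": lambda: "no pending proposals",
})
```

The resulting report names the failing module directly, which is the practical benefit: the operator sees where the instability is, and the other two modules still completed their work.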

For operators responsible for uptime and reliability, this separation is more than an architectural preference. It becomes a practical requirement when infrastructure must remain stable while individual components evolve.

Governance as a System Layer

Another aspect of the description that deserves attention is the inclusion of governance as part of the protocol itself. In many technical systems, governance emerges informally through social processes or external organizations. When governance is treated as an explicit component of infrastructure, it changes how decisions and accountability are handled.

In the context of robotics, governance often intersects with regulatory expectations and safety requirements. Decisions about how robots operate, how updates are introduced, and how responsibility is assigned cannot remain purely technical questions.

By coordinating governance through the same infrastructure that manages data and computation, the protocol suggests a unified framework for operational oversight. Whether this approach simplifies or complicates operations in practice would depend on implementation details, but the architectural intention is clear: governance is not an afterthought.

Privacy and Transparency

Any system that uses a public ledger must navigate the tension between transparency and privacy. Transparency can improve accountability and trust, but excessive exposure of operational data can create security or compliance concerns.

The description of Fabric Protocol indicates that coordination occurs through a public ledger but does not elaborate on the exact mechanisms used to balance these competing priorities. What can be inferred, however, is that the protocol views transparency as a structural element rather than an optional reporting feature.

For organizations operating in regulated environments, the challenge is rarely deciding whether transparency is desirable. Instead, it is determining how transparency can coexist with privacy obligations and operational security.
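
One common pattern for letting the two coexist is to publish only a salted hash of sensitive data and disclose the data itself to an authorized auditor later. The sketch below illustrates that pattern under my own assumptions; the description does not say whether Fabric Protocol uses commitments, and the function names and sample log line are hypothetical.

```python
import hashlib
import hmac
import secrets


def commit(data):
    """Return (public commitment, private salt) for some private bytes."""
    salt = secrets.token_bytes(32)  # random salt prevents guessing the data from the hash
    commitment = hashlib.sha256(salt + data).hexdigest()
    return commitment, salt


def verify_disclosure(commitment, salt, data):
    """An auditor checks that disclosed data matches the public commitment."""
    expected = hashlib.sha256(salt + data).hexdigest()
    return hmac.compare_digest(expected, commitment)


private_log = b"robot r-17 entered restricted zone at 14:02"
public_commitment, private_salt = commit(private_log)
```

Only `public_commitment` would appear on the shared record. The operator keeps `private_log` and `private_salt`, and can later prove to an auditor what was recorded without having exposed it to everyone else in the meantime.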

Operational Stability and Predictability

Infrastructure systems are often judged less by their theoretical capabilities and more by their predictability under pressure. When systems fail during routine conditions, operators can usually recover. When systems behave unpredictably during stress events, trust erodes quickly.

The design choices described in Fabric Protocol—verifiable computation, modular infrastructure, and ledger-based coordination—suggest an attempt to prioritize predictability. Systems that emphasize verification and shared records tend to make state transitions more observable and traceable.

This does not guarantee stability, but it does provide operators with tools for understanding system behavior when unexpected events occur.

Developer Ergonomics and Tooling

Another practical factor that shapes infrastructure adoption is developer ergonomics. Systems that require excessive manual coordination or unclear integration patterns tend to remain experimental rather than operational.

While the description does not provide details about developer tooling, the concept of agent-native infrastructure implies that APIs and system interfaces must support automated interaction. This typically requires clear documentation, predictable defaults, and monitoring capabilities that expose meaningful operational data.

For developers, the difference between a theoretical protocol and a usable system often lies in these details.

Trust Through Structure

The broader theme that emerges from Fabric Protocol’s description is that trust is being approached structurally rather than rhetorically. Instead of relying on assurances about safety or collaboration, the system attempts to embed verification, coordination, and governance directly into its infrastructure.

In environments where robotics interacts with human operators, institutions, and regulatory frameworks, this structural approach may prove more sustainable than purely capability-focused designs.

Robots may capture public attention, but infrastructure tends to determine whether those systems remain reliable, auditable, and trustworthy over time.

Concluding Observations

When I look at Fabric Protocol through this lens, what stands out is not an ambitious promise about robotics but a relatively grounded attempt to define the environment in which such systems might operate responsibly.

The emphasis on verifiable computing, shared coordination through a ledger, modular infrastructure, and integrated governance reflects a recognition that robotics will increasingly operate within institutional and regulatory contexts.

If the system succeeds, it will likely be because it addresses the mundane but essential details that infrastructure operators care about: traceability, reliability, observability, and predictable interfaces.

These qualities rarely generate headlines. But they are often what allow complex systems to survive scrutiny and continue functioning long after initial excitement fades.
