What caught my attention is a simple question: if general-purpose robots are going to work across many environments, make decisions under uncertainty, and rely on skills contributed by many different people, why should that future be organized by one company instead of an open network? I keep returning to that because robotics seems to be reaching a point where control matters as much as capability. A robot is not just software on a screen. It can move through the physical world, interact with property, affect safety, and shape labor. Once that becomes true, the coordination model becomes part of the product itself.

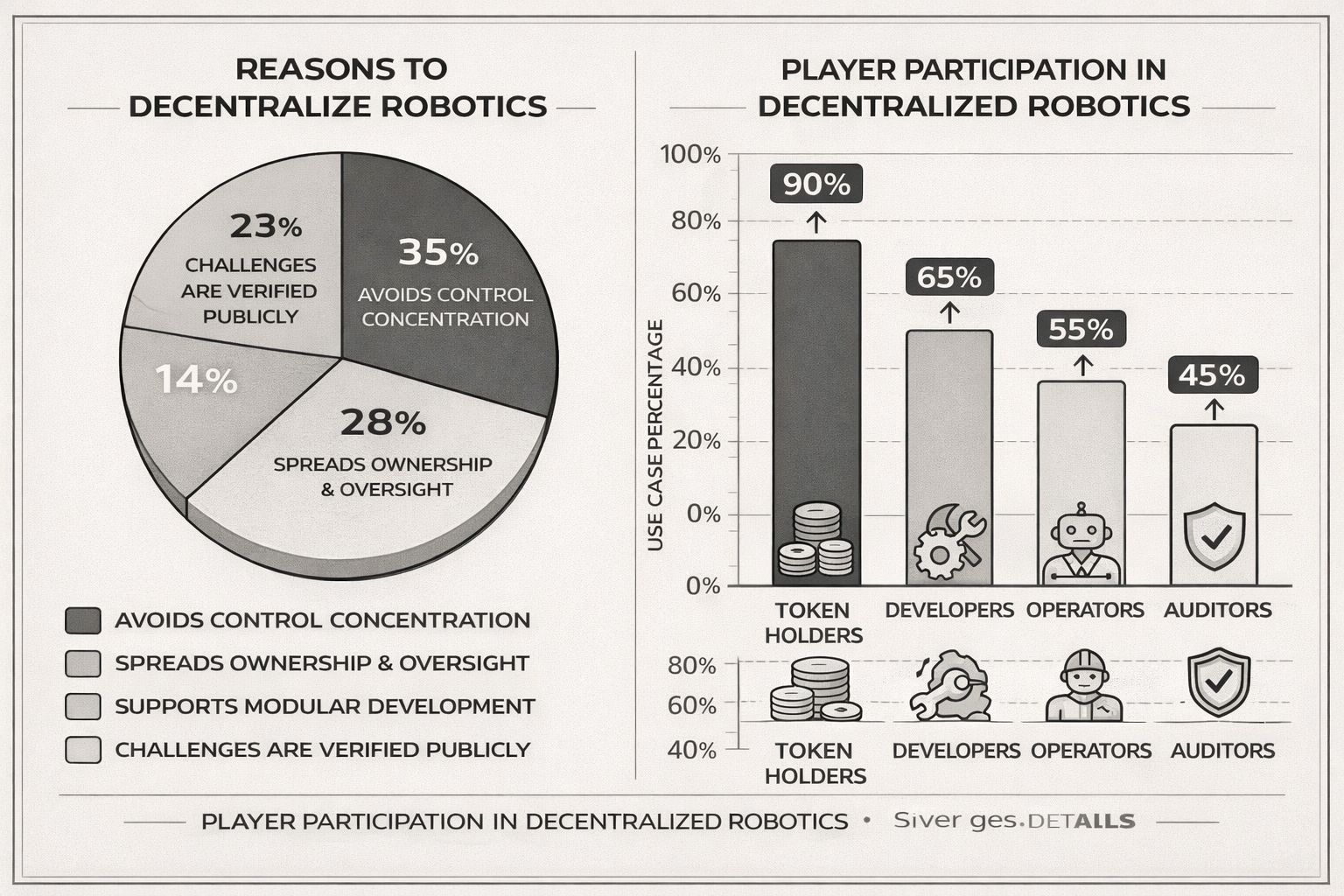

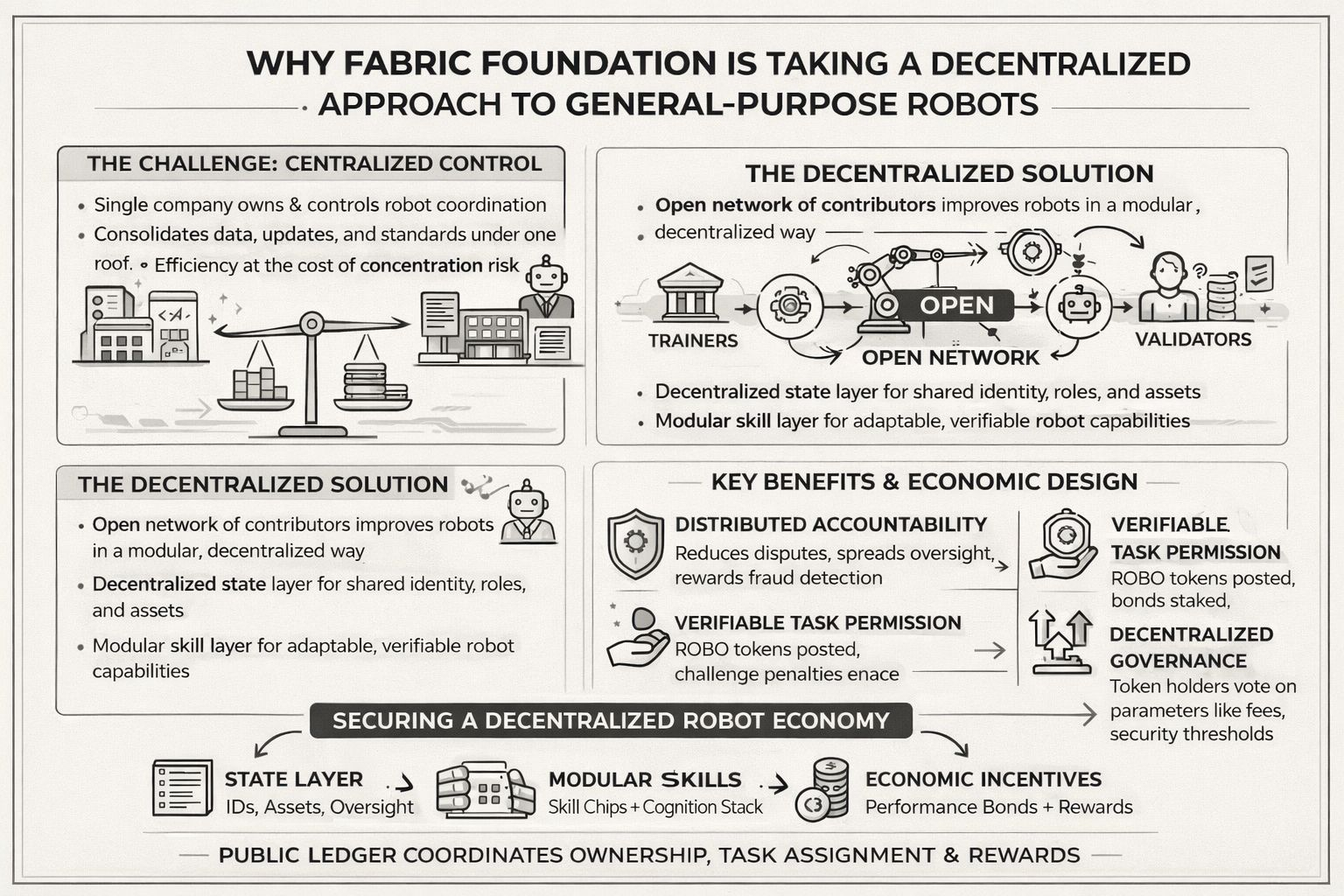

My view is that the real friction is not only building a capable machine. It is deciding who gets to train it, update it, verify it, profit from it, and challenge it when something goes wrong. In closed systems, those rights usually collapse into one stack: one operator owns the data, ships the model, sets the rules, defines acceptable behavior, and captures most of the upside. That may look efficient at first, but it also creates a concentration problem. If robots become useful across transport, logistics, services, and domestic work, then closed ownership could turn a broad technological shift into a narrow control layer. The case for decentralization here feels less ideological than structural. It is about spreading oversight, contribution, and accountability across a wider system.

To me, a closed robot stack looks like building the roads, writing the traffic laws, issuing the licenses, operating the taxis, and judging the accidents under one roof.

That is why Fabric Foundation seems to start from coordination rather than from a single finished machine. The whitepaper frames the system as a decentralized way to build, govern, own, and evolve a general-purpose robot, with public ledgers coordinating computation, ownership, and oversight. It also leans into modularity instead of one opaque intelligence block. The robot is described as an AI-first cognition stack made of many function-specific modules, with skill chips that can be added or removed more like apps than permanent firmware. That matters to me because decentralization becomes much more practical when capability is broken into understandable pieces. Different contributors can improve skills, data, validation, and operations without needing total control over the whole machine.
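To make the "more like apps than permanent firmware" idea concrete for myself, here is a minimal sketch of a skill registry. Everything in it is my own illustration: `SkillRegistry`, `install`, `remove`, and `run` are hypothetical names, not anything taken from the whitepaper, and a real skill chip would involve far more than a Python callable.

```python
from typing import Callable, Dict, List

# A "skill" here is just a function from a request string to a result string.
# This is an illustrative stand-in, not the protocol's actual skill chip format.
Skill = Callable[[str], str]


class SkillRegistry:
    """Hypothetical registry where skills can be added and removed at runtime."""

    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def install(self, name: str, skill: Skill) -> None:
        # Refuse to silently overwrite an existing skill.
        if name in self._skills:
            raise ValueError(f"skill already installed: {name}")
        self._skills[name] = skill

    def remove(self, name: str) -> None:
        # Raises KeyError if the skill was never installed.
        del self._skills[name]

    def run(self, name: str, request: str) -> str:
        if name not in self._skills:
            raise LookupError(f"no such skill: {name}")
        return self._skills[name](request)

    def installed(self) -> List[str]:
        return sorted(self._skills)
```

The point of the sketch is only that each capability sits behind a small, named interface, so one contributor can swap or improve a skill without touching the rest of the stack.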

The deeper logic, as I read it, is that general-purpose robotics is simply too broad to scale well as a sealed product. The network is meant to support multiple robot form factors, interact with different hardware platforms, and leave room for open-source alternatives in the stack where possible. That tells me the decentralized approach is not just about token mechanics. It is also about avoiding a bottleneck where one vendor decides which bodies, drivers, and capabilities count. A general-purpose machine economy likely needs an open state layer for identity and trust, a modular model layer for skills, and an execution environment where new contributors can plug in without asking a central gatekeeper for permission each time.

The state model is important here because it creates a shared record of identities, responsibilities, assets, and task relationships across the chain. In a robotics economy, that matters more than people sometimes admit. Machines, operators, developers, and validators all need legible roles if the system is going to coordinate physical work rather than just digital messages. The model layer then separates functions into modular capabilities so the intelligence stack can evolve without forcing every improvement into one closed package. Consensus is not only about transaction ordering in this design. It also helps determine which participants are selected, trusted, and economically exposed when work is assigned and verified. Then the cryptographic flow ties actions to proofs, attestations, and challenge procedures so claims do not rest only on reputation.

The economic design is where the argument becomes more concrete. Instead of treating the token as a passive claim, the protocol ties it to work, settlement, and responsibility. Operators post refundable performance bonds in ROBO to register hardware and provide services, with parts of those reserves allocated as collateral for specific tasks. Selection for work is influenced by bond weight and holding duration, and those reserves can be slashed for misconduct, spam, downtime, or fraud. Fees for compute, data exchange, and API activity are settled in the native asset even when tasks are quoted in more stable units for predictability. That pricing detail stands out to me because it feels practical rather than decorative. Prices can be quoted in units that are easier for users to reason about, while settlement and accountability still remain inside the chain’s own economy.
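Here is a rough sketch of how selection influenced by bond weight and holding duration could work. The weighting formula is purely my assumption for illustration: I scale the bond by a duration bonus that grows linearly up to 2x at a one-year cap. The names `Operator`, `selection_weight`, and `pick_operator` are hypothetical, and the whitepaper's actual rule may look quite different.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Operator:
    name: str
    bond: float        # ROBO posted as a refundable performance bond
    days_bonded: int   # how long that bond has been held


def selection_weight(op: Operator, duration_cap_days: int = 365) -> float:
    """Assumed rule: bond times a duration bonus between 1.0 and 2.0."""
    capped_days = min(op.days_bonded, duration_cap_days)
    duration_bonus = 1.0 + capped_days / duration_cap_days
    return op.bond * duration_bonus


def pick_operator(operators: List[Operator], rng: random.Random) -> Operator:
    """Weighted random choice: higher weight means a higher chance of selection."""
    weights = [selection_weight(op) for op in operators]
    return rng.choices(operators, weights=weights, k=1)[0]
```

Under this assumed rule, two operators with the same bond are not treated equally: the one who has held the bond for a full year carries twice the weight of one who bonded today, which rewards commitment without guaranteeing work to anyone.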

I also think the protocol is trying to solve a harder robotics problem than people usually admit: physical work often cannot be proven as neatly as digital computation. A robot task in the real world is only partially observable, so the answer is not perfect proof but a mix of challenge-based verification and penalty economics. Validators monitor quality and availability, investigate disputes, and receive compensation from fees and from successful fraud detection. If bad behavior is proven, part of the task stake can be slashed, with the slashed amount split between a truth reward and a burn, and the operator has to re-bond before returning. That feels like an important reason to decentralize this kind of system. When machines affect the real world, trust should not depend on “believe the operator.” It should depend on a structure where dishonest behavior becomes economically irrational.
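The slashing arithmetic is easy to sketch. The fractions below are placeholders I picked for illustration, not protocol parameters, and `slash_task_stake` and `SlashResult` are hypothetical names. The sketch only assumes what is described above: part of the task stake is slashed, the slashed amount is divided between a truth reward and a burn, and the operator must re-bond afterward.

```python
from dataclasses import dataclass


@dataclass
class SlashResult:
    slashed: float          # amount removed from the operator's task stake
    truth_reward: float     # portion of the slashed amount paid out as a truth reward
    burned: float           # portion of the slashed amount that is burned
    remaining_stake: float  # what is left of the task stake
    must_rebond: bool       # operator has to re-bond before returning


def slash_task_stake(
    task_stake: float,
    slash_fraction: float = 0.5,  # assumed value, for illustration only
    reward_share: float = 0.5,    # assumed value, for illustration only
) -> SlashResult:
    if task_stake < 0:
        raise ValueError("task_stake must be non-negative")
    if not (0.0 <= slash_fraction <= 1.0 and 0.0 <= reward_share <= 1.0):
        raise ValueError("fractions must be between 0 and 1")
    slashed = task_stake * slash_fraction
    truth_reward = slashed * reward_share
    burned = slashed - truth_reward
    return SlashResult(slashed, truth_reward, burned, task_stake - slashed, True)
```

With these placeholder values, a proven fraud on a 100 ROBO task stake would remove 50, pay 25 as a truth reward, and burn 25. The burn matters in this design because it means the penalty is not simply a transfer that colluding parties could recycle between themselves.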

Governance fits into that same logic. Holders can lock tokens to obtain veROBO for signaling around operational parameters such as fees, verification thresholds, quality controls, and upgrades. I read that as a narrower and more useful role than vague community governance. The point is not that everyone should micromanage a robot. The point is that the rules around access, validation, and protocol evolution do not remain trapped inside one company dashboard. In a system meant to coordinate developers, operators, validators, users, and machines, procedural governance is part of how decentralization becomes durable rather than symbolic.
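To picture how lock-based signaling might be weighted, here is a small sketch. I am borrowing the linear formula common to other vote-escrow designs, where weight scales with lock length up to a maximum; I do not know that veROBO works this way. The four-year maximum, `ve_balance`, and `tally` are all my own assumptions.

```python
from typing import Dict, Iterable, Tuple


def ve_balance(locked: float, lock_days: int, max_lock_days: int = 1460) -> float:
    """Assumed rule: signaling weight grows linearly with lock length, capped at the maximum."""
    if locked < 0 or lock_days < 0:
        raise ValueError("locked amount and lock_days must be non-negative")
    return locked * min(lock_days, max_lock_days) / max_lock_days


def tally(signals: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Sum signaling weight per option, e.g. for a fee or threshold parameter."""
    totals: Dict[str, float] = {}
    for option, weight in signals:
        totals[option] = totals.get(option, 0.0) + weight
    return totals
```

Under this assumed formula, locking 1,000 tokens for two years out of a four-year maximum would yield a weight of 500, so influence over operational parameters tracks how long a holder is willing to stay exposed to the outcome.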

My honest limit is that this approach still depends on execution quality, not just clean theory. Open coordination can reduce concentration, but it can also become slow, messy, and difficult to standardize across real hardware. Modular skills are attractive, yet safety, latency, and interoperability remain unforgiving in robotics. So my conclusion is measured: the decentralized approach makes sense here because the challenge is bigger than building one smart machine. It is about building a public coordination layer for machines that people can inspect, challenge, and improve. If general-purpose robots do become infrastructure, would a closed model really be the safer place to start?