Verification was never the problem.

What happens after verification is.

I spent some real time inside SIGN today. Not just clicking through features or skimming a demo, but actually watching how the system behaves when you push it. And what caught my attention wasn't the speed, the interface, or the usual pitch about decentralization. It was something that took me a little longer to articulate.

SIGN doesn't treat verification as a process. It treats it as an economic layer.

That one shift changes a lot.

Most systems handle credentials the same way. You complete something, you get proof, and that proof just... sits there. Stored in a wallet, shown on a profile, occasionally screenshotted for LinkedIn. Rarely put to actual use.

SIGN behaves differently.

Verification here isn't the finish line. It's what starts the next thing.

I actually made a small mistake during one of my verification steps while testing. The system caught it immediately: no waiting, no manual review, no back-and-forth. I fixed it in seconds and kept moving. That small moment told me something: this system wasn't built assuming everything goes smoothly. It was built for how people actually behave. Messy, imperfect, trying again.

Most platforms are optimized for ideal conditions.

SIGN is built for real ones.

But the more interesting part kicks in after verification completes.

Every verified credential becomes programmable. Not just something you can reference — something that can trigger an outcome. Rewards, access, recognition — all of it can connect directly to verified actions without needing another layer of trust sitting in between.
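To make "programmable" concrete, here is a minimal Python sketch of the idea as I understand it. This is not SIGN's API. The names `Credential`, `OutcomeRegistry`, `on`, and `process` are hypothetical, made up for illustration, and the "outcomes" are plain strings standing in for real rewards or access grants.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Credential:
    """Hypothetical stand-in for a credential; not SIGN's data model."""
    holder: str
    kind: str
    verified: bool


# A handler turns a verified credential into some outcome (here, a string).
Handler = Callable[[Credential], str]


class OutcomeRegistry:
    """Maps a credential kind to the outcomes it should trigger."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = {}

    def on(self, kind: str, handler: Handler) -> None:
        # Register an outcome to fire whenever this kind of credential is verified.
        self._handlers.setdefault(kind, []).append(handler)

    def process(self, credential: Credential) -> List[str]:
        # Unverified credentials trigger nothing; proof is the only gate.
        if not credential.verified:
            return []
        return [handler(credential) for handler in self._handlers.get(credential.kind, [])]


registry = OutcomeRegistry()
registry.on("course_completed", lambda c: f"reward:{c.holder}")
registry.on("course_completed", lambda c: f"access:{c.holder}")

outcomes = registry.process(Credential("alice", "course_completed", True))
print(outcomes)
```

The point of the sketch is the shape: the outcome hangs directly off the verified credential, with no separate approval step between the two.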

Proof that doesn't lead anywhere is just storage.

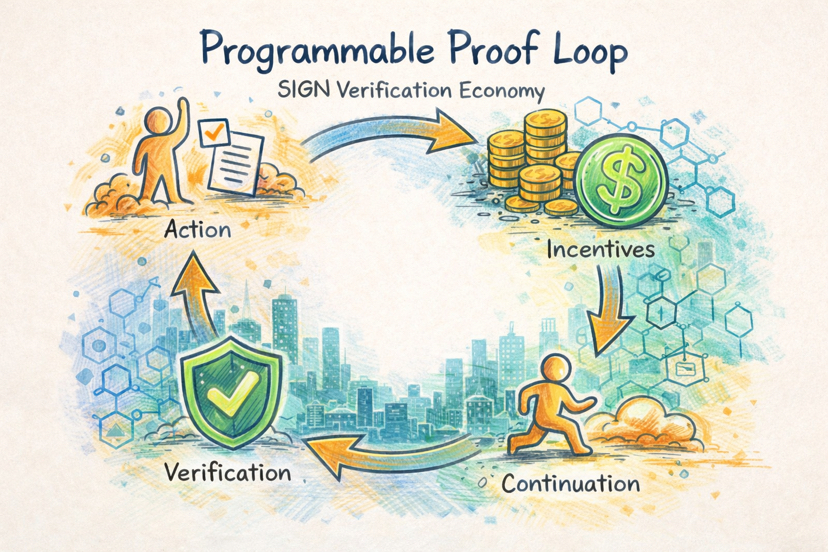

The loop SIGN creates looks like this:

Action → Verification → Incentive → Continuation

In most ecosystems, that loop is broken somewhere in the middle. Verification drags. Rewards take time. By the time anything happens, the momentum is already gone.

SIGN compresses that whole cycle. And that compression is where it starts to feel genuinely useful, not just technically interesting.

In education, this could close the gap between finishing something and being recognized for it. In freelancing, verified work becomes portable proof of quality, something that carries meaning even with people who've never worked with you before. In communities, contribution stops being a popularity contest. Verified actions create a record that's transparent and directly tied to real incentives.

What I also noticed is how SIGN handles the decentralization angle.

It doesn't lead with it. Doesn't require you to understand it before the product makes sense. The experience feels usable first; the decentralization is underneath, doing its job quietly. That design choice matters more than people give it credit for. Adoption doesn't come from ideology. It comes from things that just work.

The incentive model is worth noting too. During testing, the rewards were small. Deliberately so, it seems. Not structured to generate excitement, not inflated to attract attention. And honestly, that's probably the right call. Big rewards create noise. Small, consistent rewards shape habits. And habits are what actually build lasting systems.

The honest challenge, though, is scale.

Verification systems tend to hold up fine in controlled conditions. The real test comes when volume increases, inputs get messier, and people start looking for edges to exploit. That's where most of these models start showing cracks.

Whether SIGN can maintain integrity under that kind of pressure is the real open question.

Because if it can, it's not just a better way to verify credentials.

It's a fundamentally different way of assigning value to proof.

That introduces something new to the crypto economy: a layer where actions aren't just recorded, but continuously converted into outcomes that actually mean something.

The question isn't whether SIGN works.

It's whether anything else will keep up once proof becomes programmable.