There’s a line from Pixels that stuck with me, and it wasn’t even the main selling point. It was the quieter idea underneath it: that a studio can look at player behavior, decide what experiment to run, and actually execute that decision inside the same system.

On paper, that sounds efficient. And it is. But the more I think about it, the more it feels like something bigger is happening.

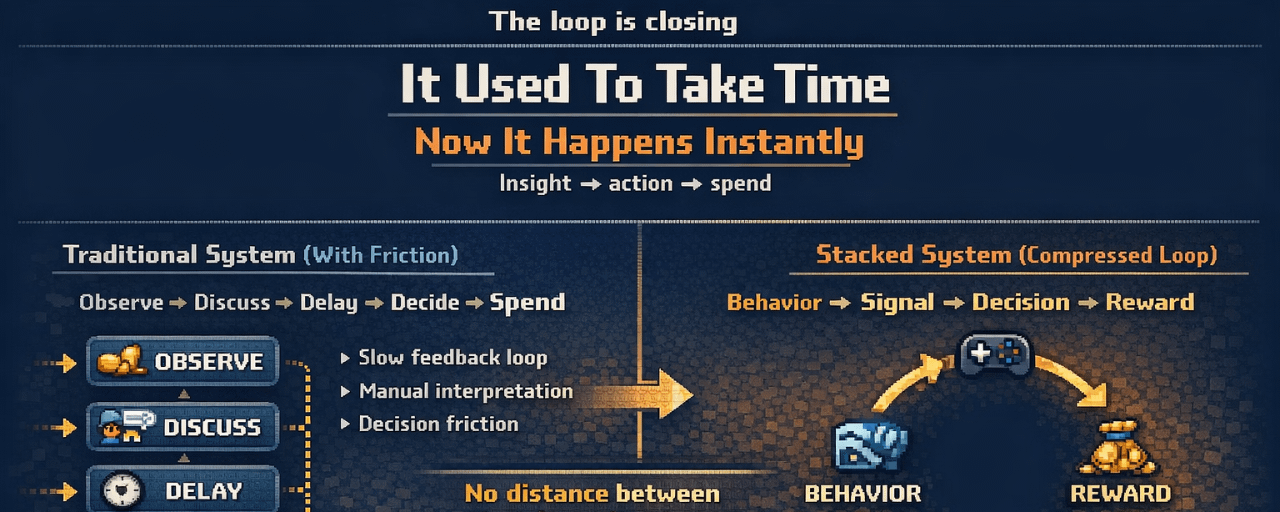

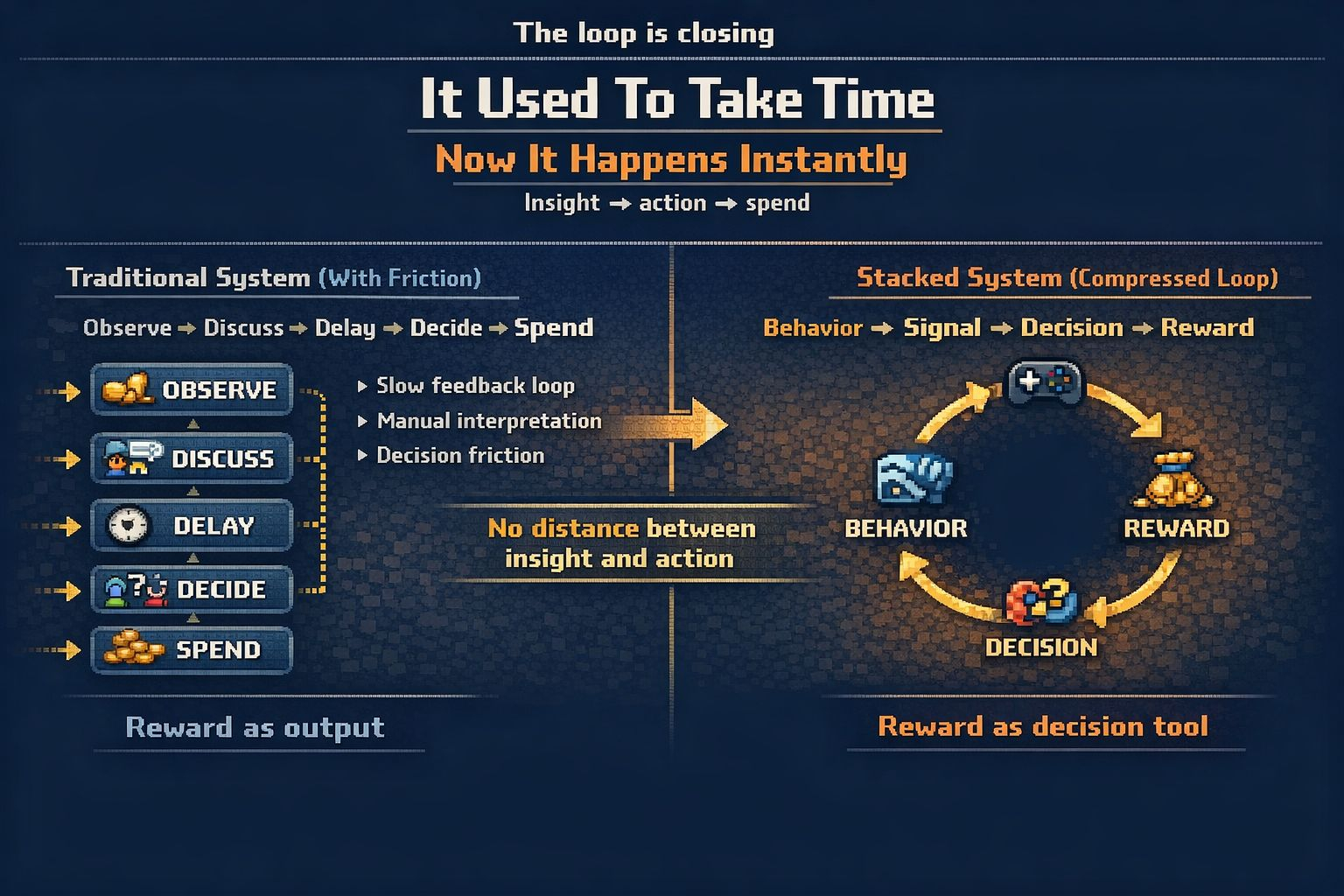

Before, there was usually a gap between noticing a problem and doing something about it. A team would see retention drop, argue about what it meant, wait for more data, maybe test something slowly. That delay was frustrating, but it also acted like a filter. It forced a bit of hesitation before money was spent.

With Stacked, that gap feels much smaller.

And I don’t think the main story here is just “better rewards” or “more efficient campaigns.” It feels more like Pixels is building a tighter loop between seeing behavior, interpreting it, and acting on it. That’s a different kind of system, one where rewards are not just outputs but part of the decision-making process itself.

That’s where it gets interesting, and a bit uncomfortable.

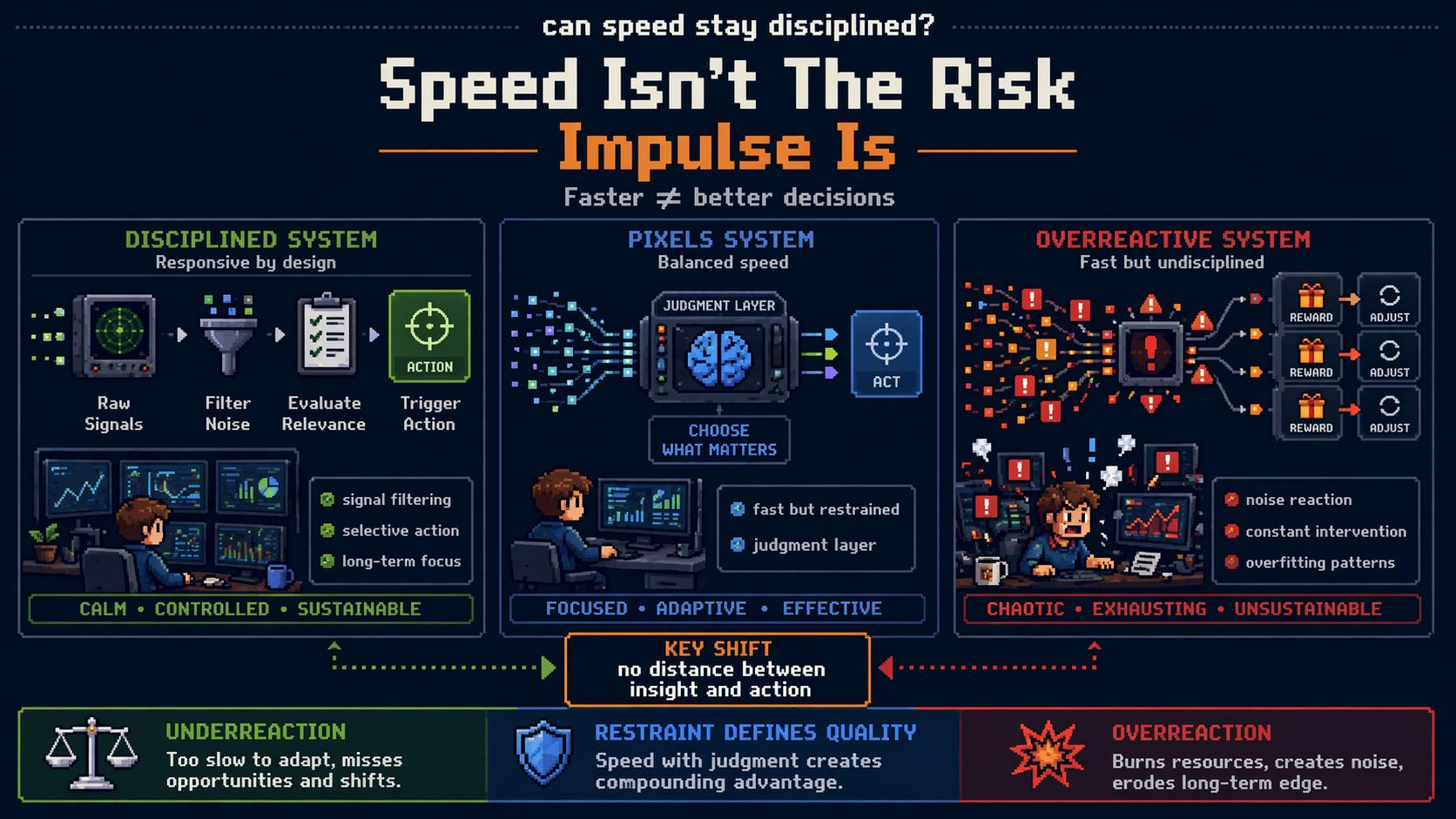

Because faster loops are appealing. If you can spot a drop-off in a specific player cohort, test an intervention quickly, and measure whether it improves retention or revenue, it sounds like a clear upgrade over how things used to work. Studios have wasted a lot of time and money on slow, disconnected tools.

But when that loop becomes too tight, something else happens.

The distance between “we noticed something” and “we paid to fix it” starts to disappear. And when that happens, it becomes easier to react to signals before fully understanding them. A pattern shows up, it looks clean, it feels actionable, and suddenly it’s already being tested.

That’s the part I keep thinking about with Pixels.

A system like this doesn’t just make studios more responsive; it can also make them more reactive. And those two things aren’t the same: one improves decision-making, the other can distort it.

It’s not really a criticism of Stacked itself. If anything, it means the tool is doing what it’s supposed to do: reducing friction. But that old friction, as inefficient as it was, sometimes forced teams to slow down and question what they were seeing. Not every drop is a real problem. Not every pattern needs intervention.

What makes Pixels more interesting here is that this isn’t coming from a team that only understands rewards in theory. It’s built out of a live game environment, where things like churn, farming behavior, and economy balance have already been tested under pressure. That doesn’t remove the risk, but it probably changes how they approach it.

Still, I think the real challenge is not speed. It’s restraint.

If the system gets very good at detecting and acting, then the value shifts to knowing when not to act. When to ignore a signal. When to let behavior play out instead of trying to correct it immediately. That kind of judgment doesn’t come from the system itself, at least not entirely.

And that’s also where PIXEL starts to feel a bit different to me. If more of these decisions, these timed interventions, these reward experiments are flowing through the same infrastructure, then the token isn’t just sitting at the edge of the system. It’s closer to the moment where decisions are made.

Not just distribution, but intention.

I’m still not sure how that evolves across more games or more studios, but it feels like a meaningful shift. The question isn’t just whether Pixels can make LiveOps more active. It’s whether it can make it faster without making it impulsive.

And that’s a harder balance than it sounds.