Mira Network is the kind of project that made me stop before tossing it onto the pile of AI ideas.

That pile is getting unbelievably tall at the moment!

Every week another token claims to be AI infrastructure. Another pitch about intelligent agents, automated solutions, or a new digital economy built on models. The language is always impressive. The charts are always polished. But when you read a little further, the same pattern tends to emerge. A model gives answers. A token is attached to the story. The rest is just narrative.

Mira caught my attention in a different way.

Not because it promises superior models. Plenty of teams are chasing that. Not because it claims to replace current AI systems. That argument shows up everywhere. What made me slow down is the question Mira appears to be asking.

Not how AI becomes smarter

But how AI proves it is right

That difference may not seem big, but it changes the entire problem.

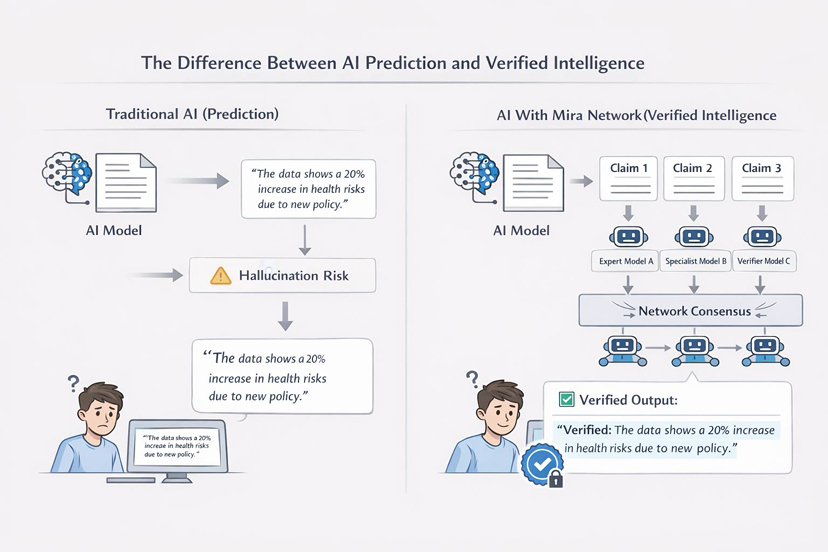

Because when you look at the state of AI right now, intelligence is no longer the most critical issue. Systems that write essays, generate code, summarize research papers, and explain complex topics already exist. The problem shows up later.

The answers cannot be fully trusted.

That unreliability is what Mira appears to be focused on.

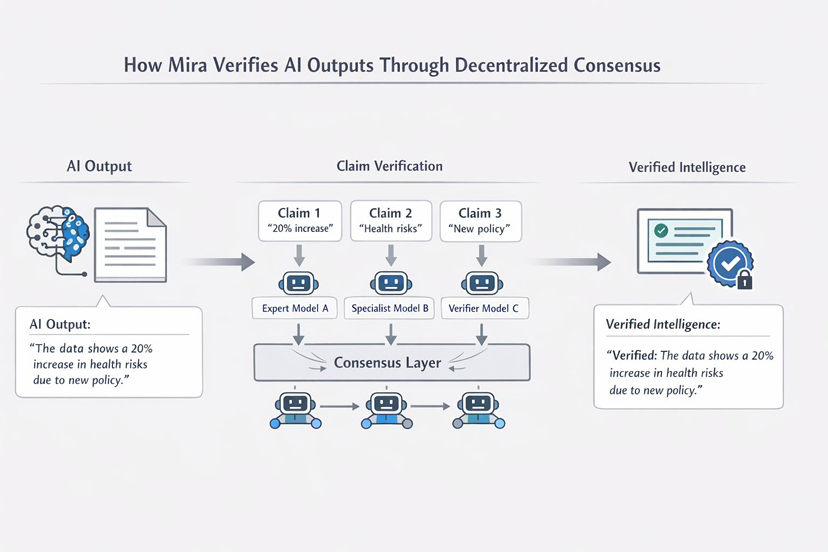

The network attempts to build a verification layer for intelligence instead of building another model. When an AI produces an answer, the system does not automatically accept it as true. The output is broken into smaller claims. Each claim is then verified by several models on the network. The models evaluate the same claim independently, and the network combines their responses to reach consensus.

Simply put, the system does not depend on a single voice.

It is based on the consensus of many.
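To make the flow concrete, here is a minimal sketch of the idea, not Mira's actual implementation. Everything in it is an assumption for illustration: the `Verifier` type, the sentence-based `split_into_claims`, and the two-thirds agreement threshold are all hypothetical stand-ins.

```python
from fractions import Fraction
from typing import Callable, Dict, List

# Hypothetical: a verifier is any model wrapped as a function that
# returns True when it judges the claim to be valid.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in output.split(".") if part.strip()]


def consensus(claim: str, verifiers: List[Verifier],
              threshold: Fraction = Fraction(2, 3)) -> str:
    """Ask every verifier independently, then combine the votes.

    The two-thirds threshold is an assumed parameter, not a documented one.
    """
    votes = [verifier(claim) for verifier in verifiers]
    yes_share = Fraction(sum(votes), len(votes))
    if yes_share >= threshold:
        return "verified"
    if 1 - yes_share >= threshold:
        return "rejected"
    return "no_consensus"


def verify_output(output: str, verifiers: List[Verifier]) -> Dict[str, str]:
    """Break an answer into claims and return a verdict for each one."""
    return {claim: consensus(claim, verifiers)
            for claim in split_into_claims(output)}
```

The point of the sketch is the shape: no single verifier decides the outcome, and a split vote is reported as a split rather than forced into a yes or no.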

That idea resembles peer review more than prediction. In research we do not simply believe what one person says. We test it. Others review it. Evidence accumulates. Mira appears to be carrying an equivalent idea over to machine intelligence.

The interesting part of the system is how incentives are built around it.

Verification within Mira is not only a technical task. It is also an economic one. Nodes that validate claims must stake value to join the network. They earn rewards when they verify information correctly. Their stake can be penalized if their answers are consistently out of line with the others.

This means random guessing is costly.

The system pushes validators to actually reason.
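A rough sketch of how that kind of incentive could be wired up is below. It is an illustration under stated assumptions, not Mira's real mechanism: the `Validator` class, the reward size, the slash percentage, and the three-miss rule are all hypothetical numbers chosen to keep the example small.

```python
from dataclasses import dataclass
from typing import Dict

# All three constants are assumptions made for this illustration.
REWARD = 1          # units paid for a vote that matches consensus
SLASH_PERCENT = 10  # share of stake removed when a validator is penalized
MAX_MISSES = 3      # consecutive out-of-line votes tolerated before a slash


@dataclass
class Validator:
    stake: int       # value locked to join the network
    misses: int = 0  # consecutive rounds out of line with consensus


def settle_round(validators: Dict[str, Validator],
                 votes: Dict[str, bool],
                 consensus: bool) -> None:
    """Reward validators that matched consensus; slash repeat offenders."""
    for name, vote in votes.items():
        validator = validators[name]
        if vote == consensus:
            validator.stake += REWARD
            validator.misses = 0
        else:
            validator.misses += 1
            if validator.misses >= MAX_MISSES:
                # Integer arithmetic keeps the penalty exact.
                validator.stake -= validator.stake * SLASH_PERCENT // 100
                validator.misses = 0
```

Under these made-up numbers, a validator that keeps landing on the wrong side of consensus loses stake faster than an occasional lucky match can earn it back, which is the sense in which guessing becomes expensive.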

The other design choice of Mira's that stood out to me is how the network handles complex information. Rather than asking a model to assess a paragraph or argument as a whole, Mira breaks it into smaller claims. Each claim can then be checked on its own, sometimes by models that specialize in different fields.

The result is evidence behind the answer.

And perhaps that is the bigger point here.

For years the conversation about AI has been about generation. Bigger models. Faster outputs. More data. What Mira appears to be asking about is verification.

Because intelligence without accountability will ultimately fail.

If machines are to work in serious fields such as research, finance, healthcare, and law, they cannot simply produce output that sounds convincing. They need structure. A way of demonstrating reliability rather than merely asserting it.

That is the problem Mira is trying to tackle.

Whether it succeeds is a different question altogether.

But what I will say is that in a market full of projects trying to build smarter machines, a network trying to verify machine intelligence is a far more interesting place to start.

That is Mira!