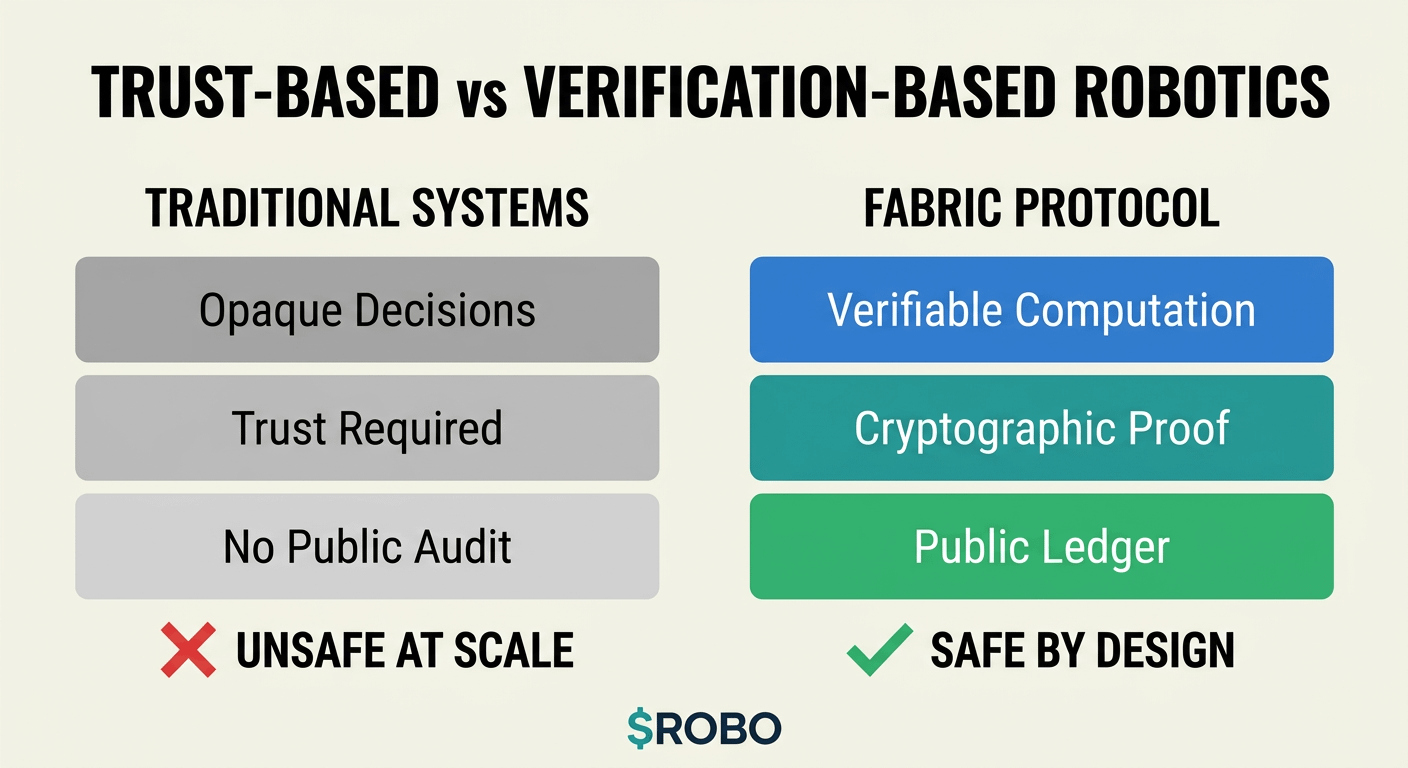

There is a problem at the foundation of how most robotics systems are built today, and it is not primarily a matter of hardware limitations or software bugs that better engineering can fix. The problem is architectural: most robotics platforms ask humans to trust systems whose decision-making is opaque, whose data sources are unverifiable, and whose governance is controlled by entities whose incentives may not align with the long-term safety and reliability that general-purpose robotics requires. @Fabric Foundation approaches this problem from a different starting point: trust in robotics systems should not rest on the reputation of the company that built them or on promises made in marketing materials. It should rest on the ability to verify computationally that a robot did what it claimed to do, used the data it claimed to use, and followed the rules it was supposed to follow.

This distinction between trust-based systems and verification-based systems becomes critical as robots move from controlled industrial environments, where failures are contained and predictable, into general-purpose environments where they interact with humans in unstructured ways. In a factory where a robot performs the same motion thousands of times per day in a space with no humans present, trust-based systems work adequately because the environment is constrained and the failure modes are well understood. In general-purpose settings, robots need to navigate public spaces, interact with people who did not consent to be part of a robotics experiment, and make decisions that affect safety in real time. There, trust-based systems are insufficient: the environment is too complex to predict every failure mode in advance, and the consequences of failure are too severe to accept opacity in how decisions were made.

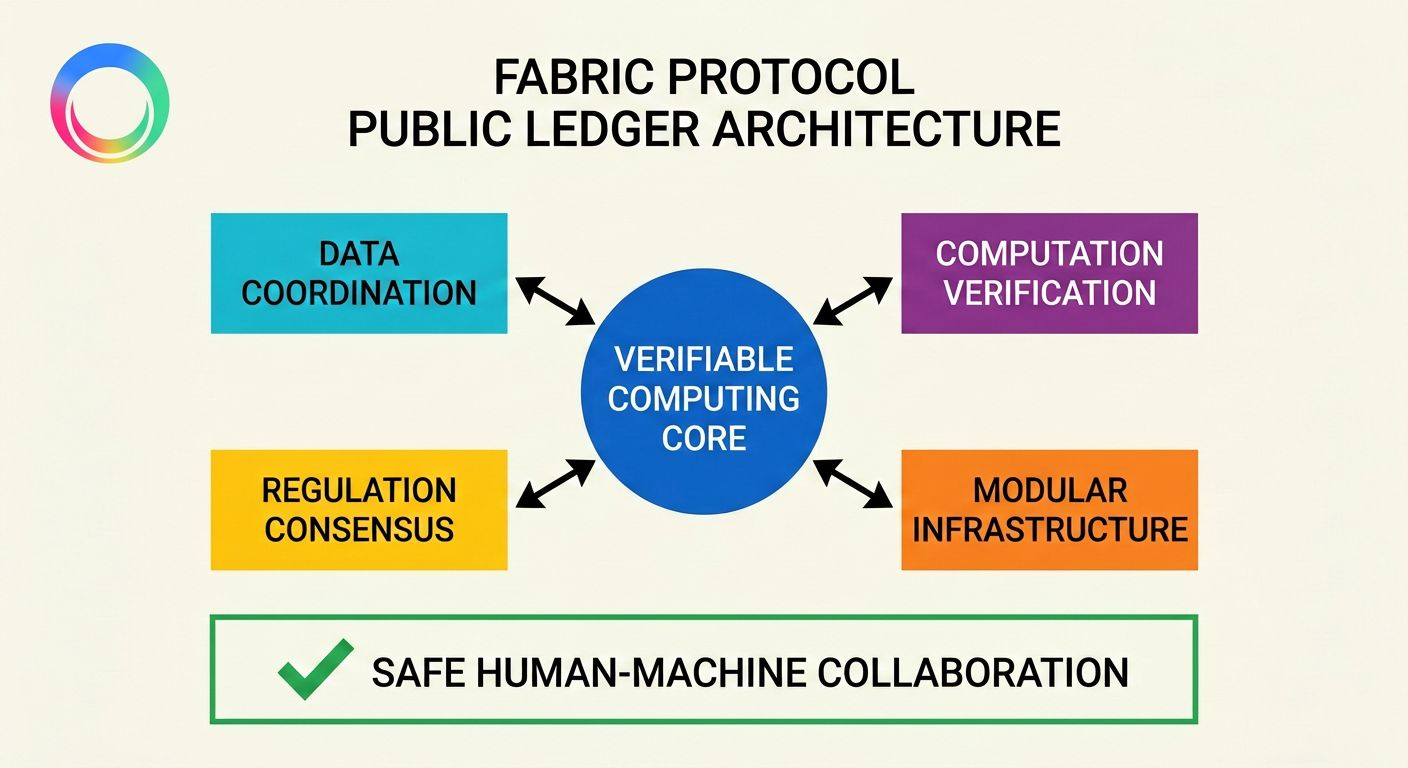

Verifiable computing as implemented in Fabric Protocol changes the architecture fundamentally: it makes it possible to prove cryptographically that a computation was performed correctly on specific input data, without requiring access to the underlying system or trust in the entity operating it. When a robot makes a decision, that decision is not just logged in a database that could be modified or deleted. It is recorded on a public ledger with a cryptographic proof that ties the output to the specific computation and the specific input data that produced it. This means that anyone with the appropriate tools can verify independently that the robot's behavior matched its programming and that the data it relied on is the data that was recorded at the time the decision was made. The proof binds a decision to its inputs; whether a given sensor reading reflected reality is a separate question.
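To make the idea of tying an output to a specific computation and specific input concrete, here is a minimal sketch in Python. It is not Fabric Protocol's actual proof system, whose details are not described here. It assumes a toy policy function (`decide`), a made-up policy identifier (`POLICY_ID`), and a hypothetical record layout, and it checks a record by hashing and re-running the policy rather than by a succinct cryptographic proof.

```python
import hashlib
import json


def digest(obj) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, no extra whitespace)."""
    encoded = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


POLICY_ID = "decide-v1"  # hypothetical identifier for the policy code below


def decide(sensor_input: dict) -> dict:
    """Hypothetical robot policy: stop if an obstacle is closer than 1.0 m."""
    action = "stop" if sensor_input["obstacle_distance_m"] < 1.0 else "proceed"
    return {"action": action}


def make_record(sensor_input: dict) -> dict:
    """Build a record that commits to the policy, the input, and the output."""
    record = {
        "policy_id": POLICY_ID,
        "input_hash": digest(sensor_input),
        "output": decide(sensor_input),
    }
    # The record hash covers the three fields above; it is added afterwards.
    record["record_hash"] = digest(record)
    return record


def verify_record(record: dict, claimed_input: dict) -> bool:
    """Check the record's integrity, its input commitment, and its output."""
    body = {k: v for k, v in record.items() if k != "record_hash"}
    if digest(body) != record["record_hash"]:
        return False  # the record was altered after it was created
    if digest(claimed_input) != record["input_hash"]:
        return False  # this is not the input the record committed to
    # Re-run the policy: the recorded output must match the recomputed one.
    return decide(claimed_input) == record["output"]


record = make_record({"obstacle_distance_m": 0.4})
print(verify_record(record, {"obstacle_distance_m": 0.4}))
print(verify_record(record, {"obstacle_distance_m": 5.0}))
```

In this sketch the verifier needs the input and the policy code in order to re-run the decision. Production verifiable-computing schemes aim to replace that re-execution with a short proof that can be checked without repeating the work, but the binding between policy, input, and output is the same idea.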

The practical implications of this architectural choice become visible when considering specific failure scenarios that matter in general-purpose robotics. If an autonomous delivery robot causes an accident, the relevant questions are not just what happened but why it happened, what data the robot was using at the moment of the incident, and whether the robot was following its programmed rules or whether there was a deviation from expected behavior. In trust-based systems, answering these questions requires cooperation from the company operating the robot, access to proprietary logs that may or may not be complete or accurate, and expert analysis that most people affected by the incident are not equipped to perform or evaluate.

In a verification-based system built on @Fabric Foundation infrastructure, the answers to these questions are available on the public ledger without requiring permission from any centralized entity. The computation that the robot performed is verifiable. The data it used is verifiable. The rules it was following are verifiable. This does not mean that accidents become impossible or that liability questions disappear. It does mean that the basic factual questions about what the robot did and why can be answered by checking cryptographic proofs rather than by trusting self-interested parties to provide accurate information.
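One way to see why a ledger differs from an ordinary log that could be modified or deleted is a hash chain, where each entry commits to the one before it. The sketch below is an illustration under stated assumptions, not a description of how Fabric Protocol's ledger is built: the entry layout, the `GENESIS` value, and the function names are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical placeholder hash for the start of the chain


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash an entry's payload together with the hash of the previous entry."""
    body = json.dumps(
        {"prev": prev_hash, "payload": payload},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append(ledger: list, payload: dict) -> None:
    """Append a payload, linking it to the current tip of the chain."""
    prev = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"prev": prev, "payload": payload, "hash": entry_hash(prev, payload)})


def first_invalid_index(ledger: list):
    """Return the index of the first entry that breaks the chain, or None."""
    prev = GENESIS
    for i, entry in enumerate(ledger):
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return i
        prev = entry["hash"]
    return None


ledger = []
append(ledger, {"t": 1, "action": "proceed"})
append(ledger, {"t": 2, "action": "stop"})
append(ledger, {"t": 3, "action": "proceed"})
print(first_invalid_index(ledger))  # intact chain

ledger[1]["payload"]["action"] = "proceed"  # rewrite history after the fact
print(first_invalid_index(ledger))  # the edited entry no longer matches its hash
```

An auditor who holds only the latest hash can detect an edited or deleted entry, because every later hash depends on every earlier one. A single-party chain like this is tamper-evident rather than tamper-proof; a public ledger adds replication across independent participants so that no one operator can quietly rebuild the chain.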

The modular infrastructure approach that Fabric Protocol implements addresses a different dimension of the same safety problem. Robotics systems need to evolve as understanding of safety requirements improves and as new capabilities become available, but evolution in closed systems creates vendor lock-in that prevents the broader ecosystem from improving at the pace safety demands. When a robotics platform is controlled by a single company, that company decides which updates get deployed, which safety features get prioritized, and which governance rules apply, and it makes those decisions based on its own incentives rather than on what is best for the ecosystem as a whole.

$ROBO coordinates this evolution through public ledger governance where data coordination, computation verification, and regulation consensus happen transparently with participation from the entire network rather than being decided behind closed doors by a small group of executives optimizing for quarterly earnings. This does not mean that governance becomes easy or that every decision will satisfy every participant, but it does mean that the decision-making process is visible, auditable, and subject to verification rather than being hidden inside proprietary systems where accountability is limited to whatever the controlling entity chooses to disclose.

#ROBO $ROBO @Fabric Foundation