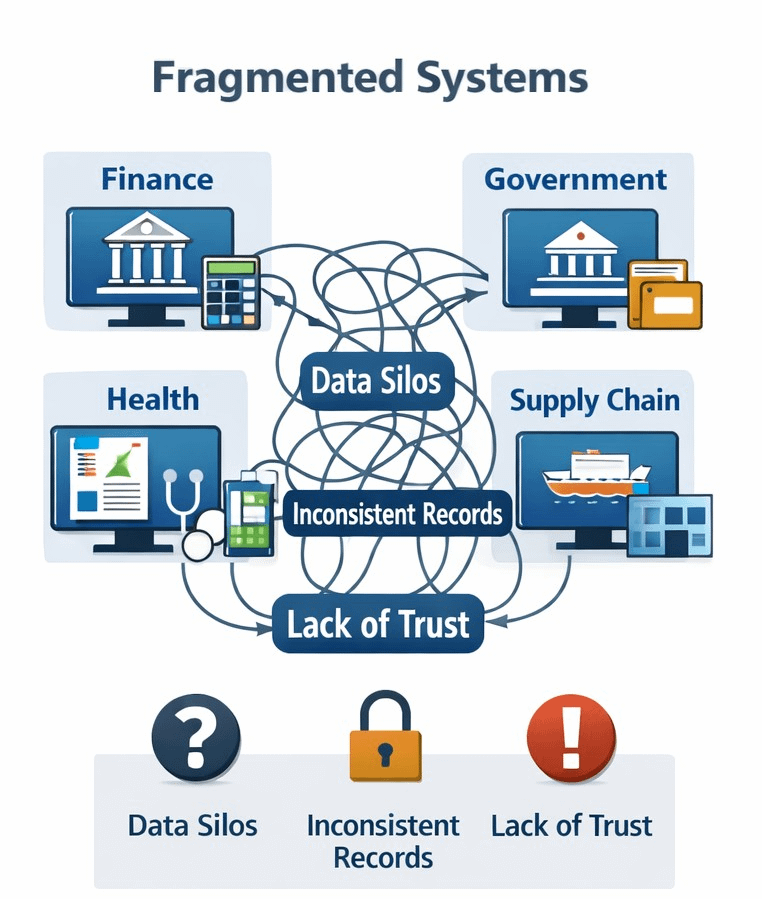

I keep coming back to a simple thought: most digital systems are pretty good at recording actions, yet much worse at proving what those actions actually mean. I used to think fragmentation was mainly an efficiency problem, one that created delays and duplicate work and left people struggling through bad handoffs. The more I look at it, the more it feels like a trust problem. When data moves across agencies, vendors, apps, and chains, the hard part is not only whether something happened but whether anyone else can verify who said it, under what rules, and whether it still holds. That is how I understand the center of the Sign thesis. In Sign’s current documentation, S.I.G.N. is framed as a system architecture for money, identity, and capital, while Sign Protocol is described as the shared evidence layer for creating and verifying structured records through attestations.

What I find useful here is that it shifts the conversation away from asking which app runs the workflow and toward asking where the proof actually lives. Sign’s language is very direct about that: it treats attestations as portable, verifiable proofs that can move across systems and time, not as a side feature that sits off to the edge. Identity is not enough, execution is not enough, and storage is not enough, because the missing piece is evidence that remains readable outside the original system. That is why the thesis feels bigger than one protocol. It is really an argument that modern infrastructure should do more than process transactions or permissions: it should produce proof of what was authorized, distributed, approved, or changed, in a way that can still be inspected later. Sign also makes clear that this can sit in public, private, or hybrid deployments, which matters because most real institutions are not going to live at one extreme or the other.

Part of why this angle gets more attention today than it would have five years ago is that the surrounding standards have grown up. In May 2025, W3C published Verifiable Credentials 2.0 as a web standard and described a way to express digital credentials that is cryptographically secure, privacy-respecting, and machine-verifiable. Around the same time, the EU’s digital identity framework moved from broad ambition toward concrete implementation: the regulation says member states must provide EU Digital Identity Wallets by the end of 2026, and the Commission has already adopted implementing regulations that cover trust services and electronic attestations of attributes. In August 2025, NIST also revised its digital identity guidelines to reflect a changed digital landscape, with updated requirements around identity proofing, authentication, federation, privacy, and user experience. When those pieces move at once, the idea of verifiable infrastructure stops sounding theoretical and starts to look like a practical layer of digital administration.

I also think AI has changed the emotional weight of this discussion in a way that is hard to ignore. Before this, provenance could sound like a niche concern for compliance teams and standards bodies. Now it feels closer to ordinary public life. The Content Authenticity Initiative explicitly describes its mission as restoring trust and transparency in the age of AI, and the broader C2PA effort is trying to make provenance and authenticity verifiable across media systems. What stands out to me is that this work is becoming less experimental and more operational: C2PA says its conformance program and official trust list launched in mid-2025 and that the old interim trust list was frozen on January 1, 2026. That does not solve the problem of deception, but it does show the wider environment moving toward verifiable claims, signed histories, and shared trust rules. Sign sits inside that larger turn, even though it applies the same logic to identity, agreements, distributions, and institutional records rather than only to media.

What surprises me is that the thesis is actually fairly modest once you strip away the branding. It is not saying that software can remove politics or that a signature makes a claim morally true. It is saying something narrower and more durable: fragmented systems become more governable when they produce verifiable evidence by default. That is a strong claim, but it is also a limited one. An attestation can prove that a statement was issued by a particular party and that it has not been tampered with, but it cannot guarantee that the original statement was wise, fair, or honestly sourced. Bad inputs can still be signed, power can still be abused, and privacy can still be mishandled. I find that important because the most credible version of this thesis is not utopian. It treats verification as a discipline rather than a cure.
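To make that limit concrete for myself, I find a tiny sketch helpful. This is not Sign Protocol's API or schema. It is a minimal illustration using only Python's standard library, and it swaps in an HMAC with a shared demo key as a stand-in for the public-key signatures a real attestation system would use. The key, the field names, and the helper functions `canonical`, `attest`, and `verify` are all my own hypothetical choices.

```python
import hashlib
import hmac
import json

# Hypothetical issuer key, for illustration only. A real attestation system
# would use a public-key signature so verifiers never hold the signing secret.
ISSUER_KEY = b"demo-issuer-secret"


def canonical(claim: dict) -> bytes:
    """Serialize the claim deterministically so both sides hash the same bytes."""
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode("utf-8")


def attest(claim: dict, key: bytes) -> dict:
    """Return a record holding the claim and a tag bound to its exact contents."""
    tag = hmac.new(key, canonical(claim), hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}


def verify(record: dict, key: bytes) -> bool:
    """Check that the claim is unchanged and was tagged with this key."""
    expected = hmac.new(key, canonical(record["claim"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["tag"])


# An attested claim verifies as issued.
honest = attest({"subject": "alice", "eligible": True}, ISSUER_KEY)

# Changing the claim after the fact breaks verification.
tampered = {"claim": {"subject": "alice", "eligible": False}, "tag": honest["tag"]}

# A claim that may be untrue still verifies if the issuer attested to it.
unchecked = attest({"subject": "mallory", "eligible": True}, ISSUER_KEY)

print(verify(honest, ISSUER_KEY))     # True
print(verify(tampered, ISSUER_KEY))   # False
print(verify(unchecked, ISSUER_KEY))  # True
```

The third result is the point. Verification tells me who stood behind a statement and that nobody altered it afterward, and nothing more than that. Whether the issuer should have said it in the first place is a question the math never touches.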

So when I read Sign as a thesis rather than as a product pitch, I come away with something that feels grounded. The real move is from systems that ask us to trust their internal coherence to systems that can export proof across boundaries. If that shift keeps happening, infrastructure will look a little less like a collection of disconnected databases and a little more like a network of claims that can actually be checked. Whether Sign itself becomes central is still an open question. But the underlying idea, that evidence should be built into the architecture rather than stapled on afterward, feels increasingly hard to dismiss.

@SignOfficial #SignDigitalSovereignInfra $SIGN