I didn’t really think about how often I get treated differently by the same system until it started repeating. Same app, same usage, but the offers weren’t the same anymore. A friend would get something better, or earlier. I’d get nothing, then suddenly something very specific, almost like it arrived late but on purpose. At first I brushed it off as randomness. It didn’t stay random.

That’s the part that keeps coming back when I look at $PIXEL. It still presents itself like a normal game economy. You show up, you play, you earn something if you stay active. Simple loop. But after a while, it stops feeling evenly distributed. Some players seem to get pulled into deeper layers without doing anything obviously different on the surface. Others just circle around the basic loops, even if they’re putting in time.

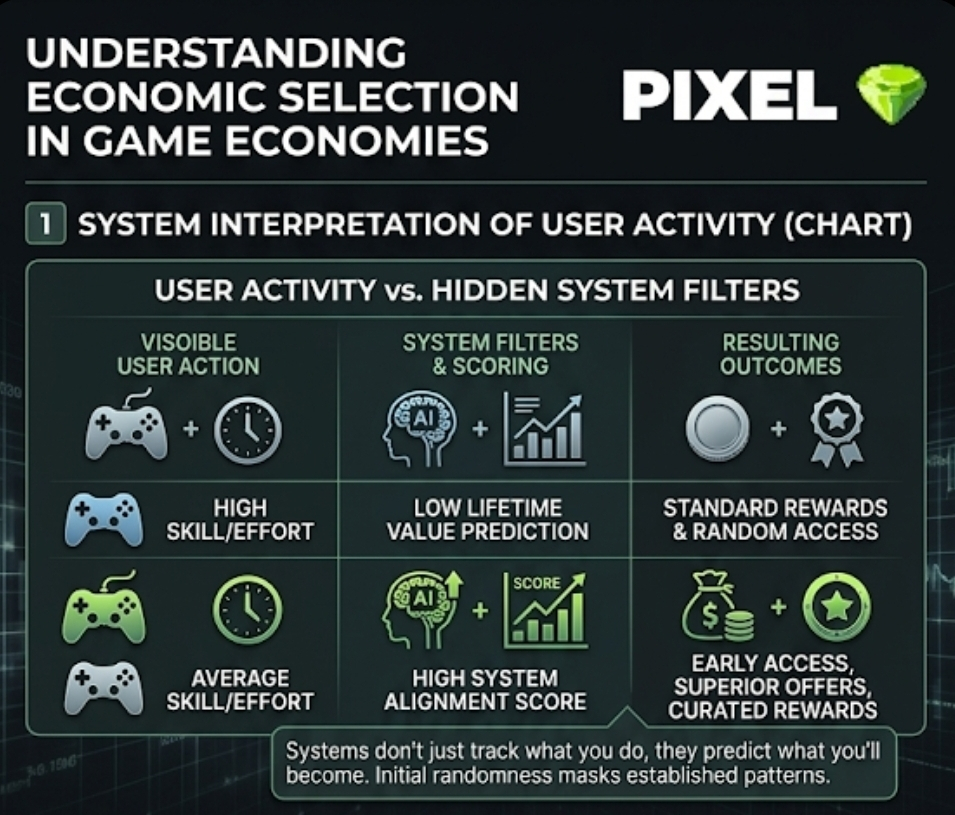

I used to assume this was just about skill or effort. That explanation works for a while, then it starts to break down, because you see players who aren't necessarily better but seem to fit the system better. That's harder to explain. It feels less like performance and more like alignment.

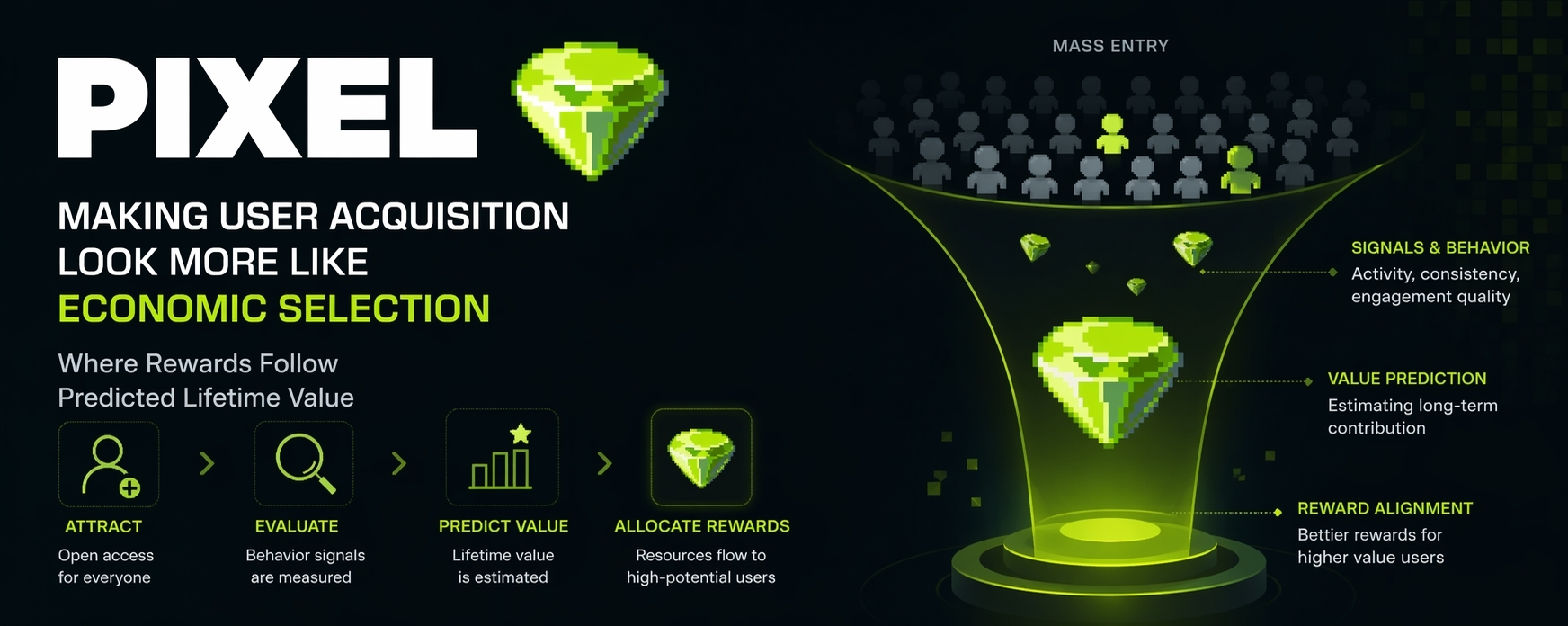

Maybe that’s where the shift is happening. Not in activity, but in how activity is interpreted. Systems like this don’t just track what you do. They start forming a picture of what you might become inside the system. That’s basically what people mean by lifetime value (LTV), even if the term sounds a bit dry. It’s just an estimate of how much value a user is likely to bring to the system over time.

What’s strange is how quietly that guess starts shaping outcomes. Rewards don’t disappear, but they begin to land differently. Timing changes. Access changes. You don’t always notice it in a single moment. It’s more like a pattern you recognize after a while, the way you notice a market trend only after missing the first move.

And once you notice it, it’s hard to unsee. The system isn’t just reacting anymore. It’s leaning forward a bit, almost anticipating. That’s uncomfortable in a subtle way, because now it’s not only about what you’ve done, but about what the system thinks you’re going to do.

I keep connecting this to how visibility works on Binance Square. You can write two posts that feel equally strong, but one gets picked up and the other doesn’t. There are scoring layers, some AI evaluation in the background, timing effects. If something gains early traction, it spreads. If not, it quietly disappears. After a while, you stop writing only what you want. You start writing what you think will pass those invisible filters.

It doesn’t feel very different here, except that instead of attention, what gets distributed is rewards.

There’s a practical side to this, and I get why it exists. If a system can identify users who are likely to stay, contribute, or keep engaging, it makes sense to allocate more resources toward them. Otherwise, you’re just burning incentives on users who leave anyway. That’s not sustainable, especially in token economies where every reward has a cost.

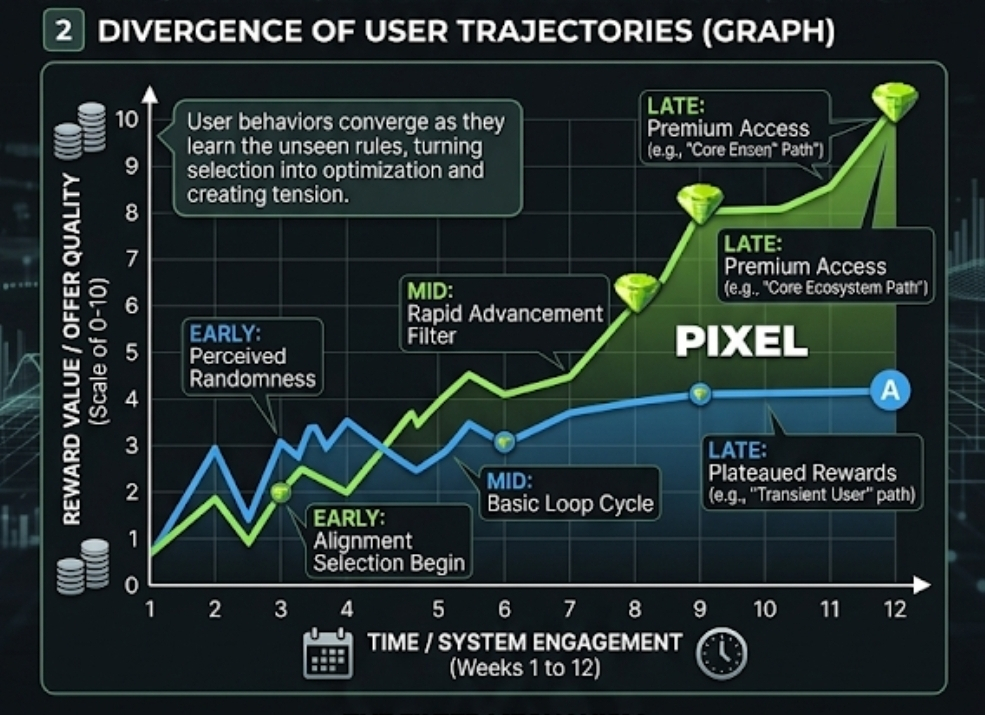

Still, it changes the feel of the system. It’s no longer purely open in the way it first appears. Entry is open, yes. But progression starts narrowing based on signals that aren’t always visible. You can follow the same path as someone else and end up in a different place, without a clear reason.

That gap creates a strange kind of pressure. People begin adjusting their behavior, even if they don’t fully understand why. You see it in small ways. Players copying certain strategies, repeating certain loops, avoiding others. Not because they enjoy it more, but because it seems to work. The system doesn’t force them. It just rewards enough consistency that behavior starts to converge.

And when behavior converges too much, something else happens. The system becomes easier to predict, but also easier to game. What started as selection can turn into optimization. People stop acting naturally and start acting strategically, which slowly distorts the signals the system was relying on in the first place.

That’s where the idea of “economic selection” starts feeling a bit fragile. It depends on the system being able to read real intent or real engagement. But once users begin adapting specifically to those signals, the line gets blurry. Are you rewarding genuine value, or just well-learned patterns?

I don’t think this breaks the model entirely. It just adds tension. On one side, the system is trying to be efficient, to direct rewards where they matter most. On the other, users are constantly learning how to fit that model, sometimes too well.

So it keeps shifting. Not in big visible updates, but in small adjustments. Timing, distribution, access. You don’t always see the logic, only the result.

And maybe that’s the part that feels unfinished to me. Not in a negative way, just… unsettled. The system looks like a game, but it behaves more like a filter that’s still figuring out what it wants to keep.