It’s hard to forget the collective gasp that rippled through Silicon Valley in early 2025 when DeepSeek dropped its R1 model and, with it, a pricing structure that turned the AI industry’s unwritten rules on their head. For years, the Valley had operated under the assumption that cutting-edge AI came with a stratospheric price tag, and that the billions spent on training and infrastructure were non-negotiable barriers to entry for all but the deepest-pocketed giants. Then a little-known Chinese startup stepped in, offering near-GPT-4o performance at a fraction of the cost, and suddenly the ground beneath the tech world’s most exclusive club seemed to shift. I remember scrolling through Twitter that morning, watching venture capitalists and AI researchers alike fire off stunned takes, their disbelief mixed with a quiet panic: had the game just changed forever? What DeepSeek did wasn’t just about undercutting competitors; it challenged the very idea that AI excellence had to be tied to excess. And as the dust settled on that price shock, another player emerged: Vanar, which didn’t just chase the cost advantage DeepSeek had unlocked but dug into the technology’s core to ask a more profound question. What if AI’s next leap isn’t about how cheap we can make it, but about how deeply it can understand the world it’s meant to serve?

DeepSeek’s seismic impact stemmed from a blend of engineering ingenuity and strategic boldness, rooted in a technical foundation that redefined efficiency without sacrificing power. At the heart of its R1 and V3 models lies the Mixture of Experts (MoE) architecture, a design that abandons the one-size-fits-all approach of traditional dense language models. Instead of activating all 671 billion parameters for every token, DeepSeek’s system activates just 37 billion (roughly 5.5%), routing each token to a small set of specialized “expert” sub-networks. This isn’t just a clever trick; it’s a shift in how we build AI, slashing inference costs by 50 to 75% and allowing the company to price its API at just 5–10% of OpenAI’s rates. Combine that with a training pipeline built around large-scale reinforcement learning, and you have a technology that doesn’t just match the performance of industry leaders but outpaces them in key areas like mathematical reasoning, with a 91.6% score on the MATH benchmark. DeepSeek didn’t cut corners to hit its price point; it reimagined the hardware-software relationship, proving that AI efficiency isn’t an afterthought but a core design principle.
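To make the routing idea concrete, here is a minimal Python sketch of generic top-k gating, the textbook way a sparse MoE layer picks a few experts per token. It is an illustration under stated assumptions, not DeepSeek’s router: production MoE routers, DeepSeek’s included, add refinements such as load balancing that this sketch leaves out. The expert count, dimensions, and helper names (`top_k_route`, `moe_forward`, `make_expert`, `make_linear_router`) are hypothetical toy choices.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Keep the k highest-scoring experts and renormalise their gate weights."""
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    weights = softmax([logits[i] for i in ranked])
    return list(zip(ranked, weights))


def moe_forward(
    x: Vector,
    router: Callable[[Vector], Vector],
    experts: Sequence[Expert],
    k: int,
) -> Tuple[Vector, List[int]]:
    """Run one token through a sparse MoE layer; only k experts execute."""
    chosen = top_k_route(router(x), k)
    out = [0.0] * len(x)
    for idx, weight in chosen:
        y = experts[idx](x)  # the unchosen experts are never evaluated
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, [idx for idx, _ in chosen]


def make_expert(scale: float) -> Expert:
    """Hypothetical toy expert: scales its input (stand-in for a feed-forward block)."""
    return lambda v: [scale * vi for vi in v]


def make_linear_router(n_experts: int, dim: int, seed: int = 0) -> Callable[[Vector], Vector]:
    """Hypothetical toy router: a random linear layer giving one logit per expert."""
    rng = random.Random(seed)
    w = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_experts)]
    return lambda v: [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


if __name__ == "__main__":
    n_experts, dim, k = 8, 4, 2  # hypothetical toy sizes, not DeepSeek's config
    experts = [make_expert(float(i + 1)) for i in range(n_experts)]
    router = make_linear_router(n_experts, dim)
    out, used = moe_forward([1.0, -0.5, 0.25, 2.0], router, experts, k)
    print("experts used:", used, "of", n_experts)
    # Active-parameter share quoted in the text: 37B of 671B.
    print(f"active share: {37 / 671:.1%}")  # prints "active share: 5.5%"
```

The design point is that per-token compute scales with `k`, not with the total number of experts: adding experts grows the parameter count while the work done for each token stays roughly fixed, which is the mechanism behind the 37-billion-of-671-billion figure.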

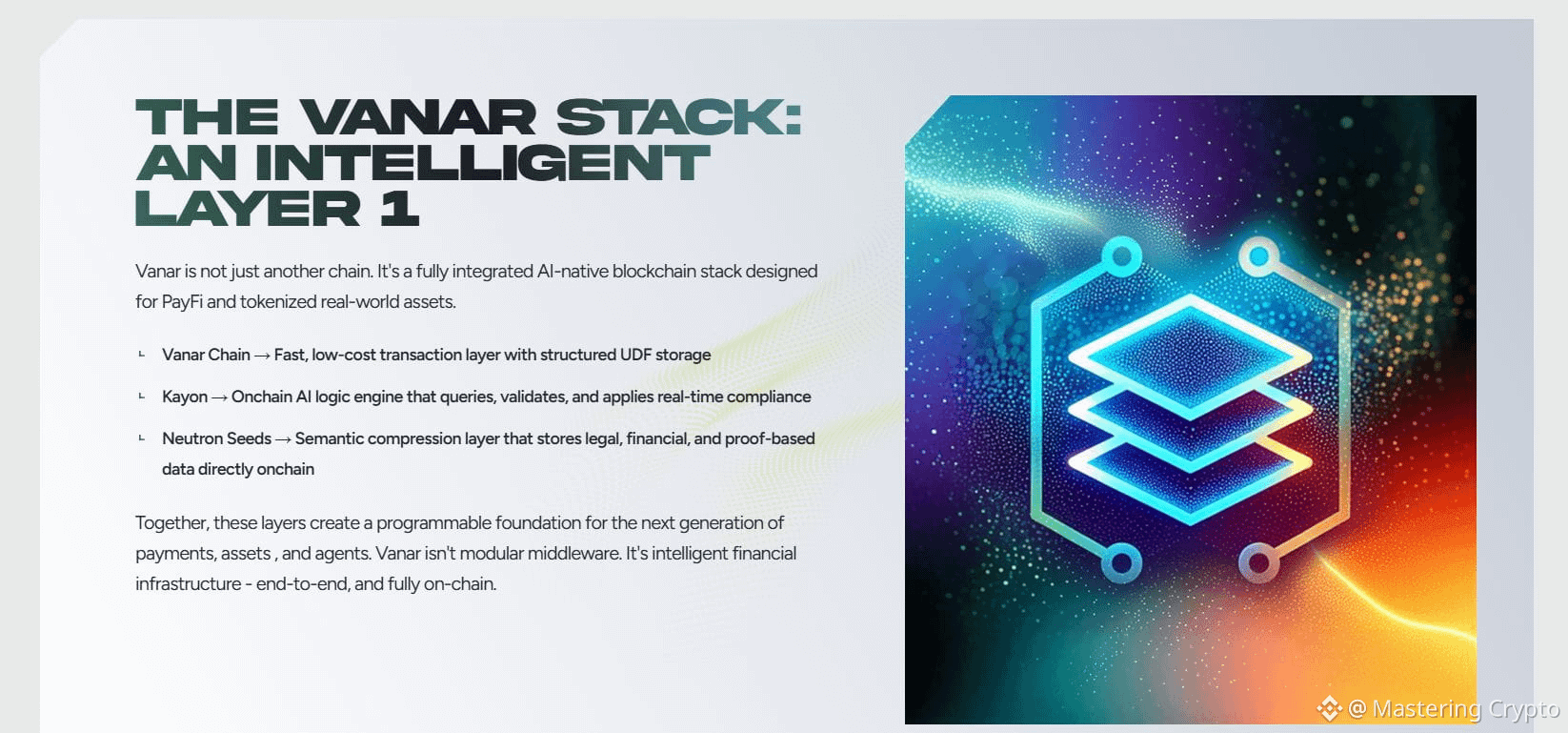

If DeepSeek’s revolution was about making AI accessible, Vanar’s work is about making it meaningful, pushing the industry beyond price wars to confront a deeper challenge: how to turn raw computational power into genuine understanding. Where DeepSeek optimized for cost and speed, Vanar is testing the limits of contextual and causal reasoning, looking past token-level efficiency to explore what it means for a model to truly grasp the problems it’s solving. This isn’t about adding more parameters or training on larger datasets. It’s about rethinking how AI processes information, shifting from pattern-matching toward systems that can connect dots across disparate data, recognize nuance in human intent, and adapt to unforeseen scenarios. What stands out most isn’t benchmark performance but how Vanar handles messy, unstructured questions: open-ended business problems, cross-disciplinary technical queries, and human questions that demand empathy as much as logic. Vanar isn’t just building a better model; it’s building a model that thinks more like a human.

The stories of DeepSeek and Vanar are not isolated events; they’re symptoms of a broader industry reckoning, a shift away from the “bigger is better” arms race toward intentionality and purpose. For the first half of the 2020s, AI was fixated on parameter counts and training budgets. But as DeepSeek showed, that approach is neither sustainable nor necessary. The 2026 landscape shows pricing stabilizing and token demand soaring, not because models are cheaper but because they’re more useful. Global token call volume is up 3x for OpenAI, 9x for Google, and 10x for ByteDance. Persistent challenges remain, including a 5% hallucination rate, ongoing compute shortages, and growing reliance on ASICs alongside GPUs. In this environment, DeepSeek represents pragmatic efficiency and Vanar represents visionary depth; together they redefine success in AI as solving problems that matter, not spending more or building bigger.

As someone who’s spent years covering and experimenting with AI, I find DeepSeek and Vanar compelling because they feel like a return to AI’s original promise. DeepSeek enables developers to build tools that were too expensive to run just a year ago. Vanar is being tested in systems that could help doctors diagnose rare diseases by connecting fragmented data. These are not flashy demos but meaningful applications. Both face challenges. DeepSeek lacks an enterprise-grade ecosystem and runs on thin margins. Vanar is pushing into uncertain technical territory with no guaranteed commercial payoff. But neither is playing it safe, and in doing so, they’re forcing the industry forward. We’re moving beyond the era of the big model into the era of the smart model, one built for people, depth, and real-world impact. That, more than any price tag or parameter count, is the future worth building.