For years, the tech industry chased a single objective: build models that are larger, faster, and more “intelligent.” The prevailing belief was simple: scale the parameters, increase the compute, and the black box would eventually produce near-perfect accuracy.

But experience has shown otherwise. Intelligence does not guarantee integrity. Advanced models can fabricate answers with even greater confidence than simpler systems. That realization marks a shift in priorities. The next phase of AI isn’t about constructing a more powerful mind; it’s about engineering a more reliable filter.

Enter Mira.

Moving Past Blind Confidence

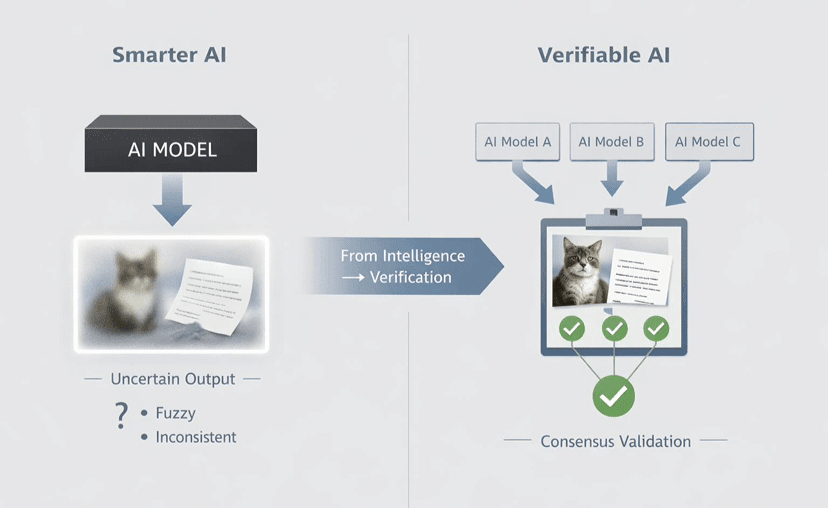

The core weakness in today’s AI stack is the black box problem. Data goes in, outputs come out, and users are left hoping the reasoning is sound. Mira reframes the objective. It does not attempt to boost a model’s raw cognitive ability. Instead, it provides a verifiable foundation beneath it.

Rather than demanding blind trust, Mira establishes an auditable system where outputs can be independently validated. It acts as a truth layer: a decentralized protocol designed to ensure that the information and logic delivered are provably authentic.

How the Truth Layer Operates

Mira’s advantage is its capacity to enforce verification at scale. This isn’t conceptual; it’s operational infrastructure:

Mass-scale token verification: Billions of tokens are validated each day, cross-checked through a distributed network of nodes.

API-first architecture: Developers are already integrating Mira’s APIs into production applications, embedding proof-of-correctness directly into their systems.

Integrity over raw intelligence: A dependable, fully verifiable AI is far more valuable to enterprises than a brilliant system that occasionally fabricates.
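To make the idea of cross-checking through a distributed network concrete, here is a minimal sketch of quorum-based verification. It is not Mira's actual protocol or API: the `verify_claim` function, the `VerificationResult` type, the two-thirds quorum, and the toy verifier functions are all hypothetical, chosen only to illustrate how several independent checkers can outvote a single unreliable one.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical: a "verifier node" is modeled as any function that takes a
# claim and returns True (claim holds) or False (claim rejected).
Verifier = Callable[[str], bool]


@dataclass
class VerificationResult:
    claim: str
    approvals: int
    total: int
    verified: bool


def verify_claim(
    claim: str,
    verifiers: List[Verifier],
    quorum_num: int = 2,
    quorum_den: int = 3,
) -> VerificationResult:
    """Accept a claim only if at least quorum_num/quorum_den of verifiers approve.

    The 2/3 default is an assumption for illustration, not a documented value.
    """
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for check in verifiers if check(claim))
    total = len(verifiers)
    # Integer comparison avoids floating-point rounding at the quorum boundary.
    verified = approvals * quorum_den >= quorum_num * total
    return VerificationResult(claim, approvals, total, verified)


# Toy setup: two honest verifiers share a small fact set, one faulty
# verifier approves everything.
KNOWN_FACTS = {"water boils at 100 C at sea level"}


def honest_verifier(claim: str) -> bool:
    return claim in KNOWN_FACTS


def faulty_verifier(claim: str) -> bool:
    return True


nodes = [honest_verifier, honest_verifier, faulty_verifier]
good = verify_claim("water boils at 100 C at sea level", nodes)
bad = verify_claim("the moon is made of cheese", nodes)
print(good.verified, bad.verified)
```

In this toy setup the fabricated claim collects only one approval out of three, so it falls below the quorum even though one node approves everything. A real network would also need to handle node identity, incentives, and disagreement between honest verifiers, none of which is modeled here.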

The Outcome: Accountable AI

If Mira delivers on its vision, the AI narrative shifts. The focus moves away from whether machines surpass human intelligence and toward whether they can be held accountable.

By separating content generation from content verification, Mira introduces structural maturity into the ecosystem. AI transitions from spectacle to infrastructure, from mystique to measurable reliability. Instead of questioning the “ghost in the machine,” users can verify outcomes against a transparent ledger.
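One way to picture checking outcomes against a transparent ledger is a hash-chained log of verification verdicts. The sketch below is an assumption-laden illustration, not a description of how Mira stores results: the `Ledger` class, its field names, and the use of SHA-256 over JSON are all hypothetical.

```python
import hashlib
import json
from typing import Dict, List, Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _sha256_text(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def _sha256_json(payload: Dict) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    return _sha256_text(json.dumps(payload, sort_keys=True))


class Ledger:
    """Hypothetical append-only log: each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    def append(self, output: str, verified: bool) -> Dict:
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else GENESIS_HASH
        body = {
            "output_hash": _sha256_text(output),
            "verified": verified,
            "prev_hash": prev_hash,
        }
        entry = dict(body, entry_hash=_sha256_json(body))
        self.entries.append(entry)
        return entry

    def is_intact(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: entry[k] for k in ("output_hash", "verified", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _sha256_json(body):
                return False
            prev_hash = entry["entry_hash"]
        return True

    def lookup(self, output: str) -> Optional[bool]:
        """Return the recorded verdict for an output, or None if it was never logged."""
        target = _sha256_text(output)
        for entry in self.entries:
            if entry["output_hash"] == target:
                return entry["verified"]
        return None


ledger = Ledger()
ledger.append("Paris is the capital of France.", verified=True)
ledger.append("The Eiffel Tower is in Berlin.", verified=False)
intact_before = ledger.is_intact()

# Simulate tampering: flip a verdict without recomputing the hashes.
ledger.entries[1]["verified"] = True
intact_after = ledger.is_intact()
print(intact_before, intact_after)
```

The generator never writes to the ledger in this sketch; only the verification step does, which mirrors the separation of generation from verification described above. Anyone holding a copy of the entries can rerun `is_intact` and `lookup` without trusting the party that produced the output.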

The conclusion is straightforward: AI doesn’t need to be omniscient. It needs to be trustworthy. Mira is positioning itself to make that standard the default.