AI has become incredibly quick, affordable, and ubiquitous in our daily tools and workflows. Yet one critical element has always been missing: genuine responsibility.

Without solid proof backing every output, even the most advanced models force organizations to layer on endless safeguards: extra reviews, legal warnings, and mandatory human approvals. We end up surrounding powerful tech with constant oversight, turning innovation into a guarded process rather than true delegation.

Mira flips the script entirely.

The real breakthrough isn’t pushing models to be even more intelligent; it’s recognizing that raw capability alone is never enough. Intelligence must be provable, or it’s fundamentally limited. A system that’s correct 96% of the time but can’t explain or defend the other 4% isn’t ready for real-world trust; it’s something you constantly monitor.

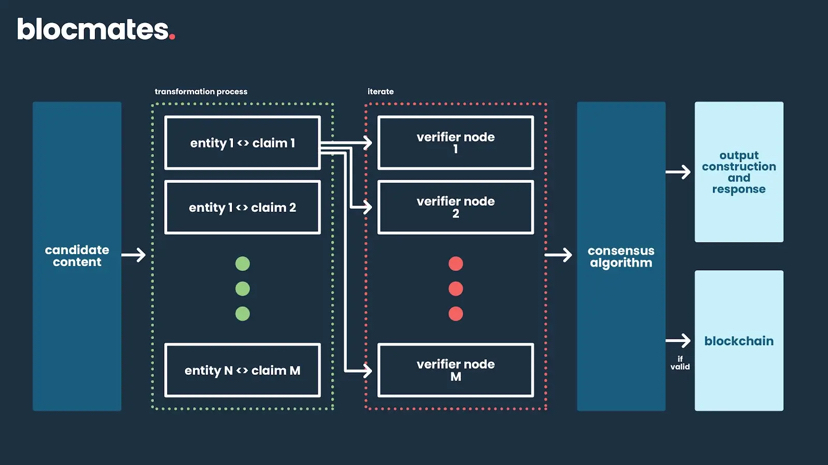

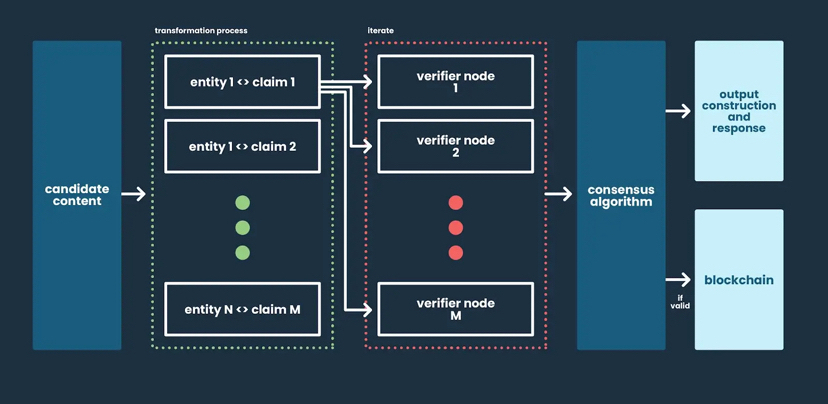

Treat every AI response as a set of discrete, testable statements rather than a monolithic answer, and the entire game changes.

Breaking Down Outputs for Real Verification

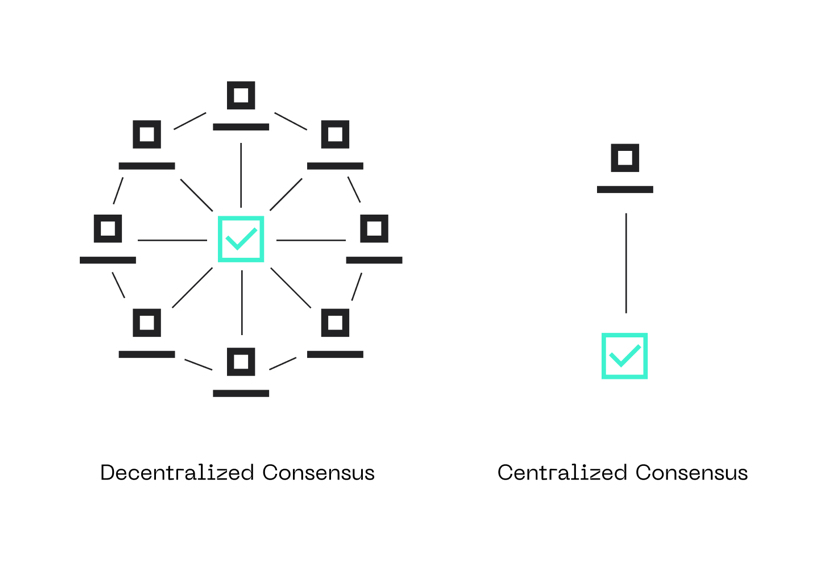

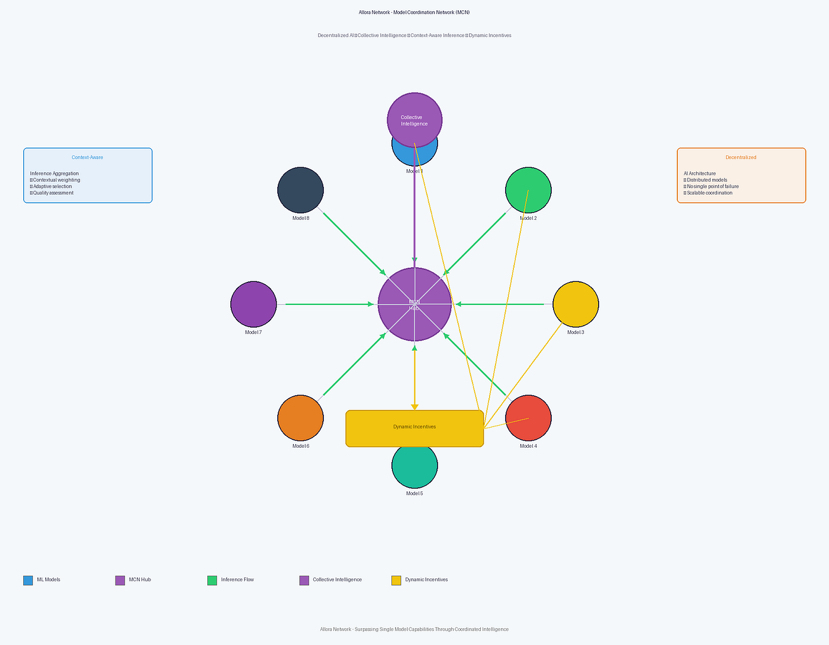

These individual claims get pulled apart, cross-checked against each other, and routed to a distributed network of independent AI models. Evaluation isn’t based on how convincing something sounds; it’s grounded in agreement: do varied, economically aligned systems reach the same conclusion?
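To make the idea concrete, here is a minimal sketch of claim-level consensus. It is not Mira’s actual implementation: the sentence splitter, the `Verifier` type, the toy verifier functions, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier type: takes one claim, returns a verdict string.
Verifier = Callable[[str], str]


def split_into_claims(response: str) -> List[str]:
    """Naive splitter: one claim per sentence (an assumption, not a real parser)."""
    return [s.strip() for s in response.split(".") if s.strip()]


def consensus(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict[str, object]:
    """Collect one verdict per verifier; accept the majority verdict only if
    its share of the votes meets the (assumed) threshold."""
    votes = Counter(v(claim) for v in verifiers)
    verdict, count = votes.most_common(1)[0]
    agreement = count / len(verifiers)
    return {
        "claim": claim,
        "verdict": verdict if agreement >= threshold else "no_consensus",
        "agreement": agreement,
    }


# Toy verifiers standing in for independent models.
def strict(claim: str) -> str:
    return "true" if "Paris" in claim else "false"


def lenient(claim: str) -> str:
    return "true"


def skeptic(claim: str) -> str:
    return "true" if "Paris" in claim else "false"


response = "Paris is the capital of France. The Moon is made of cheese."
results = [consensus(c, [strict, lenient, skeptic]) for c in split_into_claims(response)]
for r in results:
    print(r)
```

The point of the sketch is that each claim gets its own verdict, so one weak statement can be flagged without throwing out the whole response.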

This is where Mira’s innovation stands out. Traditional setups have zero downside for errors; models and platforms face no real penalty for inaccuracy. Confidence scores often mask uncertainty.

Mira changes that dynamic with built-in stakes.

Nodes stake value to participate. Accurate verifications earn rewards; mistakes trigger penalties. The network prioritizes truth over smooth-sounding responses, because truth is what delivers economic value.
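A rough sketch of how such incentives could be settled after a round is shown below. The `Node` class, the flat reward, the 10% slash rate, and the choice to treat "disagreed with consensus" as a mistake are all hypothetical; the actual reward and penalty rules are not described here.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Node:
    name: str
    stake: float


def settle(nodes: Dict[str, Node], verdicts: Dict[str, str], consensus: str,
           reward: float = 1.0, slash_rate: float = 0.1) -> None:
    """Reward nodes whose verdict matched consensus; slash a fraction of
    stake from those that did not. All parameters are assumed values."""
    for name, verdict in verdicts.items():
        node = nodes[name]
        if verdict == consensus:
            node.stake += reward
        else:
            node.stake -= node.stake * slash_rate


nodes = {n: Node(n, 100.0) for n in ("a", "b", "c")}
settle(nodes, {"a": "true", "b": "true", "c": "false"}, consensus="true")
for node in nodes.values():
    print(node.name, node.stake)
```

Because the penalty scales with stake while the reward is flat in this sketch, a node that guesses carelessly loses value faster than it can earn it back.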

The Blockchain Backbone: Making Trust Permanent

Blockchain isn’t just decoration here; it’s the foundation for immutable records. Every claim, every evaluation, every consensus outcome gets etched on-chain: who checked it, what they concluded, and the final agreement. Verification transforms from fleeting opinions into auditable, traceable history.
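The auditability idea can be illustrated with a simple hash-chained log, where each record commits to the one before it. This is a local stand-in for an on-chain record, not Mira’s actual data format; the field names and the `append_record` and `verify_log` helpers are assumptions.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(body: Dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: List[Dict], claim: str, votes: Dict[str, str], outcome: str) -> Dict:
    """Append a record of who voted what, linked to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"claim": claim, "votes": votes, "outcome": outcome, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify_log(log: List[Dict]) -> bool:
    """Recompute every hash and link; any edit to a past record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


log: List[Dict] = []
append_record(log, "Paris is the capital of France", {"a": "true", "b": "true"}, "true")
append_record(log, "The Moon is made of cheese", {"a": "false", "b": "false"}, "false")
print(verify_log(log))
```

Changing any stored vote or outcome after the fact makes `verify_log` fail, which is the property that turns a verification into a traceable record.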

High-stakes sectors demand this level of rigor:

• Finance rejects “mostly right.”

• Healthcare can’t settle for “usually accurate.”

• Legal processes break without verifiable foundations.

Mira’s architecture delivers exactly what these fields require: outputs that can be dissected, challenged, and traced back reliably.

The Path Forward: From Oversight to Earned Confidence

The vision isn’t about AI overtaking human judgment; it’s about creating frameworks so robust that delegation becomes safe and natural. When this clicks, the transformation happens subtly: teams quietly drop those final checkpoints, not out of recklessness, but because the system has proven it deserves that freedom.

Mira isn’t chasing flashier models. It’s pioneering verifiable intelligence: AI that’s accountable, transparent, and worthy of real responsibility.
