We are witnessing a structural shift in how artificial intelligence operates. We are moving past the era of simple chatbots and entering a phase where AI agents autonomously manage capital, execute decentralized trades, and handle complex legal and financial data. But there is a large, often ignored elephant in the room: hallucinations.

When an AI confidently generates a fake statistic or a biased conclusion, it might be a minor inconvenience in casual conversation. But in high-stakes environments such as algorithmic trading or decentralized finance, a single unverified hallucination can result in millions of dollars in losses. Confidence without verification is no longer just a technical glitch; it is a systemic risk.

This exact vulnerability is what @Mira - Trust Layer of AI is working to solve. The protocol is pushing a fundamental shift in machine learning infrastructure: moving away from the model of "trust the AI" and toward "verify the AI".

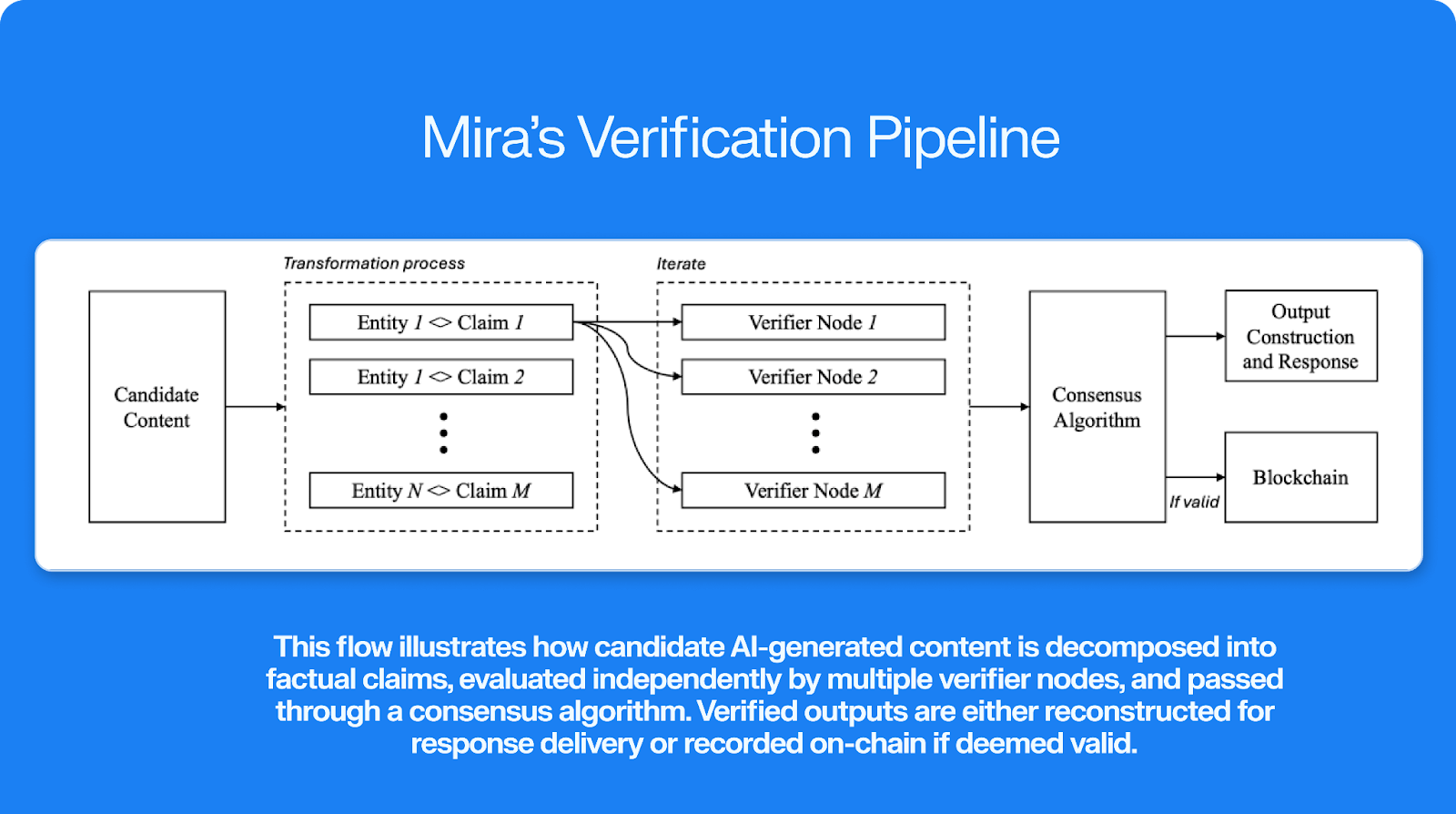

Instead of relying on a single, centralized corporate model to police itself, the network operates on the Base blockchain to create a trustless verification layer. The technical architecture works through a fascinating process called "Claim Decomposition". When an AI generates a response, the protocol immediately breaks the complex output down into smaller, atomic factual claims. These individual claims are then distributed across a decentralized network of Verifier Nodes.
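
To make the decomposition step concrete, here is a minimal Python sketch. It is not the protocol's implementation: the sentence-splitting heuristic, the `Claim` type, and the `decompose` and `assign` helpers are all hypothetical stand-ins. A real system would likely use a model to extract atomic claims rather than a regex.

```python
import random
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: int
    text: str


def decompose(output: str) -> list[Claim]:
    """Naive stand-in for claim decomposition: one claim per sentence.

    Assumption: each sentence carries one checkable statement. A production
    system would need something far more careful than this.
    """
    parts = [p.strip() for p in re.split(r"(?<=[.!?])\s+", output) if p.strip()]
    return [Claim(i, p) for i, p in enumerate(parts)]


def assign(claims: list[Claim], node_ids: list[str], per_claim: int,
           seed: int = 0) -> dict[int, list[str]]:
    """Send each claim to `per_claim` distinct, randomly chosen verifier nodes."""
    rng = random.Random(seed)
    return {c.claim_id: rng.sample(node_ids, per_claim) for c in claims}


claims = decompose("Paris is in France. The Seine flows through Paris.")
routing = assign(claims, ["node-a", "node-b", "node-c", "node-d"], per_claim=3)
```

Random assignment is used here so that no single node sees, or can predict, the full set of claims it will be asked to check; whether the network routes claims this way is an assumption of the sketch.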

These nodes don't rely on just one system; they run a diverse ensemble of leading open-source and proprietary models (such as GPT-4o and Llama 3.3) to independently cross-check each claim. Only when a supermajority of these independent verifiers agree does the output receive a cryptographic certificate attesting to the verification result. This multi-model approach is designed to reduce error rates and filter out the systematic biases that affect single-model ecosystems.
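
The supermajority rule can be sketched in a few lines of Python. The two-thirds threshold, the `consensus` function name, and the three outcome labels are assumptions made for illustration; the article does not state the actual threshold the network uses.

```python
from collections import Counter
from fractions import Fraction


def consensus(votes: dict[str, bool],
              threshold: Fraction = Fraction(2, 3)) -> str:
    """Return 'verified', 'rejected', or 'no_consensus' for one claim.

    `votes` maps a verifier node id to its verdict (True = claim holds).
    The 2/3 default is an assumed value, not a documented protocol constant.
    """
    if not votes:
        return "no_consensus"
    tally = Counter(votes.values())
    total = len(votes)
    if Fraction(tally[True], total) >= threshold:
        return "verified"
    if Fraction(tally[False], total) >= threshold:
        return "rejected"
    return "no_consensus"


# Three of four independent verifiers agree the claim holds.
result = consensus({"node-a": True, "node-b": True, "node-c": True, "node-d": False})
```

Exact fractions are used instead of floats so that a vote sitting precisely on the threshold is not misclassified by rounding.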

What makes this system resilient is its economic foundation. The native token, $MIRA, powers a hybrid cryptoeconomic model. Node operators must stake tokens to participate in the consensus process. If they verify data honestly and accurately, they are rewarded with network fees. If they act maliciously, approve false data, or run lazy validations, their staked capital is slashed. This aligns financial incentives with truth-telling and makes attacks on the network economically costly.
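
The incentive logic can be illustrated with a toy settlement routine. Everything here is a simplifying assumption: the `Node` type, the `settle` function, the 10% slash rate expressed in basis points, and especially the rule that a single vote against the consensus outcome triggers slashing. A real protocol would more plausibly penalize sustained patterns of deviation rather than one disagreement.

```python
from dataclasses import dataclass


@dataclass
class Node:
    node_id: str
    stake: int  # token amount in smallest units, kept as int to avoid float drift


def settle(nodes: list[Node], votes: dict[str, bool], outcome: bool,
           fee_pool: int, slash_bps: int = 1000) -> None:
    """Reward nodes that matched the consensus outcome and slash the rest.

    slash_bps is in basis points (1000 = 10%); the value is hypothetical.
    Fees are split equally among matching nodes; any remainder is left unpaid.
    """
    honest = [n for n in nodes if votes[n.node_id] == outcome]
    for n in nodes:
        if votes[n.node_id] != outcome:
            n.stake -= n.stake * slash_bps // 10_000
    if honest:
        share = fee_pool // len(honest)
        for n in honest:
            n.stake += share


nodes = [Node("node-a", 100), Node("node-b", 100), Node("node-c", 100)]
settle(nodes, {"node-a": True, "node-b": True, "node-c": False},
       outcome=True, fee_pool=6)
# node-a and node-b each gain 3; node-c loses 10% of its stake.
```

The point of the sketch is the asymmetry: guessing or rubber-stamping costs more in expected slashing than it earns in fees, as long as the slash rate is large relative to the per-claim reward.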

This infrastructure is already live and processing significant volume. Ecosystem applications like the Klok AI chat app are using this decentralized architecture to process hundreds of millions of tokens daily, bringing a reported 95%+ verified accuracy to everyday users without relying on a centralized intermediary.

If AI is truly going to become the backbone of the next-generation digital economy, verification cannot be an afterthought; it must be the base layer. By tackling the reliability trilemma, #Mira is positioning itself as critical middleware for a future where machine intelligence is both autonomous and verifiable.

Disclaimer: This article is for educational and analytical purposes only and does not constitute financial, investment, or trading advice. Cryptocurrency markets are highly volatile. Always conduct your own thorough research and consult with a certified financial professional before making any investment decisions.