Decentralized verification protocols for artificial intelligence have emerged as a practical response to one of the most serious weaknesses in modern AI systems: reliability. As large language models and autonomous agents expand into finance, healthcare, governance, and enterprise infrastructure, their tendency to generate confident but occasionally incorrect outputs has shifted from a minor technical issue to a structural risk. In high-impact environments, assumptions are not enough; outputs must be verifiable. This is where decentralized verification begins to redefine the AI trust layer.

Traditional oversight models rely on internal audits, reinforcement learning from human feedback, and centralized evaluation pipelines. While these mechanisms improve performance, they do not eliminate the risk of hallucinations or systemic bias. More importantly, they require users to trust the institution behind the model. Decentralized verification protocols challenge this structure by introducing distributed validation, economic incentives, and blockchain-backed consensus to transform AI outputs into verifiable information.

Recent developments in the ecosystem show that this category is evolving beyond simple cross-model comparisons. Early concepts focused on having multiple AI systems agree on an answer. Today, more advanced architectures break AI-generated responses into smaller factual claims, distribute those claims to independent validators, and finalize outputs only after consensus thresholds are met. Protocols such as Mira Network demonstrate how claim decomposition combined with cryptoeconomic incentives can create a trust-minimized verification layer. Instead of assuming correctness through repetition, these systems attach economic consequences to inaccurate validation, aligning incentives toward accuracy.
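The decompose, distribute, and finalize flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any protocol's actual implementation: the function names, the 0.66 threshold, and the toy validators are all hypothetical, and claim decomposition is assumed to have already happened.

```python
from collections import Counter

def finalize_claim(votes, threshold=0.66):
    """Return the majority verdict if its vote share meets the threshold, else None."""
    if not votes:
        return None
    verdict, count = Counter(votes).most_common(1)[0]
    return verdict if count / len(votes) >= threshold else None

def verify_response(claims, validators, threshold=0.66):
    """Send every claim to every validator and finalize each claim independently."""
    results = {}
    for claim in claims:
        votes = [validate(claim) for validate in validators]
        results[claim] = finalize_claim(votes, threshold)
    return results

# Toy validators standing in for independent models or node operators (assumption).
KNOWN_FACTS = {"Water boils at 100 C at sea level"}

def strict(claim):
    return claim in KNOWN_FACTS

def lenient(claim):
    return True

claims = ["Water boils at 100 C at sea level", "The Moon is made of cheese"]
results = verify_response(claims, [strict, strict, lenient])
print(results)
```

Here the first claim is finalized as `True` by a unanimous vote and the second as `False` by two of three validators. A claim whose leading verdict falls short of the threshold comes back as `None`, meaning it is not finalized either way.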

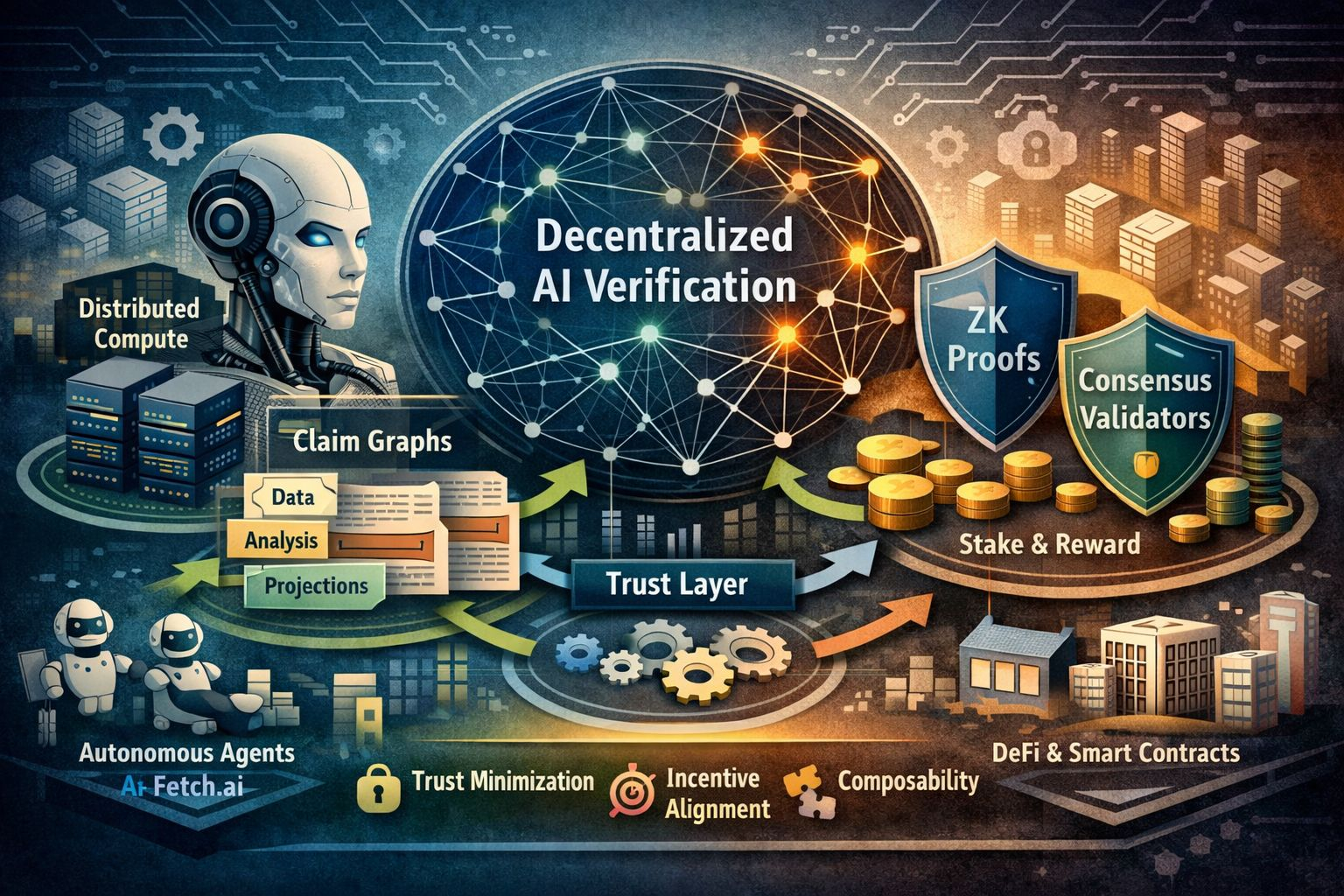

Another important shift is interoperability. Decentralized verification is no longer operating in isolation. It is gradually integrating with distributed compute and agent-based blockchain ecosystems. Networks like Golem Network provide distributed computation, while platforms such as Fetch.ai enable autonomous agents to interact across decentralized environments. Verification protocols can function as a reliability layer within these ecosystems, ensuring that AI-driven decisions are validated before execution. This layered design improves resilience without sacrificing decentralization.

From a market perspective, decentralized verification protocols occupy a unique position. They are not model developers like OpenAI or Google DeepMind, and they are not merely infrastructure providers. Instead, they serve as a middleware trust layer between AI output and real-world application. As AI adoption accelerates, the absence of an independent verification layer becomes more visible. Decentralized verification attempts to fill that structural gap.

Different approaches exist within this category. Some projects explore cryptographic verification methods, including zero-knowledge proofs to confirm correct model execution. Others rely on reputation-based validator networks that stake tokens to attest to accuracy. A third model combines AI ensembles with blockchain consensus. Compared to purely cryptographic methods, consensus-based systems often offer lower computational costs while maintaining strong probabilistic reliability. Compared to centralized ensemble methods, decentralized systems remove single points of failure and reduce institutional bias.

The main advantage of decentralized verification rests on three properties: trust minimization, incentive alignment, and composability. Trust minimization means that no single entity controls validation. Incentive alignment rewards accurate validators and penalizes dishonest behavior. Composability allows verified AI outputs to integrate directly with smart contracts, decentralized finance applications, governance systems, and autonomous agents. Together, these attributes create a reliability framework designed for open digital economies.
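Incentive alignment is easiest to see as a settlement rule applied after each verification round. The sketch below is one possible rule, assuming validators stake integer token units and that rewards and penalties are expressed in basis points; the names `settle_round`, `reward_bps`, and `slash_bps`, and the rates themselves, are hypothetical and not taken from any specific protocol.

```python
def settle_round(stakes, votes, final_verdict, reward_bps=500, slash_bps=2000):
    """Return updated stakes after one round.

    Validators whose vote matched the finalized verdict earn a reward;
    the others lose part of their stake. Rates are in basis points (1/100 of 1%).
    """
    updated = {}
    for validator, stake in stakes.items():
        if votes[validator] == final_verdict:
            updated[validator] = stake + stake * reward_bps // 10_000
        else:
            updated[validator] = stake - stake * slash_bps // 10_000
    return updated

# Hypothetical validators, each staking 1000 units.
stakes = {"alice": 1000, "bob": 1000, "carol": 1000}
votes = {"alice": True, "bob": True, "carol": False}
new_stakes = settle_round(stakes, votes, final_verdict=True)
print(new_stakes)  # {'alice': 1050, 'bob': 1050, 'carol': 800}
```

Setting the penalty well above the reward, as in this example, is a design choice that makes careless or dishonest voting costly relative to honest participation. The right ratio in practice depends on the protocol's economics.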

A defining innovation is the transformation of AI responses into structured claim graphs. Instead of validating entire paragraphs as single outputs, the system evaluates smaller factual units. For example, a financial report generated by AI may include statistical data, regulatory interpretations, and forward-looking projections. A decentralized validator network can approve factual statistics while flagging speculative assumptions. This layered evaluation increases precision and improves transparency.
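A claim graph of this kind can be modeled as a list of claims that reference the claims they depend on. The sketch below is illustrative only: the `Claim` fields, the three `kind` labels, the rule that a claim resting on an unapproved dependency is flagged, and the sample report text are all assumptions made for the example. The `fact_check` function stands in for a validator network.

```python
from dataclasses import dataclass, field

@dataclass
class Claim:
    id: str
    text: str
    kind: str  # "statistic", "interpretation", or "projection"
    depends_on: list = field(default_factory=list)

def evaluate(claims, fact_check):
    """Label each claim 'approved', 'rejected', or 'flagged'.

    Claims must be listed after the claims they depend on.
    """
    status = {}
    for claim in claims:
        if claim.kind == "projection":
            status[claim.id] = "flagged"  # speculative: surfaced, not certified
        elif any(status[dep] != "approved" for dep in claim.depends_on):
            status[claim.id] = "flagged"  # rests on something unverified
        else:
            status[claim.id] = "approved" if fact_check(claim) else "rejected"
    return status

# Hypothetical claims extracted from an AI-generated financial report.
report = [
    Claim("c1", "Q3 revenue was 12.4M", "statistic"),
    Claim("c2", "Revenue exceeds the reporting threshold", "interpretation", ["c1"]),
    Claim("c3", "Revenue will grow 20% next year", "projection", ["c1"]),
    Claim("c4", "Hiring should double", "interpretation", ["c3"]),
]

def fact_check(claim):
    # Stand-in for validator consensus (assumption).
    return claim.id in {"c1", "c2"}

status = evaluate(report, fact_check)
print(status)
```

In this example `c1` and `c2` are approved, while the projection `c3` and the recommendation `c4` that builds on it are flagged rather than certified.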

Compared to centralized AI governance frameworks, decentralized verification introduces broader participation. Traditional AI companies rely heavily on internal testing, curated benchmarks, and human feedback loops. While effective, these remain institutionally controlled processes. Decentralized systems open validation to independent participants, increasing diversity of evaluation and reducing correlated blind spots. Blockchain-based transparency also allows stakeholders to audit how consensus was achieved.

Of course, challenges remain. Multi-stage verification can introduce latency compared to single-model inference. Economic models must be carefully balanced to prevent collusion among validators. Scalability becomes critical as demand increases. However, ongoing improvements such as batching mechanisms, adaptive consensus thresholds, and off-chain computation with on-chain finality are reducing this overhead. Layer-2 scaling solutions further reduce transaction costs, making micro-verification more practical.
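One common way to combine batching with off-chain computation and on-chain finality is to hash a batch of verification results into a single Merkle root and commit only that root on-chain. Whether a given protocol does this is not stated here; the sketch below simply illustrates the general technique, and the record format is hypothetical.

```python
import hashlib

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root(leaves):
    """Compute a Merkle root over byte strings, duplicating the last node on odd levels."""
    if not leaves:
        raise ValueError("cannot build a Merkle root from an empty batch")
    level = [_h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # pad odd levels by repeating the last node
        level = [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]

# Hypothetical batch of per-claim verification results computed off-chain.
batch = [b"claim-1:approved", b"claim-2:rejected", b"claim-3:approved"]
root = merkle_root(batch)
print(root.hex())  # the only value that would need to be written on-chain
```

Changing any single result changes the root, so one 32-byte commitment covers the whole batch. Production designs usually add domain separation between leaf and interior hashes and avoid naive last-node duplication, both of which this sketch omits for brevity.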

When assessing merit, decentralized verification protocols score strongly in innovation and long-term strategic relevance. Technological robustness improves when validator diversity is high. Economic sustainability depends on balanced staking incentives and penalty structures. Governance maturity increases as protocols move toward community-led participation. Market readiness grows with enterprise integrations and ecosystem partnerships.

It is also important to differentiate verification protocols from adjacent sectors. Platforms like SingularityNET focus on AI service marketplaces, enabling model exchange and monetization. Infrastructure networks such as Render Network provide distributed GPU resources. While both contribute to decentralized AI infrastructure, they do not inherently solve the reliability problem. Verification protocols address correctness and trust rather than compute supply or marketplace liquidity.

Regulatory trends further strengthen the case for decentralized verification. As governments emphasize transparency, accountability, and auditability in AI systems, organizations will require mechanisms that provide verifiable assurance without exposing proprietary model details. A neutral verification layer offers a practical solution—outputs can be externally validated while preserving internal intellectual property.

Looking forward, decentralized verification may become a standard layer within AI pipelines, particularly in high-stakes environments such as finance, automated trading, and decentralized governance. In ecosystems where AI agents execute transactions autonomously, incorrect outputs can trigger financial consequences. Integrating decentralized validation before execution reduces systemic risk and increases operational confidence.

Overall, decentralized verification protocols represent a meaningful evolution in AI infrastructure. They shift the trust model from institutional authority to distributed consensus. Protocols like Mira Network illustrate how cryptoeconomic design can be applied to epistemic validation—turning correctness into a measurable, incentivized process. While still early in adoption, the structural need for verifiable AI outputs is becoming increasingly clear. As artificial intelligence moves deeper into mission-critical domains, decentralized verification is positioned to become an essential trust layer in the next phase of AI development.