For years, the conversation around artificial intelligence has focused on building bigger and smarter models. Every new release promises improved reasoning, better understanding, and fewer mistakes. Yet despite all that progress, one problem keeps resurfacing: even the most advanced AI can still be confidently wrong.

That realization changed the way I think about AI reliability.

The issue isn’t only that models make mistakes; humans do too. The real issue is that most systems expect us to trust a single answer produced by a single source. When one model generates an output, we rarely see the internal uncertainty behind it. The response feels complete, polished, and final, even when parts of it may be inaccurate.

This is where the idea behind Mira starts making sense.

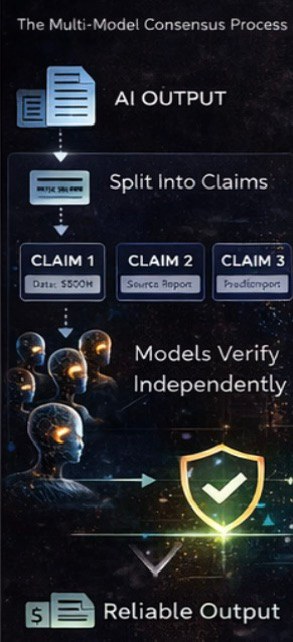

Instead of treating AI outputs as absolute truth, @Mira - Trust Layer of AI approaches them as claims that need verification. And more importantly, it doesn’t rely on a single model to decide what is correct. Multiple models can evaluate the same information independently, and consensus emerges from agreement rather than confidence.
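To make that concrete, here is a minimal sketch of what agreement-based verification could look like. It is not Mira's actual implementation: the `Verifier` type, the `verify_claim` function, the verdict labels, and the 0.66 threshold are all hypothetical names and values chosen for illustration, and the "models" are toy functions with fixed answers.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Sequence

# Hypothetical stand-in for a model call: takes a claim, returns a verdict label.
Verifier = Callable[[str], str]


@dataclass
class ConsensusResult:
    verdict: Optional[str]  # None when no verdict reaches the threshold
    agreement: float        # share of verifiers backing the top verdict
    votes: Dict[str, int]   # raw vote counts per verdict


def verify_claim(claim: str, verifiers: Sequence[Verifier],
                 threshold: float = 0.66) -> ConsensusResult:
    """Ask every verifier independently and accept a verdict only on agreement."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = Counter(verifier(claim) for verifier in verifiers)
    top_verdict, top_count = votes.most_common(1)[0]
    agreement = top_count / len(verifiers)
    # The threshold is an assumed value; a claim without enough agreement stays unverified.
    verdict = top_verdict if agreement >= threshold else None
    return ConsensusResult(verdict, agreement, dict(votes))


# Toy verifiers with fixed answers, standing in for independent models.
models = [lambda c: "supported", lambda c: "supported",
          lambda c: "supported", lambda c: "unsupported"]
result = verify_claim("Water boils at 100 °C at sea level.", models)
print(result.verdict, result.agreement)
```

The point of the sketch is that the accepted verdict depends on how many verifiers agree, not on how confident any one of them sounds. A claim that splits the verifiers simply comes back unverified.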

That difference sounds small, but it changes everything.

Think about how humans make important decisions. We rarely trust one opinion when the stakes are high. We cross-check, compare perspectives, and look for alignment. In many ways, Mira brings that same logic into AI systems, replacing blind trust with structured validation.

When multiple independent models arrive at the same conclusion under verification rules, reliability increases naturally. Not because one system became perfect, but because independent models are unlikely to make the same mistake at the same time, so agreement across systems becomes harder to fake.
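A bit of arithmetic shows why agreement helps. The sketch below assumes every model is wrong 10% of the time, that their errors are fully independent, and that wrong models all land on the same wrong answer (the worst case for a majority vote). The 10% figure and the function name `majority_error_probability` are illustrative assumptions, not numbers or code from Mira.

```python
from math import comb


def majority_error_probability(n_models: int, error_rate: float) -> float:
    """Probability that a strict majority of n independent models is wrong."""
    majority = n_models // 2 + 1
    # Binomial tail: sum the chance of exactly k wrong models for every k >= majority.
    return sum(
        comb(n_models, k) * error_rate**k * (1 - error_rate)**(n_models - k)
        for k in range(majority, n_models + 1)
    )


# Compare a single model with panels of three and five models.
for n in (1, 3, 5):
    print(n, round(majority_error_probability(n, 0.10), 5))
```

Under these assumptions, the chance of a wrong majority falls from 10% for a single model to 2.8% for three and under 1% for five. Real models often share training data and blind spots, so their errors are correlated and the actual gain is smaller, which is why independence between verifiers matters so much.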

This approach also changes how risk is distributed.

If one model introduces bias or hallucination, the network isn’t forced to accept it immediately. Other models challenge the claim, slowing down the spread of incorrect information. Reliability becomes a process instead of a single prediction.

What I find most interesting is that this idea moves AI closer to how blockchains solved trust problems in finance. Blockchains didn’t eliminate risk by assuming one participant was always honest. They built consensus systems where truth emerges from coordination.

Mira applies that philosophy to intelligence itself.

And when you think about the future (autonomous agents making decisions, financial systems using AI analysis, applications acting without human supervision), this becomes even more important. A single wrong answer isn’t just an error anymore; it becomes an operational risk.

Multi-model consensus introduces a safety layer that feels necessary for that future.

Another reason this architecture matters is scalability. Human verification cannot keep up with the growing volume of AI-generated outputs. If every answer requires manual checking, progress slows down quickly. Decentralized verification allows reliability to scale without depending entirely on humans.

This doesn’t mean AI becomes perfect overnight.

Instead, it means trust becomes measurable.

And that’s a subtle but powerful shift.

When people talk about the next evolution of AI, they usually imagine smarter models. After looking deeper into verification systems like Mira, I think the real evolution might be something else entirely: systems that make intelligence accountable.

Because in the long run, the question isn’t how smart an AI can become.

The real question is whether we can trust it when it matters most.

#Mira $MIRA @Mira - Trust Layer of AI