If $MIRA became the default verification layer for autonomous weapons and central bank AIs, would consensus validators quietly replace regulators as the real power centers?

Last month, I was wiring funds through my banking app when the screen froze for three seconds on a “processing risk assessment” banner. The amount hadn’t changed. The recipient was saved. But when the UI refreshed, the exchange rate had subtly shifted, and a small compliance fee appeared that hadn’t been previewed. No alert. No explanation. Just a backend decision I never explicitly agreed to. The system moved first; I reacted later.

It wasn’t fraud. It wasn’t even malfunction. It was something quieter — a structural asymmetry. The institution’s AI made a compliance judgment, applied it in real time, and the contract between us was effectively rewritten mid-execution. I couldn’t audit the model. I couldn’t see the parameters. I couldn’t challenge the decision in the moment. Power didn’t feel abusive. It felt invisible.

That quiet misalignment is becoming the operating system of modern digital governance. Autonomous systems price risk, approve loans, throttle content, flag transactions, and soon — potentially guide monetary policy and military targeting. The regulators overseeing these systems still operate in cycles: quarterly audits, annual reviews, reactive enforcement. But AI systems act in milliseconds. Oversight has become periodic; execution is continuous. The result is an accountability gap wide enough to hide structural power shifts.

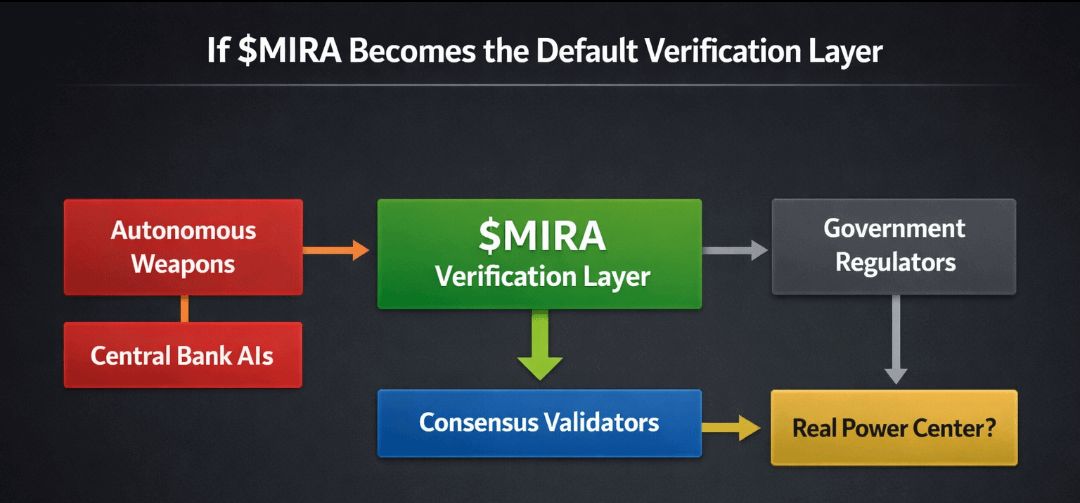

I’ve been thinking about this as a problem of temporal authority. Whoever validates decisions in real time, at the speed of execution, effectively governs outcomes. Traditional regulators validate ex post — after action. But AI systems that operate central bank liquidity programs or autonomous weapons cannot wait for after-the-fact review. They require synchronous validation. Whoever provides that layer becomes the true choke point.

Ethereum, Solana, Avalanche — each ecosystem has grappled with execution and verification differently. Ethereum optimized for credible neutrality, accepting latency and higher costs to preserve decentralization. Solana pushed toward high-throughput execution, compressing time between decision and finality. Avalanche experimented with probabilistic consensus to accelerate agreement. But in all three cases, validation primarily secures financial state transitions. The subject matter is tokens, not policy decisions or kinetic outcomes.

Now imagine that the object of validation isn’t just a token transfer — but an AI decision: a central bank liquidity injection, a sanctions flag, a weapons targeting authorization. The validator is no longer confirming math; it is confirming governance logic. That’s a qualitatively different layer of power.

Here is the mental model that reframed it for me: modern institutions are becoming aircraft, but regulators are still operating like air accident investigators. They analyze black boxes after crashes. What we may be missing is a real-time co-pilot network: an independent layer that verifies every maneuver before it executes. It would not control the aircraft; it would cryptographically confirm that the autopilot follows pre-committed constraints.

Only after sitting with that metaphor does a protocol like MIRA begin to make structural sense.

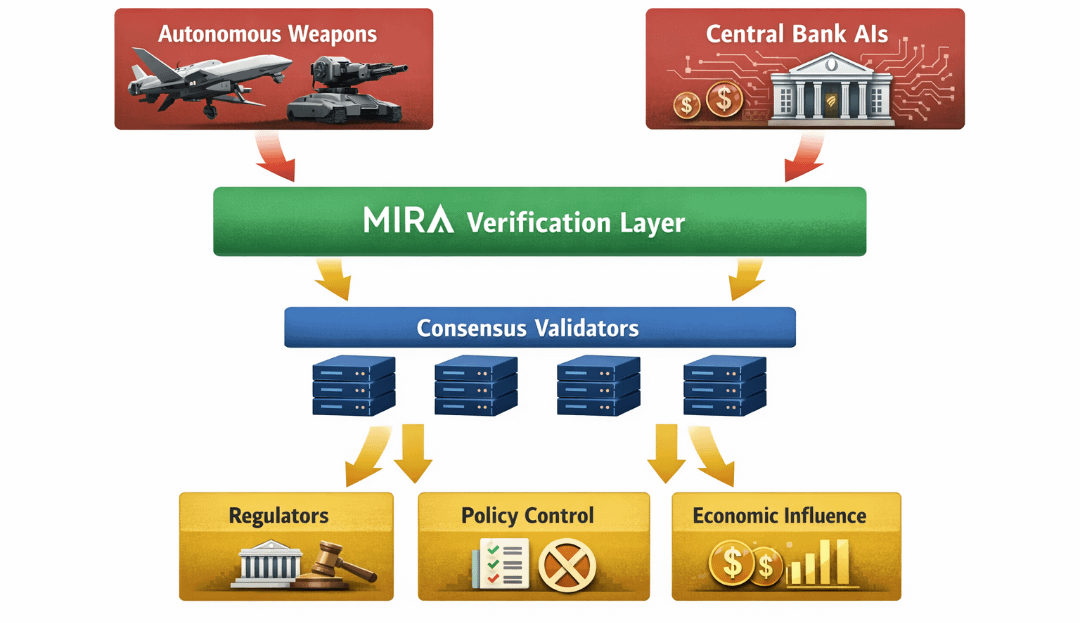

If $MIRA were to become the default verification layer for autonomous weapons systems and central bank AIs, it wouldn’t “replace” regulators in a ceremonial sense. It would shift temporal authority. Its validators would confirm, before execution finality, whether an AI action satisfies predefined policy constraints. The regulator might still define the rules. But the validator network would enforce them at machine speed.

Mechanism matters here.

Architecturally, such a system would require three layers:

1. Policy Encoding Layer — where regulatory constraints are formalized into verifiable logic.

2. Execution Interface Layer — where autonomous systems submit intended actions together with proofs of compliance.

3. Consensus Verification Layer — where distributed validators attest to compliance before action finalizes.
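To make the three layers concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Action` type, the two example rules, the cap of 1,000,000, and the two-thirds quorum are hypothetical and not taken from any MIRA specification. Plain predicates stand in for "verifiable logic", and a validator is just a function that returns a vote.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Action:
    """Hypothetical description of an intended AI action."""
    actor: str
    kind: str
    amount: int


# 1. Policy encoding layer: each constraint is a named predicate over an action.
Policy = Dict[str, Callable[[Action], bool]]

POLICY: Policy = {
    "known_kind": lambda a: a.kind in {"liquidity_injection", "sanctions_flag"},
    "within_cap": lambda a: a.amount <= 1_000_000,  # assumed cap, illustration only
}


# 2. Execution interface layer: the autonomous system declares what it intends to do.
def submit(actor: str, kind: str, amount: int) -> Action:
    return Action(actor, kind, amount)


# 3. Consensus verification layer: validators vote, and the action finalizes
#    only if at least two thirds of them approve (assumed quorum).
def validator_check(action: Action, policy: Policy) -> bool:
    return all(rule(action) for rule in policy.values())


def finalize(action: Action, validators: List[Callable[[Action], bool]]) -> bool:
    if not validators:
        raise ValueError("at least one validator is required")
    yes = sum(1 for v in validators if v(action))
    return 3 * yes >= 2 * len(validators)  # integer form of yes/n >= 2/3


honest = lambda a: validator_check(a, POLICY)
faulty = lambda a: True  # approves everything, compliant or not

over_cap = submit("cb-ai", "liquidity_injection", 5_000_000)
blocked = finalize(over_cap, [honest, honest, honest, faulty])
captured = finalize(over_cap, [honest, faulty, faulty])
```

In this toy setup `blocked` is false because only one of four validators approves, while `captured` is true because two of three do. The second case is the whole governance problem in miniature: the policy did not change, only the validator set did.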

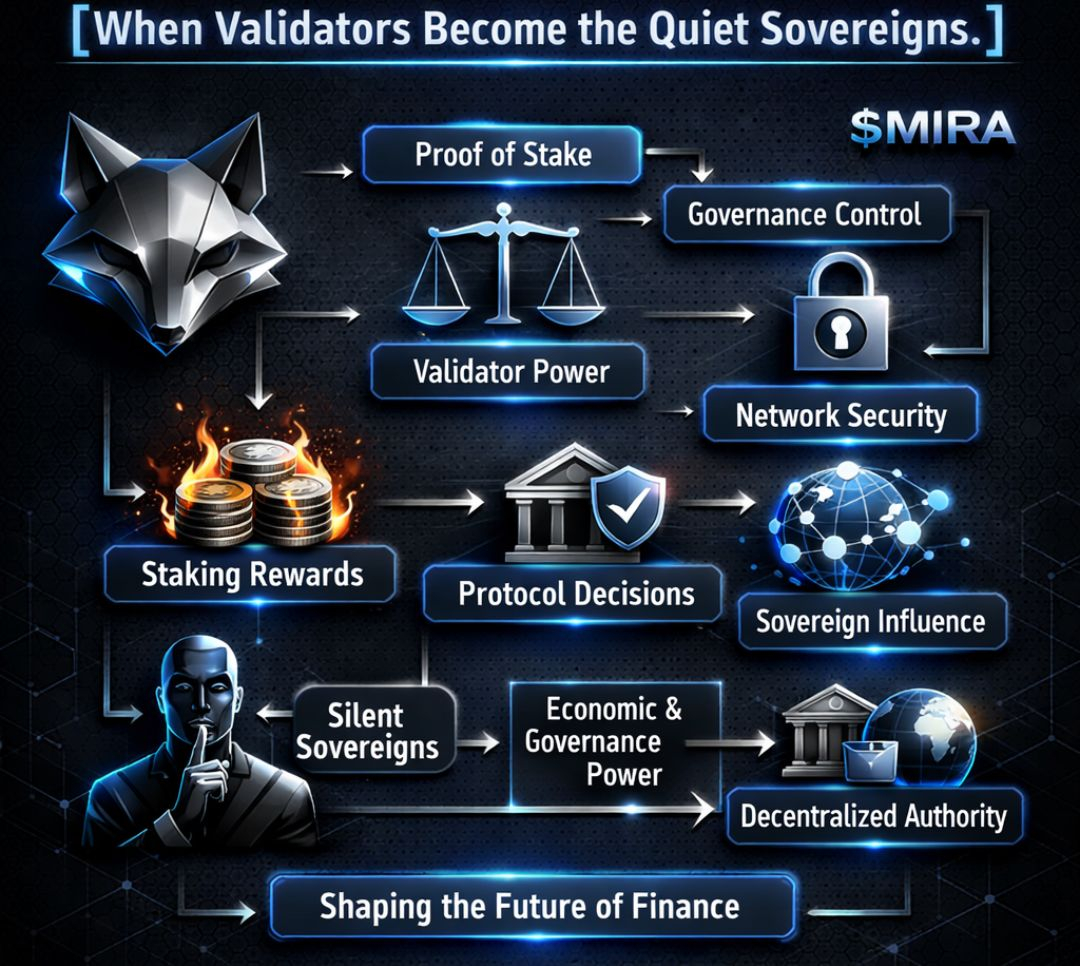

In this model, $MIRA is not an application token; it is the stake securing the integrity of verification. Validators lock $MIRA to participate in attesting AI decisions. If they collude or approve non-compliant actions, their stake can be slashed. This creates an economic firewall around governance logic.
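The lock-and-slash mechanic can be sketched in a few lines. This is a toy ledger under stated assumptions, not how MIRA implements staking: the `StakeRegistry` class, the `settle` helper, and the 50% penalty are all hypothetical, and amounts are integers to keep the arithmetic exact.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable


@dataclass
class StakeRegistry:
    """Toy stake ledger; the 50% slash rate is an assumed parameter."""
    slash_percent: int = 50
    stakes: Dict[str, int] = field(default_factory=dict)

    def lock(self, validator: str, amount: int) -> None:
        if amount <= 0:
            raise ValueError("stake must be positive")
        self.stakes[validator] = self.stakes.get(validator, 0) + amount

    def slash(self, validator: str) -> int:
        # Remove a fixed share of the validator's stake and report the penalty.
        penalty = self.stakes[validator] * self.slash_percent // 100
        self.stakes[validator] -= penalty
        return penalty


def settle(registry: StakeRegistry, approvers: Iterable[str],
           was_compliant: bool) -> Dict[str, int]:
    """Slash every validator that approved an action later found non-compliant."""
    if was_compliant:
        return {}
    return {v: registry.slash(v) for v in approvers}


registry = StakeRegistry()
registry.lock("validator-a", 1000)
registry.lock("validator-b", 400)
penalties = settle(registry, ["validator-a"], was_compliant=False)
```

After this runs, `validator-a` has lost half of its 1000 staked units and `validator-b` is untouched. Note what the sketch quietly assumes: someone supplies `was_compliant`. Who makes that determination, and on what evidence, is exactly the governance question the rest of this piece is about.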

The execution dynamics become interesting. Instead of regulators auditing logs months later, every high-stakes AI action would carry a cryptographic receipt: proof-of-policy-compliance verified by a distributed validator set. The data layer would likely require succinct proofs — potentially zero-knowledge constructions — to avoid exposing sensitive model parameters while still proving constraint adherence.
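A compliance receipt can be illustrated with ordinary hashing. To be clear about the substitution: this is a plain SHA-256 commitment, not a zero-knowledge proof, and it carries no validator signatures. The field names (`action_hash`, `policy_hash`, `attesters`, `receipt_id`) are hypothetical. The sketch only shows how a receipt can bind an action to a policy version without publishing either in the clear.

```python
import hashlib
import json
from typing import Any, Dict, List


def digest(obj: Any) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, no extra whitespace)."""
    payload = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def make_receipt(action: Dict[str, Any], policy: Dict[str, Any],
                 attesters: List[str]) -> Dict[str, Any]:
    # The receipt stores hashes only, so the raw action and policy stay private.
    body = {
        "action_hash": digest(action),
        "policy_hash": digest(policy),
        "attesters": sorted(attesters),
    }
    return {**body, "receipt_id": digest(body)}


def verify_receipt(receipt: Dict[str, Any], action: Dict[str, Any],
                   policy: Dict[str, Any]) -> bool:
    """Check internal consistency and that the receipt matches this action and policy."""
    body = {k: v for k, v in receipt.items() if k != "receipt_id"}
    return (
        receipt.get("receipt_id") == digest(body)
        and body.get("action_hash") == digest(action)
        and body.get("policy_hash") == digest(policy)
    )


action = {"actor": "cb-ai", "kind": "liquidity_injection", "amount": 500000}
policy = {"version": 1, "max_amount": 1000000}
receipt = make_receipt(action, policy, ["validator-b", "validator-a"])
```

An auditor holding the original action and policy can call `verify_receipt` later and detect any after-the-fact edit to either. A real system would also need signatures from the attesting validators and a proof that the action actually satisfies the policy, which a hash alone does not provide.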

The incentive loop is tight.

Autonomous system → submits action proof → validators attest → action executes → validators earn $MIRA fees → stake remains at risk for fraudulent attestations.
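The fee leg of that loop might look like the sketch below. The pro-rata split by stake is my assumption; the source of the fee schedule, the `distribute_fee` name, and the handling of rounding are all hypothetical choices made to keep the example small.

```python
from typing import Dict, Set


def distribute_fee(fee: int, stakes: Dict[str, int],
                   attesters: Set[str]) -> Dict[str, int]:
    """Split a verification fee among attesting validators, pro rata by stake.

    Integer division is used, so any remainder is left undistributed here.
    """
    total = sum(stakes[v] for v in attesters)
    if total == 0:
        return {}
    return {v: fee * stakes[v] // total for v in attesters}


stakes = {"validator-a": 300, "validator-b": 100, "validator-c": 600}
payouts = distribute_fee(100, stakes, {"validator-a", "validator-b"})
```

With these numbers, `validator-a` receives 75 and `validator-b` receives 25, while `validator-c` earns nothing because it did not attest. Paying in proportion to stake rewards the validators with the most at risk, but it also compounds their share over time, which feeds directly into the concentration risk discussed later.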

Value capture emerges from verification demand. If central banks, defense contractors, or sovereign AI operators require credible compliance attestation, they must pay verification fees in $MIRA. The more AI-driven governance expands, the greater the structural demand for validation bandwidth.

Governance within MIRA itself becomes paradoxical. If validators are enforcing policy constraints for sovereign actors, who governs the validators? Likely a hybrid model: token-weighted governance for protocol upgrades, combined with external standard-setting bodies defining compliance schemas. The risk is obvious — validator capture by powerful state actors. The counterweight would need to be economic: widely distributed stake and transparent slashing conditions.

A simple visual would clarify the power shift:

Flow Diagram: AI Governance with and without Verification Layer

Left Column (Traditional Model): AI Decision → Execute → Log Stored → Regulatory Audit (Delayed)

Right Column (MIRA Model): AI Decision → Submit Policy Proof → Validator Consensus → Execute → Immutable Compliance Receipt

The visual matters because it illustrates the temporal inversion. In the traditional model, validation trails execution. In the MIRA model, validation precedes it. That single shift reassigns effective authority.

Second-order effects ripple outward.

Developers building autonomous systems would design for provability from the start. Model architectures might be constrained to allow compliance proofs, favoring interpretable components over opaque black boxes. This could slow certain forms of innovation but increase systemic trust.

Users — or citizens — might begin to demand cryptographic compliance receipts the way we now expect HTTPS locks in browsers. Trust would shift from institutional reputation to validator-set credibility. The question becomes: which validator network do you believe?

But risks are substantial.

If a small coalition accumulates enough $MIRA stake to control the attestation threshold, it could effectively veto or greenlight AI decisions at scale. That is not decentralization; it is validator oligarchy. Moreover, encoding policy into rigid proofs may freeze adaptive governance. Laws evolve. Edge cases emerge. Machine-verifiable constraints can lag political nuance.
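How small is "small"? One rough way to measure it is to count the fewest validators whose combined stake crosses a control threshold. The sketch below assumes stake-weighted voting with BFT-style thresholds (more than one third of stake to block, more than two thirds to approve). Those thresholds are my assumption, since no consensus rule for MIRA is given here, and the stake distribution is invented.

```python
from typing import List


def min_coalition(stakes: List[int], num: int, den: int) -> int:
    """Fewest validators whose combined stake strictly exceeds num/den of the total."""
    total = sum(stakes)
    if total <= 0 or not 0 <= num < den:
        raise ValueError("need positive total stake and a fraction in [0, 1)")
    running = 0
    # Greedily take the largest holders first; this yields the minimum count.
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running * den > total * num:  # integer form of running/total > num/den
            return count
    return len(stakes)  # not reached when num < den, kept for completeness


stakes = [40, 25, 15, 10, 10]  # invented distribution, total 100
veto_size = min_coalition(stakes, 1, 3)     # validators needed to block
approve_size = min_coalition(stakes, 2, 3)  # validators needed to greenlight
```

In this invented distribution a single validator holding 40% can block every decision on its own, and the top three together can approve anything. A network can have hundreds of validators and still fail this test.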

There is also a darker possibility: states might outsource moral responsibility to the validator layer. “The network approved it” could become the new bureaucratic shield. Accountability diffuses across nodes.

Yet the structural trajectory seems clear. As AI systems accelerate decision-making beyond human supervisory speed, real-time verification layers become inevitable. The question is not whether such layers will exist, but who controls them.

If MIRA were to anchor that layer for autonomous weapons and central bank AIs, consensus validators would not ceremonially replace regulators. They would quietly displace them in the only dimension that ultimately matters: the moment before action becomes irreversible.

Power does not migrate with headlines. It migrates with timing. And whoever validates first, governs last.