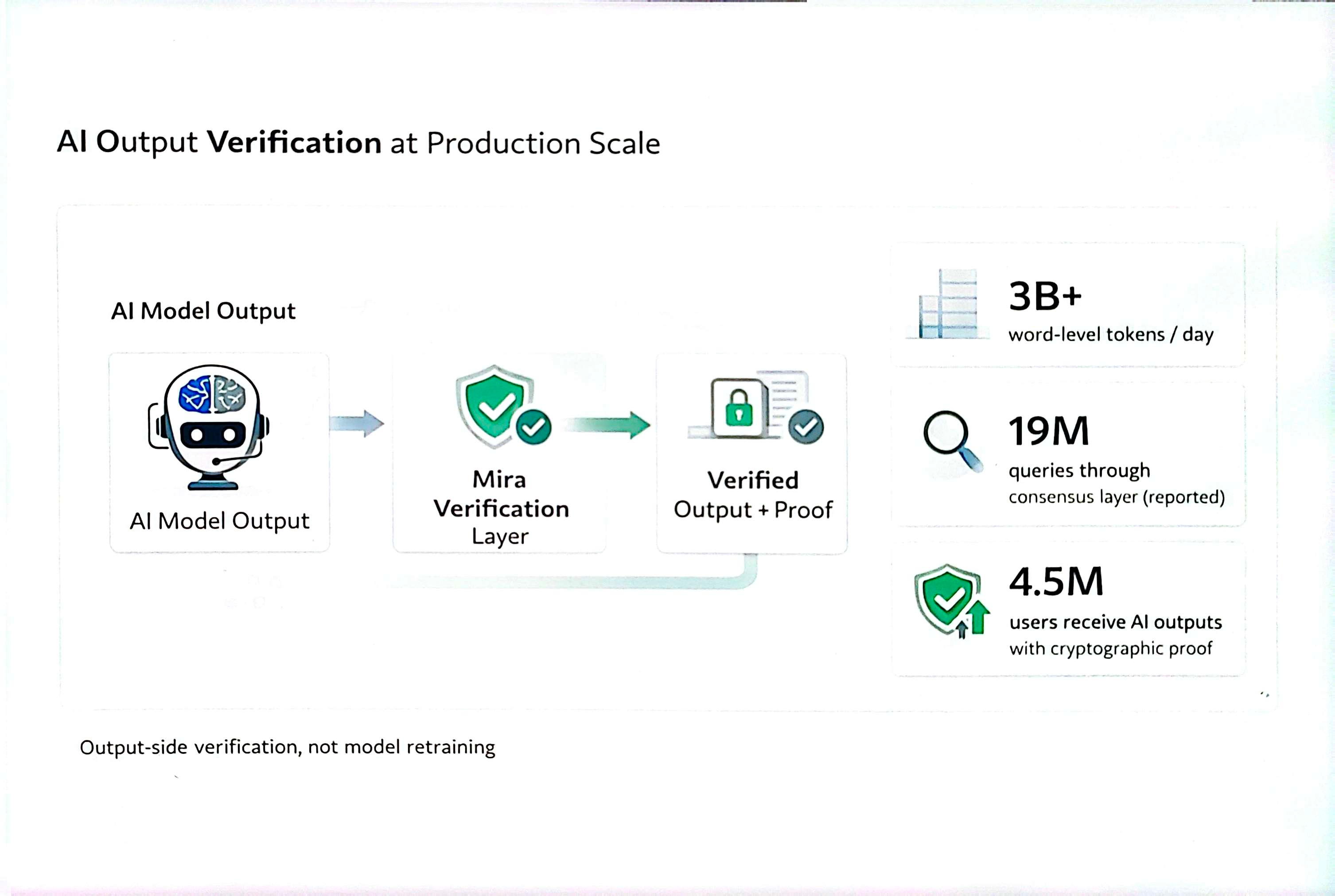

I went through Mira's production data, and the scale changes the conversation. The protocol processes over 3 billion word-level tokens every day. Each day, 19 million queries pass through the consensus layer, and 4.5 million users receive AI outputs with a cryptographic proof.

Most discussion of AI reliability focuses on model training. Mira works on the output side. Verified AI output is becoming a requirement in enterprise software. The reported improvement from 70 percent to 96 percent accuracy happens at the verification layer alone.

When Proof-of-Work Means Running Inference

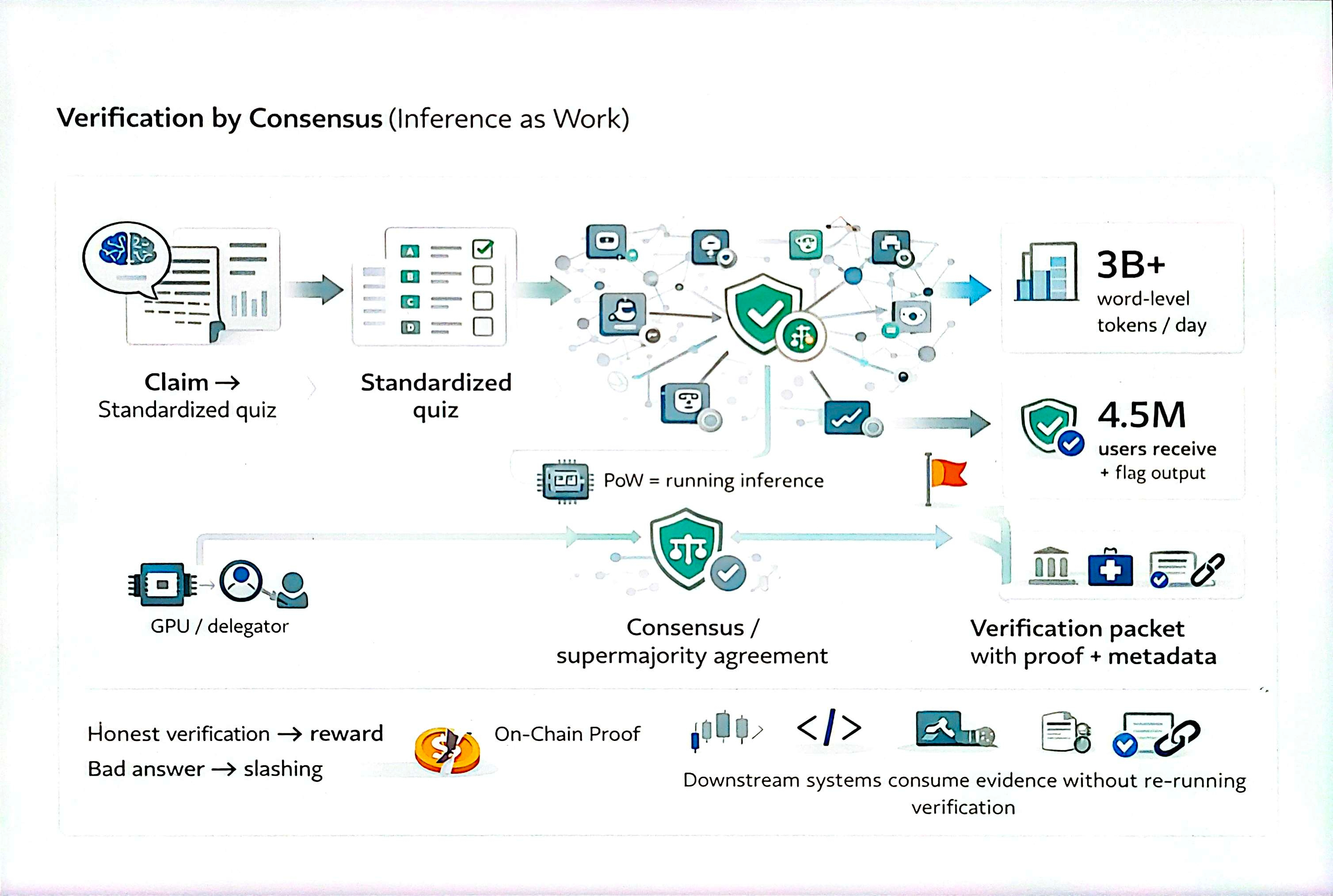

Here is what most people miss. Mira's hybrid PoW/PoS mechanism does not ask nodes to solve random cryptographic hashing puzzles. Here, proof-of-work means running AI inference on standardized multiple-choice quiz questions built from each claim.

Every verifiable claim becomes a quiz that 110+ independent models answer in isolation. The answers are aggregated, and validity is decided by majority agreement. The node delegator quest begins here: solve the inference task honestly and earn the reward; submit a bad answer and face slashing.
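The quiz-and-settle loop described above can be sketched in a few lines. This is a minimal illustration, not Mira's implementation: `NodeAnswer`, `settle_quiz`, and the flat reward and penalty amounts are hypothetical, and it assumes a "bad answer" simply means one that disagrees with the aggregated result.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeAnswer:
    node_id: str  # hypothetical node identifier
    choice: str   # the option the node's model picked, e.g. "A" or "B"


def settle_quiz(answers, reward=1.0, penalty=1.0):
    """Aggregate isolated answers and compute each node's payout.

    Assumption: nodes matching the most common answer earn `reward`;
    all others lose `penalty` (a stand-in for slashing).
    """
    if not answers:
        raise ValueError("no answers to settle")
    tally = Counter(a.choice for a in answers)
    winner, _ = tally.most_common(1)[0]
    payouts = {
        a.node_id: reward if a.choice == winner else -penalty
        for a in answers
    }
    return winner, payouts


answers = [
    NodeAnswer("node-1", "A"),
    NodeAnswer("node-2", "A"),
    NodeAnswer("node-3", "B"),
]
winner, payouts = settle_quiz(answers)
print(winner, payouts)
```

A real network would also need a rule for ties and for nodes that fail to answer; this sketch leaves both out.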

110 Models and a Supermajority Rule

The core challenge that decentralized AI verification has to solve is model diversity. Similar models make correlated errors. Mira runs more than 110 AI models simultaneously, with distinct architectures and training sources. A blind spot in one type of model does not make it through consensus.
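A rough calculation shows why diversity matters. The sketch below compares two extremes under stated assumptions: a hypothetical 30 percent per-model error rate, wrong models all landing on the same wrong answer (a worst case), and the two-thirds rule from this section. With fully correlated models, a wrong result passes whenever the shared blind spot is hit; with independent models, at least 74 of 110 would have to fail at once.

```python
from math import comb


def prob_at_least(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


N_MODELS = 110     # model count from the text
ERROR_RATE = 0.30  # hypothetical per-model error rate, for illustration only
NEEDED = 74        # smallest count reaching two-thirds of 110

# Independent errors: at least NEEDED models must be wrong at the same time.
independent = prob_at_least(NEEDED, N_MODELS, ERROR_RATE)

# Fully correlated errors: all models fail together, so the ensemble is
# no better than a single model.
correlated = ERROR_RATE

print(f"independent: {independent:.2e}  correlated: {correlated:.2f}")
```

Real models sit somewhere between these extremes, which is why architecture and training-source diversity is the variable that matters.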

Two-thirds of the nodes must agree before an output passes. When models diverge, the output is flagged, and teams can learn directly from that disagreement signal. For code review and trading pipeline analysis, catching errors before an autonomous agent acts pays back the verification cost quickly.
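The two-thirds rule can be expressed as a small function. This is a sketch under assumptions: `check_consensus` and the result fields are hypothetical names, and treating exactly two-thirds as passing is a guess, since the boundary case is not specified here.

```python
from collections import Counter
from fractions import Fraction

SUPERMAJORITY = Fraction(2, 3)  # threshold from the text


def check_consensus(votes, threshold=SUPERMAJORITY):
    """Return 'verified' if the top answer reaches the threshold, else 'flagged'."""
    if not votes:
        raise ValueError("no votes")
    answer, count = Counter(votes).most_common(1)[0]
    agreement = Fraction(count, len(votes))  # exact arithmetic, no rounding
    status = "verified" if agreement >= threshold else "flagged"
    return {"status": status, "answer": answer, "agreement": float(agreement)}


# With 110 voters, 74 agreeing clears two-thirds; 73 does not.
passed = check_consensus(["A"] * 74 + ["B"] * 36)
flagged = check_consensus(["A"] * 73 + ["B"] * 37)
print(passed["status"], flagged["status"])
```

Using `Fraction` avoids floating-point surprises right at the threshold, where a rounding error would flip the result.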

In most AI pipelines, the hallucination rate is not the only red flag. The bigger one is having no mechanism to catch errors at runtime. Mira addresses that challenge structurally.

How Node Delegators Earn

Not every participant operates a full-fledged validator. Mira built a delegation model around GPU providers like Hyperbolic and IO.Net. Participants supply compute to node operators and earn a share of the validation reward based on their contribution. There is no free pass and no free riding. Active competition among delegators to supply quality compute keeps the network honest.

The prize is a proportional share of the 16% MIRA validator reward pool. Developers learn early that building on verified output costs less than debugging wrong output.
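Proportional sharing is simple arithmetic. The sketch below assumes contribution is measured in some single unit such as GPU-hours, which is not stated here; `split_reward`, the delegator names, and the reward amount are hypothetical.

```python
def split_reward(reward, contributions):
    """Split `reward` among delegators in proportion to their contribution."""
    total = sum(contributions.values())
    if total <= 0:
        raise ValueError("total contribution must be positive")
    return {name: reward * amount / total for name, amount in contributions.items()}


# Hypothetical epoch: 100 MIRA to distribute, contributions in GPU-hours.
shares = split_reward(100.0, {"delegator-a": 3, "delegator-b": 1})
print(shares)
```

A delegator who contributes nothing receives nothing under this rule, which matches the "no free rider" point above.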

What the Output Carries

When a verification run completes, Mira wraps the result in a verification packet. The packet carries consensus metadata and a cryptographic proof. Downstream systems can read the evidence without re-running the verification.
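To make the idea concrete, here is a minimal sketch of a packet that a downstream system can check without repeating the verification. The field names and both functions are hypothetical, and the proof is an HMAC-SHA256 tag from Python's standard library used purely as a stand-in; the actual proof scheme is not described here and would more plausibly rely on public-key signatures.

```python
import hashlib
import hmac
import json


def build_packet(claim, verdict, agree, total, key):
    """Bundle the result with consensus metadata and an integrity tag."""
    body = {"claim": claim, "verdict": verdict, "agree": agree, "total": total}
    # Canonical JSON so producer and consumer hash identical bytes.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    proof = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"body": body, "proof": proof}


def check_packet(packet, key):
    """Recompute the tag over the body and compare in constant time."""
    payload = json.dumps(packet["body"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, packet["proof"])


key = b"demo-shared-secret"  # illustration only, never hard-code real keys
packet = build_packet("The function returns a sorted list", "verified", 80, 110, key)
print(check_packet(packet, key))

# Any change to the body invalidates the proof.
tampered = {"body": {**packet["body"], "verdict": "flagged"}, "proof": packet["proof"]}
print(check_packet(tampered, key))
```

The consumer only recomputes a hash over the packet body, which is far cheaper than asking the models again.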

This removes the black-box problem from AI pipelines. Instead of arriving like a sealed box with no audit trail, every claim carries a traceable status. For trading teams, code-heavy diagnostics, and legal tools, the word "verified" on an AI output means something concrete.

The Competition Is Already Underway

Mira also launched a $10 million Builder Fund in August 2025. The priority verticals are healthcare, law, and fintech. In those sectors, every piece of unverified AI output carries a measurable cost.

Foundational AI verification infrastructure is the real prize, and the competition to own that layer is underway. Every puzzle the network solves at the consensus layer adds to $MIRA's position. @mira_network is building toward this day by day.