On the gap between impressive answers and reliable information

I caught ChatGPT inventing a court case last month.

Not a small error, but a completely fabricated legal precedent, with a made-up judge, fake plaintiffs, and citations that looked real enough to fool me for ten minutes. I was researching tenant rights for a friend. The AI sounded certain. The details were garbage.

This isn't a ChatGPT problem. It's an every-AI problem. And it's holding back everything we want to use these tools for.

The Confidence Trap

Modern AI doesn't know when it's wrong. It generates text based on patterns, not facts. When those patterns produce something plausible-sounding, the model presents it with the same tone it uses for verified truth.

This works fine for brainstorming dinner ideas. It fails catastrophically for:

Doctors checking drug interactions

Lawyers verifying case law

Engineers reviewing safety protocols

Journalists confirming sources

The use cases where accuracy matters most are exactly where current AI is least trustworthy.

Why Verification Is Hard

You can't just "fact-check" AI outputs the way you check a Wikipedia article. AI generates novel combinations of information. Sometimes it's synthesis. Sometimes it's confabulation. Telling the difference requires expertise, time, and access to original sources, which is exactly the bottleneck AI was supposed to remove.

Current approaches fall short:

Single-model improvement (more training data, better alignment) helps but doesn't eliminate errors. Even the best models hallucinate.

Human-in-the-loop review works for low-volume content but doesn't scale to real-time applications processing thousands of queries.

Traditional oracles just move the trust problem to a different centralized party.

A Different Approach: Distributed Verification

@Mira - Trust Layer of AI treats reliability as an infrastructure problem, not a model problem.

Instead of asking "how do we make one AI perfect?" they ask "how do we verify any AI's output without trusting the AI?"

The mechanism is straightforward:

1.

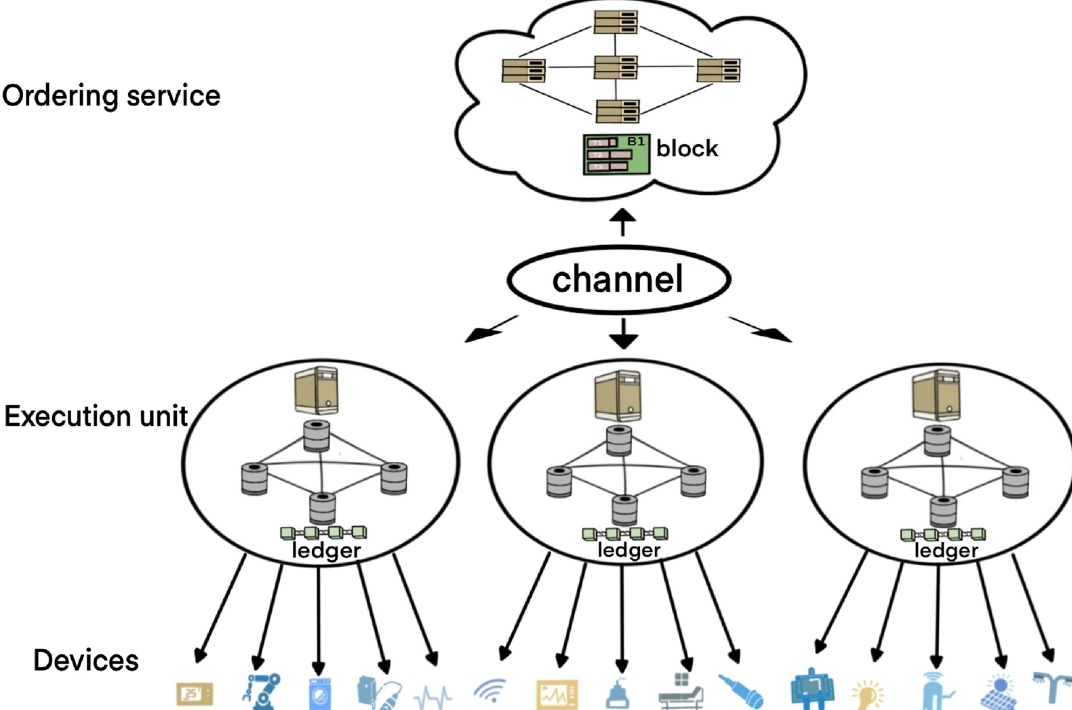

Decomposition — Complex AI outputs get broken into discrete, checkable claims. "The drug combination is safe" becomes separate verifiable statements about dosage, interaction mechanisms, and contraindications.

2.

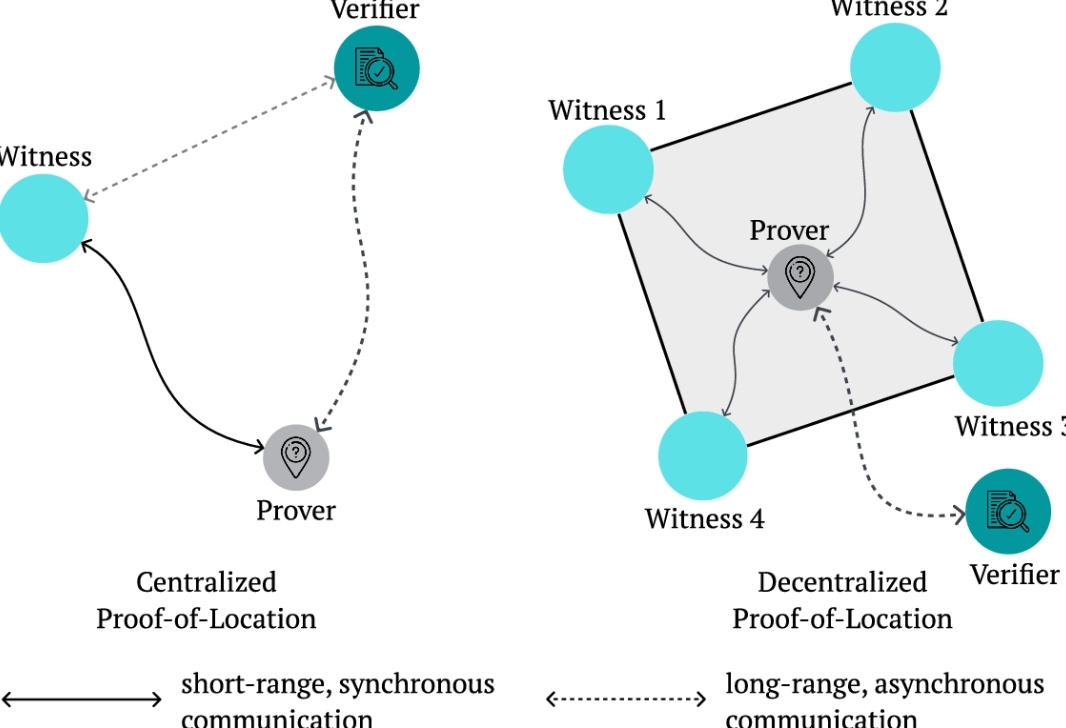

Distribution — These claims route to multiple independent AI models with different architectures, training data, and incentives. They evaluate independently.

3. Consensus: Agreement across diverse models produces high-confidence verification. Disagreement triggers escalation to additional checks or human review.

4. Cryptographic Recording: Results are anchored to a blockchain, creating immutable audit trails. The point is accountability, not speculation. You can prove what was verified, when, by whom, and with what confidence level.
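The four steps above can be sketched in a few dozen lines of Python. This is a toy illustration, not Mira's implementation: the verifiers are stand-in functions rather than real models, the claims are assumed to be already decomposed (that step would itself need a model), the 0.8 agreement threshold is arbitrary, and a local hash chain stands in for a blockchain. Every name here (`verify_claim`, `append_record`, `chain_is_intact`) is hypothetical.

```python
import hashlib
import json
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical verifier interface: takes a claim, returns "true" or "false".
Verifier = Callable[[str], str]
GENESIS = "0" * 64  # placeholder "previous hash" for the first record


@dataclass
class Verdict:
    claim: str
    votes: Dict[str, str]  # verifier name -> label
    label: str             # winning label, or "escalate"
    agreement: float       # share of verifiers behind the most common label


def verify_claim(claim: str, verifiers: Dict[str, Verifier],
                 threshold: float = 0.8) -> Verdict:
    """Collect one vote per verifier; accept the top label only above the threshold."""
    votes = {name: check(claim) for name, check in verifiers.items()}
    top_label, top_count = Counter(votes.values()).most_common(1)[0]
    agreement = top_count / len(votes)
    label = top_label if agreement >= threshold else "escalate"
    return Verdict(claim, votes, label, agreement)


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: List[dict], verdict: Verdict) -> dict:
    """Append a record whose hash covers its contents and the previous record's hash."""
    body = {"claim": verdict.claim, "votes": verdict.votes, "label": verdict.label,
            "agreement": verdict.agreement,
            "prev": log[-1]["hash"] if log else GENESIS}
    record = dict(body, hash=_digest(body))
    log.append(record)
    return record


def chain_is_intact(log: List[dict]) -> bool:
    """Recompute every hash; any edit to an earlier record breaks the chain."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


# Made-up claims, as if decomposed from "the drug combination is safe".
claims = [
    "Drug A at 10 mg is within its labelled dose range",
    "Drug A and drug B have no known interaction",
]
# Stand-in verifiers: two of the five dissent on the interaction claim.
verifiers: Dict[str, Verifier] = {
    "model_a": lambda c: "true",
    "model_b": lambda c: "true",
    "model_c": lambda c: "true",
    "model_d": lambda c: "false" if "interaction" in c else "true",
    "model_e": lambda c: "false" if "interaction" in c else "true",
}

log: List[dict] = []
verdicts = [verify_claim(c, verifiers) for c in claims]
for v in verdicts:
    append_record(log, v)
```

The dosage claim gets five matching votes and passes. The interaction claim gets only three of five, falls below the threshold, and comes back as "escalate" rather than as a confident answer. Editing any stored record afterwards makes `chain_is_intact(log)` return False, which is the property an audit trail needs.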

Why This Works

The key insight: model diversity matters more than model size.

Five different AI systems, each with different blind spots, are harder to fool collectively than any single system, however capable. If four independent models agree and one dissents, you know exactly where to look. If they all agree, and their errors really are independent, you have a level of statistical confidence no single model could provide.
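To put rough numbers on that, here is a minimal sketch under two strong assumptions: each verifier is wrong on a given yes/no claim with the same probability `p`, and the verifiers err independently of one another. The 10% error rate is an illustrative figure, not a measured one, and the function names are mine.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that more than half of n independent verifiers are wrong,
    when each is wrong with probability p (binomial tail)."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def unanimous_error(n: int, p: float) -> float:
    """Probability that all n independent verifiers are wrong at once."""
    return p**n


single = majority_error(1, 0.1)    # one model on its own: 0.1
majority = majority_error(5, 0.1)  # a wrong majority of five: about 0.0086
all_wrong = unanimous_error(5, 0.1)  # five wrong together: about 0.00001
```

With these numbers, a wrong majority is roughly twelve times less likely than a single model's error, and a unanimous wrong answer is about ten thousand times less likely. The whole gain depends on the independence assumption.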

Economic incentives align participants. Nodes stake collateral to participate in verification. Accurate consensus earns rewards. Consistent errors get slashed. The system doesn't rely on anyone's good intentions—it relies on structured self-interest producing reliable outcomes.
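As a rough sketch of how such an incentive rule might look: the reward size, the 10% slash, and the three-strikes rule below are all numbers I made up for illustration, and `Node` and `settle_round` are hypothetical names, not Mira's actual parameters or API.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Node:
    stake: float
    strikes: int = 0  # consecutive rounds spent on the losing side


def settle_round(nodes: Dict[str, Node], votes: Dict[str, str], consensus: str,
                 reward: float = 1.0, slash_fraction: float = 0.1,
                 max_strikes: int = 3) -> None:
    """Reward nodes that matched consensus; slash only after repeated misses."""
    for name, vote in votes.items():
        node = nodes[name]
        if vote == consensus:
            node.stake += reward
            node.strikes = 0
        else:
            node.strikes += 1
            if node.strikes >= max_strikes:
                node.stake -= node.stake * slash_fraction
                node.strikes = 0


nodes = {"a": Node(100.0), "b": Node(100.0), "c": Node(100.0)}
# Three rounds in which node "c" disagrees with the other two every time.
for _ in range(3):
    settle_round(nodes, {"a": "true", "b": "true", "c": "false"}, consensus="true")
```

After three rounds, "a" and "b" hold 103 each, while "c" has been slashed once and holds 90. Note the weak spot in a rule like this: it measures disagreement with consensus, not error, so a lone node that is right when the majority is wrong still gets penalized. That is another reason the diversity of the majority matters.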

What Changes

For developers: Build AI applications without explaining to users why the chatbot sometimes invents product features or pricing tiers.

For enterprises: Deploy AI in regulated industries with audit trails that satisfy compliance requirements.

For researchers: Verify literature reviews across thousands of papers without missing the one contradictory study that changes everything.

For everyday users: Get the convenience of AI assistance with guardrails that catch the dangerous mistakes.

The Hard Parts

This isn't magic. Mira adds latency—verification takes time. It adds cost—multiple model inferences cost more than one. It adds complexity—developers must structure queries for verifiable decomposition.
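A back-of-the-envelope estimate makes the trade-off concrete. The latencies and per-call price below are invented, the function name is hypothetical, and the estimate ignores the decomposition step, consensus, and recording, so treat it as a lower bound rather than a benchmark.

```python
from typing import Dict, List


def verification_overhead(num_claims: int, verifier_latencies_s: List[float],
                          cost_per_call: float, parallel: bool = True) -> Dict[str, float]:
    """Estimate model calls, cost, and added latency for one verified answer."""
    calls = num_claims * len(verifier_latencies_s)
    cost = calls * cost_per_call
    if parallel:
        # Every claim goes to every verifier at once: wait for the slowest one.
        latency = max(verifier_latencies_s)
    else:
        # One call after another: every call's latency adds up.
        latency = num_claims * sum(verifier_latencies_s)
    return {"calls": calls, "cost": cost, "latency_s": latency}


# Four claims, three verifiers taking 0.8 s, 1.2 s and 2.0 s, at 0.002 per call.
fast = verification_overhead(4, [0.8, 1.2, 2.0], cost_per_call=0.002)
slow = verification_overhead(4, [0.8, 1.2, 2.0], cost_per_call=0.002, parallel=False)
```

Either way the answer costs twelve model calls instead of one. Running the calls in parallel keeps the added wait near the slowest verifier's 2 seconds; running them one after another stretches it to about 16 seconds. Parallelism helps latency, but nothing reduces the call count except sending fewer claims to fewer verifiers.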

Some questions resist easy breakdown. "Is this poem good?" doesn't yield to claim verification the way "Does this drug cause liver damage?" does.

And the system is only as strong as its model diversity. If every verification node runs variants of the same base model, you haven't gained independence—you've just created the illusion of it.
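A simple toy model shows how quickly shared lineage erodes the benefit. Assume each node is wrong on a claim with probability `p`. With probability `shared`, the answer comes from a base model common to all nodes, so they all give the same answer together; otherwise they err independently. Both the model and the numbers are my own illustration.

```python
from math import comb


def majority_error_independent(n: int, p: float) -> float:
    """Probability that more than half of n independent verifiers are wrong."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def majority_error_shared_base(n: int, p: float, shared: float) -> float:
    """Mixture model: with probability `shared` all nodes copy one common answer
    (wrong with probability p); otherwise they behave independently."""
    return shared * p + (1 - shared) * majority_error_independent(n, p)


diverse = majority_error_shared_base(5, 0.1, shared=0.0)  # fully independent nodes
half = majority_error_shared_base(5, 0.1, shared=0.5)     # half the answers are copies
clones = majority_error_shared_base(5, 0.1, shared=1.0)   # five copies of one model
```

Under this model, five fully independent nodes get a wrong majority less than 1% of the time. If half the answers trace back to a shared base, that rises above 5%. Five clones are wrong 10% of the time, exactly as often as one model alone, while looking like a five-way consensus.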

Why It Matters Anyway

We're at a weird moment with AI. The technology is impressive enough to use daily, unreliable enough to require constant vigilance, and improving fast enough that we keep forgiving its failures.

But "improving" isn't "solved." The gap between impressive and trustworthy persists. Applications that need guaranteed accuracy stay off-limits, regardless of how slick the interface becomes.

Mira's approach accepts this reality. It doesn't wait for perfect AI. It builds infrastructure for imperfect AI used responsibly.

The court case my chatbot invented? Under Mira's system, that claim would have been routed to multiple legal analysis models. Unless they all happened to invent the same case, the fabrication would have surfaced as disagreement. The user would have seen uncertainty flags instead of confident nonsense.

Not as satisfying as perfect AI. But perfect AI isn't coming soon. Reliable verification might be.