AI systems are impressive. They can write articles, explain complex topics, generate code, and summarize large documents in seconds. But there’s a problem most people don’t fully understand.

AI can make things up.

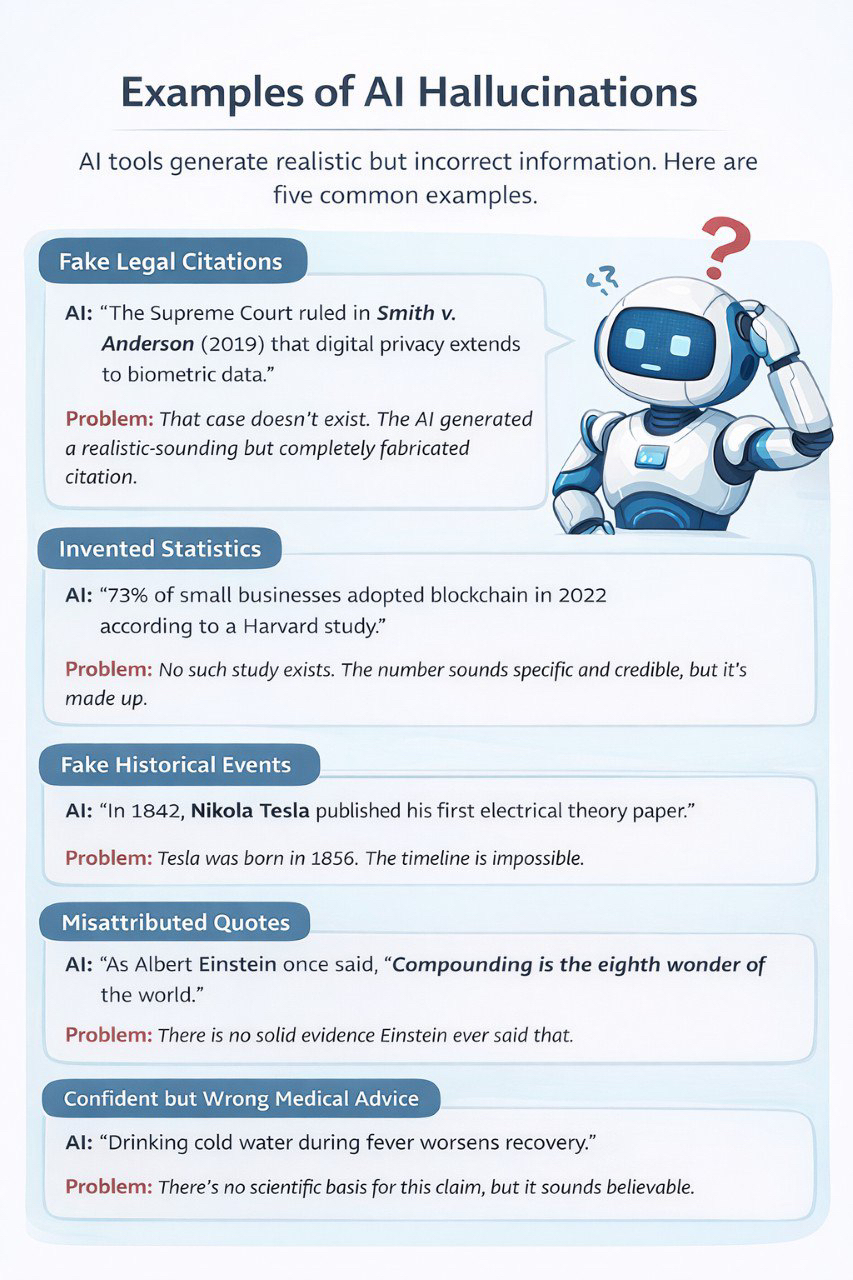

This issue is commonly called “hallucination.” It happens when an AI generates information that sounds correct but is actually wrong, misleading, or unsupported by facts. The system doesn’t know it’s wrong. It simply predicts the most likely sequence of words based on patterns it has learned.

The dangerous part is confidence. AI responses often sound clear and certain, even when they contain mistakes. For casual use, that might not cause serious harm. But when AI is used in research, finance, healthcare, or technical decision-making, incorrect information can create real consequences.

That’s the hidden risk.

As AI adoption grows, more people are relying on these systems without fully checking the outputs. Over time, small inaccuracies can turn into bigger problems, especially if the information is used to make decisions.

This is the problem Mira Network (@Mira - Trust Layer of AI) is trying to address.

Mira Network is building a system designed to reduce the risk of AI hallucinations. Instead of accepting an AI response at face value, Mira introduces a verification step.

Here’s how it works.

When an AI produces an answer, Mira breaks that answer into smaller statements. These statements are then sent to a group of independent validators within the network. Each validator reviews the claims separately. The system then measures the level of agreement between them.

If most validators confirm the information appears accurate, the output is considered verified. If there is disagreement, the response can be flagged for further review or rejected.
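The flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira's actual implementation: the sentence-based claim splitter, the `verify_answer` function, the validator callables, and the two thresholds (`verify_at`, `reject_below`) are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumption: a validator is any callable that judges one claim as True/False.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    agreement: float  # fraction of validators that approved the claim
    status: str       # "verified", "flagged", or "rejected"


def split_into_claims(answer: str) -> List[str]:
    # Naive placeholder: treat each sentence as one claim.
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_answer(
    answer: str,
    validators: List[Validator],
    verify_at: float = 0.67,     # hypothetical threshold for "verified"
    reject_below: float = 0.34,  # hypothetical threshold for "rejected"
) -> List[ClaimResult]:
    if not validators:
        raise ValueError("at least one validator is required")

    results = []
    for claim in split_into_claims(answer):
        # Each validator reviews the claim separately.
        votes = [validator(claim) for validator in validators]
        agreement = sum(votes) / len(votes)

        if agreement >= verify_at:
            status = "verified"
        elif agreement < reject_below:
            status = "rejected"
        else:
            status = "flagged"  # disagreement: send for further review
        results.append(ClaimResult(claim, agreement, status))
    return results
```

With three toy validators, two of which only approve claims mentioning "Paris" and one that approves everything, the answer "Paris is the capital of France. The moon is made of cheese." yields one verified claim and one rejected claim. In a real network the validators would be independent models, and the thresholds would be set by the protocol rather than hard-coded.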

The idea is simple: don’t rely on a single model. Instead, rely on agreement across multiple independent checks.

This approach reduces the chance that one model’s mistake becomes an accepted fact. While it doesn’t guarantee perfection, it lowers the risk of unchecked errors.

Mira also uses economic incentives to encourage honest participation. Validators must stake $MIRA tokens to take part in the network. If they provide accurate validations, they earn rewards. If they act dishonestly or approve inaccurate claims, they risk losing part of their stake.

This creates accountability. Participants have something at risk, which encourages careful verification rather than careless approval.
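The incentive logic can be sketched the same way. The example below is a simplified illustration, not Mira's actual reward or slashing rules: the `ValidatorAccount` type, the `settle` function, the fixed reward, and the 10% slash fraction are all hypothetical. It also assumes that a vote counts as "accurate" when it matches the network's final outcome for that claim.

```python
from dataclasses import dataclass


@dataclass
class ValidatorAccount:
    name: str
    stake: float  # staked tokens; the amount is illustrative


def settle(
    account: ValidatorAccount,
    vote: bool,
    final_outcome: bool,
    reward: float = 1.0,          # hypothetical reward per matching vote
    slash_fraction: float = 0.1,  # hypothetical share of stake lost per mismatch
) -> float:
    """Update a validator's stake after one claim and return the new stake."""
    if account.stake <= 0:
        raise ValueError("validator must have a positive stake to participate")

    if vote == final_outcome:
        # Vote matched the accepted outcome: pay the reward.
        account.stake += reward
    else:
        # Vote went against the accepted outcome: lose part of the stake.
        account.stake -= account.stake * slash_fraction
    return account.stake
```

Under these made-up numbers, a validator with 100 staked tokens ends up with 101 after a matching vote and 90 after a mismatched one, so repeated careless approvals cost more than honest review earns.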

It’s important to note that Mira doesn’t eliminate hallucinations at the source. It doesn’t change how AI models generate responses. Instead, it adds a layer that checks those responses before they are relied upon.

As AI becomes more integrated into daily workflows and professional environments, reliability becomes more important. Hallucinations may seem like a small flaw, but in the wrong context, they can cause serious issues.

Mira Network’s approach focuses on reducing that risk by adding structured verification and shared accountability.

It’s about making AI outputs more trustworthy before people act on them.