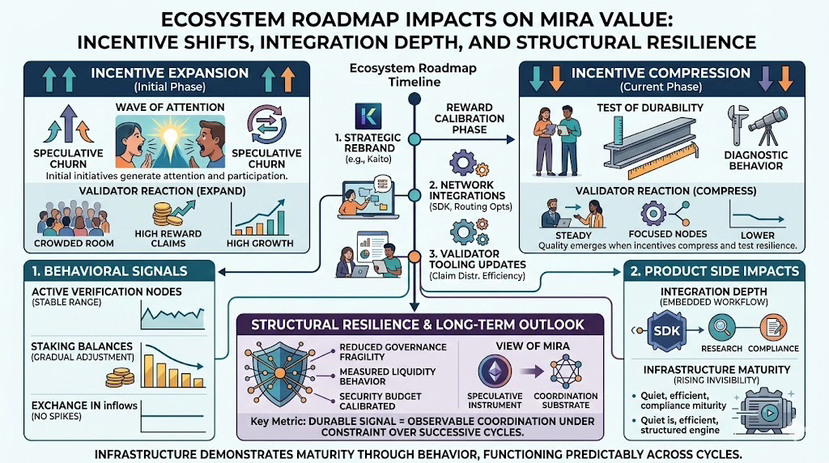

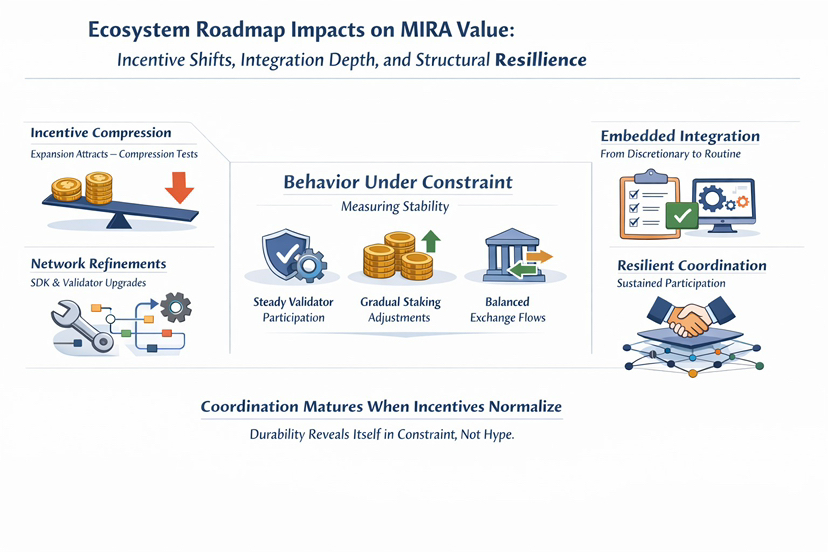

I have found that the clearest measure of network quality emerges when incentives compress, not when they expand. Expansion attracts participation. Compression tests it. When rewards normalize and narrative velocity slows, behavior becomes diagnostic.

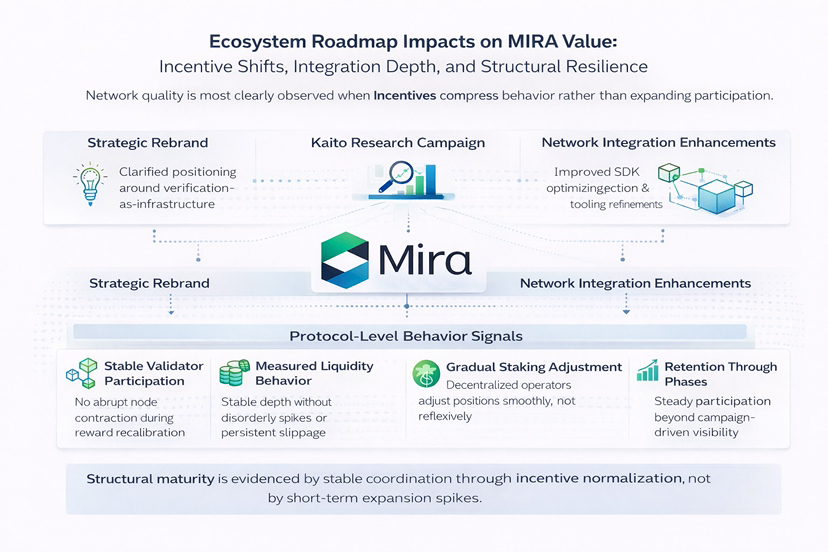

The recent ecosystem developments around MIRA, particularly the Kaito campaign, the strategic rebrand, and incremental network integrations, appear modest in isolation. Structurally, however, they adjust the network’s incentive topology. SDK refinements and routing optimizations lowered integration friction at the developer layer, and validator tooling updates improved claim distribution efficiency. Together, these changes refine how verification demand flows through the network.

The relevant question is not whether these initiatives generate attention. It is whether they alter behavior.

Since the reward recalibration phases, validator participation has not contracted abruptly. Active verification nodes have remained within a stable range rather than collapsing in response to emissions tapering. Staking balances have adjusted gradually, suggesting heterogeneous operator cost bases rather than synchronized withdrawal.
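One way to make "stable range" and "no abrupt contraction" concrete is to track two simple numbers: the share of validators that persist across a recalibration, and how widely the active node count swings relative to its average. The sketch below is illustrative only. The validator IDs, the weekly counts, and the function names are all hypothetical, and nothing here reflects actual MIRA data or tooling.

```python
from statistics import mean, pstdev


def retention_rate(before: set[str], after: set[str]) -> float:
    """Share of validators in `before` that are still present in `after`."""
    if not before:
        return 0.0
    return len(before & after) / len(before)


def relative_spread(counts: list[int]) -> float:
    """Population standard deviation of the counts divided by their mean."""
    avg = mean(counts)
    return pstdev(counts) / avg if avg else 0.0


# Hypothetical validator IDs observed before and after a reward recalibration.
pre = {"v1", "v2", "v3", "v4", "v5"}
post = {"v1", "v2", "v3", "v5", "v6"}

# Hypothetical weekly counts of active verification nodes.
weekly_active = [100, 98, 101, 99, 102]

print(f"retention: {retention_rate(pre, post):.2f}")
print(f"relative spread: {relative_spread(weekly_active):.4f}")
```

High retention combined with a small relative spread would be consistent with the gradual adjustment described above; a sharp drop in either would point toward synchronized withdrawal.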

Exchange inflows have not spiked disproportionately following campaign-driven visibility, which reduces the probability that short-term speculative churn dominates structural participation. Retention through lower-attention periods is a stronger signal than expansion during high-visibility windows.

On the product side, integrations matter because they convert discretionary usage into embedded workflow logic. When verification calls are integrated into research pipelines, compliance reviews, or developer environments, participation shifts from reactive to routine. Infrastructure matures when it becomes invisible. The strongest systems often generate less noise over time because they are functioning predictably.

Incentives reveal network quality because they impose economic consequence. Validators stake capital against correctness. If dispute frequency remains bounded during volatility, incentive calibration may be functioning within expected parameters. Compression tests equilibrium. So far, the response appears measured rather than disorderly.

From a long-term capital lens, several implications emerge.

Low validator churn reduces governance fragility. Measured liquidity behavior supports execution reliability for integrators. The security budget appears calibrated to sustain participation without excessive dilution. That does not eliminate risk: mispricing at the task validation layer, throughput expansion stress, and integration lag all remain plausible challenges. But current behavioral data reflects stability rather than strain.

I increasingly view MIRA less as a speculative instrument and more as a coordination substrate. As infrastructure strengthens, it tends to become quieter. Campaigns may accelerate visibility. Rebrands may refine narrative clarity. But durability is revealed when participation persists independent of attention cycles.

The open question is not whether ecosystem initiatives can attract activity in expansionary phases. It is whether coordination remains intact across multiple compression cycles. If validator persistence, liquidity continuity, and disciplined reward response continue under normalized incentives, the network’s structural alignment may prove durable.

Infrastructure does not announce its maturity. It demonstrates it through behavior. The only durable signal is observable coordination under constraint. Whether that coordination compounds over successive cycles will determine the long-term character of the system.