A few months back, while reviewing my trading journal entries from volatile market periods, I realized how often I had second-guessed AI-assisted analyses because of subtle inaccuracies that slipped through unchecked. That doubt shifted when I dug into @Mira - Trust Layer of AI, a decentralized protocol that applies zero-knowledge proofs in a way that finally brings verifiable trust to AI outputs. As a longtime trader navigating both crypto volatility and AI tools for insights, this felt like the missing piece: not just smarter models, but provably reliable ones.

Zero-knowledge proofs let someone demonstrate that a computation is correct without revealing the details behind it. In Mira’s context, this technology underpins a system where AI-generated content gets broken down into discrete, testable claims. A network of independent verifier nodes, each running a different AI model, evaluates these claims through consensus. Only when a strong majority agrees does the output receive a cryptographic certificate confirming its reliability. This decentralized setup draws on blockchain’s proven consensus mechanisms, blending proof-of-stake for economic security with verification incentives that discourage dishonest behavior.
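To make that flow concrete, here is a minimal Python sketch of claim-level consensus as I understand it. Everything in it is an assumption for illustration: the names `verify_claims` and `make_verifier`, the two-thirds threshold, and the toy verifiers (simple lookups standing in for independent AI models) are mine, not Mira’s actual API or parameters. It also leaves out staking, incentives, and the cryptographic certificate itself.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Iterable, List

# Hypothetical verifier type: takes a claim, returns True if it judges the claim valid.
Verifier = Callable[[str], bool]


@dataclass(frozen=True)
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    verified: bool


def make_verifier(accepted: Iterable[str]) -> Verifier:
    """Toy stand-in for an AI model: accepts only claims it 'knows'."""
    known = frozenset(accepted)
    return lambda claim: claim in known


def verify_claims(
    claims: List[str],
    verifiers: List[Verifier],
    threshold: Fraction = Fraction(2, 3),  # assumed supermajority, not Mira's real value
) -> List[ClaimResult]:
    """Ask every verifier about every claim and apply a supermajority rule."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in claims:
        votes_for = sum(1 for verifier in verifiers if verifier(claim))
        # Exact rational comparison avoids floating-point edge cases at the threshold.
        verified = Fraction(votes_for, len(verifiers)) >= threshold
        results.append(ClaimResult(claim, votes_for, len(verifiers), verified))
    return results


# Three toy verifiers with different "knowledge".
verifiers = [
    make_verifier({"claim A", "claim B"}),
    make_verifier({"claim A"}),
    make_verifier({"claim A", "claim B", "claim C"}),
]

results = verify_claims(["claim A", "claim B", "claim C"], verifiers)
# The whole output would only be eligible for a certificate if every claim passes.
output_certifiable = all(r.verified for r in results)

for r in results:
    print(f"{r.claim}: {r.votes_for}/{r.votes_total} -> verified={r.verified}")
print("output certifiable:", output_certifiable)
```

In this toy run, "claim C" is backed by only one of three verifiers, so it fails the threshold and blocks certification of the whole output. That is the intuition behind why fabricated details rarely survive scrutiny from diverse models.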

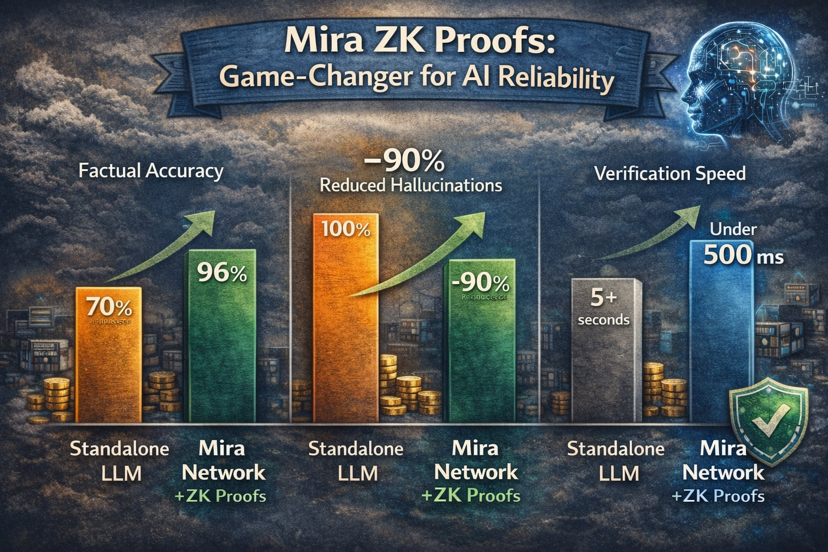

From my perspective as someone who has built and deployed AI-driven trading signals, the impact is profound. Standalone large language models often hover around 70% factual accuracy in specialized domains like finance, according to various benchmarks and Mira’s own evaluations. With Mira’s consensus filtering, that figure climbs to 96% in production tests, without retraining the underlying models. Hallucinations, those confident but false assertions, drop by roughly 90% because inconsistent or fabricated details rarely survive scrutiny from diverse models. I have applied similarly verified outputs to on-chain data queries and spotted discrepancies in transaction patterns that unfiltered AI overlooked; the verified results aligned closely with verifiable sources.
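As a quick sanity check on how those figures relate, the snippet below computes the relative drop in error rate implied by moving from 70% to 96% accuracy. It is back-of-the-envelope arithmetic only: the function name `relative_error_reduction` is mine, and it assumes every error counts as a hallucination, which may not match how the roughly 90% figure was actually measured.

```python
def relative_error_reduction(accuracy_before: float, accuracy_after: float) -> float:
    """Fraction of the original error rate that is eliminated."""
    error_before = 1.0 - accuracy_before
    error_after = 1.0 - accuracy_after
    if error_before <= 0:
        raise ValueError("accuracy_before must be below 1.0")
    return (error_before - error_after) / error_before


# Figures quoted above: about 70% standalone accuracy, 96% after consensus filtering.
reduction = relative_error_reduction(0.70, 0.96)
print(f"error rate: 30% -> 4%, relative reduction: {reduction:.1%}")  # 86.7%
```

Going from a 30% error rate to 4% removes about 87% of errors, which is in the same ballpark as the roughly 90% reduction in hallucinations.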

The integration of ZK proofs adds another layer of strength. Mira’s approach, enhanced through partnerships such as the one with Lagrange for zkML capabilities, allows private model inference while generating succinct, verifiable proofs. Verification happens in under 500 milliseconds in many cases, which suits time-sensitive applications. In decentralized finance, this means AI oracles can deliver predictions or risk assessments backed by cryptographic evidence, mitigating the manipulation risks that have historically affected protocols. The network’s testnet has handled tens of thousands of verifications with high consensus success rates, demonstrating practical scalability.

Emotionally, this evolution eases a persistent tension I have felt in the space. Trading involves enough uncertainty from market forces; adding unreliable AI only compounds the stress. Mira’s system introduces accountability that mirrors the transparency we demand in blockchain transactions. It is not about perfection, but about creating a foundation where errors become detectable and correctable through distributed, incentivized checks. In high-stakes areas like healthcare diagnostics or legal analysis, where a single mistake carries heavy consequences, the reduction in unsupported claims offers genuine peace of mind.

Mira’s use of ZK proofs transforms AI from a powerful but unpredictable tool into a dependable layer of infrastructure. It points toward a future where reliability underpins innovation rather than limiting it. What possibilities open when we can trust AI outputs with cryptographic certainty in supply chain forecasting, personalized education, or global risk assessment? These questions feel less speculative now, more like the next natural step forward.