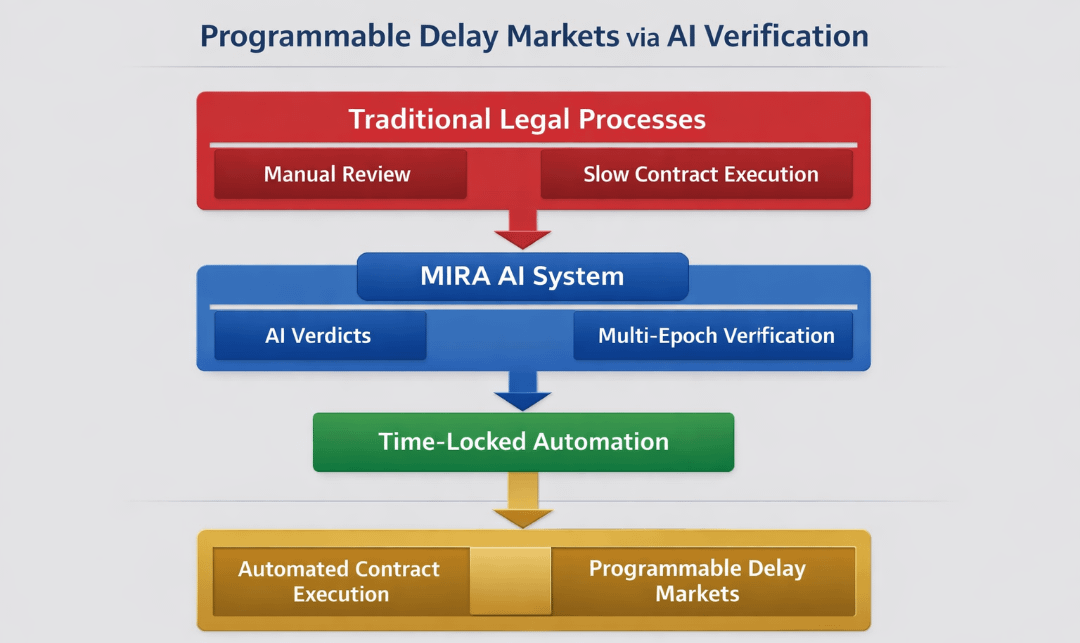

If $MIRA enabled time-locked AI verdicts that auto-execute contracts after multi-epoch verification, would legal systems become programmable delay markets?

Last week I tried canceling a subscription I barely used. The button said “Cancel anytime.” I clicked it. A loading spinner blinked for three seconds, then the page refreshed and showed a smaller line: “Cancellation effective next billing cycle.” No alert. No explicit consent. Just a backend rule I hadn’t negotiated. Somewhere between my click and the server response, a contract executed on terms I didn’t see.

It wasn’t a dramatic failure. The service didn’t crash. My card wasn’t hacked. But the experience felt quietly broken. I acted in real time; the system responded on deferred logic. The agreement wasn’t dynamic — it was static code wrapped in friendly UI. The platform held timing power. I held a button.

Modern digital systems are built on invisible latency advantages. Algorithms can update prices mid-checkout. Policies can auto-apply after fine-print triggers. Decisions are often made in background epochs users don’t perceive. We operate in present tense; systems operate in scheduled enforcement windows. That asymmetry is subtle but structural.

The deeper misalignment isn’t about decentralization versus centralization. It’s about who controls delay.

I’ve started thinking of digital contracts as “frozen clocks.” When you sign up, the clock is set. Terms are embedded. If circumstances change — new data, new behavior, new context — the clock doesn’t adapt. Enforcement triggers when it was pre-coded to trigger, not when evidence matures. Legal systems mirror this: filings, review periods, appeals. Everything runs on institutional time, not informational time.

Now imagine contracts not as frozen clocks, but as programmable hourglasses.

An hourglass doesn’t just measure time; it visualizes flow. Sand moves, but you can flip it. You can narrow the neck to slow the flow or widen it to speed the flow up. More importantly, you can inspect it mid-process. The idea isn’t instant execution. It’s observable delay with conditional release.

Blockchains like Ethereum introduced programmable contracts, but execution is still mostly event-triggered and immediate once conditions are met. Solana optimized throughput and low-latency finality — great for speed, less oriented toward staged verification. Avalanche experimented with subnet architectures, letting application-specific chains define custom rulesets. Each ecosystem improved performance or modularity, but the core assumption remained: once a condition is satisfied on-chain, execution should follow quickly.

Speed has been treated as virtue.

But what if delay — structured, programmable, multi-epoch delay — becomes the feature?

This is where $MIRA enters the frame. Not as a faster chain. Not as a governance token chasing votes. But as a verification layer that treats time itself as an economic primitive.

If MIRA enabled time-locked AI verdicts that only auto-execute after multi-epoch verification, then contracts would not trigger on single-pass computation. They would require layered consensus across temporal checkpoints. An AI system issues a verdict — for example, whether a service breach occurred or whether a dataset meets compliance thresholds. That verdict is not final. It enters an epoch window.

During that window, multiple validators, human or machine, re-evaluate the output across separate data states. Each epoch is cryptographically recorded. Only after threshold agreement across epochs does the contract execute. If disagreement surfaces, the neck of the hourglass narrows: delay extends, and additional evidence is incorporated.
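The epoch window can be sketched in a few lines of Python. This is a minimal illustration, not a description of how MIRA actually works: the names `EpochResult` and `run_epoch_window`, the two-thirds threshold, and the rule that a contested epoch simply does not count toward release are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EpochResult:
    epoch: int
    agree: int  # validators that confirmed the verdict in this epoch
    total: int  # validators that voted in this epoch

    @property
    def agreement(self) -> float:
        return self.agree / self.total if self.total else 0.0


def run_epoch_window(votes_per_epoch: List[List[bool]],
                     required_epochs: int = 3,
                     threshold: float = 2 / 3,
                     max_epochs: int = 10) -> dict:
    """Release the verdict once `required_epochs` epochs meet the threshold.

    A contested epoch does not count toward release, so disagreement
    extends the delay (assumed rule for this sketch).
    """
    history: List[EpochResult] = []
    passed = 0
    for epoch, votes in enumerate(votes_per_epoch[:max_epochs], start=1):
        result = EpochResult(epoch, sum(votes), len(votes))
        history.append(result)
        if result.agreement >= threshold:
            passed += 1
        if passed >= required_epochs:
            return {"status": "execute", "epochs_used": epoch, "history": history}
    # Not enough agreeing epochs yet: the contract stays locked.
    return {"status": "pending", "epochs_used": len(history), "history": history}
```

With three agreeing epochs required, one contested epoch in the middle of the window pushes execution back by exactly one epoch; a window that never reaches agreement stays `pending` rather than failing outright.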

Legally, this begins to resemble a programmable delay market.

Instead of courts imposing fixed appeal windows, delay becomes tokenized and adjustable. Parties could stake MIRA to accelerate review (by subsidizing validator attention) or to extend scrutiny (by funding additional epochs). Time is no longer passive. It is budgeted, priced, and verified.

Mechanistically, this requires three architectural principles:

1. Verdict Abstraction Layer – AI outputs are wrapped as verifiable objects with metadata: model version, dataset hash, inference timestamp.

2. Multi-Epoch Consensus Engine – Rather than single-block finality, verdicts pass through scheduled checkpoints. Validators re-run or challenge outputs, backed by stake that can be slashed.

3. Time-Locked Execution Module – Smart contracts subscribe to verified verdict objects, auto-executing only after epoch consensus reaches a predefined confidence threshold.
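To make the first and third principles concrete, here is a hedged Python sketch of a verdict object and a contract that subscribes to it. The metadata fields come from the list above; everything else (the `Verdict` and `TimeLockedContract` names, the SHA-256 digest over a JSON encoding, the `unlock_epoch` parameter) is a hypothetical design chosen for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass(frozen=True)
class Verdict:
    claim: str
    outcome: bool
    model_version: str
    dataset_hash: str
    inference_timestamp: int  # Unix seconds

    def digest(self) -> str:
        """Deterministic SHA-256 over all fields (assumed encoding: sorted JSON)."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class TimeLockedContract:
    """Runs `action` once, and only for the verdict it subscribed to."""

    def __init__(self, verdict_digest: str, min_confidence: float,
                 unlock_epoch: int, action: Callable[[Verdict], None]):
        self.verdict_digest = verdict_digest
        self.min_confidence = min_confidence
        self.unlock_epoch = unlock_epoch
        self.action = action
        self.executed = False

    def try_execute(self, verdict: Verdict, confidence: float,
                    current_epoch: int) -> bool:
        if self.executed:
            return False  # already released
        if verdict.digest() != self.verdict_digest:
            return False  # not the verdict this contract subscribed to
        if current_epoch < self.unlock_epoch:
            return False  # time lock still active
        if confidence < self.min_confidence:
            return False  # epoch consensus not strong enough
        self.action(verdict)
        self.executed = True
        return True
```

The point of binding the contract to a digest rather than to the verdict's outcome alone is that any change to the model version, dataset hash, or timestamp produces a different object, which the contract will refuse to act on.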

The MIRA token anchors incentives. Validators stake to participate in epoch review. If they rubber-stamp incorrect AI verdicts, they lose stake. If they surface valid discrepancies, they earn rewards. Users who request additional scrutiny fund expanded epochs. Developers integrating the system pay for verification depth based on risk tolerance.
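A rough sketch of that incentive rule, in Python. The function name `settle_epoch`, the 10% slash rate, and the pro-rata split of slashed stake among challengers are assumptions for illustration; the sketch also assumes the verdict's correctness is resolved somewhere outside this function, and it leaves unsuccessful challenges unpenalized because the text above does not say how they would be handled.

```python
from typing import Dict


def settle_epoch(stakes: Dict[str, float],
                 approvals: Dict[str, bool],
                 verdict_was_correct: bool,
                 slash_rate: float = 0.10) -> Dict[str, float]:
    """Return new stake balances after one epoch review.

    approvals[v] is True if validator v approved the AI verdict and
    False if v challenged it.
    """
    balances = dict(stakes)
    if verdict_was_correct:
        return balances  # nothing to slash in this sketch

    approvers = [v for v, ok in approvals.items() if ok]
    challengers = [v for v, ok in approvals.items() if not ok]

    # Validators who rubber-stamped an incorrect verdict lose part of their stake.
    pool = 0.0
    for v in approvers:
        penalty = balances[v] * slash_rate
        balances[v] -= penalty
        pool += penalty

    # Validators who surfaced the discrepancy share the slashed pool pro rata.
    challenger_stake = sum(stakes[v] for v in challengers)
    if challenger_stake > 0:
        for v in challengers:
            balances[v] += pool * stakes[v] / challenger_stake
    # If nobody challenged, the pool is not redistributed (treated as burned).
    return balances
```

Under these assumptions, total stake is conserved whenever at least one validator challenged, so the reward for scrutiny is funded entirely by the cost of careless approval.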

Value capture emerges from verification demand, not transaction count. High-stakes contracts — insurance, cross-border trade, automated compliance — require more epochs, thus more validator participation and token utility. Low-stakes actions might clear quickly with minimal review. Time becomes elastic and market-priced.

Governance shifts accordingly. Instead of debating parameters abstractly, stakeholders adjust epoch length, quorum thresholds, and slashing intensity based on empirical dispute rates. The system adapts around error frequency rather than ideology.
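One way to picture dispute-driven governance is a simple feedback rule. The Python sketch below is purely illustrative: `EpochParams`, `adjust_params`, the 5% target dispute rate, the 25%/20% step sizes, and the clamp bounds are invented for the example, and only epoch length is adjusted here even though quorum and slashing could be tuned the same way.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class EpochParams:
    epoch_length_s: int  # duration of one review epoch, in seconds
    quorum: float        # share of validators that must agree
    slash_rate: float    # share of stake lost for a bad approval


def adjust_params(params: EpochParams, disputes: int, verdicts: int,
                  target_rate: float = 0.05,
                  min_length_s: int = 600,
                  max_length_s: int = 86_400) -> EpochParams:
    """Lengthen epochs when disputes are frequent, shorten them when rare."""
    if verdicts == 0:
        return params  # no evidence, no change
    rate = disputes / verdicts
    length = params.epoch_length_s
    if rate > target_rate:
        length = length * 5 // 4   # +25%: give reviewers more time
    elif rate < target_rate / 2:
        length = length * 4 // 5   # -20%: errors are rare, clear faster
    length = max(min_length_s, min(max_length_s, length))
    return replace(params, epoch_length_s=length)
```

The dead band between half the target and the target itself keeps parameters stable when the observed dispute rate is close to what stakeholders consider acceptable.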

Second-order effects are non-trivial.

Developers might design contracts assuming delay buffers exist, shifting from defensive over-collateralization to evidence-backed execution. Users could choose verification depth the way they choose insurance coverage. Enterprises might prefer programmable delay markets over jurisdiction shopping, especially for AI-driven decisions that cross borders.

But risks surface quickly.

Delay markets can be gamed. Wealthy actors might perpetually extend epochs to stall enforcement. Validator cartels could coordinate to fast-track verdicts for favored clients. Excessive delay could undermine user trust, especially in consumer-facing apps where immediacy is expected. There’s also epistemic risk: if underlying AI models are systematically biased, multi-epoch verification might simply amplify shared blind spots.

The design only works if validators have heterogeneous data access and meaningful economic exposure. Otherwise, the hourglass becomes decorative — sand moving, but no real scrutiny.

Still, the structural shift is hard to ignore. If legal systems become programmable delay markets, enforcement moves from institutional scheduling to cryptoeconomic timing. Contracts would not just ask, “Is this condition true?” They would ask, “Has this condition remained true across verified time?”

That distinction changes power.

In today’s systems, whoever controls the clock controls the outcome. In a multi-epoch verification architecture, the clock becomes shared infrastructure. Delay is no longer friction. It is negotiated evidence.

And when time itself becomes programmable capital, law stops being a static document and starts behaving like an adjustable protocol.